April 28th, 2026

How to Optimize Content for SEO Using AI Tools

Warren Day

You've tried AI writers. The content still isn't ranking.

The problem isn't the AI itself. It's the workflow around it.

In 2026, figuring out how to optimize content for SEO isn't about finding the right tool. It's about building a process that doesn't fall apart when you scale it. A semi-automated pipeline that uses AI for volume and human judgment for the stuff AI keeps getting wrong.

Most technical marketers I work with are stuck in the same gap. They've tried ChatGPT or Jasper, got decent drafts... and then watched the content sit there. Either it feels generic, or worse, it has subtle inaccuracies that undermine the E-E-A-T (experience, expertise, authoritativeness, trustworthiness) signals Google now weighs heavily.

Meanwhile, over 58.5% of Google searches end without a click. AI-sourced sessions are up 527% year-over-year. There's a whole visibility layer most teams aren't even measuring yet.

This is the blueprint. A six-step hybrid workflow built specifically for B2B SaaS and tech companies sitting around DR 30-50.

We'll cover how to connect tools like Ahrefs and specialized AI platforms into something deterministic, with actual quality gates. You'll see the validation layer that catches hallucinations before they publish (reported hallucination rates still exceed 15% on some benchmark tasks). And we'll get into which KPIs actually matter now, like AI citation rate, not just the traditional stuff.

One more thing: Google SEO cost comes up a lot when people start building this kind of system. We'll get into that too, because "how much does this actually cost to run" is a real question with a real answer, not an "it depends" non-answer.

This is infrastructure. Not just content.

Audit Your SEO Foundation and Gather Required Tools

Before you automate anything, fix your foundations. I've seen teams spend weeks building an AI pipeline only to fail because their site was technically broken or they were targeting impossible keywords. This step is non-negotiable.

First, get your tool stack sorted. You need tools across three areas:

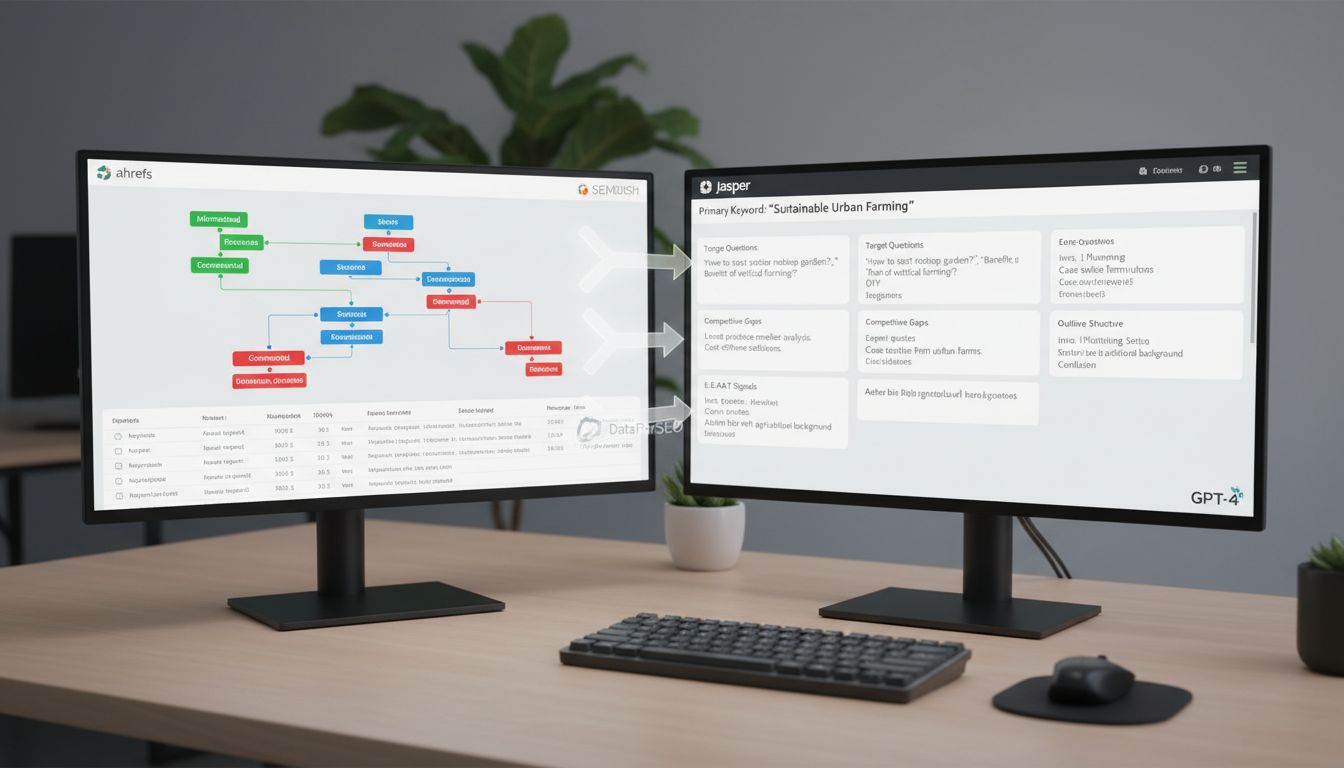

- Keyword & SERP Research: Ahrefs or Semrush. Their APIs are what make automation actually possible.

- AI Writing & Briefing: Jasper or a direct GPT-4 API integration set up for structured outputs.

- Your CMS: Make sure it has an API (WordPress REST API, Contentful, etc.) for programmatic publishing.

The most important component here isn't a tool. It's a person.

Assign a dedicated editor for mandatory review before anything publishes. AI hallucination rates on complex tasks can exceed 15% [Source: AIMultiple]. Skip human QA and factual errors are basically guaranteed.

Next, run a hard domain rating (DR) assessment in Ahrefs. This dictates everything about your keyword strategy. If your site is at DR 32 and the top five results for a keyword are all DR 70+, you're wasting resources. Be realistic about where you can actually compete.
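To make that check repeatable across hundreds of keywords, you can reduce it to a simple rule. Here's a minimal Python sketch. The 20-point gap and the use of the median top-five DR are my own assumptions, not an Ahrefs metric. The DR values would come from whatever SERP export you already have.

```python
from statistics import median

def is_competitive(own_dr: int, top_result_drs: list[int], max_gap: int = 20) -> bool:
    """Rough feasibility check for one keyword.

    Assumption (not an Ahrefs rule): a keyword is worth targeting when the
    median DR of the top five results is at most max_gap points above yours.
    """
    if not top_result_drs:
        return True  # no SERP data to compare against
    top_five = top_result_drs[:5]
    return median(top_five) - own_dr <= max_gap
```

With DR 32 and a top five sitting at DR 71 to 90, this returns False. That's your cue to pick a longer-tail variant.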

Then do a technical audit. Google PageSpeed Insights, run with the mobile strategy, is your starting point (Google retired its standalone Mobile-Friendly Test in December 2023). Fix the critical stuff: render-blocking resources, unplayable content, anything that tanks your load time. Google's crawlers prioritize technically sound sites, and so do the AI systems pulling from that index. If your page takes eight seconds to load, knowing how to optimize content for SEO won't save you.
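If you want this audit inside the pipeline rather than in a browser tab, the PageSpeed Insights v5 API exposes the same Lighthouse run. Below is a minimal sketch. It assumes you fetch the URL yourself, for example with `urllib.request.urlopen`. It also assumes the performance score sits at `lighthouseResult.categories.performance.score` in the JSON response, so check the current API reference before relying on that path. The function names are hypothetical.

```python
from typing import Optional
from urllib.parse import urlencode

# PageSpeed Insights v5 endpoint.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"

def psi_request_url(page_url: str, strategy: str = "mobile", api_key: Optional[str] = None) -> str:
    """Build a GET URL for a Lighthouse performance run ("mobile" or "desktop")."""
    params = {"url": page_url, "strategy": strategy, "category": "performance"}
    if api_key:  # recommended for automated or repeated runs
        params["key"] = api_key
    return f"{PSI_ENDPOINT}?{urlencode(params)}"

def performance_score(response: dict) -> int:
    """Lighthouse reports the category score as 0-1; scale it to the familiar 0-100."""
    score = response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)
```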

Skip this audit and your automated workflow will just produce irrelevant content very efficiently. Get the basics right first.

Build a Cost-Effective AI SEO Tool Stack

Once your foundations are solid, the next question is which tools to actually buy. The goal isn't the most expensive platform. It's a pipeline where each piece passes clean data to the next, so you're not spending half your day on manual exports and reformatting.

For a site around DR 30, you're balancing capability against budget. Map tools directly to your workflow stages:

- Research & Briefing: Use DataForSEO's API for SERP analysis and keyword clustering. It's programmatic and far cheaper than manual dashboard work at any real volume.

- Drafting & Structuring: Template-based AI writers like Jasper or Surfer SEO work well here. Their structured prompts and brand voice memory cut editing time significantly, but never skip the human review. Hallucination rates on some tasks exceed 15%.

- Validation & QA: Mostly human judgment, augmented by fact-checking prompts in your AI writer and plagiarism checks via Copyscape or Originality.ai.

- Publishing & Integration: Pick a CMS with native automation, like Contentful AI Actions or WordPress with Advanced Custom Fields. Brittle Zapier chains will eventually break at the worst possible time.

- Monitoring: Stick with Ahrefs or Semrush for tracking rankings and the AI visibility metrics they've started rolling out.

The real Google SEO cost here isn't the subscription. It's the integration tax.

A cheaper tool that requires manual CSV exports every time will waste more in developer or marketer hours than a slightly more expensive API-native option. Evaluate every tool on how well it shares data with your CMS and data warehouse. That's the actual question.
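The arithmetic is simple enough to sketch. The figures below are hypothetical. They only show how a cheaper subscription can end up costing more once manual hours are priced in.

```python
def effective_monthly_cost(subscription: float, manual_hours: float, hourly_rate: float) -> float:
    """Subscription plus the loaded cost of the manual exports/reformatting it forces."""
    return subscription + manual_hours * hourly_rate

# Hypothetical figures for illustration only.
csv_export_tool = effective_monthly_cost(subscription=99, manual_hours=10, hourly_rate=60)
api_native_tool = effective_monthly_cost(subscription=249, manual_hours=1, hourly_rate=60)
```

With these made-up numbers, the $99 tool really costs $699 a month, and the $249 tool costs $309.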

87% of content marketers use AI now. Few have a connected stack. If you're trying to figure out how to optimize content for SEO at scale, the workflow is the advantage, not which widgets you're running.

Step 1: Conduct AI-Powered Keyword Research and Briefing

Start with keyword clustering in Ahrefs' Content Gap or Semrush's Topic Research. The point isn't just gathering keywords; it's grouping them by search intent.

For something like "how to optimize content for seo," you'll find transactional variants ("seo content optimization tool"), informational ("what is seo content optimization"), and commercial ("seo content optimization services"). Export those clusters as structured JSON.
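Here's a rough Python sketch of the intent grouping and JSON export. The modifier lists are hypothetical and deliberately crude. Real clustering tools lean on SERP overlap, so treat this as a first-pass sorter you'd refine by hand.

```python
import json
import re
from collections import defaultdict

# Hypothetical modifier lists, checked in this order; tune them against your own SERPs.
INTENT_MODIFIERS = {
    "commercial": ["services", "agency", "pricing", "best", "vs"],
    "transactional": ["tool", "software", "buy", "free trial"],
    "informational": ["what is", "how to", "guide", "why"],
}

def classify_intent(keyword: str) -> str:
    kw = keyword.lower()
    for intent, modifiers in INTENT_MODIFIERS.items():
        # \b word boundaries stop "vs" from matching inside words like "devs"
        if any(re.search(rf"\b{re.escape(m)}\b", kw) for m in modifiers):
            return intent
    return "informational"  # fallback when no modifier matches

def cluster_by_intent(keywords: list[str]) -> str:
    """Group keywords by intent and return the clusters as a JSON string."""
    clusters = defaultdict(list)
    for kw in keywords:
        clusters[classify_intent(kw)].append(kw)
    return json.dumps(clusters, indent=2, sort_keys=True)
```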

Then analyze the SERP for each cluster using an API like DataForSEO. I use this in Spectre to pull the top 10 results and extract their semantic structure. You want to see:

- Which entities appear consistently (tools, metrics, methodologies)

- Content length and structure patterns

- Missing angles your competitors haven't covered

That API output becomes the backbone of your content brief. Instead of telling an AI writer "write about SEO optimization," you feed it actual data: "The top 3 results average 2,800 words, all include FAQ sections, and mention 'E-E-A-T' 4-6 times. Competitor A covers technical optimization but misses AI visibility metrics."
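Turning parsed SERP data into those brief-ready numbers is mostly counting. This sketch assumes you've already fetched and parsed the top results into the hypothetical `SerpResult` shape below. That shape does not reflect DataForSEO's actual response format.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class SerpResult:
    # Hypothetical shape: fill it from whatever your SERP API and page parser return.
    url: str
    word_count: int
    has_faq: bool
    text: str

def summarize_top(results: list[SerpResult], entity: str, top_n: int = 3) -> dict:
    """Average length, FAQ presence, and entity mention counts for the top_n results."""
    top = results[:top_n]
    return {
        "avg_word_count": round(mean(r.word_count for r in top)),
        "all_have_faq": all(r.has_faq for r in top),
        "entity_mentions": [r.text.lower().count(entity.lower()) for r in top],
    }
```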

Build your brief with these sections:

- Primary keyword and variants (from your clustered JSON)

- Target questions to answer (pull from "People Also Ask" via the API)

- Competitive analysis gaps (what top results miss)

- Required E-E-A-T signals (specific studies to cite, expert quotes needed)

- Outline structure (H2s and H3s based on SERP patterns)

This matters because when an AI gets vague instructions, it invents. When it gets specific JSON with competitor word counts, missing entities, and required citations, it produces grounded content. The brief is what controls the output.
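One way to keep briefs machine-readable is a small typed structure that serializes to JSON. The `ContentBrief` class and its field names are hypothetical, mirroring the brief sections listed above.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ContentBrief:
    primary_keyword: str
    variants: list[str]
    questions: list[str]           # e.g. pulled from People Also Ask
    competitor_gaps: list[str]     # what the top results miss
    eeat_requirements: list[str]   # studies to cite, expert quotes needed
    outline: dict[str, list[str]] = field(default_factory=dict)  # H2 -> list of H3s

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)
```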

Cross-reference with at least two data sources before finalizing. Check Ahrefs' keyword difficulty and volume estimates against Semrush's. If they disagree significantly, find out why before you commit to targeting that keyword.
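A crude way to automate that disagreement check is a ratio test on the two volume estimates. The 2x threshold is an arbitrary assumption. Pick one that matches how noisy your data sources usually are.

```python
def sources_disagree(volume_a: int, volume_b: int, max_ratio: float = 2.0) -> bool:
    """Flag a keyword when two tools' volume estimates differ by more than max_ratio times."""
    low, high = sorted((volume_a, volume_b))
    if low == 0:
        return high > 0  # one tool sees demand, the other sees none
    return high / low > max_ratio
```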

Store your briefs somewhere with version history; Notion and Airtable both work. As you publish and track performance, you'll see which assumptions actually held up and which didn't.

Step 2: Draft Content with AI Assistance Using Templates

Brief ready? Open your AI writer and pick a template that matches your content type. Don't start with a blank chat interface. That's where consistency breaks down.

I use Jasper's Article Writer template because it handles the whole workflow (research, outline, draft) in one sequence.

Import your brief directly into the template fields. Most decent AI writers have dedicated sections for target keywords, competitor URLs, and SERP analysis. Paste your structured data from Ahrefs or Semrush here. Concrete guardrails beat vague instructions every time.

Configure the template to inject E-E-A-T elements automatically. Tell it to include specific expertise markers: "Cite statistics from [Source Name]", "Reference industry standards like ISO 27001", "Include real-world implementation examples." This avoids the generic "according to experts" phrasing that readers just scroll past.
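If your AI writer accepts free-text instructions, or you're calling a model API directly, the same guardrails can be assembled from the brief programmatically. This is a minimal sketch. It assumes a brief dict with the hypothetical keys used below, and it only builds the prompt text without calling any model.

```python
def build_draft_prompt(brief: dict) -> str:
    """Turn a structured brief into explicit drafting instructions."""
    lines = [f"Write an article targeting '{brief['primary_keyword']}'.",
             "Follow this outline exactly:"]
    lines += [f"## {h2}" for h2 in brief["outline"]]
    lines.append("Answer each of these questions:")
    lines += [f"- {q}" for q in brief["questions"]]
    lines.append("E-E-A-T requirements:")
    lines += [f"- {rule}" for rule in brief["eeat_requirements"]]
    lines.append("Do not invent statistics; attribute every number to a named source.")
    return "\n".join(lines)
```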

Generate three draft variants, not one. Review each for factual accuracy, structural alignment with your brief, and natural keyword placement. You're looking for the version that best captures user intent while keeping an authoritative tone.

74% of new web content was created with generative AI in May 2025. Differentiation matters more than it did a year ago.

Treat the AI output as a sophisticated first draft, not publishable content. Check that headings follow your prescribed structure, that key entities appear where planned, and that the intro directly addresses the searcher's problem. If sections feel thin, use the AI's "expand" function with specific prompts such as "Add a practical example of implementing X in WordPress" or "Include a comparison table between Y and Z alternatives."
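Part of that check is mechanical and can run before a human reads a word. This sketch assumes the draft comes out as Markdown with `##` for H2s. The function name and return shape are hypothetical.

```python
import re

# Matches "## Heading" lines only (not "#" or "###"), trimming trailing spaces.
H2 = re.compile(r"^##[ \t]+(.+?)[ \t]*$", re.MULTILINE)

def check_draft(markdown: str, planned_h2s: list[str], entities: list[str]) -> dict:
    """Compare a Markdown draft against the brief's H2 outline and entity list."""
    found_h2s = H2.findall(markdown)
    text = markdown.lower()
    return {
        "missing_h2s": [h for h in planned_h2s if h not in found_h2s],
        "missing_entities": [e for e in entities if e.lower() not in text],
    }
```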

Part of figuring out how to optimize content for SEO with AI is realizing the template isn't doing the thinking for you. You're steering it. The Google SEO cost of getting this wrong is wasted crawl budget and content that ranks for nothing.

Step 2 at a glance:

- Select AI templates matching your content type (guides, blog posts, comparisons)

- Import structured briefs with keywords, entities, and competitor analysis

- Configure templates to automatically inject E-E-A-T elements

- Generate multiple draft variants for comparison

- Treat AI output as sophisticated first drafts requiring human refinement

Step 3: Validate AI Outputs with Fact-Checking Prompts

Your AI-generated draft is a sophisticated first draft. Not a finished product.

This is where you build your quality gate. LLMs hallucinate. Reported hallucination rates for recent models exceed 15% on some benchmark tasks. In YMYL (Your Money or Your Life) niches like finance or health, both the error rate and the stakes can be higher.

You must verify everything.

- Run a standard verification prompt. Feed the AI draft back into your LLM with a strict prompt. I use this template in Spectre: "You are a fact-checker. For every claim in the following text, cross-reference it against the top 3 SERP URLs provided in the brief. List any factual statements that cannot be verified, appear contradictory, or are unsupported by the sources. Flag any statistics without attribution."

- Check for relevance and completeness. The AI might miss the core search intent entirely. Manually skim the draft against your brief's "questions to answer." Does it address each one? Does it include the key entities and secondary keywords? AI often omits nuanced points that show up in competitor analyses.

- Inject human expertise for E-E-A-T. This one is non-negotiable. A human editor needs to read the piece and add experiential insight: a "gotcha" from implementation, a counter-intuitive finding, a specific tool recommendation. For a technical guide, I'll always add a paragraph on a common API error and its fix. No LLM would know that. That's the point.

- Enforce a mandatory editorial review gate. Nothing moves to optimization until a human, preferably a subject matter expert, signs off on it. Document this in your workflow (a required "Approved" status in your CMS works fine). This is your last line of defense against inaccuracies that damage credibility and rankings.
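A deterministic pre-filter can catch the easiest class of problems before the LLM and the editor get involved: numbers with no attribution. This sketch assumes the "[Source: ...]" citation convention used in this article and relies on a naive sentence splitter. It flags statistics for review but doesn't verify them.

```python
import re

# Percentages and multipliers like "58.5%", "4.4×", or "10x".
STAT = re.compile(r"\d+(?:\.\d+)?\s?(?:%|×|x\b)")
# Naive splitter: break after ., ! or ? followed by whitespace (keeps "58.5%" intact).
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")

def unattributed_stats(text: str) -> list[str]:
    """Return sentences that contain a statistic but no "[Source:" attribution."""
    flagged = []
    for sentence in SENTENCE_SPLIT.split(text):
        if STAT.search(sentence) and "[Source:" not in sentence:
            flagged.append(sentence.strip())
    return flagged
```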

A big part of figuring out how to optimize content for SEO is accepting that AI gets things wrong, regularly. The Google SEO cost of skipping this step isn't just a rankings hit; it's published misinformation with your name on it.

Treat AI like a powerful, error-prone research assistant. Your validation protocol is what makes the output actually trustworthy.

Step 4: Apply On-Page and Technical Optimizations

Draft validated? Now you switch modes. Content creation is done; this is technical implementation, and it's where most AI workflows quietly fall apart. They generate text and stop, ignoring the machine-readable signals that determine whether search engines can find and surface what you built.

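Start with structured data. Use Google's Structured Data Markup Helper to generate Article, FAQPage, or HowTo schema depending on your content type. Drop the JSON-LD script in your <head> section, then validate it with Google's Rich Results Test to catch syntax errors before they become invisible problems. Use the Schema Markup Validator for HowTo. Since 2023 Google no longer shows HowTo rich results, and it limits FAQ rich results to well-known government and health sites.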
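If you'd rather generate the markup from your content than click through the Markup Helper, FAQPage JSON-LD is just a nested object. Here's a minimal sketch using schema.org's FAQPage, Question, and Answer types. The function name is hypothetical. The `"</"` escape keeps an answer containing `</script>` from closing the tag early, and `\/` is still valid JSON.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Wrap question/answer pairs in a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```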

Google won't guarantee schema gets you into AI Overviews. But without it, you're not even in the conversation.

Next, audit your technical foundations. Run the page through Google PageSpeed Insights and look at the Core Web Vitals: LCP, INP (which replaced FID in March 2024), and CLS. I've watched pages with genuinely good content fail to rank because Largest Contentful Paint crept past 2.5 seconds. Also check mobile rendering with Lighthouse or Chrome DevTools device emulation. Not optional.
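Google publishes fixed boundaries for each Core Web Vital, assessed at the 75th percentile of page loads:

- LCP is good at 2.5 s or less and poor above 4 s.
- INP is good at 200 ms or less and poor above 500 ms.
- CLS is good at 0.1 or less and poor above 0.25.

A small classifier makes them easy to apply to whatever numbers your monitoring exports. The function itself is illustrative.

```python
# (good_max, needs_improvement_max) per metric; anything above the second value is "poor".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}

def rate(metric: str, value: float) -> str:
    """Classify a 75th-percentile measurement as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"
```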

Add internal links to a few related articles on your site. This isn't just about passing authority around; it builds semantic relationships that AI systems read to understand whether your site actually knows this topic. While you're at it, fix broken links. Semrush's Site Audit tool catches them fast, and broken links read as neglect to crawlers.

Optimize your meta tags. Put the primary keyword in the title tag and keep it under 60 characters. Keep the meta description under 160 characters and write it to earn the click. Write descriptive alt text for every image, and name the files properly: something like how-to-optimize-content-for-seo-technical-checklist.jpg instead of IMG_1234.jpg.
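These limits are easy to lint automatically. The sketch below treats 60 and 160 characters as the rough limits above; Google actually truncates by pixel width, so they're approximations. It also includes a helper that turns a descriptive phrase into an image file name. Both function names are hypothetical.

```python
import re

def slugify(text: str) -> str:
    """Lowercase, replace every run of non-alphanumerics with '-', trim stray dashes."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")

def meta_issues(title: str, description: str, keyword: str) -> list[str]:
    """List problems with a title/description pair; an empty list means it passes."""
    issues = []
    if len(title) > 60:
        issues.append(f"title is {len(title)} chars (limit 60)")
    if keyword.lower() not in title.lower():
        issues.append("primary keyword missing from title")
    if len(description) > 160:
        issues.append(f"description is {len(description)} chars (limit 160)")
    return issues
```

For example, `slugify("How to Optimize Content for SEO: Technical Checklist") + ".jpg"` yields the file name used in the example above.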

Then inspect the page in Google Search Console. Use the URL Inspection tool's live test to confirm Google can actually render the page, especially if you're on a JavaScript-heavy setup where AI crawlers sometimes struggle to see what users see.

That's it. Structured data gives the machines clear signals. The technical layer makes sure nothing blocks those signals from getting through.

Step 5: Publish with Automated CMS Integration

Automation breaks down at the last step more often than anywhere else.

Connect your AI workflow directly to your CMS using APIs or native integrations like Contentful's AI Actions. This turns your pipeline into something deterministic instead of a series of handoffs where errors sneak back in.

Configure your CMS to receive drafts from your AI writer via webhook or API. Most modern platforms support this: WordPress through its REST API, Contentful, Sanity. The draft arrives as structured JSON, so your headings, schema markup, and optimized sections all survive the transfer. No copy-paste corruption.
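For WordPress specifically, "receiving a draft" means POSTing to the core `/wp-json/wp/v2/posts` endpoint. This minimal sketch builds the request without sending it. It assumes you authenticate with a WordPress Application Password over HTTPS. The site, user, and function name are placeholders. Setting `status` to `"draft"` keeps the editorial gate in place.

```python
import base64
import json
from urllib.request import Request

def wp_draft_request(site: str, user: str, app_password: str, title: str, html: str) -> Request:
    """Build (but don't send) a request that creates a draft post via the WP REST API."""
    token = base64.b64encode(f"{user}:{app_password}".encode()).decode()
    body = json.dumps({"title": title, "content": html, "status": "draft"}).encode()
    return Request(
        url=f"{site.rstrip('/')}/wp-json/wp/v2/posts",
        data=body,
        headers={"Authorization": f"Basic {token}", "Content-Type": "application/json"},
        method="POST",
    )
```

Pass the result to `urllib.request.urlopen` when you're ready to send it.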

For meta descriptions, set up a Contentful AI Action to read your H1 and first paragraph, then produce a few description options. Schedule these for editorial review. Don't auto-publish them. That's the balance point between automation and actually catching problems before they go live [Source: Contentful's documentation on AI Actions].

Use CMS hooks to apply schema markup dynamically. Based on your earlier analysis, trigger different JSON-LD templates: HowTo schema for tutorials, FAQPage for Q&A content, Article for standard posts. Every published piece gets machine-readable structure. No manual intervention.

Before you hit publish, add a verification step. Run a crawl simulation with something like Screaming Frog to confirm internal links are intact and no critical tags got stripped during import. Your CMS scheduler can handle timing, but let this check gate the release.
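Screaming Frog handles the full crawl. A lightweight gate on the rendered HTML can still block a release when critical tags went missing during import. This sketch uses Python's built-in `html.parser`. The required tags and the two-internal-link minimum are assumptions you'd adjust to your own template. It checks that links exist, not that they resolve.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class TagAudit(HTMLParser):
    """Records which release-critical tags appear in a rendered page."""

    def __init__(self):
        super().__init__()
        self.has_title = False
        self.has_meta_description = False
        self.has_jsonld = False
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self.has_title = True
        elif tag == "meta" and a.get("name") == "description":
            self.has_meta_description = True
        elif tag == "script" and a.get("type") == "application/ld+json":
            self.has_jsonld = True
        elif tag == "a" and a.get("href"):
            self.hrefs.append(a["href"])

def is_internal(href: str, site_host: str) -> bool:
    parsed = urlparse(href)
    if not parsed.netloc:
        return not parsed.scheme  # relative path; excludes mailto:, tel:, etc.
    return parsed.hostname == site_host

def release_blockers(html: str, site_host: str, min_internal_links: int = 2) -> list[str]:
    """Return reasons to hold the release; an empty list means the gate passes."""
    audit = TagAudit()
    audit.feed(html)
    blockers = []
    if not audit.has_title:
        blockers.append("missing <title>")
    if not audit.has_meta_description:
        blockers.append("missing meta description")
    if not audit.has_jsonld:
        blockers.append("missing JSON-LD")
    internal = [h for h in audit.hrefs if is_internal(h, site_host)]
    if len(internal) < min_internal_links:
        blockers.append(f"only {len(internal)} internal links")
    return blockers
```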

Then, immediately after publishing, let Search Console know the page exists. The Search Console API can't force indexing. Through it, you can submit or resubmit your sitemap and check status with the URL Inspection API. "Request Indexing" is a manual button in the URL Inspection tool, and Google's separate Indexing API is officially limited to job-posting and livestream pages. Watch the initial crawl status to catch integration failures early. This is part of how to optimize content for SEO that most people skip, and it's also where you avoid the hidden Google SEO cost of losing days to a crawl delay you didn't know happened.
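Google's URL Inspection API reports index status rather than triggering indexing, so it fits the monitoring half of this step. Below is a minimal sketch of the request body and response parsing. It assumes the endpoint and the field names `inspectionUrl`, `siteUrl`, and `inspectionResult.indexStatusResult.verdict`/`coverageState` as given in the API reference; verify them before wiring this in. OAuth and the HTTP call itself are omitted.

```python
import json

# URL Inspection endpoint for the Search Console API (verify against the current reference).
INSPECT_ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"

def inspection_body(page_url: str, property_url: str) -> str:
    """JSON body for an inspection request; property_url is your verified Search Console property."""
    return json.dumps({"inspectionUrl": page_url, "siteUrl": property_url})

def index_verdict(response: dict) -> tuple[str, str]:
    """Return (verdict, coverageState), tolerating missing fields in partial responses."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return status.get("verdict", "UNKNOWN"), status.get("coverageState", "")
```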

Think of publishing like deploying code: automated, repeatable, with clear rollback points if something breaks.

Step 6: Monitor AI Visibility and Traditional Metrics

Your content is live. Now what are you actually tracking?

There are two distinct layers here. Traditional SEO metrics (rankings, organic traffic) tell you how you're doing in blue-link search. AI visibility metrics tell you whether you're showing up in AI Overviews and chat-based search. That second layer matters because over 58.5% of Google searches now end without a click [Source: Goodfirms 2026].

Start with your AI citation rate. This measures how often your content gets cited in AI-generated answers.

Set up Ahrefs Custom Prompts for your core questions. Create a prompt for something like "What is the 80/20 rule in SEO?" and track which URLs appear in the response. You'll see a citation count and visibility share against competitors. And only 12% of AI Mode citations match URLs in the organic SERP [Source: Moz], so this isn't just a variation on traditional ranking, it's a genuinely different channel.
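Whatever tool collects the answers, the two numbers are simple ratios. This sketch assumes you've exported, for each tracked prompt, the list of domains cited in the AI response. The definitions below are working definitions and may differ from how Ahrefs computes its own figures:

- Citation rate is the share of prompts that cite you.
- Visibility share is your share of all citations.

```python
from collections import Counter

def citation_metrics(prompt_citations: dict[str, list[str]], our_domain: str) -> dict:
    """prompt_citations maps each tracked prompt to the domains cited in the AI answer.

    citation_rate    = prompts citing our_domain / prompts tracked
    visibility_share = citations of our_domain / all citations across prompts
    """
    total_prompts = len(prompt_citations)
    cited_prompts = sum(our_domain in domains for domains in prompt_citations.values())
    all_citations = Counter(d for domains in prompt_citations.values() for d in domains)
    total_citations = sum(all_citations.values())
    return {
        "citation_rate": cited_prompts / total_prompts if total_prompts else 0.0,
        "visibility_share": all_citations[our_domain] / total_citations if total_citations else 0.0,
    }
```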

Next, look at conversion quality. AI search visitors convert 4.4× better than traditional organic visitors [Source: Semrush]. Segment your analytics to compare conversion rates from AI-sourced sessions against standard organic traffic. That tells you pretty quickly whether your content is pulling in people who actually want what you're offering.
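If your analytics export gives you the referrer for each session, the segmentation takes only a few lines. This sketch assumes the input is already limited to organic search and AI-assistant sessions. The AI referrer list is hypothetical and incomplete, so extend it from your own referral reports.

```python
from urllib.parse import urlparse

# Hypothetical, incomplete list of AI assistant referrer hosts; extend as needed.
AI_REFERRERS = {"chatgpt.com", "perplexity.ai", "gemini.google.com", "copilot.microsoft.com"}

def source_of(referrer: str) -> str:
    host = urlparse(referrer).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    return "ai" if host in AI_REFERRERS else "organic"

def conversion_rates(sessions: list[tuple[str, bool]]) -> dict[str, float]:
    """sessions: (referrer URL, converted?) pairs. Returns conversion rate per source."""
    counts: dict[str, list[int]] = {}
    for referrer, converted in sessions:
        bucket = counts.setdefault(source_of(referrer), [0, 0])  # [sessions, conversions]
        bucket[0] += 1
        bucket[1] += int(converted)
    return {src: conv / n for src, (n, conv) in counts.items()}
```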

Then build a simple dashboard. Google Looker Studio or a shared spreadsheet works fine. Combine:

- AI Visibility: Citation rate per prompt, visibility share.

- Traditional Metrics: Target keyword rankings, organic traffic, click-through rate.

- Business Metrics: Conversions, conversion rate by source, lead quality.

Review it weekly. High AI citation but low traditional ranking? Double down on that content format. AI traffic converting poorly? Revisit the commercial intent of that piece.
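Those two review rules can be encoded so the weekly pass starts from a to-do list instead of a blank dashboard. The thresholds and the page record shape are placeholders, not benchmarks:

- citation rate of 30% or more
- ranking outside the top 10
- AI conversion rate below 1%

```python
def weekly_actions(pages: list[dict], citation_floor: float = 0.3,
                   rank_ceiling: int = 10, ai_cr_floor: float = 0.01) -> dict[str, list[str]]:
    """Map each page URL to the review actions its metrics trigger."""
    actions = {}
    for p in pages:
        todo = []
        # Cited often by AI but weak in blue links: the format works, lean into it.
        if p["citation_rate"] >= citation_floor and p["rank"] > rank_ceiling:
            todo.append("double down on this content format")
        # AI visitors arrive but don't convert: the commercial angle may be off.
        if p["ai_conversion_rate"] < ai_cr_floor:
            todo.append("revisit commercial intent")
        if todo:
            actions[p["url"]] = todo
    return actions
```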

This is where figuring out how to optimize content for SEO gets real: not in the setup, but in the feedback loop. It's also where you start to see the actual Google SEO cost of ignoring AI visibility entirely, because that's traffic and conversions you're not measuring.

Avoid Common AI SEO Mistakes

Your AI-powered workflow is only as strong as its weakest link. I've seen these mistakes derail otherwise solid strategies, especially when teams get excited about scale and forget fundamentals.

Mistake 1: Publishing AI output without human editorial review. This is the cardinal sin. Even the best models hallucinate; reported rates exceed 15% on some benchmark tasks.

Every piece of AI-generated content needs a human editor who fact-checks claims, verifies citations, and confirms brand voice before publishing. Non-negotiable.

Mistake 2: Ignoring technical SEO foundations. You can't out-optimize a broken site. AI search engines still need to crawl and render your content.

Before scaling, fix the basics: broken links, Core Web Vitals, mobile-friendliness, indexation issues. Semrush recommends this as a baseline for AI search performance. Crawlability is a prerequisite, not an optimization.

Mistake 3: Not tracking AI visibility metrics. If you're only watching traditional rankings, you can't see how you perform in AI answers. That blind spot matters more when over 58.5% of Google searches now end without a click.

Set up Ahrefs Custom Prompts or similar to monitor your "AI citation rate" (how often your content gets cited in AI-generated answers). That's your new share of voice.

Mistake 4: Assuming structured data guarantees AI inclusion. Adding FAQPage or HowTo schema helps AI systems understand your content's structure, but it doesn't guarantee citation. Only 12% of AI Mode citations match URLs in the organic SERP.

Use structured data as a clarity signal. Not a silver bullet.

Mistake 5: Treating AI as a ranking factor shortcut. Google's guidance is clear: creation method isn't a ranking factor. The March 2024 update specifically targeted scaled content abuse, regardless of how that content was produced.

Focus on quality, helpfulness, and E-E-A-T first, then use AI to scale that quality, not replace it. This is the part that determines the real Google SEO cost of getting it wrong, and it's the same principle that applies when you're figuring out how to optimize content for SEO at any scale.

AI is a force multiplier for sound strategy. Build your pipeline around human judgment, then automate everything else.

Conclusion

Knowing how to optimize content for SEO in 2026 comes down to one thing: building a deterministic pipeline, not just grabbing the latest AI tool.

You need a workflow that combines AI's scale with human judgment, specifically built to capture both traditional rankings and AI search visibility.

Start by auditing your foundations. Then connect tools that actually integrate, not just coexist. Use AI for drafting and research, but validate everything with fact-checking prompts and real editorial review.

Track traditional metrics, but also track AI citation rates. Over 58.5% of Google searches now end without a click [Source: goodfirms.co], so if AI citations aren't on your dashboard, you're missing a big part of the picture.

Your domain authority dictates your keyword strategy. For sites with DR 30-50, focus on long-tail questions with clear, extractable answers. Structured data helps AI understand your content, but it doesn't guarantee inclusion. Quality and accuracy are still your best defense.

Don't try to do all of this at once.

Pick one step, maybe the validation layer, implement it, measure the impact, then scale. The goal isn't to remove human input. It's to make that input go further.

Your competition is already somewhere in this process; 87% of content marketers use AI in some capacity [Source: taylorscherseo.com]. That's also what drives the real Google SEO cost of getting it wrong: everyone's scaling, but not everyone's scaling carefully.

The question isn't whether you'll use AI. It's how systematically you'll build around it.

Frequently Asked Questions

What is the 80/20 rule in SEO?

The 80/20 rule means 80% of your results come from 20% of your efforts. In SEO, that high-leverage 20% is mostly human work. It means targeting keywords you can actually rank for given your domain authority, fact-checking against hallucination risk, and injecting E-E-A-T signals. AI consistently misses those.

Much of the remaining 80% is the repetitive stuff, like meta description generation and initial drafts. That's where AI earns its keep.

Can ChatGPT do SEO?

It's a powerful assistant. Not a replacement. It's good at scale tasks: brainstorming long-tail keyword variations, drafting outlines from a brief, generating meta description options.

But you still have to provide the direction, validate every factual claim, and bring the expertise that search engines now prioritize. It's a tool in the workflow, not the workflow itself.

Is SEO dead or evolving in 2026?

Aggressively evolving. Over 58.5% of Google searches now end without a click [Source: goodfirms.co], but AI-sourced sessions grew 527% year-over-year [Source: taylorscherseo.com].

The game has shifted from ranking position to citation rate and AI visibility. Quality and relevance still matter, but your tactics and KPIs need to catch up with where traffic is actually coming from now.

Will AI replace SEO?

It won't replace SEOs. It'll just change what the job is. The role moves from manual execution to strategic oversight: designing the pipelines, setting quality gates, interpreting AI visibility data.

AI handles the scale. Humans handle the judgment. The teams that figure out how to optimize content for SEO inside that hybrid model are the ones that won't be scrambling to catch up, and they're the ones keeping their Google SEO cost from quietly spiraling.