April 25th, 2026

Top AI Writing Tools for SEO: How to Build Your Content Automation Stack

Warren Day

Most people aren't missing a better tool. They're missing a system.

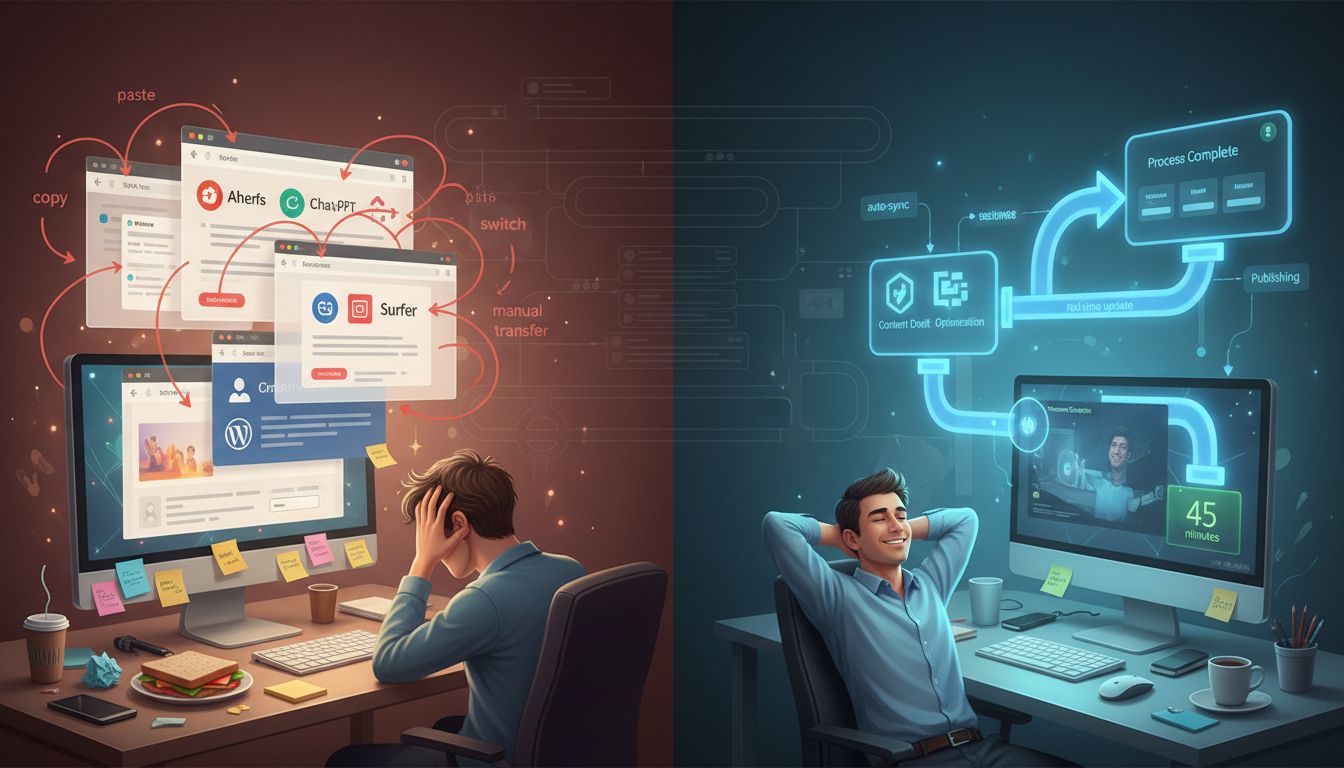

You've probably seen a dozen "top AI writing tools" lists. You try a few, get mediocre content, and your workflow ends up more fragmented than before.

The tools aren't the problem.

28% of marketers put over 40% of their budget toward AI infrastructure, and 74% use at least one AI tool at work [Source: amraandelma.com, hubspot.com]. But most teams still treat AI writing like a grab bag of point solutions. They grab Jasper for copy, something else for research, maybe a Sudowrite alternative for long-form, and nothing talks to anything else.

So the content comes out generic. Not because the tools are bad, but because there's no pipeline connecting them.

Sustainable SEO growth in 2026 doesn't come from finding the "best" tool. It comes from building a connected system that handles research, creation, optimization, and publishing as one cohesive thing, not four separate tabs.

I've spent years building SEO automation platforms and working with everyone from early-stage startups to major media companies. The pattern is always the same. The teams winning aren't the ones chasing the best free AI writing tools or comparing Sudowrite pricing plans. They're the ones who treat content like software infrastructure, designed around interoperability and measurable outputs.

In this guide, you'll get a four-pillar framework for evaluating tools by their role in your workflow, not their feature lists. You'll see how platforms like Frase, Surfer, and Jasper actually perform when they're connected to real keyword research and SERP data. And if you're looking for a Sudowrite alternative or a free AI for research paper writing, there are practical options here too.

The goal isn't another tool to try. It's a system that runs without you babysitting it.

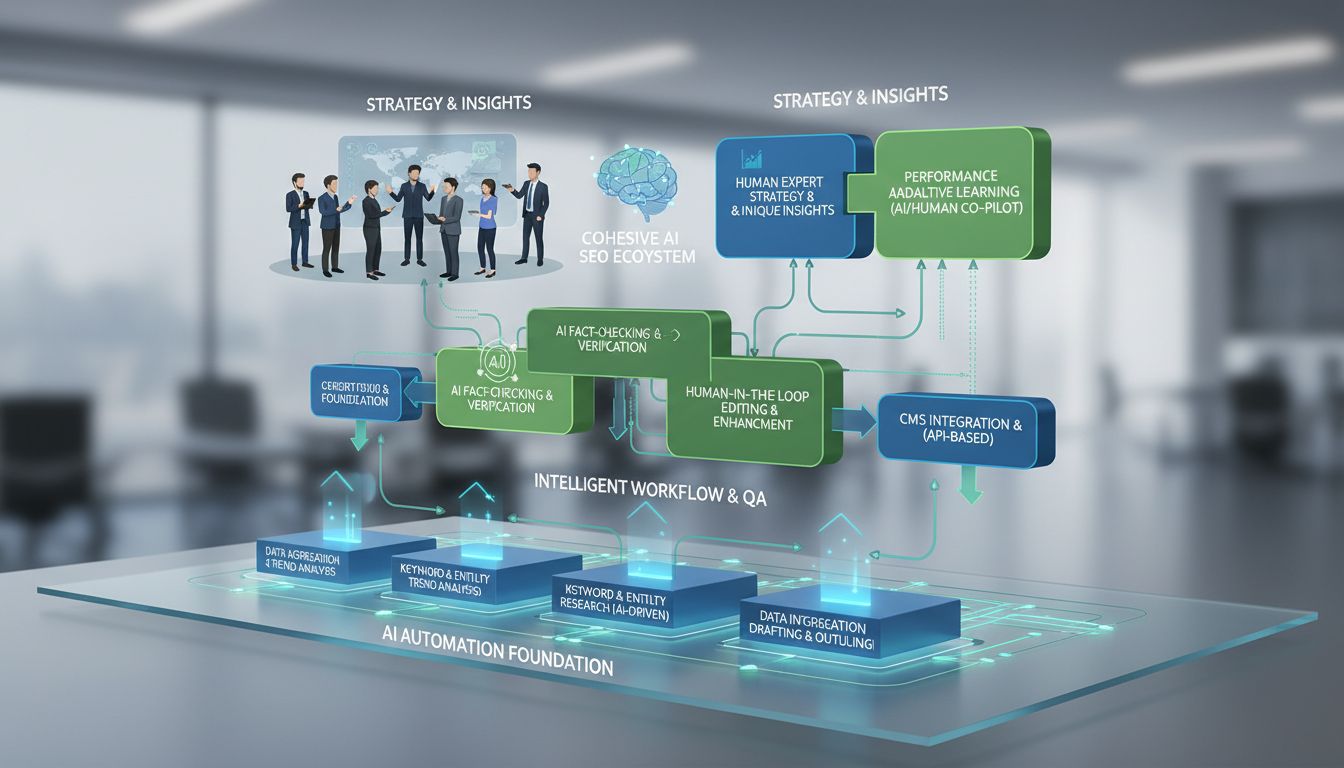

The AI SEO Stack Framework: The 4 Pillars of Automation

Think of your SEO workflow as a production line. Not a collection of disconnected apps you tab between all day.

The real bottleneck isn't finding another writing tool. It's the manual handoffs between research, creation, optimization, and publishing. Every time you copy-paste keywords from Ahrefs into ChatGPT, then into Surfer for scoring, then into WordPress, you're creating friction that doesn't scale.

The fix is building an automation stack: a connected pipeline where data flows between four functional pillars. When it's set up right, article production drops from 9-14 hours to under an hour. Frase's agentic SEO pipeline is a good example of this working in practice.

Here's how each pillar works.

1. Research & Briefing: The Foundation

This pillar takes raw keyword data and outputs structured content briefs. That means more than keyword research: automated SERP analysis, competitor breakdown, and intent mapping.

Surfer's Keyword Research clusters semantically related terms and surfaces content gaps automatically. Frase's Auto-brief feature analyzes the top 20 ranking pages, pulls their structure, and flags the questions your content is missing.

The output isn't a spreadsheet. It's a production-ready brief with target word count, heading structure, and required semantic terms.

From building Spectre, the thing that clicked for me: treat keyword clusters as atomic units, not individual terms. The system analyzes the full SERP, then tells you exactly what to write about, not just which keywords to stuff in.

2. Content Generation & Drafting: The Assembly Line

With a structured brief, this pillar handles initial content creation. The distinction that matters here is between generic AI writing and SEO-aware drafting that actually follows the brief's architecture.

Jasper's Optimization Agent translates research outputs into bulk SEO/AEO/GEO briefs. Copy.ai's Workflow Builder lets you customize each step, from keyword input to draft generation, while keeping brand voice consistent.

The goal isn't a perfect draft. It's a 70-80% complete draft that follows the brief and hits the semantic requirements.

The engineering angle: treat the AI writer as a component. Structured input goes in (the brief), structured output comes out (the draft). That's what stops the generic, flat content that comes from winging it in ChatGPT.
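To make the "component" idea concrete, here's a minimal sketch of what structured input and structured output could look like. The `Brief` and `Draft` types, the `draft_from_brief` function, and the `fake_generate` stand-in are all hypothetical names for illustration; no vendor's actual schema or SDK is implied.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class Brief:
    """Structured input: what the research pillar hands to the writer."""
    keyword: str
    target_word_count: int
    headings: List[str]
    required_terms: List[str]


@dataclass
class Draft:
    """Structured output: the text plus a record of what it still lacks."""
    keyword: str
    body: str
    missing_terms: List[str] = field(default_factory=list)


def draft_from_brief(brief: Brief, generate: Callable[[str], str]) -> Draft:
    """Build a prompt from the brief, call the model, then check the result."""
    prompt = (
        f"Write about '{brief.keyword}' in roughly {brief.target_word_count} words.\n"
        "Use these headings in order:\n"
        + "\n".join(f"- {h}" for h in brief.headings)
        + "\nCover these terms: " + ", ".join(brief.required_terms)
    )
    body = generate(prompt)
    lowered = body.lower()
    missing = [t for t in brief.required_terms if t.lower() not in lowered]
    return Draft(keyword=brief.keyword, body=body, missing_terms=missing)


# Stand-in generator (an assumption) so the sketch runs without any vendor SDK.
def fake_generate(prompt: str) -> str:
    return "A short draft about content briefs and SERP analysis."


brief = Brief(
    keyword="content briefs",
    target_word_count=1200,
    headings=["What a brief contains", "How to automate it"],
    required_terms=["SERP analysis", "search intent"],
)
draft = draft_from_brief(brief, fake_generate)
print(draft.missing_terms)
```

Because the draft carries its own list of missing terms, the next pillar can act on it without a human reading the text first.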

3. On-Page & Technical Optimization: Quality Control

This is where most teams fall apart. Decent drafts, but the technical elements that actually move rankings are missing.

Surfer's Auto-Optimize rewrites paragraphs to lift SEO score without changing the meaning. Writesonic's SEO AI Agent Mode gives real-time recommendations on keyword density, heading structure, and meta elements.

The system should flag missing schema, suggest internal links, and check technical compliance before anything gets near publishing.

Working inside large media companies, I've seen this stage separate ranking content from filler. Writing about a topic is different from writing for search intent, and the system should enforce that distinction, not leave it up to whoever's having a good day.

4. Publishing & Orchestration: The Delivery System

The final pillar handles deployment and workflow management. This is where APIs and integrations turn individual tools into something that actually runs on its own.

Frase offers 50+ API endpoints for content management, SERP analysis, and optimization. Surfer integrates natively with Zapier and Make.com. These connections let you schedule content, push to your CMS, and trigger quality checks without touching it manually.

From an engineering standpoint, the goal is idempotent workflows: processes that can run repeatedly without duplication or error. Versioning, approvals, publishing, audit trails. The system handles it.
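One common way to get idempotency is to derive a key from the content and skip any publish whose key has already been seen. The sketch below assumes an in-memory `Publisher` class as a stand-in for a real CMS client. Both the class and the key scheme are illustrative, not any tool's actual behavior.

```python
import hashlib
from typing import Dict


def idempotency_key(slug: str, body: str) -> str:
    """Same slug and same content always produce the same key."""
    digest = hashlib.sha256(f"{slug}\n{body}".encode("utf-8")).hexdigest()
    return digest[:16]


class Publisher:
    """In-memory stand-in for a CMS client (hypothetical)."""

    def __init__(self) -> None:
        self.published: Dict[str, str] = {}  # key -> slug
        self.calls = 0  # how many real publishes happened

    def publish(self, slug: str, body: str) -> bool:
        """Return True if a publish happened, False if it was a repeat."""
        key = idempotency_key(slug, body)
        if key in self.published:
            return False  # already done, so re-running is a no-op
        self.calls += 1  # the real CMS call would go here
        self.published[key] = slug
        return True


cms = Publisher()
first = cms.publish("ai-seo-stack", "Draft v1")
retry = cms.publish("ai-seo-stack", "Draft v1")    # e.g. a retried automation step
revised = cms.publish("ai-seo-stack", "Draft v2")  # new content, new version
print(first, retry, revised, cms.calls)
```

In production the key store would live somewhere durable (a database row or a CMS custom field), but the check-before-write shape stays the same.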

Here's how these pillars connect in practice:

```mermaid
graph LR
    A[Keyword Research<br/>Ahrefs/SEMrush] --> B[Research & Briefing<br/>Surfer/Frase]
    B --> C[Content Generation<br/>Jasper/Copy.ai]
    C --> D[On-Page Optimization<br/>Surfer/Writesonic]
    D --> E[Publishing & Orchestration<br/>Frase API/Zapier]
    E --> F[CMS/WordPress]
```

Data flows one direction. Each pillar adds value and passes structured output to the next.
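If it helps to think in code, the one-directional flow can be sketched as a chain of functions where each stage takes the previous stage's output and adds its own field. The stage functions here are placeholders I made up for illustration. In a real stack each one would wrap a tool or an API call.

```python
from functools import reduce
from typing import Callable, Dict, List

Stage = Callable[[Dict], Dict]


def research(item: Dict) -> Dict:
    return {**item, "brief": f"Brief for {item['keyword']}"}


def generate(item: Dict) -> Dict:
    return {**item, "draft": f"Draft based on: {item['brief']}"}


def optimize(item: Dict) -> Dict:
    return {**item, "optimized": True}


def publish(item: Dict) -> Dict:
    return {**item, "status": "scheduled"}


def run_pipeline(item: Dict, stages: List[Stage]) -> Dict:
    """Feed each stage's output into the next, in one direction only."""
    return reduce(lambda acc, stage: stage(acc), stages, item)


result = run_pipeline(
    {"keyword": "ai seo stack"},
    [research, generate, optimize, publish],
)
print(result["status"], sorted(result))
```

Each stage only reads what earlier stages wrote, which is what makes it possible to swap one tool out without touching the others.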

When you evaluate tools through this lens, you stop asking "which of the top AI writing tools is best?" and start asking "which components plug cleanly into my production line?" That's a different question, and it gets you a different answer.

That architectural shift is what allowed Opinion Stage to save 500+ hours while improving SERP positions. Not more tools. Tools that talk to each other.

Top AI Writing Tools for SEO in 2026: A System Architect's Review

Forget ranking tools in a vacuum. The only question worth asking is: how well does this thing plug into the four-pillar stack? A tool with brilliant features and a closed ecosystem is just a dead end for automation. Here's my breakdown.

Frase: The Automation Powerhouse

If you want to automate the entire content production line, Frase is your control panel. Its strength is in the Research & Briefing and Publishing & Orchestration pillars, and the differentiator isn't the AI writing itself. It's the API surface: over 50 endpoints covering content management, SERP analysis, and AI visibility tracking [Source: Frase.io].

That API-first design is what enabled their six-stage agentic SEO pipeline to cut article production from 9-14 hours down to 30-60 minutes [Source: Frase.io]. You can trigger research, generate a brief, draft, optimize, and push to your CMS through a sequence of automated calls.

The Opinion Stage case study is worth reading: 500+ hours saved, 41% boost in impressions. That happened because they plugged Frase into a system, not because they used it as a standalone writer.

Ideal for: Technical teams or agencies building end-to-end, API-driven content pipelines. Caveat: You need someone comfortable with API integrations or platforms like Zapier. This is a system component, not a point-and-click solution.

SurferSEO: The SERP-Data Engine

Surfer owns the Research & Briefing and On-Page Optimization pillars by being ruthlessly data-driven. The key differentiator is SERP analysis based on actual ranking pages, not guesses. It tells you exactly what terms, headings, and structure your top ten competitors are using.

For system integration, it offers a solid REST API with native connections to Zapier and Make.com. Cluster keywords in Surfer, auto-generate a brief, send that structured data to your drafting tool. Clean, SERP-informed data flowing into the rest of your stack.

Ideal for: Data-driven marketers and agencies running bulk content operations who need their optimization logic reproducible and automated. Caveat: Its recommendations can be prescriptive. Blindly chasing the "SEO score" can produce content that feels engineered rather than useful. Human oversight isn't optional here.

Jasper: The Brand-Voice Conductor

Jasper's domain is Content Generation & Drafting, specifically brand governance. Jasper IQ stores messaging frameworks, tone-of-voice guidelines, and brand facts, which matters when consistent brand voice across all content is a commercial requirement, not just a preference.

Its Optimization Agent handles bulk brief generation from keyword research, which slots into the Research pillar too. It connects directly with SurferSEO and platforms like Webflow.

Think of Jasper as the specialist that ensures whatever your automated pipeline produces still sounds like your brand.

Ideal for: Marketing teams with a mature, well-defined brand voice that needs to scale across high content volume. Caveat: It's less of an API-centric automation hub and more of a branded content factory. You'll use it within a stack, fed by data from tools like Surfer or Ahrefs, not as the thing running the whole show.

Copy.ai & Writesonic: The Workflow Orchestrators

These two are good at codifying the Content Generation process. Copy.ai's Workflow Builder lets you design a repeatable, multi-step SEO process: keyword input, brief generation, drafting, optimization, review. It's a visual way to build your own content assembly line.

Writesonic matches that with its SEO AI Agent mode, plus real speed. Both offer free tiers or trials, which puts them among the best free AI writing tools for prototyping a workflow before committing.

Ideal for: Teams that want to systemize a repeatable content process without deep technical integration work. Caveat: They orchestrate their own workflows well, but get less flexible when you need to insert a bespoke tool or a custom API call from outside their ecosystem. They're platforms, not loose components.

Scalenut & NeuronWriter: The Budget-Conscious Foundations

For solopreneurs or small teams just starting to systemize, these two cover the foundational Content Generation and On-Page Optimization features at accessible price points. Scalenut's Growth plan includes unlimited AI words, though SEO articles are capped per month. NeuronWriter starts around $23/month.

They're capable Sudowrite alternatives if you're moving from creative writing aids into commercial, SEO-focused content. Their role in a stack is usually the entry point: you start here to get consistency and basic optimization, then graduate to more modular, API-driven tools as your volume grows.

Worth knowing: Sudowrite pricing is built around creative fiction use cases, so if you're switching to SEO content, the tooling needs to switch too. Scalenut and NeuronWriter are the natural landing spots for that transition, and they also work as a starting point for anyone after a free AI for research paper writing before they need anything heavier.

Ideal for: Bootstrapped startups or solo creators who need core AI writing and SEO guidance without a large upfront investment. Caveat: Automation and integration capabilities are limited. You're buying a simplified all-in-one, which is fine until you need to connect specialized best-in-class components. Then you'll outgrow it.

The common thread across all of the top AI writing tools: the "best" one is the one that fulfills a specific role in your system and connects cleanly to the next stage. A brilliant writer that can't export structured data is useless. A powerful optimizer with no API is a bottleneck.

Choose based on the connectors, not just the features.

How to Choose: A Decision Matrix for Your Reality

Stop asking which tool is "best." Ask which tool solves your specific constraints. Your company size, budget, technical capability, and your domain rating create a reality that dictates the right choice.

Here's the decision logic I use when advising clients. Pick your primary constraint, then work backwards.

Primary Constraint: Budget (<$50/month)

- Prioritize: Scalenut Essential ($39/month), NeuronWriter Bronze ($23/month), Frase's basic API plan ($39/month).

- Accept: You'll trade deep automation for core functionality. API access will be limited or non-existent. You're buying a drafting assistant, not a system component.

- Gotcha: The "unlimited words" tier often hides restrictive article limits. Scalenut's Growth plan offers 30 SEO articles/month, so count your output before committing.

Primary Constraint: Need API/Deep Automation

- Prioritize: Frase (50+ endpoints for content, SERP analysis, and audits) or Surfer (REST API with Zapier/Make.com integrations).

- Why: If you're building a connected stack, the tool's integration surface matters more than its UI. Frase's API availability on plans starting at $39/month is unusually accessible for programmatic use [Source: frase.io/features/api-integrations].

- Gotcha: Check rate limits and webhook support. Some "API access" is just a basic content export, not a real automation primitive.

Primary Constraint: Small Team, Strong Brand Voice

- Prioritize: Jasper with Jasper IQ for stored messaging frameworks, or Copy.ai's Workflow Builder for customizable tone controls.

- Why: When every piece of content has to sound like your brand, the tool's ability to learn and enforce voice matters more than SERP data depth.

- Expert Insight: Brand voice consistency isn't a direct ranking factor, but it supports the experience and trust signals that Google's E-E-A-T guidelines describe. A tool that helps maintain it is helping protect the authority you've built.

Primary Constraint: High Volume, Agency Model

- Prioritize: Surfer for bulk brief creation (32 briefs in 4.5 hours is documented) or Frase for its agentic pipeline claiming 90% time reduction.

- Why: Your bottleneck is briefing and optimization at scale. Surfer's sharing links and bulk editor features are built for this.

- The Domain Rating Reality Check: This is where most guides fail. Your site's Domain Rating (DR) fundamentally changes what you actually need. A site with DR 70+ can often rank with lighter optimization because the domain's authority carries the content. A startup at DR 20 needs every SERP-data advantage a tool like Surfer provides. Don't buy enterprise-grade optimization if you lack the domain authority to use it.

Primary Constraint: Technical Founder Building a System

- Prioritize: The tool with the most extensible API (Frase) and the one that complements your custom code.

- My approach: I use Frase's API for SERP analysis and brief generation, then pipe that structured data into custom GPT workflows. I'm buying data access, not a writer.

- Real Cost Calculation: The sticker price is the smallest part. Factor in 10-15 hours for learning curves and integration effort, and more when things go sideways. A $79 tool that takes 20 hours to implement has a true first-month cost of ~$1,500+ in lost productivity (20 hours at roughly $75/hour, plus the subscription).
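The real-cost arithmetic in the last bullet is easy to run for your own numbers. In this rough sketch the $75/hour rate is my assumption, back-calculated from the $1,500 figure, and `first_month_cost` is just an illustrative helper.

```python
def first_month_cost(subscription: float, setup_hours: float, hourly_rate: float) -> float:
    """Sticker price plus the value of the time spent getting the tool working."""
    return subscription + setup_hours * hourly_rate


# $79 tool, 20 hours of setup, time valued at an assumed $75/hour.
cost = first_month_cost(subscription=79, setup_hours=20, hourly_rate=75)
print(f"${cost:,.0f}")
```

Swap in your own hourly rate and a realistic setup estimate before comparing two tools on price alone.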

The matrix isn't about finding the perfect tool. It's about figuring out which compromise you're willing to make. Choose the tool that solves your hardest constraint, then build the rest of your stack around that anchor point.

Building Your Stack: Integration Patterns and Automation Recipes

Picking tools is just step one. The real value comes from wiring them together into automated workflows that run without you babysitting them. That's when you stop buying software and start building a system.

Here are three production-tested automation patterns I've built for clients. Each one targets a different bottleneck in the SEO content pipeline.

1. The Hands-Off Briefing Pipeline

This flow automates the most tedious part of content production: turning keyword research into actionable briefs. Surfer's data shows users can create 32 briefs in 4.5 hours when a person drives each step. This system does it in minutes.

The Workflow:

- Trigger: Weekly keyword cluster export from Surfer's Research tool

- Action: Zapier sends new clusters to a Google Sheet (your editorial calendar)

- Approval: Your editor marks rows "approved" in a status column

- Automation: A Make.com scenario watches for approved rows

- Execution: Make calls Frase's API to generate a complete SEO brief

- Delivery: Brief lands in a designated Google Drive folder, with Slack notification

```mermaid
graph TD
    A[Surfer Keyword Cluster] --> B[Zapier to Google Sheets]
    B --> C[Editor Approval]
    C --> D[Make.com Watcher]
    D --> E[Frase API Brief Generation]
    E --> F[Google Drive + Slack]
```

The technical gotcha here is rate limiting. Frase's API has sensible limits, so you need to add delays between calls. I typically set a 2-second pause between brief generations to stay safe.
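If you script this step instead of using Make.com, the pause can be as simple as a sleep between calls. A minimal sketch follows. `create_brief` is a placeholder for whatever function actually calls the brief API (I'm not reproducing Frase's real endpoints here), and the injectable `sleep` exists only so the demo runs instantly.

```python
import time
from typing import Callable, Iterable, List


def generate_briefs(
    keywords: Iterable[str],
    create_brief: Callable[[str], str],
    pause_seconds: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> List[str]:
    """Call create_brief once per keyword, pausing between consecutive calls."""
    briefs = []
    for index, keyword in enumerate(keywords):
        if index > 0:
            sleep(pause_seconds)  # wait between calls, not before the first one
        briefs.append(create_brief(keyword))
    return briefs


# Demo with a fake client and a recording "sleep" so nothing actually waits.
pauses: List[float] = []
briefs = generate_briefs(
    ["ai seo tools", "content briefs", "serp analysis"],
    create_brief=lambda kw: f"brief:{kw}",
    sleep=pauses.append,
)
print(briefs, pauses)
```

A fixed pause is the bluntest option. If the API returns rate-limit headers or 429 responses, backing off based on those is more robust.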

2. The Optimized Publishing Flow

This pattern solves the "optimization gap": drafts get written but never properly optimized before publishing. It uses Jasper's native Surfer integration, which most teams barely touch.

How it works in practice:

- Writer drafts in Jasper with SEO Mode enabled

- Jasper's Optimization Agent reviews against SERP competitors

- The draft gets an initial Surfer score directly within Jasper

- When ready, a webhook triggers a Make.com scenario

- Make fetches the optimized content via Jasper's API

- It then publishes to WordPress via the REST API, setting all meta tags, featured images, and categories

The key insight is using Jasper as the unified interface. Writers never leave one tool, but the automation handles all the technical publishing details most marketing teams struggle with.

I've seen this cut publishing time from 45 minutes per article to under 5.
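For the final publishing step, here's a sketch of the request a script could send to the WordPress REST API (`POST /wp-json/wp/v2/posts`) using an application password. The site URL, credentials, and IDs are placeholders, and `build_post_request` is an illustrative helper. SEO meta tags usually live in plugin-specific fields, so they're left out.

```python
import base64
import json
import urllib.request
from typing import List


def build_post_request(
    site_url: str,
    username: str,
    app_password: str,
    title: str,
    html: str,
    category_ids: List[int],
    featured_media_id: int,
    status: str = "draft",
) -> urllib.request.Request:
    """Build (but do not send) a create-post request for the WordPress REST API."""
    payload = {
        "title": title,
        "content": html,
        "status": status,                    # "draft" keeps a human in the loop
        "categories": category_ids,          # category term IDs
        "featured_media": featured_media_id, # attachment ID of the image
    }
    token = base64.b64encode(f"{username}:{app_password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{site_url.rstrip('/')}/wp-json/wp/v2/posts",
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
    )


request = build_post_request(
    "https://example.com/", "editor", "app-password-here",
    "AI SEO Stack", "<p>Body</p>", category_ids=[12], featured_media_id=345,
)
print(request.get_method(), request.full_url)
# To actually publish: urllib.request.urlopen(request)
```

Defaulting to `draft` status is a deliberate choice: the automation does the tedious part, and a person still presses publish.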

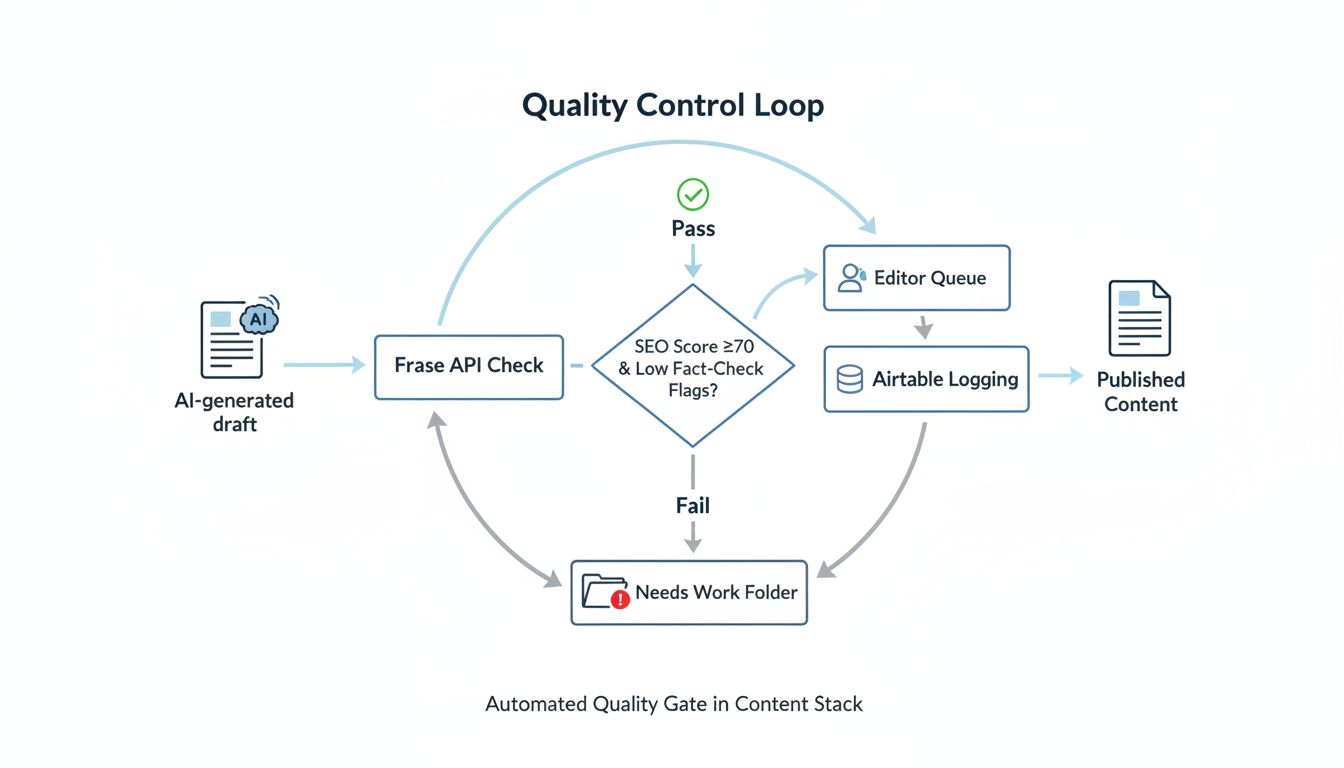

3. The Quality Control Loop

The most common automation failure point is quality degradation. This workflow adds automated gates before human editors waste time on substandard content.

The circuit breaker pattern:

- AI generates a draft (from any tool)

- A script sends it to Frase's API for fact-check and SEO scoring

- If the SEO score is below 70 OR the fact-check flags more than 3 issues, the draft gets routed to a "needs work" folder

- If it passes, it goes directly to the editor's queue

- All scores and flags are logged in Airtable for trend analysis
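The gate itself is a few lines of logic. This sketch uses the thresholds from the list above. The `Review` type and the sample scores are made up for illustration, and the scoring call is left out since it depends on your tool.

```python
from dataclasses import dataclass
from typing import Dict, List

SEO_SCORE_MIN = 70   # below this, the draft fails the gate
MAX_FACT_FLAGS = 3   # more than this many flags fails the gate


@dataclass
class Review:
    seo_score: int
    fact_flags: int


def route(review: Review) -> str:
    """Decide where a scored draft goes next."""
    if review.seo_score < SEO_SCORE_MIN or review.fact_flags > MAX_FACT_FLAGS:
        return "needs_work"
    return "editor_queue"


# Sample reviews (invented numbers) and a log you could push to Airtable.
reviews: Dict[str, Review] = {
    "draft-a": Review(seo_score=82, fact_flags=1),
    "draft-b": Review(seo_score=64, fact_flags=0),
    "draft-c": Review(seo_score=91, fact_flags=5),
}
log: List[dict] = [
    {"draft": name, "seo_score": r.seo_score, "fact_flags": r.fact_flags, "destination": route(r)}
    for name, r in reviews.items()
]
for row in log:
    print(row)
```

Keeping the thresholds as named constants makes it easy to tune them once the logged scores show where good drafts actually land.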

This is where Frase's 50+ API endpoints show their value. You're not just checking word count. You're validating against their full optimization engine, including AI visibility tracking across eight platforms.

The Reality of "Agentic" Workflows

Vendors love promising 90% time savings with their "agentic" pipelines. The truth is messier. Frase's six-stage agentic SEO pipeline can reduce article production from 9-14 hours to 30-60 minutes, but only after you've put in real configuration time upfront.

Here's what they don't tell you in the sales demo:

- Initial setup: 8-16 hours of mapping your brand voice, templates, and approval workflows

- Maintenance: Weekly reviews of automation outputs (things drift)

- Edge cases: 15% of articles will still need manual intervention for complex topics

- Integration debt: When one tool changes its API, your entire workflow can break

The most successful teams I've worked with treat automation like a product feature with its own roadmap. They allocate 20% of their content budget to maintaining and improving these systems, not just running them.

Your First Automation: Start Simple

Don't try to build all three patterns at once. Pick one bottleneck that's causing real pain this month. For most teams, that's the briefing pipeline.

Here's a minimal version you can build in an afternoon:

- Export your top 10 keyword clusters from Surfer to CSV

- Upload to Google Sheets

- Use Frase's bulk brief generator (manual upload)

- Save outputs to a shared Drive folder

Even this manual-but-systematic version will save you hours. Once that's working, layer in the automation pieces one at a time.

The goal isn't perfection. It's consistent, measurable improvement to your throughput and quality.

Common Pitfalls and How to Avoid Them

Even a solid AI SEO stack will fall apart if you don't know where automation breaks down. I've watched teams burn months and budget on mistakes that were completely avoidable. Here's what actually goes wrong.

The Beige Content Problem

AI is good at synthesizing what already exists. It is not good at original insight. Feed it a keyword and you'll get competent, generic text that reads like every other page ranking for that term. Search engines are getting better at spotting the absence of real E-E-A-T signals.

Solution: Let AI do the heavy lifting (research, outlining, drafting). Then spend 20-30% of your production time injecting something that can't be synthesized: case studies from your actual work, proprietary data, contrarian takes that the textbooks miss. The AI builds the scaffold. You provide the architecture.

The Hallucination Liability

Factual errors aren't just embarrassing. In regulated industries, they're a legal and reputational risk. AI will confidently cite the wrong spec, misattribute a stat, or invent a "study" that doesn't exist. I've personally had to clean up client content where AI hallucinated compliance standards that weren't real.

Solution: Human verification on all claims, statistics, and technical specs. No exceptions. Where you can, use tools with built-in fact-checking, such as Frase's SERP analysis or Surfer's Fact-Check Schema validator for healthcare content. Think of AI as a brilliant research assistant you genuinely cannot trust to be accurate without supervision.

The Automation Blind Spot

AI follows patterns in existing SERPs, which means it optimizes for what worked yesterday. I've seen tools recommend "best practices" content when the actual SERP is full of troubleshooting guides. Follow that advice and you miss the intent shift entirely.

Solution: Always start with manual SERP analysis. Go beyond the top 10. Read the forum threads, the review comments, the "People also ask" sections. Use AI to execute your strategy, not define it. The tool should answer "how to write this," not "what to write about."

The Short-Term Illusion

The SE Ranking experiment found that AI-generated pages initially gained traction, then fell from 28% to 3% of the top 100 results after three months. The early ranking bump usually comes from technical optimization, not lasting relevance.

Solution: Plan for iterative, human-led optimization from day one. Schedule audits at 30, 60, and 90 days post-publish. Update stats, refresh examples, expand wherever a competitor has moved ahead. Every published piece is version 1.0.
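Scheduling those audits is trivial to automate at publish time. A small sketch follows. `audit_dates` is an illustrative helper, and how you turn the dates into calendar events or tickets is up to your stack.

```python
from datetime import date, timedelta
from typing import List, Sequence


def audit_dates(published: date, offsets: Sequence[int] = (30, 60, 90)) -> List[date]:
    """Return the review dates that fall N days after publication."""
    return [published + timedelta(days=days) for days in offsets]


for due in audit_dates(date(2026, 4, 25)):
    print(due.isoformat())
```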

The Integration Overload

Teams build Frankenstein workflows: five or six tools connected by fragile Zapier automations that break the moment one API changes. The maintenance overhead eats all the time savings. I've inherited systems where nobody could tell you which automation was failing or why.

Solution: Start with the minimal viable integration. Connect your AI writer to your CMS, then add one automation at a time. Document every connection point. Have a manual fallback. Complexity should come from necessity, not ambition.

The pattern is clear: the tools handle volume and consistency, your team provides judgment and quality control. Skip either half and the whole thing falls apart.

Measuring Success: Beyond Word Count to Real ROI

If you're feeling pressure to scale content output, you're probably measuring the wrong thing.

Word count and article volume are vanity metrics. They lead straight to low-quality, low-impact content. The real test of any AI SEO stack, whether you're using top AI writing tools or the best free AI writing tools, isn't how much it produces. It's how much it achieves with less wasted effort.

Start by establishing baselines before you implement any automation. What's your current time per article? Your average SERP position for target keywords? Your organic conversion rate? Without a starting point, you're just guessing at improvement.

Track three categories of metrics.

Efficiency Metrics measure time saved. According to Thomson Reuters research, projected time savings from AI tools average 5 hours per week per user, equivalent to about $19,000 in annual value per user. Measure time saved per article, editing hours reduced, and content calendar throughput.

Effectiveness Metrics measure business impact. Track SERP position movement, impression growth (Opinion Stage saw a 41% increase using Frase), organic traffic, and conversions, not just clicks.

Quality Metrics make sure you're not trading quality for speed. Monitor plagiarism scores, factual accuracy checks, and human editing time as a percentage of total production time. If editing time goes up as you scale, your system is generating more work, not less.
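The editing-time check is worth turning into a number you track every month. Here's a minimal sketch with invented figures. `editing_share` and the sample months are illustrative only.

```python
from typing import List, Tuple


def editing_share(editing_hours: float, total_hours: float) -> float:
    """Human editing time as a fraction of total production time."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    return editing_hours / total_hours


# (month, editing hours per article, total hours per article): sample data only.
months: List[Tuple[str, float, float]] = [
    ("Jan", 1.0, 4.0),
    ("Feb", 1.5, 4.0),
    ("Mar", 2.0, 4.0),
]
shares = [editing_share(editing, total) for _, editing, total in months]

# A share that rises every month suggests the system is creating work, not saving it.
rising = all(later > earlier for earlier, later in zip(shares, shares[1:]))
print(shares, rising)
```

Pair this with your baseline time per article so you can see both halves: hours saved overall, and how much of what remains is cleanup.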

The counterintuitive part? Sometimes the best outcome is producing less content. If your stack helps you kill low-potential topics before they eat up resources, that's a win worth tracking, whether you're on Sudowrite, a Sudowrite alternative, or a free AI for research paper writing.

Your goal isn't more words. It's more effective content with less wasted effort. When you can show that your AI stack connects directly to business outcomes (reduced costs, more qualified traffic, higher conversions), you're not just running an SEO operation.

You're running a business.

Conclusion

So what's actually worth your time here: hunting for the top AI writing tools, or building something that works?

The answer is the second one. Always has been.

No single platform is going to fix your content operation. The real gains come from connecting research, creation, optimization, and publishing into one system that runs without you babysitting it. That's where the ROI lives in 2026.

Pick tools based on your actual constraints: budget, team size, what you're already using. Not based on feature lists or hype. Whether you're weighing Sudowrite pricing, looking at a Sudowrite alternative, or trying to find the best free AI writing tools for your budget, the question is always the same: does this solve a real bottleneck in my specific workflow?

Automate the repetitive stuff. But keep a human in the loop for strategy, brand voice, and anything that requires actual judgment.

And build in guardrails. Automation without measurement is just chaos at scale. Track real outcomes: production costs, qualified conversions, time saved. Not vanity numbers.

The "beige content" problem is real. Over-automate without oversight and everything starts sounding the same. That's how you end up with more content and less impact.

Start with one bottleneck. Slow briefing, tedious optimization, whatever's actually slowing you down right now. Pick one tool (even a free AI for research paper writing workflow counts) and see if it moves the needle before adding anything else.

One solved problem at a time.

Frequently Asked Questions

Which AI writing tool is the best?

There's no single answer here. It depends entirely on what you're trying to do.

For deep automation and API access, Frase or SurferSEO are the obvious picks. If you need brand voice consistency across a whole marketing team, Jasper's IQ system handles that well. On a tighter budget, Scalenut or NeuronWriter are solid starting points.

The real question is which of the top AI writing tools actually fits your existing stack. Not which one has the longest feature list.

What is the 30% rule in AI?

It's a guideline: at least 30% of AI-generated content should be meaningfully rewritten by a human. Not light copyediting but actual reworking: adding original insight, fixing unnatural phrasing, catching factual errors.

I've seen teams skip this and end up with what I call "beige content." It ranks for a while, then loses ground because there's no real expertise behind it.
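If you want a rough way to check the 30% figure, you can compare the AI draft with the final text word by word. The sketch below uses Python's standard `difflib`. Treat the number as a crude proxy I'm assuming for illustration, since it measures changed words, not added insight.

```python
import difflib


def rewritten_share(ai_draft: str, final_text: str) -> float:
    """Approximate fraction of the text that changed, compared at word level."""
    matcher = difflib.SequenceMatcher(
        None, ai_draft.split(), final_text.split(), autojunk=False
    )
    return 1.0 - matcher.ratio()


ai_draft = "AI tools can speed up research outlining and first drafts"
final_text = "AI tools can speed up research outlining but editors decide"

share = rewritten_share(ai_draft, final_text)
print(f"{share:.0%} rewritten")
```

A piece can clear 30% on this measure and still be beige, so use it to catch drafts that were barely touched, not to certify the ones that pass.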

Can I legally publish a book written by AI?

You can publish it. Copyright protection is where it gets complicated. The US Copyright Office has stated that works without significant human authorship aren't protected [Source: copyright.gov].

The practical move is to treat AI as a tool for a human author, not the author itself. A lot of publishers now require disclosure of AI assistance anyway. And for SEO content, being upfront about it tends to build more reader trust than hiding it.

What AI does Elon Musk use?

Grok, from his company xAI. It's built for real-time information with a deliberately provocative personality.

For SEO and marketing work though, it's not the right tool. Jasper (built on GPT-4) or Frase (using Claude and other models) are more purpose-built for that. General-purpose chatbots like Grok or ChatGPT just don't have the SERP analysis or workflow automation that dedicated SEO writing tools do.

What are the big 5 in AI?

The major LLM providers: OpenAI (ChatGPT), Google (Gemini), Anthropic (Claude), Meta (Llama), and xAI (Grok).

For content teams, the thing worth knowing is that most SEO writing tools are just applications sitting on top of these models. Frase might run Claude for certain features while Surfer uses GPT-4. The "big 5" provide the raw capability. The writing tools add the SEO-specific logic and workflow layer on top.