February 14th, 2026

AI Agents for SEO: Automating Data Tasks to Scale Content Production

Warren Day

You're a SaaS founder. Your content calendar is sparse, your competitor's blog is relentless, and "scale content" is the board's new mandate. You've searched for the best AI SEO tools, tried a few, and gotten... generic fluff that doesn't move the needle.

What if the solution isn't a better tool, but a better system?

Here's what nobody tells you: AI-written pages already appear in 17.31% of top Google search results. The cat's out of the bag. Your competitors aren't winning because they found some magic app. They're winning because they built a process that turns AI from a content generator into a strategic asset.

I've spent the last year helping B2B SaaS companies scale from 4 articles a month to 20+ without hiring a content team. The secret? Stop looking for the best AI SEO tool as if it's a single product you can buy off a shelf.

For SaaS founders like you, the best AI SEO tool isn't a single app. It's a governed system of specialized agents that automates the entire content lifecycle from discovery to publication, while embedding mandatory human oversight to ensure quality, trust, and ROI.

This isn't about prompt engineering your way to mediocrity or letting ChatGPT write your entire blog. It's about architecting a three-layer framework where Intelligence, Production, and Governance each handle specific jobs with clear constraints and human checkpoints that prevent the generic garbage plaguing most AI content.

In this guide, I'll show you exactly how to build this system. You'll get a blueprint for a scalable AI agent workflow that actually works for pillar content, a build-vs-buy analysis of the platforms worth your money, and the metrics that separate vanity from real ROI. No hype. No fluff. Just the framework I wish someone had handed me when the board first said "we need more content."

Let's build something that scales.

The Myth of the Silver Bullet: Why Single Tools Fail and Systems Win

I've watched dozens of founders make the same mistake. They Google "best ai seo tools," skim a listicle, grab whatever's trending (Surfer, Jasper, Frase), and assume their content problem is solved.

Three months later? Still stuck.

A single AI writing tool is just a faster typist. Sure, it'll spit out 2,000 words on "best project management software" in twenty minutes. But it won't tell you which keywords actually matter, whether that topic fits your funnel, or if the output even answers what people are searching for. You're still doing strategy, research, optimization, and QA manually. You've automated one step in a ten-step process.

That's not scaling. That's expensive procrastination.

The alternative? Stop thinking about software. Start thinking about structure. Real content operations have researchers finding opportunities, writers executing, editors refining, and SEO specialists optimizing. AI agent systems replicate this with specialized agents handling distinct roles. One agent scrapes SERP data and spots content gaps. Another clusters topics semantically. A third generates briefs. A fourth handles on-page optimization.

Each agent owns a narrow job. They hand off work sequentially, like your ideal content team would. The research agent doesn't write. The writing agent doesn't do keyword analysis. Specialization creates consistency at scale.

But here's what trips people up: automation without governance produces mediocrity. You need mandatory human checkpoints, what I call a governed system. Only about 9% of users say they always trust the AI answers they see, and Google's March 2024 update specifically targeted scaled content published without meaningful human oversight.

A governed system means agents do the grunt work (data analysis, first drafts, technical optimization) while humans approve strategy, verify accuracy, and inject brand voice at defined gates. You're not reviewing every sentence. You're reviewing briefs before production kicks off and final outputs before they go live.

This framework turns AI from a novelty into ROI. Instead of spending four hours writing an article from scratch, you spend twenty minutes reviewing an agent-generated draft that's already optimized, fact-checked against your knowledge base, and structured for search intent.

That's the gap between a tool and a system. One makes you slightly faster. The other makes you scalable.

The 3-Layer AI SEO Automation Framework: Intelligence, Production, Governance

When I audit a SaaS company's content operation, I usually find the same pattern: they've bought three or four AI tools, each one promising to "revolutionize" their SEO. But the tools don't talk to each other. There's no workflow. No quality gate.

Just a pile of subscriptions and a Slack channel full of half-finished drafts.

The solution isn't another tool. It's a framework that treats AI SEO optimization as a system with three distinct layers, each with a specific job. Think of it like a factory assembly line: raw materials come in one end, finished products leave the other, and quality control happens at every checkpoint.

Here's how the three layers work together:

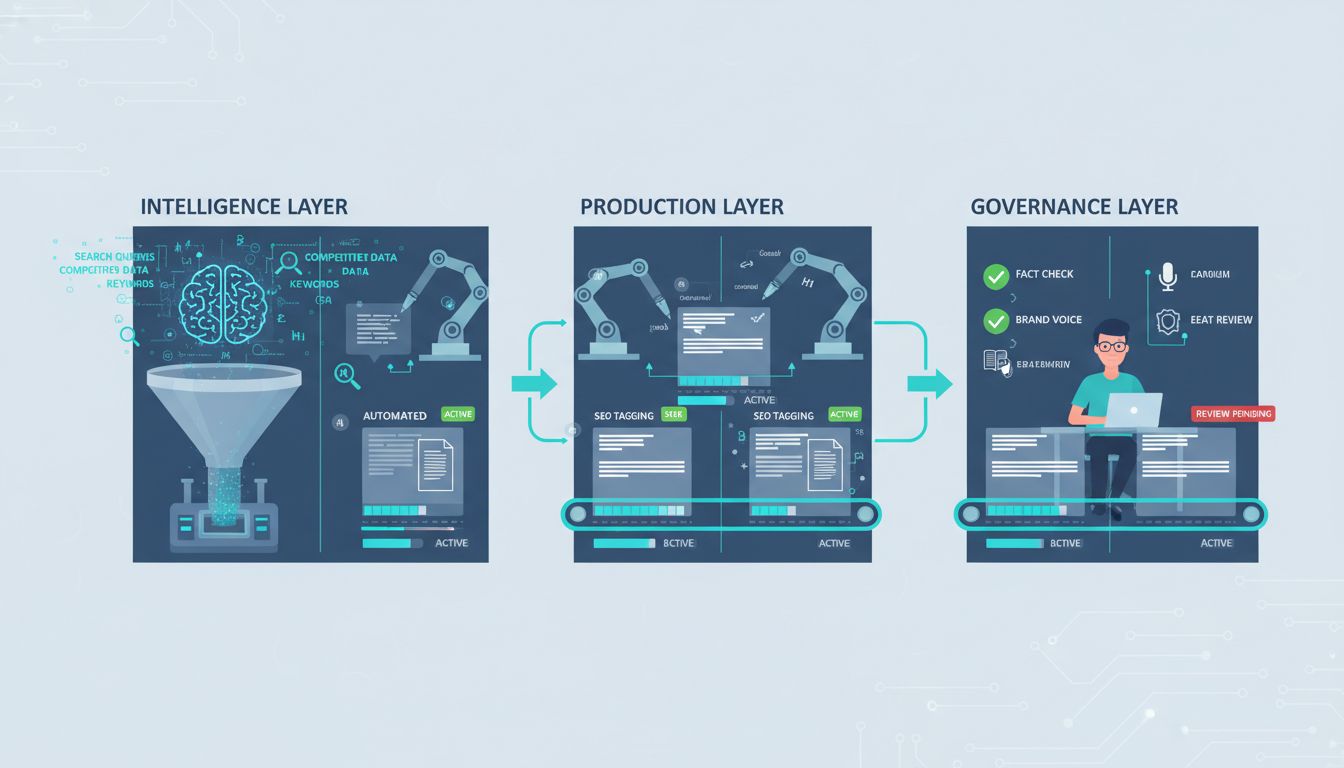

Intelligence Layer → Identifies what to create and why

Production Layer → Generates the optimized content

Governance Layer → Ensures it's trustworthy and brand-aligned

Each layer depends on the one before it. Skip Intelligence, and you're writing content nobody searches for. Skip Governance, and you're publishing garbage that tanks your domain authority.

Let me break down what actually happens in each layer.

The Intelligence Layer: Automating Discovery & Strategy

This is where most manual SEO workflows die. You're supposed to do keyword research, analyze competitors, map search intent, and identify content gaps, all before writing a single word.

In practice, founders skip this and just guess.

The Intelligence Layer automates the heavy lifting. A well-designed agent does three things that would otherwise eat your entire Monday:

Striking-distance keyword discovery: It connects to your Google Search Console, pulls queries where you rank positions 11-20, and prioritizes the ones with the highest click potential. These are your quick wins. Pages that need optimization, not net-new content.

Live SERP scraping for competitor gaps: Instead of relying on stale keyword databases, the agent scrapes current SERPs for your target terms. It identifies what's ranking, what content formats Google prefers (listicles, how-tos, comparison pages), and where competitors are weak. One of my clients found that competitors dominated "vs." comparison pages but had zero coverage on implementation guides. So we built a content cluster around that gap and captured traffic they didn't even know existed.

Semantic clustering for topical authority: The agent groups related keywords into topic clusters, showing you where to build pillar content and supporting articles. This is how you signal topical authority to Google instead of publishing random one-off posts.

The tools here are a mix of commercial platforms like Semrush and Ahrefs for data, plus custom agents that use SERP APIs to pull real-time competitor intel. The key is connecting these data sources so your agent makes decisions based on what's actually working today, not what worked six months ago.
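The striking-distance step above can be sketched in a few lines. This is a minimal illustration, not a production agent: it assumes you have already exported query rows from Search Console, and the `QueryRow` fields, the sample rows, and the "missed impressions" ranking are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One row of Search Console query data (field names are illustrative)."""
    query: str
    impressions: int
    clicks: int
    position: float  # average ranking position


def striking_distance(rows, low=11.0, high=20.0, top_n=10):
    """Return queries ranking in positions 11-20, ordered by untapped impressions."""
    candidates = [r for r in rows if low <= r.position <= high]
    # Impressions that did not turn into clicks are a rough proxy for upside.
    candidates.sort(key=lambda r: r.impressions - r.clicks, reverse=True)
    return candidates[:top_n]


# Made-up sample rows, only to show the shape of the data.
rows = [
    QueryRow("customer success software", 4200, 38, 12.3),
    QueryRow("churn prediction tools", 900, 12, 17.8),
    QueryRow("customer health scores", 1500, 210, 4.1),
    QueryRow("csat surveys", 2600, 9, 31.0),
]

for row in striking_distance(rows):
    missed = row.impressions - row.clicks
    print(f"{row.query}: position {row.position}, {missed} missed impressions")
```

Ranking by missed impressions is one simple heuristic. You could just as well weight by conversion value or by how close the page is to position 10.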

The Production Layer: From Brief to Optimized Draft

Once Intelligence tells you what to create, Production handles the how.

This is where Retrieval-Augmented Generation (RAG) becomes critical. Standard AI content generators hallucinate. They make up statistics, invent case studies, and confidently state things that are completely wrong. RAG reduces this by grounding the AI's output in real source material: your internal documentation, competitor analysis, industry reports, and verified data.

Here's the typical workflow:

Agent-driven brief generation: The agent pulls insights from the Intelligence Layer (target keyword, search intent, competitor gaps) and combines them with your internal knowledge base. It generates a structured brief: headline options, required H2s, semantic keywords to include, word count target, and factual anchors (stats, quotes, examples) to cite.

Draft generation with on-page SEO constraints: The agent writes the first draft, embedding on-page optimization as it goes. Meta descriptions, header hierarchy, internal linking opportunities, keyword density. Tools like Surfer AI can generate a complete SEO-optimized article in approximately 20 minutes, but only if the brief is solid.

Content refinement: The agent checks readability (Flesch score, sentence length), ensures semantic keyword coverage, and flags sections that need more depth or supporting data.

The AI SEO optimization tools I see working best here are Surfer AI for speed, Clearscope for semantic analysis, and MarketMuse for content gap identification. But honestly, you can also build a custom agent using ChatGPT with specific instructions and a RAG pipeline. It just takes more setup.

Look, the Production Layer isn't about replacing writers. It's about eliminating the blank-page problem and the tedious optimization work so your team can focus on injecting brand voice and strategic insights.

The Governance Layer: The Non-Negotiable Human Touch

Here's the uncomfortable truth: only about 9% of users always trust AI answers they see.

That means roughly 91% are skeptical at least some of the time. And they should be.

If you publish AI-generated content without rigorous human oversight, you're not "scaling content." You're scaling risk. Google's March 2024 core update specifically targeted sites publishing low-value automated content without meaningful human involvement. The Governance Layer is how you avoid that fate.

This isn't a philosophical debate about "keeping humans in the loop." It's an operational checklist you enforce on every piece of content before it goes live. Here are the four non-negotiable checks:

1. Fact-checking against RAG sources: Every claim, statistic, or case study in the draft must trace back to a verified source in your RAG database. If the AI cited a stat, confirm it's accurate and properly attributed. If it invented something, cut it.

2. EEAT verification: Google wants Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). That means adding expert quotes, original data, or firsthand experience that an AI can't fabricate. One SaaS client adds a "Founder's Take" callout box to every article. It's 100 words of original insight that signals human involvement.

3. Brand voice alignment: AI writes in generic corporate-speak unless you train it otherwise. Your editor's job is to inject personality, cut the fluff ("in today's digital landscape"), and make sure it sounds like your company, not a content mill.

4. Final compliance and readability pass: Check for accessibility (alt text, header structure), legal compliance (especially for regulated industries), and readability. If a sentence is confusing, rewrite it. If a section drags, cut it.

You enforce this with workflow tools like Asana or ClickUp. Each article moves through status gates: Draft → Fact-Check → EEAT Review → Voice Edit → Final Approval.

No one can publish until all boxes are checked.
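If you would rather enforce the gates in code than in a project board, the idea can be sketched as a tiny state machine. The stage names come from the sequence above. The `Article` class, its method names, and the reviewer labels are hypothetical, and a real setup would store sign-offs in your workflow tool.

```python
STAGES = ["Draft", "Fact-Check", "EEAT Review", "Voice Edit", "Final Approval"]


class Article:
    def __init__(self, title):
        self.title = title
        self.stage_index = 0
        self.sign_offs = {}  # stage name -> reviewer who passed it

    @property
    def stage(self):
        return STAGES[self.stage_index]

    def sign_off(self, reviewer):
        """Record a named reviewer for the current stage, then move to the next."""
        if not reviewer:
            raise ValueError("every gate needs a named reviewer")
        self.sign_offs[self.stage] = reviewer
        if self.stage_index < len(STAGES) - 1:
            self.stage_index += 1

    def can_publish(self):
        # Publishable only when every stage, including the last, is signed off.
        return all(stage in self.sign_offs for stage in STAGES)


article = Article("Customer Success Software for SaaS")
for reviewer in ["writer", "fact checker", "subject expert", "editor"]:
    article.sign_off(reviewer)
print(article.stage, article.can_publish())  # still waiting on the last gate

article.sign_off("head of content")
print(article.can_publish())
```

The point of the design is that publishing is a function of recorded sign-offs, not of anyone's memory of what was checked.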

The Governance Layer is what separates a sustainable AI content system from a short-term traffic spike followed by a manual penalty. You're not slowing down production. You're ensuring everything you publish actually builds authority instead of eroding it.

Blueprint: A Scalable AI Agent Workflow for Pillar Content

Let me show you exactly how this works in practice.

We're building a pillar page on "Customer Success Software for SaaS." Competitive keyword cluster. Worth targeting. This isn't theory. It's the exact sequence I've used to produce pillar content that ranks within 60 days without hiring a content team.

The 8-Step Agent Workflow

Step 1: Intelligence Agent discovers the opportunity

Your Intelligence Agent monitors Google Search Console and spots "customer success software" sitting at position 12. Striking distance. It pulls related queries ("csat surveys," "customer health scores," "churn prediction tools") and groups them using semantic clustering. The output: a target keyword cluster with search volume, difficulty, and current ranking data.

Step 2: Research Agent builds the knowledge base

The Research Agent scrapes the top 10 SERP results for your target keyword. It extracts headings, key claims, cited statistics, and content structure from each competitor.

Then it identifies gaps. Topics they missed. Outdated stats. Weak explanations. All of this gets indexed into a RAG (Retrieval-Augmented Generation) knowledge base, so your Writing Agent can reference real competitor data instead of hallucinating facts.
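To make the retrieval half of RAG concrete, here is a toy sketch using only the standard library. It swaps the vector database for plain word-count cosine similarity, so treat it as a stand-in for embedding search, not the real thing. The `KnowledgeBase` class, the source labels, and the sample passages are all invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class KnowledgeBase:
    def __init__(self):
        self.passages = []  # (source label, text, token counts)

    def add(self, source, text):
        self.passages.append((source, text, Counter(tokenize(text))))

    def retrieve(self, question, k=2):
        """Return up to k passages that overlap with the question, best first."""
        q = Counter(tokenize(question))
        scored = [(cosine(q, vec), source, text) for source, text, vec in self.passages]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(source, text) for score, source, text in scored[:k] if score > 0]


kb = KnowledgeBase()
kb.add("competitor-a/pricing", "Plans start with a free tier and scale by seats.")
kb.add("internal/docs/health-scores",
       "Customer health scores combine usage, support tickets, and renewal dates.")
kb.add("competitor-b/guide", "Churn prediction relies on usage trends and support tickets.")

context = kb.retrieve("How are customer health scores calculated?")
# The retrieved passages, with their sources, are what the Writing Agent gets to cite.
prompt = "Answer using only these sources:\n" + "\n".join(f"[{s}] {t}" for s, t in context)
print(prompt)
```

Passages with no overlap are dropped instead of padded in, so the Writing Agent is never handed irrelevant "evidence" just to fill a quota.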

Step 3: Brief Agent synthesizes the strategy

The Brief Agent takes the research and creates a structured content brief: target keywords, recommended headings, competitor weak points to exploit, required word count, and internal linking opportunities. This brief becomes the blueprint your Writing Agent follows. No ambiguity, no drift.

Step 4: Writing Agent generates the first draft

Now the Writing Agent drafts the article. It sticks to the brief, follows on-page SEO rules (keyword placement, header hierarchy, meta descriptions), and pulls facts from the RAG knowledge base. The draft includes proper citations with URLs, maintains consistent tone, and structures content for readability.

This step happens in about 20 minutes, faster than any human writer could produce a comparable first draft.

Step 5: Editor/QC Agent runs automated checks

The Editor Agent scans the draft for readability (Flesch score), keyword density, internal link opportunities, and structural issues like missing H2s or orphaned sections. It flags sentences that are too complex, suggests where to add examples, and validates that all citations have working URLs.

This isn't a replacement for human editing. It's a filter that catches obvious problems before a human ever sees it.
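Two of those automated checks are easy to sketch: Flesch Reading Ease and keyword density. The Flesch formula below is the standard one, but the syllable counter is a rough vowel-group heuristic, and the `min_ease` and `max_density` thresholds are placeholder assumptions you would tune for your own content.

```python
import re


def count_syllables(word):
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text):
    """206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 206.835 - 1.015 * (len(words) / len(sentences)) - 84.6 * (syllables / len(words))


def keyword_density(text, keyword):
    """Share of words accounted for by occurrences of the keyword phrase."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = text.lower().count(keyword.lower())
    return hits * len(keyword.split()) / len(words)


def review(text, keyword, min_ease=50.0, max_density=0.03):
    """Return human-readable flags; an empty list means nothing was caught."""
    flags = []
    if flesch_reading_ease(text) < min_ease:
        flags.append("hard to read")
    if keyword_density(text, keyword) > max_density:
        flags.append("keyword overused")
    return flags


draft = "Customer success software helps teams keep customers. It tracks health scores."
print(review(draft, "customer success software"))
```

Flags like these are meant to route a draft back to the Writing Agent or forward to a human, not to rewrite anything on their own.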

Step 6: Human Gatekeeper applies governance

Here's where the system earns its ROI.

A human reviews the draft against your Governance Layer checklist: Do the facts check out? Does this demonstrate actual expertise? Is the brand voice consistent? Are we making claims we can defend? This is the mandatory human-in-the-loop step that prevents the "scaled content abuse" Google penalizes. You're not rewriting from scratch. You're polishing and validating.

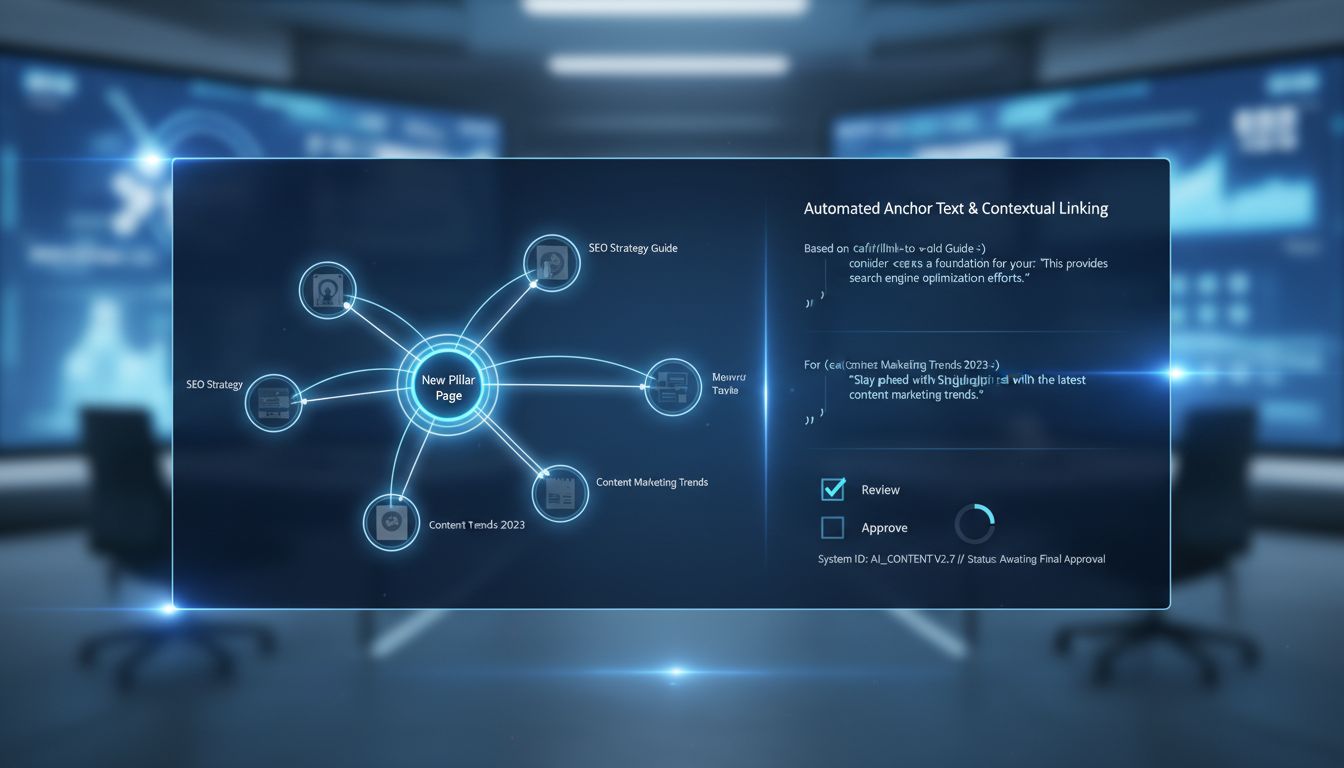

Step 7: Publishing Agent pushes to CMS

Once approved, the Publishing Agent formats the content (adds proper HTML tags, meta fields, featured images) and publishes directly to your CMS via API: the WordPress REST API, Webflow, or even a Zapier webhook, whatever your stack uses. No copy-paste, no formatting errors.
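For WordPress, the publishing call can look roughly like this. The sketch builds a request against the core `/wp-json/wp/v2/posts` endpoint with Basic auth, which is how WordPress Application Passwords are sent, but it deliberately stops short of sending it. The site URL, credentials, and the `approved` flag are placeholders I've assumed for the example.

```python
import base64
import json
import urllib.request


def build_post_request(site_url, username, app_password, title, html, approved):
    """Build (but do not send) a request that creates a WordPress post."""
    payload = {
        "title": title,
        "content": html,
        # Unapproved content is only ever saved as a draft.
        "status": "publish" if approved else "draft",
    }
    token = base64.b64encode(f"{username}:{app_password}".encode()).decode()
    return urllib.request.Request(
        url=f"{site_url.rstrip('/')}/wp-json/wp/v2/posts",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_post_request(
    "https://example.com", "editor", "app-password-here",
    "Customer Success Software for SaaS", "<h1>Draft</h1>", approved=False,
)
print(request.get_method(), request.full_url, json.loads(request.data)["status"])
# Sending is left out on purpose: urllib.request.urlopen(request) would create the post.
```

Tying the post status to the approval flag means a bug upstream fails safe: the worst case is an extra draft in the CMS, not an unreviewed article in public.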

Step 8: Linking Agent updates existing content

The final agent scans your existing blog posts and suggests contextual internal links pointing to your new pillar page. It can even draft the anchor text and surrounding sentence.

You review and approve, then it auto-updates the posts.
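The suggestion half of this step can be sketched with simple phrase matching. This is an assumption-heavy simplification: a real Linking Agent would likely use semantic matching and draft the surrounding sentence, while the function below only finds posts that mention a relevant phrase and don't link to the pillar page yet. The post slugs, URL, and phrases are invented.

```python
def suggest_internal_links(posts, pillar_url, anchor_phrases):
    """Return (post slug, phrase) pairs where a link to the pillar page could go."""
    suggestions = []
    for slug, body in posts.items():
        if pillar_url in body:
            continue  # already links to the pillar page
        lowered = body.lower()
        for phrase in anchor_phrases:
            if phrase.lower() in lowered:
                suggestions.append((slug, phrase))
                break  # one suggestion per post is enough for review
    return suggestions


posts = {
    "reduce-churn": "Tracking customer health scores early helps reduce churn.",
    "onboarding-guide": "See our guide: https://example.com/customer-success-software",
    "pricing-tips": "Annual plans improve cash flow.",
}

suggestions = suggest_internal_links(
    posts,
    pillar_url="https://example.com/customer-success-software",
    anchor_phrases=["customer health scores", "customer success software"],
)
print(suggestions)
```

Note that the function only proposes. Applying the edit stays behind the human approval described above.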

Integration and Error Handling

This workflow doesn't have to be fragile. Build it on existing orchestration tools (LangChain, Airflow, or similar frameworks) rather than custom code. Include retry logic, idempotency checks, and monitoring alerts.

If the SERP scraper fails, the system should pause and notify you, not publish garbage.
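Here is a minimal sketch of that "retry, then pause and notify" behavior. Orchestrators like Airflow ship their own retry settings, so this is only to show the logic. `run_with_retry`, `PipelinePaused`, and the `notify` hook are names I've made up, and `notify` would be your Slack or email alert in practice.

```python
import time


class PipelinePaused(Exception):
    """Raised when a step keeps failing and a human needs to look."""


def run_with_retry(step, attempts=3, base_delay=1.0, notify=print, sleep=time.sleep):
    """Call step(); retry with exponential backoff, then pause and notify."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as error:  # a real pipeline would catch narrower errors
            if attempt == attempts:
                notify(f"Pipeline paused after {attempts} attempts: {error}")
                raise PipelinePaused(str(error)) from error
            # Wait 1x, 2x, 4x... the base delay before trying again.
            sleep(base_delay * 2 ** (attempt - 1))


calls = {"count": 0}


def flaky_serp_scrape():
    """Stand-in for a scraper that times out twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("SERP scraper timeout")
    return "serp data"


print(run_with_retry(flaky_serp_scrape, base_delay=0.01))
```

Because the final failure raises instead of returning an empty result, downstream agents never run on missing data.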

The entire sequence runs in 2-4 hours, end to end. Your involvement: 30 minutes of human review at Step 6. That's how you scale pillar content from one per quarter to one per week.

Top AI SEO Tools & Platforms: Build vs. Buy Analysis

You've mapped the framework. You've designed the workflow. Now comes the question every founder asks: do I buy pre-built tools or build something custom?

The answer isn't binary. Most successful setups blend both: commercial platforms for the heavy lifting, custom glue code for the connective tissue. Your decision hinges on three variables: technical resources (do you have a dev who can spare 20 hours/month?), budget (can you afford $300-500/month in tooling?), and control requirements (do you need proprietary data handling or custom logic?).

Here's how the landscape breaks down.

The Tool Stack: What Fits Where

Not all tools are created equal. Some excel at intelligence gathering, others at production speed, and a few attempt (poorly) to do everything.

The key is matching capability to your framework layer.

| Tool/Platform | Core Capability | Best For | Integration Ease | Approx. Cost/Month |
|---|---|---|---|---|
| Semrush | Keyword research, SERP analysis, competitor intelligence | SaaS teams building the Intelligence Layer; need comprehensive data across multiple domains | API available; integrates with most automation platforms | $129+ |
| Surfer SEO | Content optimization, AI drafting with SERP context | Teams prioritizing production speed; generates SEO-optimized articles in ~20 minutes | WordPress plugin; API for custom workflows | $89+ |
| Clearscope | Search intent analysis, semantic term extraction | SaaS content teams with existing writers who need optimization guidance, not full drafts | Google Docs add-on; manual workflow | $170+ |
| MarketMuse | Content inventory analysis, topical authority scoring | Enterprises managing 100+ pages; need strategic gap analysis, not just keyword lists | API available; steeper learning curve | $149+ (custom pricing for AI features) |
| Jasper | General AI writing with brand voice training | Marketing teams creating diverse content types (emails, ads, blogs) beyond just SEO | Chrome extension; integrates with most platforms | $49+ |
| ChatGPT (GPT-4) | Flexible drafting, ideation, prompt-based tasks | Solo founders testing AI content workflows before committing to specialized tools | API for automation; otherwise manual | $20/month for Plus |

The pattern you'll notice: specialized tools cost more but integrate better into automated workflows. General-purpose LLMs are cheap and flexible but require more human orchestration.

The Build Path: When Custom Makes Sense

If you have engineering resources, building custom agents offers maximum control and often better economics at scale.

Frameworks like LangChain and Vellum let you orchestrate multi-step workflows without reinventing the wheel. A typical build stack: OpenAI or Anthropic API for language generation ($0.01-0.06 per 1K tokens), Pinecone or Weaviate for vector storage ($70-100/month), and a workflow orchestrator like n8n or Airflow (open-source). Total recurring cost: $200-300/month, plus 40-60 hours of initial development.

The advantage? You control the prompt logic, data flow, and governance checkpoints. The disadvantage? You own the maintenance, debugging, and inevitable prompt drift as models update.

For most SaaS teams under 20 people, this math doesn't pencil out unless you're processing 100+ pieces of content monthly. You'll spend more time fixing broken pipelines than writing.

The Reality of Free AI SEO Tools

Let's address the elephant in the search query: can you build this system with free AI SEO tools?

Yes, but with severe limitations.

Here's what actually works at the free tier:

ChatGPT (GPT-4o mini) handles ideation and rough drafting. You'll spend 15-20 minutes per brief manually feeding it context. No automation, no governance layer, no SERP integration.

Google Search Console provides your keyword and performance data. It's comprehensive but requires manual analysis: there's no "find striking-distance keywords" button.

Semrush/Ahrefs freemium tiers offer limited lookups (10 searches/day). Useful for spot-checking competitors, useless for systematic intelligence gathering.

The constraint isn't quality, it's scale and governance. Free tools force manual handoffs between each framework layer. You can produce 2-3 solid articles per month this way. You cannot produce 20.

If you're a solo founder validating demand, start here. If you're a Head of Marketing tasked with "10x content output," free tools will burn you out in six weeks. Been there.

Tiered Recommendations

Solo founder (0-2 people): ChatGPT Plus + Google Search Console + one production tool (Surfer or Jasper). Automate nothing yet. Focus on learning what good looks like. Budget: $100-150/month.

Small team (3-10 people): Add Semrush for intelligence, connect tools via Zapier or Make for simple handoffs (e.g., new keyword cluster → auto-create Notion brief). Introduce governance checklists in Notion or Airtable. Budget: $300-500/month.

Tech-enabled team (10+ with dev resources): Build custom agents for repetitive tasks (schema generation, meta descriptions, internal linking). Buy best-in-class AI SEO tools for the strategic layers (Semrush for intelligence, for example) and keep human writers on final production. Budget: $500-1,000/month in tools, plus internal dev time.

The worst move? Buying six tools, using none well, and wondering why AI "doesn't work."

Pick two, integrate them into your actual workflow, then expand. I've watched teams waste months chasing the best AI SEO tools instead of mastering what they already have. Start small. Get good at AI SEO optimization with a narrow stack. Scale from there.

Measuring Success & ROI: The Metrics That Matter

Your board doesn't care that you rank #3 for "enterprise workflow automation." They care whether your content engine is generating pipeline.

The gap between most AI SEO implementations and actual business value is measurement. Teams track rankings and traffic, then wonder why leadership questions the investment. You need to prove ROI in language finance understands.

Here's the formula I use: (Value of Organic Leads Generated - Cost of Tool Stack & Human Time) / Cost. If your AI system costs $500/month in tools plus 20 hours of oversight at $100/hour, that's $2,500 total. If it generates 15 qualified leads worth $500 each in pipeline value, you're looking at ($7,500 - $2,500) / $2,500 = 200% ROI. Simple math, clear justification.
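If you want to rerun that calculation each month, the formula fits in one small function. The function and parameter names are my own. The inputs below are the same figures used in the worked example above.

```python
def content_roi(leads, value_per_lead, tool_cost, review_hours, hourly_rate):
    """Return ROI as a fraction: (lead value - total cost) / total cost."""
    total_cost = tool_cost + review_hours * hourly_rate
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (leads * value_per_lead - total_cost) / total_cost


roi = content_roi(leads=15, value_per_lead=500, tool_cost=500,
                  review_hours=20, hourly_rate=100)
print(f"{roi:.0%}")  # prints "200%"
```

Keeping human review time inside `total_cost` matters: leave it out and the same inputs would report a far rosier number than finance will accept.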

But you need the right inputs. Forget vanity metrics. Track organic traffic to conversion-focused pages, qualified lead volume from organic (use UTM parameters and CRM attribution), conversion rate by content type, and time saved on production. Nearly 70% of businesses report higher ROI from using AI in SEO, but only if they're measuring what matters.

The real unlock? Production throughput. If you're publishing 4 articles monthly and scale to 20 with the same team, that's a 5x multiplier on your content asset base. About two-thirds of AI-generated content ranks within two months, which means your ROI compounds faster than traditional content operations.

Case Studies in Scale

SnowSEO documented a 68% organic traffic increase, from 120,000 to 201,600 visits, after implementing AI-enhanced SEO, with top-10 keyword rankings growing 55%. But the hidden metric? They likely measured content velocity and cost-per-article reduction.

Stob.ai's work with Nakto e-Bikes is more dramatic: a 17x traffic increase after deploying an automated SEO system publishing 30 articles monthly. The conversion rate also rose 1.2% through AI-driven FAQ automation. That's not just traffic. It's qualified visitors converting at higher rates because the content matched intent better.

What these cases share: they tracked production metrics (articles published, time per piece) alongside business outcomes (leads, revenue attribution). The system's value wasn't just in ranking, it was in sustainable, scalable execution.

The AI Overviews (AIO) Factor: A New KPI

Here's what most guides won't tell you: the SERP you're optimizing for is changing underneath you.

AI Overviews now appear for 10-15% of queries and correlate with lower organic CTRs for traditional results. When Google generates an AI answer, fewer users click through.

This isn't a reason to panic. It's a new optimization surface. If your content gets cited in an AIO, you're building brand authority and still capturing clicks from users who want depth. The new KPIs: citation rate in AIOs (how often your domain appears as a source) and "Answer to Click" conversion (traffic from users who saw your brand in an AIO, then clicked).

Optimize for this by structuring content for clarity: direct answers in the first 100 words, clear sourcing with author credentials, and schema markup that helps Google understand your authority. Then design compelling next-step CTAs that convert the "research mode" visitor who came from an AIO citation.

Track AIO presence using tools that monitor featured snippet and AI Overview appearances. If you're cited, measure referral traffic patterns and time-on-page for those visitors. They're often higher-intent because they've already consumed your summary answer and chose to dig deeper.

Common Pitfalls & How to Avoid Them

I've seen promising AI SEO systems collapse within weeks. The pattern is always the same: founders skip a critical step, chase shortcuts, or ignore warning signs until their traffic tanks.

Pitfall 1: Over-Automation & Brand Voice Erosion

You automate everything, publish 50 articles in a month, and suddenly your brand sounds like every other AI-generated blog on the internet. Generic. Flat. Forgettable.

The fix: Treat your brand voice alignment step in the Governance Layer as sacred. Build a detailed style guide with actual examples from your best human-written content. Feed it to your AI agents. Then have a human editor verify every piece maintains that voice before it ships. If you can't tell which articles were AI-assisted versus human-written, you're doing it right.

Pitfall 2: Ignoring the Governance Layer (The Quality Collapse)

The most expensive mistake is treating human review as optional.

You'll save $2,000 on editing costs and lose $50,000 in organic traffic when Google's next core update hits. Remember: only 9% of users always trust AI answers. Your readers can smell unvetted AI content. So can Google's quality raters.

The fix: Budget for human oversight from day one. It's not a luxury. One experienced editor reviewing AI output is cheaper than three junior writers creating from scratch and produces better results. This is the difference between a scalable system and a spam factory.

Pitfall 3: Poor Data Integration & Silos

Your keyword tool doesn't talk to your content platform. Your AI agent can't access Google Search Console. You're manually copying data between systems like it's 2015.

The fix: Start with API-enabled tools. Use middleware like Zapier or Make to connect systems. Design your data flow during the blueprint stage, not after you've already committed to incompatible tools. If a tool doesn't offer API access or native integrations, it doesn't belong in a scalable system.

Pitfall 4: Chasing Tools Over Defining Process

You buy Surfer, Clearscope, and three AI writing tools because a listicle told you to. Six months later, you're still not publishing consistently because you never mapped the actual workflow.

The fix: Map your desired outcome and workflow before evaluating any tool. Use the pillar-content blueprint from earlier in this guide. Define what success looks like, then find tools that fit your process, not the other way around.

Pitfall 5: AI Keyword Stuffing & 'Scaled Content Abuse'

Your AI agent suggests mentioning your target keyword 47 times. You do it.

Google's March 2024 core update specifically targeted this behavior, and sites that published low-quality scaled content saw rankings crater.

The fix: Use SEO agents for guidance, not rigid rules. Prioritize user intent over keyword density. If a sentence feels awkward or forced, rewrite it. Your goal is to rank and convert. Keyword-stuffed garbage does neither.

Conclusion

Look, the best AI SEO tools aren't really tools at all. They're systems.

If you're walking away thinking you just need to buy Surfer or Semrush and flip a switch, we need to rewind. The founders winning right now are building governed agent workflows that handle everything from keyword discovery to publication, with human checkpoints at every layer that actually matters.

Here's what that looks like in practice: your Intelligence Layer runs while you sleep, scraping SERPs and flagging striking-distance keywords. Your Production Layer cranks out briefs, outlines, and first drafts in minutes instead of days. Your Governance Layer checks every piece for EEAT standards, brand voice consistency, and factual accuracy before anything goes live. You're not writing less. You're orchestrating more, better, faster.

The numbers back this up. Traffic lifts of 68%, click increases of 28%, production time slashed by 60-80% [Source: snowseo.com, seerinteractive.com]. But only when human oversight is baked into the architecture from the start. Remember: only 9% of users always trust AI answers [Source: elementor.com]. Your job is earning the other 91%.

AI Overviews are eating organic clicks. Google's core ranking systems, which absorbed the Helpful Content System in March 2024, are actively hunting scaled spam. The middle ground is where SaaS companies will dominate: high-volume, human-validated content optimized for clarity and proper sourcing.

Start small. Pick one Intelligence Layer task this week. Scrape your top 3 competitors' SERPs, or run an AI agent to cluster your keyword gaps in Search Console. Build the habit of automation with oversight. Then scale the system, not the chaos.

Frequently Asked Questions

Will Google penalize AI-generated content?

Google doesn't care whether a robot or a human wrote your content. They care if it's garbage.

AI-written pages already show up in 17.31% of top search results [Source: semrush.com]. Google's March 2024 core update goes after "scaled content abuse," which is just their way of saying mass-produced junk without human oversight. If you're churning out hundreds of AI articles without fact-checking, adding expertise, or making sure they actually help someone, yeah, you're playing with fire.

But a well-governed AI system with real human review? That passes Google's quality bar just fine.

What's the minimum budget to get started with AI SEO agents?

Roughly $200-$300 per month in tools, plus 2-4 hours of your time each week for governance and review.

That budget breaks down to an LLM subscription like ChatGPT Plus ($20/month; API access is billed by usage instead), a content optimization platform like Surfer SEO starting at $89/month [Source: vezadigital.com], and a keyword tool like entry-level Semrush at $129/month [Source: vezadigital.com]. That's roughly $238 in total. The real cost isn't the software. It's the discipline to review outputs, refine prompts, and maintain quality controls. Most people underestimate that second part.

Can I use AI agents for technical SEO?

Yes, but this is an advanced use case. You need agents that can access APIs and actually interpret structured data.

Agents can orchestrate site crawls through Screaming Frog, analyze log files to identify crawl waste, generate schema markup based on page content, and monitor Core Web Vitals through PageSpeed APIs. The trick is connecting your agent to the right data sources and building validation steps. Technical errors at scale get expensive fast, so start with simpler workflows like content optimization before you automate infrastructure tasks.

How do I ensure my AI content is trustworthy and builds E-E-A-T?

Only 9% of users always trust AI-generated answers [Source: elementor.com]. Your governance layer needs to actively combat that skepticism.

Every piece of AI content should pass through a checklist: fact-check claims against primary sources using RAG retrieval to cite real data, add expert quotes or original insights a bot can't replicate, include clear authorship with credentials, and link to authoritative references. If your content reads like it could've been written by anyone about anything, it won't build trust.

Specificity, citations, and human expertise separate helpful content from generic filler. That's the difference.