March 21st, 2026

Automated Content Freshness: How Content Creation Software Keeps Your SEO Current for LLMs

Warren Day

You hit publish on a major product update blog post. A week later, it's not ranking. Two weeks later, it's still invisible to AI Overviews and Copilot. The content isn't stale. Your SEO is.

In the age of generative search, freshness isn't a single metric anymore. It's a symphony of signals, and playing it manually is a losing game.

Here's what changed: AI-surfaced URLs are on average 25.7% fresher than traditional search results. LLMs don't just check your last-modified date and call it done. They're evaluating temporal consistency across your content, analyzing revision patterns, cross-referencing with other sources, and making split-second judgments about whether your information is current enough to cite. A single timestamp update won't cut it.

Most marketing teams are stuck in the old playbook: updating a blog post once a quarter, tweaking a meta description, maybe refreshing a statistic or two. Meanwhile, LLMs are reading structured data, monitoring indexing signals, tracking how often you actually update versus how often you claim to, and penalizing inconsistency. The gap between what search engines reward and what your team can manually maintain is widening fast.

To win in AI search, you need to automate a system of composite freshness signals, from structured data updates to instant indexing notifications. Modern content creation software isn't just a writing tool anymore. It's the orchestration layer that makes this feasible at scale for resource-limited teams.

This guide will walk you through the three pillars of LLM-friendly freshness, show you how to build an AI-optimized tech stack, and give you a 90-day roadmap to implement automated freshness without adding headcount.

Why AI Search Sees 'Freshness' Differently (And Why That Matters)

Google's "Query Deserves Freshness" system was simple: breaking news and trending topics got a boost. Update your publish date, catch a news cycle, see a spike. That model worked when search engines were just matching keywords to documents.

LLMs aren't playing that game. They're evaluating whether your content represents the current state of knowledge on a topic. They do this through what researchers call composite freshness signals, a system that's way more sophisticated than a date stamp.

Here's what LLMs actually check when deciding if your content is fresh:

Temporal Consistency: Do the dates in your content, schema markup, and headers all line up? If your article claims to cover "2025 trends" but your dateModified schema says 2023, you've got a problem.

Revision Patterns: Are you making real updates or just shuffling words around? LLMs can tell the difference between substantive refreshes (new data, updated examples, expanded sections) and surface-level tweaks designed to game the system.

Cross-Source Alignment: How does your content fit with other recent sources on the topic? If five authoritative sites published similar findings last month and you're still citing 2022 data, you're falling behind.

The numbers are hard to ignore. AI-surfaced URLs are on average 25.7% fresher than traditional search results, and AI-referred traffic grew 527% year-over-year between January and May 2025.

But here's the thing: managing these composite signals manually across dozens or hundreds of pages isn't realistic. You need an orchestration layer. Content creation software that can coordinate technical signals, on-page updates, and indexing notifications as one unified system. Think of it like conducting an orchestra where every instrument (schema, sitemaps, content updates, IndexNow notifications) needs to play in sync.

The System in Action: A Blueprint for Automated Freshness

Here's what happens when you update a blog post in a properly orchestrated system:

Step 1: You hit "publish" on your content update in your CMS. A webhook triggers immediately.

Step 2: Your content creation software intercepts the trigger and simultaneously updates three things: the lastmod timestamp in your XML sitemap, the dateModified field in your JSON-LD schema markup, and the visible "Last Updated" date on the page itself.

Step 3: The software pings the IndexNow API, notifying Bing, Yandex, and participating engines that this URL changed. Seconds, not hours.

Step 4: It scans your site for related articles and automatically updates internal links to reference your freshly updated content, strengthening topical authority signals.

Step 5: The entire sequence logs to your analytics dashboard with a timestamp, so you can measure time-to-reindex and citation rate changes.

Total elapsed time? Under five minutes.

No manual sitemap editing. No waiting for the next crawl cycle. This is what modern CI/CD workflows enable for content operations.
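The five steps above can be sketched as a single publish handler. This is a minimal sketch, not any particular CMS's API: `PublishEvent`, `FreshnessActions`, and `on_publish` are hypothetical names, and the actual sitemap write, schema render, and IndexNow call are left out. The point it illustrates is that one timestamp feeds every signal.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PublishEvent:
    """Hypothetical webhook payload a CMS might send on publish."""
    url: str
    modified_at: datetime


@dataclass
class FreshnessActions:
    """Every value that has to change together for one content update."""
    sitemap_lastmod: str
    schema_date_modified: str
    visible_date: str
    indexnow_urls: list[str] = field(default_factory=list)


def on_publish(event: PublishEvent) -> FreshnessActions:
    # One timestamp is the single source of truth for every signal,
    # so the sitemap, the schema, and the visible date cannot drift apart.
    ts = event.modified_at.astimezone(timezone.utc)
    iso = ts.isoformat(timespec="seconds")
    return FreshnessActions(
        sitemap_lastmod=iso,
        schema_date_modified=iso,
        visible_date=ts.strftime("%B %d, %Y"),
        indexnow_urls=[event.url],
    )
```

Each downstream step (writing the sitemap, rendering schema, pinging IndexNow) would then read from the returned object instead of computing its own date.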

The Three Pillars of LLM-Friendly Freshness (And How to Automate Them)

LLMs don't judge freshness the way Google did in 2015. They're not just checking a lastmod date in your sitemap and calling it a day.

Modern AI systems evaluate freshness through composite signals. They look at temporal consistency, revision patterns, cross-source alignment, and structural clarity. They're asking: "Is this page actually maintained? Do the dates make sense? Can I extract clean, recent data from it?"

Manual updates fail at scale because of that complexity. You can't possibly remember to update schema markup, ping IndexNow, refresh your sitemap, and verify structured data across hundreds of pages every time you make a change. Nobody can. You need automation that handles all three pillars simultaneously.

Here's the framework that actually works.

Pillar 1: Technical Foundation Signals

These are the machine-readable timestamps and protocols that tell search engines and LLMs when your content changed and whether to trust those changes.

What you're automating:

Your content creation software should automatically write or update the lastmod attribute in your XML sitemap every time a page is published or modified. Not "eventually." Immediately, as part of the save action.

Same goes for HTTP validation headers. ETags and Last-Modified headers let crawlers check whether a page has changed without downloading it again: the crawler sends a conditional request, and an unchanged page answers with a short 304 Not Modified instead of the full body. Modern headless CMS platforms and static site generators handle this natively. Legacy systems often require middleware or CDN-level configuration.
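To make the validation-header idea concrete, here is a small sketch of the server side of an ETag check. It assumes the ETag is derived from a hash of the response body; `make_etag` and `respond` are hypothetical helpers, not part of any framework.

```python
import hashlib
from typing import Optional, Tuple


def make_etag(body: bytes) -> str:
    """Build a quoted ETag from a hash of the response body."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str]) -> Tuple[int, bytes]:
    """Return (status, body). 304 with an empty body means 'your copy is current'."""
    etag = make_etag(body)
    if if_none_match is not None and if_none_match.strip() == etag:
        return 304, b""
    return 200, body
```

A crawler that cached the page sends the old ETag in `If-None-Match`. If the content changed, the hashes differ and it receives the new page with status 200.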

Then there's IndexNow. This protocol (supported by Bing and other engines) lets you notify participating search engines the moment a URL is created, updated, or deleted. Instead of waiting days for the next crawl, you're telling the engine "this page is fresh, come look now."

How software makes this feasible:

Most modern platforms offer IndexNow integration via plugin or API. When your CMS publishes a page, it fires an IndexNow notification automatically. No manual submission. No waiting.

Your sitemap generator should trigger on every publish event, not on a weekly cron job. Static site generators with CI/CD pipelines handle this as part of your build process. WordPress or headless CMS? There are plugins and webhooks for that.

The key is orchestration. These signals need to fire together, in the right sequence, without human intervention. That's the role of your content tech stack.

Pillar 2: On-Page & Structured Signals

LLMs don't just read your content. They parse it. They're looking for structured data, semantic HTML, and visible metadata that confirms your freshness claims.

What you're automating:

Start with schema markup. Your software should inject or update dateModified and datePublished properties in your JSON-LD structured data every time a page changes. If you're manually editing schema, you'll forget. If your CMS does it automatically, it's always accurate.

Visible update dates matter too. LLMs cross-reference what they see on the page with what's in your structured data. If your schema says "modified yesterday" but your page shows no visible date, that's a red flag. Automation keeps these in sync.

Semantic HTML is harder to automate but easier to template. Your content creation software should enforce proper heading hierarchies, use lists and tables where appropriate, and structure content for extraction. Pages with structured formatting achieve a 91.3% sentence-match rate in AI citations versus 39.3% for unstructured pages.

How software makes this feasible:

Template-driven publishing is your friend. If every blog post follows a standard structure (H2s for major sections, bullet lists for key points, tables for comparisons), you're building in the structure LLMs prefer.

Dynamic schema injection is table stakes for modern CMSs. Your publishing workflow should automatically populate Article or BlogPosting schema with correct dates, authors, and metadata. Platforms like Contentful, Sanity, and even WordPress with the right plugins handle this out of the box.

For teams creating content at scale, AI-assisted formatting tools can analyze drafts and suggest structural improvements. Adding subheadings, converting paragraphs to lists, or flagging walls of text that need breaking up. This isn't about AI writing your content. It's about AI helping you format it for maximum extractability.

Pillar 3: Strategic & Measurement Signals

The first two pillars are about signaling freshness. This pillar is about proving it and knowing when automation is working.

What you're automating:

Content pruning is often overlooked, but it matters. Duplicate or near-identical pages confuse AI grounding systems and dilute your authority. Automation can flag low-traffic, outdated pages for consolidation or archiving based on rules you define. Say, any page with zero organic traffic in six months and no backlinks.

Internal linking also signals freshness indirectly. When you publish new content, your software should automatically suggest or insert links from related existing pages. This creates a web of connections that helps LLMs understand topical authority and recency.

Then there's measurement. You need automated tracking of AI citation rates, time-to-index after updates, and changes in AI-referred traffic. Manual spot-checks don't cut it. You need dashboards that update daily or weekly.

How software makes this feasible:

Content audit tools can run on a schedule (monthly for large sites, quarterly for smaller ones) and generate reports flagging candidates for pruning. Some platforms integrate directly with your CMS to show traffic and engagement data alongside each page, making triage decisions faster.

Internal linking plugins and AI assistants can scan your content library and suggest relevant links as you write. More advanced systems use NLP to identify semantic relationships and insert contextual links automatically during publishing.

For measurement, you're looking at a combination of tools. Bing Webmaster Tools now offers an "AI Performance" metric that tracks how often your content is cited in AI responses. Third-party platforms are building citation tracking for ChatGPT, Perplexity, and Gemini. Your analytics stack should pull this data into a unified dashboard so you can correlate freshness actions with AI visibility outcomes.

The three pillars aren't independent. They reinforce each other. Technical signals tell engines to re-crawl. Structured data helps LLMs extract clean information. Strategic signals prove your content is actively maintained and interconnected.

Trying to manage all three manually is a losing game. Content creation software isn't just a writing tool. It's the orchestration layer that makes this system run on autopilot.

Pillar 1: Automating Technical Foundation Signals

Think of technical freshness signals as plumbing. Nobody gets excited about it, but if it's broken, your content doesn't flow to the systems that matter.

Automating Sitemap Updates & lastmod

Every time you publish or update content, your sitemap should regenerate with accurate timestamps in the lastmod field. Modern content creation software should trigger this automatically. No manual XML editing, no forgetting to update after a revision. Bing's webmaster guidance explicitly uses lastmod and HTTP validation headers like ETags to detect freshness, and outdated timestamps tell crawlers nothing has changed.
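As an illustration, updating one URL's lastmod in an existing sitemap takes only the standard library. This is a sketch assuming a standard sitemaps.org `urlset` document; `set_lastmod` is a hypothetical helper, and a real generator would usually rebuild the file from the CMS database instead.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
# Keep the default namespace on output instead of ns0: prefixes.
ET.register_namespace("", SITEMAP_NS)


def set_lastmod(sitemap_xml: str, url: str, lastmod: str) -> str:
    """Set <lastmod> for the entry whose <loc> equals url; add the tag if missing."""
    root = ET.fromstring(sitemap_xml)
    ns = {"sm": SITEMAP_NS}
    for entry in root.findall("sm:url", ns):
        loc = entry.find("sm:loc", ns)
        if loc is not None and (loc.text or "").strip() == url:
            node = entry.find("sm:lastmod", ns)
            if node is None:
                node = ET.SubElement(entry, f"{{{SITEMAP_NS}}}lastmod")
            node.text = lastmod
    return ET.tostring(root, encoding="unicode")
```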

Implementing IndexNow Notifications

Here's where automation gets powerful: IndexNow is a protocol that lets you ping search engines the instant you publish or update a page.

Instead of waiting days for a crawler to notice, you're telling Bing (and participating engines) "this URL changed, come look now." Look for content platforms with native IndexNow integration, or set up API triggers in your workflow. It's the difference between hoping your update gets noticed and actively announcing it. A ping asks for attention; it doesn't guarantee a crawl or indexing.
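For teams wiring this up themselves, an IndexNow submission is a single JSON POST. The sketch below builds the request with the standard library and leaves the sending to the caller. The host and key are placeholders, and it assumes the key file is hosted at the site root as `<key>.txt`.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow submission for a batch of changed URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the verification key file lives at the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Sending is one more call, typically from the publish webhook:
# urllib.request.urlopen(build_indexnow_request(host, key, urls), timeout=10)
```

Batching every URL touched by a publish into one `urlList` keeps the number of requests down when an update also changes related pages.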

Ensuring Crawl Budget Efficiency

If search engines waste time crawling broken pages, duplicate URLs, or redirect chains, they miss your fresh content. Tools like Screaming Frog's CLI can run scheduled audits to catch crawl traps before they become problems.

For large sites, this isn't optional. Poor crawl signals directly reduce your chances of appearing in AI answers.

Managing Canonicals & Redirects

Automation ensures that when you consolidate or move content, proper 301 redirects fire and old URLs drop from your sitemap. Bing's guidance is clear: preserve URL stability, use 301s for permanent changes, and remove deleted pages promptly.

Manual redirect management at scale is where mistakes happen. Duplicate or orphaned URLs confuse AI grounding systems, and you won't catch them all by hand.
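One piece of this that is easy to automate is flattening redirect chains and dropping redirected URLs from the sitemap. A minimal sketch, assuming redirects are available as a simple old-to-new mapping (both function names are hypothetical):

```python
def resolve_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Collapse chains so every old URL points straight at its final target."""
    resolved = {}
    for start in redirects:
        seen = {start}
        target = redirects[start]
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        resolved[start] = target
    return resolved


def prune_sitemap(urls: list[str], redirects: dict[str, str]) -> list[str]:
    """Keep only URLs that are not themselves redirected elsewhere."""
    return [u for u in urls if u not in redirects]
```

Running both on every deploy means each old URL takes one hop to its destination, and the sitemap never advertises a URL that answers with a 301.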

Pillar 2: Automating On-Page & Structured Signals

Technical signals get your content crawled. On-page signals get it cited.

LLMs extract content differently than traditional search crawlers. They're looking for clean, structured information they can confidently quote. A wall of prose, no matter how eloquent, performs worse than a well-formatted list.

Synchronized Schema and Visible Timestamps

When you update content, two things need to happen at once: your dateModified schema updates, and a human-readable "Updated on [Date]" stamp appears on the page. Good content creation software handles both in a single action.

Why both? The schema tells the LLM's retrieval system the content is current. The visible timestamp builds user trust and provides a clear signal for AI systems evaluating source quality. Manual maintenance means one gets updated and the other doesn't. Automation closes that gap.
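A simple way to keep the two in sync is to render both from the same date. The sketch below assumes a basic schema.org Article object; `render_freshness` is a hypothetical template helper, and a real template would carry more properties (author, image, and so on).

```python
import json
from datetime import date
from typing import Tuple


def render_freshness(headline: str, published: date, modified: date) -> Tuple[str, str]:
    """Return (JSON-LD script tag, visible timestamp HTML) built from one date."""
    schema = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    json_ld = '<script type="application/ld+json">' + json.dumps(schema) + "</script>"
    iso = modified.isoformat()
    label = f"{modified.strftime('%B')} {modified.day}, {modified.year}"
    visible = f'<time datetime="{iso}">Updated on {label}</time>'
    return json_ld, visible
```

Because both strings come from the same `modified` value, the schema and the on-page stamp can't disagree.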

Structure Dictates Citation Likelihood

Pages with structured content achieve a 91.3% sentence-match rate versus 39.3% for unstructured pages. That gap should wake people up.

Your content creation software should enforce structure through templates. Every article needs proper H2/H3 hierarchies, bulleted lists for key points, and tables for comparisons or data. These aren't stylistic choices. They're extraction optimization.

The median cited sentence in AI responses is just 10 words. Long, complex sentences don't get quoted. Your software should flag sentences over 20 words during editing and suggest breaking them up. Simple tooling, massive impact on quotability.
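The sentence-length check is simple tooling indeed. Here is a rough sketch using a naive punctuation-based sentence splitter; it will mis-split abbreviations like "e.g." and is meant as an editing aid, not a linguistic parser.

```python
import re


def long_sentences(text: str, max_words: int = 20) -> list[str]:
    """Return the sentences in text that exceed max_words words."""
    # Naive split: break after ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if len(s.split()) > max_words]
```

An editor plugin could call this on save and highlight whatever comes back.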

Embedding Fresh Data Points

Evergreen content decays when its examples age.

Modern content creation software can suggest recent statistics, case studies, or news hooks relevant to your topic as you write. Instead of manually hunting for updated figures every quarter, the system surfaces them in-editor. This isn't about AI writing your content. It's about preventing your content from going stale while you're focused on strategy, not data hygiene.

Pillar 3: Automating Strategic & Measurement Signals

Technical and on-page signals tell LLMs your content exists and is current. Strategic signals tell you which content to update next and whether it's working.

This is where automation stops being about maintenance and starts being about intelligence. You can't update everything. Guessing what to refresh wastes time. What you actually need is a system that forecasts impact and tells your team where to focus.

Predictive Analytics: The Update Prioritization Engine

Advanced SEO platforms now use historical performance data combined with live ranking shifts to forecast which decaying pages would benefit most from an update. They analyze traffic trends, keyword position volatility, competitor content changes, and seasonal patterns to build a ranked list of refresh candidates.

You're not updating based on gut feel or last-modified dates. You're updating based on predicted ROI.

Set your content creation software to flag pages where traffic has declined 20%+ over 90 days and keyword rankings have dropped for queries with rising search volume. That's a signal the topic still matters, but your page has fallen behind. Automate a Slack notification or dashboard alert when these conditions align.
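Expressed as code, that rule is a three-part condition. The sketch below assumes you can export per-page stats for the current and previous 90-day windows; the `PageStats` fields are hypothetical names, not the schema of any specific SEO platform.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    sessions_prev_90d: int
    sessions_last_90d: int
    position_prev: float   # average ranking position, lower is better
    position_now: float
    volume_prev: int       # monthly search volume for the target query
    volume_now: int


def needs_refresh(p: PageStats, min_decline: float = 0.20) -> bool:
    """Flag pages losing traffic and rankings while interest in the query grows."""
    if p.sessions_prev_90d == 0:
        return False  # no baseline to measure a decline against
    decline = (p.sessions_prev_90d - p.sessions_last_90d) / p.sessions_prev_90d
    ranking_dropped = p.position_now > p.position_prev
    volume_rising = p.volume_now > p.volume_prev
    return decline >= min_decline and ranking_dropped and volume_rising
```

The pages that return `True` are the ones to send to Slack or a dashboard alert.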

Automated Content Pruning: The Anti-Freshness Signal

Here's the contrarian move: sometimes the best freshness signal is deletion.

A site cluttered with outdated, low-traffic pages confuses crawlers and dilutes authority. Ahrefs found that over 65% of backlinks decay over nine years, which means old content isn't just stale. It's often a net negative.

Modern content creation software can flag pages below defined thresholds (say, fewer than 10 sessions in six months) and recommend archiving, consolidation, or 301 redirects. This isn't manual work. It's a quarterly automated audit with a one-click remediation workflow.
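A threshold rule like that fits in a few lines. In this sketch, the choice between a redirect and archiving is an illustrative assumption (pages with backlinks get a 301 so the links aren't wasted), and the dictionary keys are hypothetical export fields.

```python
def prune_candidates(pages: list[dict], min_sessions: int = 10) -> dict[str, str]:
    """Map each under-threshold URL to a suggested action for human review."""
    recommendations = {}
    for page in pages:
        if page["sessions_6mo"] >= min_sessions:
            continue  # page still earns its place
        # Assumption: preserve link equity with a 301 when backlinks exist.
        action = "redirect_301" if page["backlinks"] > 0 else "archive"
        recommendations[page["url"]] = action
    return recommendations
```

The output is a review queue, not a delete list. A person still approves each action.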

AI Citation Dashboards: Measuring What Actually Matters

Microsoft's Bing Webmaster Tools now includes an AI Performance metric that tracks how often your content is cited in AI-generated responses. Google Search Console is rolling out similar reporting for AI Overviews.

Wire these into your content creation software's analytics dashboard. Track citation share alongside traditional metrics like impressions and CTR. If a page ranks well but never gets cited by LLMs, that's a structural signal problem (probably missing schema or poor formatting), not a freshness problem.

The goal isn't more updates. It's smarter updates, guided by data you didn't have to pull manually.

Building Your AI-Optimized Content Tech Stack

You don't need a dozen new tools. You need the right categories, connected properly.

Most teams try to bolt AI optimization onto their existing stack, a plugin here, a manual workflow there. That's how you end up with structured data that never updates, IndexNow notifications that fire inconsistently, and content that still takes three weeks to get cited.

Better approach: build your stack around three pillars, then connect them with an orchestration layer that makes automation actually work.

Pillar 1: Technical Foundation

Your CMS is the foundation. If you're on WordPress, you need a headless setup or advanced SEO plugins that can automate sitemap updates, schema injection, and IndexNow notifications. Building from scratch? A headless CMS with CI/CD workflows reduces deployment time and eliminates the content bugs that confuse LLM crawlers.

Look for: automatic sitemap generation on publish, native IndexNow support or webhook capabilities, and version control that tracks every content change with timestamps.

Pillar 2: On-Page & Structured Signals

This is where content creation software becomes your orchestration hub. You need platforms that don't just help you write. They automate the structured data, semantic markup, and formatting that LLMs actually parse.

The best tools generate schema markup automatically when you publish, maintain consistent heading hierarchies without manual checking, and update dateModified fields across your content library when you make changes. Bonus points if they can identify which existing content needs refreshing based on ranking decay or citation drop-off.

Pillar 3: Strategic Intelligence

SEO automation platforms like Semrush or Ahrefs provide the data layer. Their APIs can feed your content system with keyword trends, competitor update frequency, and ranking volatility signals.

You're not logging in to check dashboards. You're piping that intelligence directly into your publishing decisions.

The Orchestration Layer

This is the glue: workflow automation tools (Zapier, Make, or custom scripts) that connect everything. When your SEO platform detects a ranking drop, it triggers a content refresh workflow in your CMS, which updates the page, regenerates schema, and fires IndexNow, all without you touching it.

While free social media management tools handle distribution after publication, your orchestration layer works upstream. It makes sure the content itself is optimized before it ever gets shared.

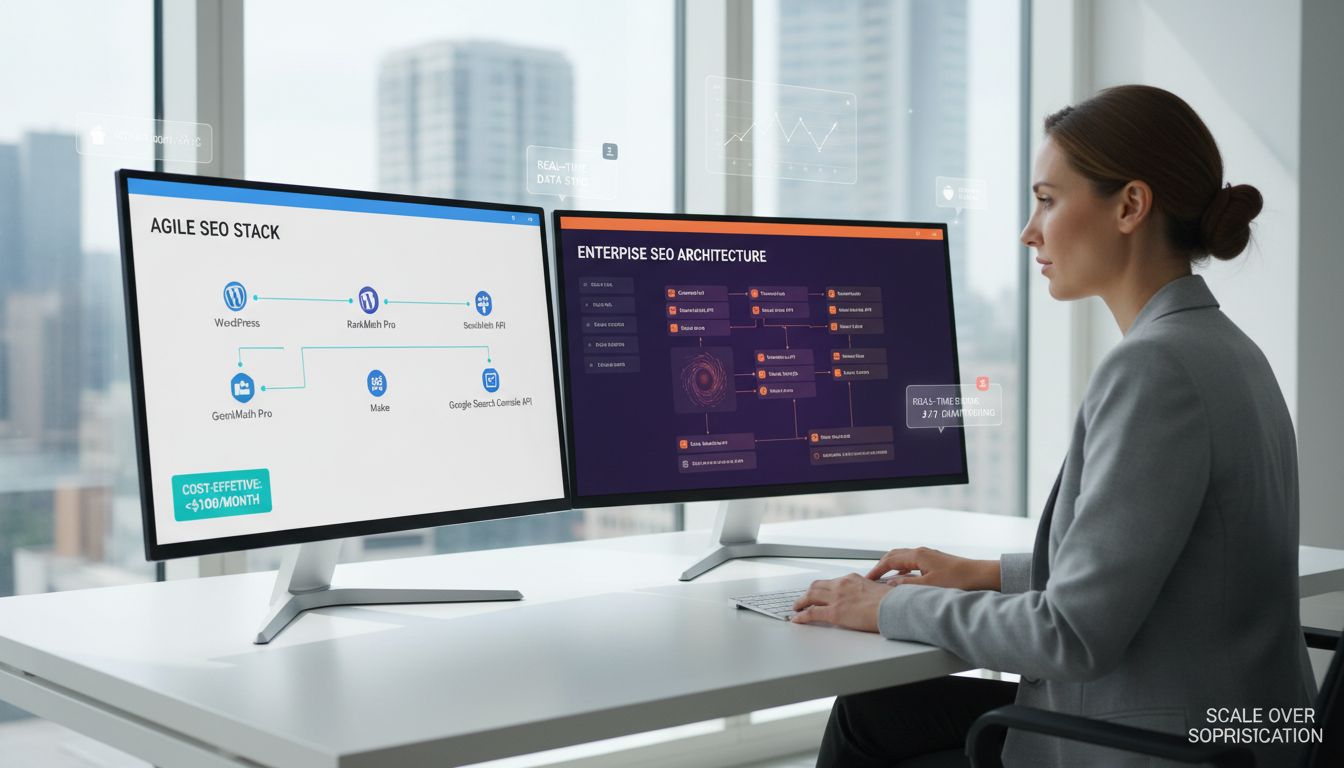

Two Real Stacks

Lightweight: WordPress + RankMath Pro + Make + Google Search Console API. Total cost under $100/month, handles 90% of automation for a 50-page site.

Enterprise: Contentful + Semrush API + custom Node.js scripts + dedicated analytics warehouse. Built for teams managing 5,000+ URLs with daily freshness requirements.

The stack you need depends on scale, not sophistication. Start with the category gaps you have today.

Evaluating Content Creation Software for AI-First SEO

The "best" content creation software for AI search isn't the one with the most features. It's the one that automates the three pillars of composite freshness signals without requiring a dedicated engineering team.

Most teams evaluate content platforms on collaboration features or ease of use. Those matter, but they're table stakes. For AI-first SEO, your evaluation checklist needs to focus on automation capabilities that actually influence whether LLMs cite you or ignore you.

Essential Features for AI-First Content Operations:

| Feature Category | What to Look For | Why It Matters |
|---|---|---|
| Indexing Automation | Native IndexNow integration or webhook support | Reduces time-to-index from days to hours |
| Structured Data | Automatic JSON-LD generation for Article schema with dateModified | Pages with structured content achieve 91.3% sentence-match rates versus 39.3% for unstructured |
| Version Control | Visible change tracking and automated lastmod updates | LLMs assess temporal consistency across multiple signals |
| Workflow Automation | Scheduled publishing and conditional update triggers | Enables systematic freshness without manual intervention |
| Platform Integration | API access to Semrush, Ahrefs, or similar SEO platforms | Allows automated prioritization based on ranking/traffic data |

Recommendations by Team Size:

Startup or solo marketer (1-2 people): Prioritize all-in-one platforms with strong SEO plugins and built-in structured data. You need automation out of the box, not customization projects.

Look for platforms where IndexNow and schema markup require zero code. You don't have time to debug webhooks or write custom scripts.

Scaling SaaS team (3-10 people): Move toward headless CMS options with workflow automation and API-first architecture. You're ready to connect tools together. Focus on platforms that integrate with your existing SEO stack and support conditional publishing rules, things like "if ranking drops below position 5, flag for refresh."

Enterprise (more than 10 people): Require full API control, CI/CD integration capabilities, and the ability to couple with predictive analytics platforms. At this scale, you're building custom orchestration layers. Your platform needs to be a flexible foundation, not a prescriptive tool that forces you into someone else's workflow.

The wrong platform forces you to choose between freshness and quality. The right one makes both automatic.

Your 90-Day Roadmap to Automated Freshness

You don't need a year-long transformation. Three focused months will get you there.

Most teams fail because they try to automate everything at once. This roadmap breaks the work into phases that build on each other and give you proof points to show leadership along the way.

Month 1: Audit & Baseline

Week 1-2: Inventory your current state

- Export your XML sitemap and check how many URLs have lastmod tags (most CMSs generate these automatically, but they're often broken or static)

- Run a sample of 20 top-performing pages through Google's Rich Results Test to see which have dateModified schema

- Document which pages show visible "Last updated" dates to readers
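The sitemap part of this inventory can be scripted. The sketch below assumes a standard sitemaps.org `urlset` file; `lastmod_report` is a hypothetical helper. A single distinct lastmod value across many URLs is a hint that the timestamps are static.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def lastmod_report(sitemap_xml: str) -> dict:
    """Count URLs, URLs carrying <lastmod>, and distinct lastmod values."""
    root = ET.fromstring(sitemap_xml)
    entries = root.findall("sm:url", NS)
    values = []
    for entry in entries:
        node = entry.find("sm:lastmod", NS)
        if node is not None and node.text:
            values.append(node.text.strip())
    return {
        "total_urls": len(entries),
        "with_lastmod": len(values),
        # One value shared by every URL suggests a static timestamp.
        "distinct_lastmod": len(set(values)),
    }
```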

Week 3-4: Set up measurement infrastructure

Connect Google Search Console and enable Bing Webmaster Tools AI Performance report to track how often your content appears in AI-generated answers. Create a simple spreadsheet dashboard: track "Pages Updated This Month" alongside "AI Impressions" and "Traditional Organic Clicks."

Establish your baseline: What's your current AI citation rate for your top 50 pages?

Month 2: Implement Core Automation

Pick ONE pillar to start. For most teams, Pillar 1 (Technical Foundation) delivers the fastest wins.

Week 5-6: Enable instant indexing

- Install an IndexNow plugin for your CMS (WordPress, Webflow, and most platforms have official plugins)

- Configure automatic sitemap regeneration on publish/update events

- Test: Publish a minor update and verify the IndexNow ping fires

Week 7-8: Automate schema injection

Install or configure your content creation software to auto-inject Article schema with dateModified on every save. Set up a monthly crawl audit using Screaming Frog or your SEO platform. Quarterly works for smaller sites, but if you're publishing 20+ pieces per month, you need monthly checks.

Month 3: Measure, Iterate, & Scale

Week 9-10: Run a controlled experiment

Select 10-15 key pages that haven't been updated in 90+ days. Update them using your new automated system: refresh data, add a new section, trigger schema/IndexNow.

Measure citation performance weekly for four weeks using repeatable prompt methodology. Run the same 5-10 queries multiple times and track how often your pages appear.
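To keep that measurement repeatable, record the sources each run cites and compute a simple rate. This sketch assumes you have already collected the cited URLs for each run by hand or with a tracking tool; how you collect them is outside its scope, and both function names are hypothetical.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls: list[str], domain: str) -> bool:
    """True if any cited URL is on the domain or one of its subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(runs: list[list[str]], domain: str) -> float:
    """Share of prompt runs in which the domain was cited at least once."""
    if not runs:
        return 0.0
    hits = sum(cites_domain(run, domain) for run in runs)
    return hits / len(runs)
```

Tracking this number weekly for the same queries gives you a before-and-after comparison for the updated pages.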

Week 11-12: Document and expand

Compile results: Did updated pages see increased AI impressions? Faster reindexing?

Use findings to justify budget for scaling automation to Pillars 2 and 3. Create a rolling 90-day update calendar for your top 100 pages.

By day 90, you'll have a functioning system, measurable results, and a roadmap to scale. Not perfect, but proven enough to defend the investment.

Common Pitfalls When Automating for AI Search

Automation amplifies whatever you feed it. If your process is flawed, you'll just produce flawed content faster.

Updating lastmod without meaningful content changes is the most common mistake. You trigger a sitemap update, fire an IndexNow notification, and bump the structured data timestamp, but the content itself is unchanged. LLMs assess freshness through composite signals, including revision patterns and temporal consistency. When they see frequent date changes with no substantive edits, they downgrade your freshness score. You've trained the system to distrust your timestamps.
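One guard against this is to bump timestamps only when the content itself changed. The sketch below compares fingerprints of the normalized body text. It catches whitespace-only and case-only edits, which is a much weaker test than "substantive," so treat it as a floor and not as a quality check. Both function names are hypothetical.

```python
import hashlib
import re


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def should_bump_lastmod(previous_text: str, new_text: str) -> bool:
    """Only allow a timestamp change when the normalized content differs."""
    return fingerprint(previous_text) != fingerprint(new_text)
```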

Neglecting the visible date stamp creates the same problem from a different angle. Your JSON-LD schema says "dateModified: 2025-01-15," but the page footer still shows "Last updated: November 2024." That inconsistency breaks the temporal alignment LLMs use to validate freshness claims. They cross-check. Always.
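A consistency check for this can run on every publish. The sketch below assumes you have already extracted each date (schema, sitemap, visible stamp) into a dictionary; `stale_signals` is a hypothetical helper that reports which signals lag behind the newest one.

```python
from datetime import date


def stale_signals(signals: dict[str, date], tolerance_days: int = 0) -> list[str]:
    """Return the names of signals older than the newest one by more than the tolerance."""
    if not signals:
        return []
    newest = max(signals.values())
    return sorted(
        name for name, d in signals.items()
        if (newest - d).days > tolerance_days
    )
```

With the example above (schema dated 2025-01-15, footer still showing November 2024), the footer is the signal that gets flagged.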

Failing to prune or consolidate outdated pages is automation's silent killer.

Duplicate or near-identical pages confuse AI grounding and reduce citation confidence. Your automation keeps updating five different versions of the same "Getting Started" guide from 2019 to 2023, each with slightly different advice. The LLM can't determine which is authoritative, so it cites none of them. You're competing against yourself.

Measuring vanity metrics only means you're optimizing for the wrong outcome. Traditional traffic might hold steady while your AI citation share craters. Track what matters: citation rate, AI-referred conversion rate (4.4× higher than organic search), and time-to-index after updates. If you're still celebrating pageviews while ChatGPT ignores you, you're looking at the wrong dashboard.

Letting automation degrade quality is the final trap. Every automated update must add genuine value: a new statistic, a revised recommendation, a fresh example. Shuffling sentences or swapping synonyms doesn't fool LLMs. They detect substance, not just motion. Your content creation software should make good updates easier, not bad updates faster.

The Future of AI Search and Automation

The next wave is already taking shape in academic labs. Researchers are building temporal signals directly into retrieval models, systems like TempRALM and TempRetriever that automatically weight recency for time-sensitive queries without manual tagging. What's experimental today becomes production infrastructure tomorrow.

Content creation software will shift from reporting what needs updating to drafting contextually relevant updates. Your automation layer won't just flag a pricing page for refresh. It'll generate a revision that incorporates your latest feature release, competitive positioning, and brand voice guidelines. You'll review and approve, not write from scratch.

This doesn't eliminate the human role. It elevates it.

Your job becomes setting the rules: which content pillars matter, what update frequency aligns with your market, how aggressively to prune. You interpret the citation data, spot the patterns automation misses, and protect brand integrity when the system suggests something off-brand. Automation handles the mechanics. You handle the strategy. That's the division of labor that wins in AI search.

Conclusion

AI search doesn't reward the loudest voice. It rewards the most current, most structured, most consistently maintained one.

You've seen the blueprint: technical signals that announce updates, on-page signals that make content extractable, and strategic signals that prove your authority. Manually managing these across dozens or hundreds of pages isn't just tedious. It's impossible at scale.

That's where content creation software becomes the orchestration layer. Not for writing alone, but for automating the composite freshness signals that LLMs actually use to decide what gets cited and what gets ignored. The sites winning AI visibility in 2026 aren't the ones with the biggest budgets. They're the ones that built systems to keep their best content perpetually current without burning out their teams.

Your next step isn't to overhaul everything.

Run the Month 1 Audit from the roadmap. Pick five key pages. Check their lastmod, their schema, their visible dates. That gap you find? That's your starting line. From there, you can build a system that keeps your SEO and your traffic perpetually current.