April 24th, 2026

Digital Marketing Platforms for AI Content: How to Choose the Right Stack

Warren Day

What's the actual problem with how most teams pick their AI marketing tools?

They just... buy stuff. A demo looks good, the sales rep is convincing, and suddenly you've got three overlapping subscriptions and data living in four different places.

With 81% of marketers deploying AI daily, the competitive edge isn't having AI. It's how you build around it.

Choosing the right digital marketing platforms for AI content isn't a features problem. It's an engineering problem. You're making decisions about technical debt, vendor lock-in, and governance, and most selection advice skips all of that.

I've spent 15 years building software systems for startups, media companies, and agencies. The teams that get this right treat their marketing stack the same way they treat their application stack: loosely coupled, data-driven, built around actual business goals.

Not a collection of shiny tools.

This guide gives you a practitioner's framework. How to audit what you already have (honestly), evaluate new tools with real technical rigor, and build a stack that scales without falling apart. We'll get into the five-layer model for AI marketing, the evaluation framework most vendors hope you won't use, and how to pilot tools without making a mess you'll regret later.

Step 1: The Prerequisite – Auditing Your Current Stack and Defining Strategic Goals

Before you evaluate a single new tool, you need to know exactly what you're working with.

Not a rough sense of it. A forensic audit. Build a spreadsheet with four columns: Tool, Core Function, Integration Points, and Pain Points. You'll be surprised what you find. That "marketing automation" platform might just be sending batch emails. That "analytics" tool might be a Google Sheet someone updates manually every Thursday.

The most important column is Integration Points. Map every API connection, webhook, and data sync. This is where technical debt hides.

Specifically, flag any automation built on Zapier or Make that hasn't been documented or audited in the last 90 days. In my agency work, I've seen these "low-code" workflows become the most brittle part of a stack, failing silently and costing more in reactive firefighting than they ever saved.
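If your audit lives in a spreadsheet export, the 90-day flag is easy to automate. Here's a minimal sketch, assuming you track two extra columns that the four-column audit doesn't strictly require: `built_on` and `last_audited`. Those field names and the sample rows are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional

LOW_CODE_PLATFORMS = {"zapier", "make"}  # the two platforms named above
AUDIT_WINDOW = timedelta(days=90)


@dataclass
class StackEntry:
    # The four audit columns
    tool: str
    core_function: str
    integration_points: List[str] = field(default_factory=list)
    pain_points: List[str] = field(default_factory=list)
    # Hypothetical extra columns used only for the staleness check
    built_on: Optional[str] = None
    last_audited: Optional[date] = None  # None means "never audited"


def stale_automations(entries, today):
    """Return low-code automations with no audit in the last 90 days."""
    flagged = []
    for entry in entries:
        if (entry.built_on or "").lower() not in LOW_CODE_PLATFORMS:
            continue
        if entry.last_audited is None or today - entry.last_audited > AUDIT_WINDOW:
            flagged.append(entry)
    return flagged


# Illustrative rows, not real data
inventory = [
    StackEntry("Lead router", "Routes form fills to the CRM", ["CRM webhook"],
               built_on="Zapier", last_audited=date(2025, 11, 3)),
    StackEntry("Weekly digest", "Batch email", ["ESP API"],
               built_on="Make", last_audited=date(2026, 3, 30)),
    StackEntry("CRM", "System of record", ["REST API"]),
    StackEntry("Slack alerts", "Notifies sales of new leads", built_on="zapier"),
]

flagged_names = [e.tool for e in stale_automations(inventory, date(2026, 4, 24))]
print(flagged_names)
```

Anything that has never been audited gets flagged too, which is usually where the nasty surprises are.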

Once you've got that mapped, define what success actually looks like. Move past vanity metrics.

Your business KPIs should be specific: a cost-per-lead reduction, a conversion lift from a key landing page, a 40% cut in content production time. Technical KPIs matter just as much: API latency under 200ms, 99.9% data sync reliability, and model output consistency scores if you're already running AI.
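Technical KPIs like these are only useful if something checks them. A rough sketch of that check follows. One assumption to flag: "latency under 200ms" is read here as the 95th percentile, not the average. The function names and sample numbers are made up for illustration.

```python
def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def check_technical_kpis(latencies_ms, syncs_ok, syncs_total,
                         max_latency_ms=200, min_reliability_pct=99.9):
    """Compare measured values against the two thresholds from the text."""
    reliability_pct = 100.0 * syncs_ok / syncs_total
    return {
        "latency_ok": p95(latencies_ms) < max_latency_ms,
        "reliability_ok": reliability_pct >= min_reliability_pct,
    }


# Illustrative measurements: one slow outlier is enough to fail p95 on 10 samples
sample_latencies = [120, 135, 150, 140, 180, 160, 145, 130, 155, 410]
kpi_report = check_technical_kpis(sample_latencies, syncs_ok=9995, syncs_total=10000)
print(kpi_report)
```

Pick the percentile that matches how your team actually feels latency. The point is to write the threshold down before the vendor conversation, not after.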

For teams starting from scratch, the question "how do you start digital marketing?" gets answered before you touch the stack. Your audit begins at zero, which is actually an advantage. Define your target audience, core channels, and messaging first. Your initial digital marketing platforms should be lean, built explicitly to support those foundations.

That clarity stops you from buying a "comprehensive platform" when you just need a good CRM and an email sender.

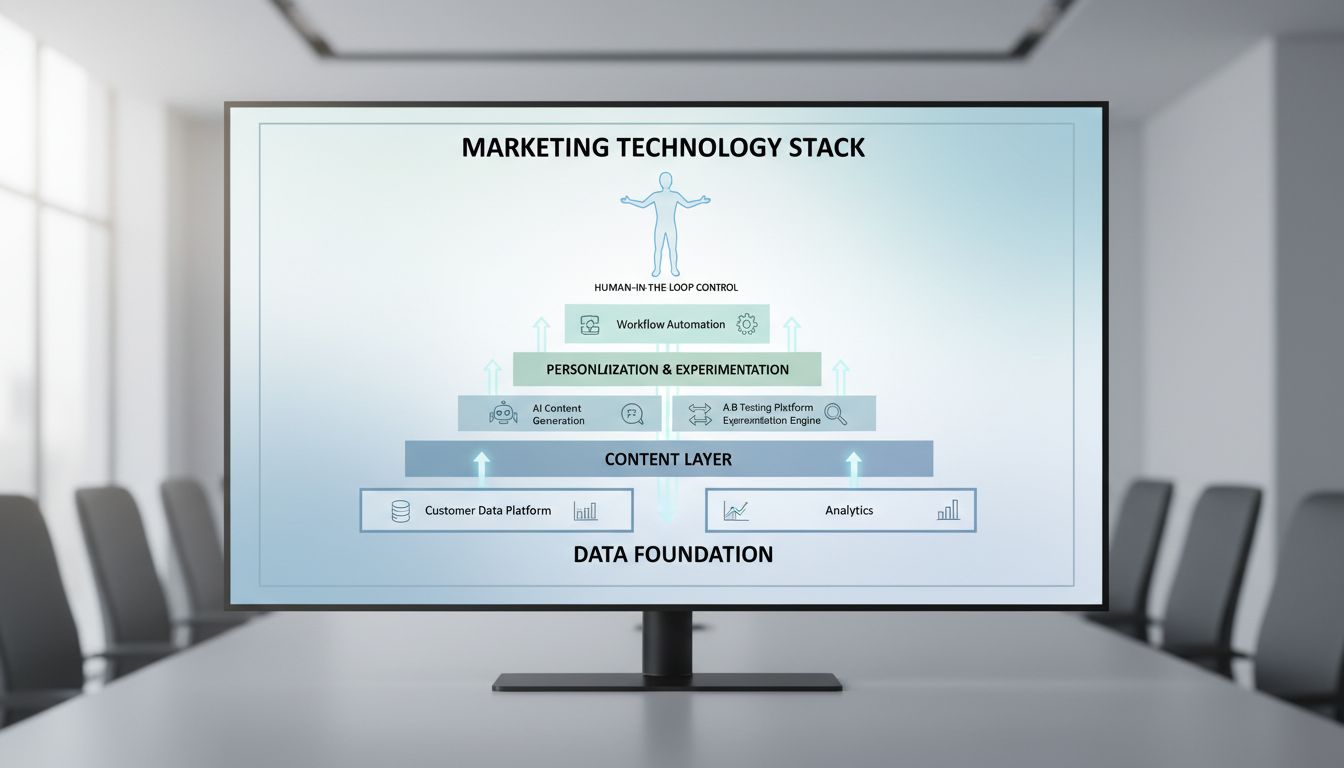

Step 2: Architecting Your Stack – The 5-Layer Model for AI Marketing

Get the architecture wrong and no tool will save you.

The biggest mistake I see teams make is buying tools that solve for the same layer. You end up with bloat, integration headaches, and three platforms doing the same job badly. What you need is a model where each part of your stack has one distinct job.

Think of it as five layers, where data flows upward and orchestration coordinates across.

Layer 1: Data Infrastructure. Your single source of truth. Not glamorous, but this is where ROI actually lives. It includes your Customer Data Platform (CDP), data warehouse, and clean rooms, which together collect, clean, and unify first-party data from every touchpoint.

Without clean data, your AI tools are expensive guesswork. DigitalApplied's research found that organizations missing this "pristine data" pillar see 60%+ lower automation ROI.

Layer 2: Content Creation & Optimization. This is where generative AI lives. Tools like Jasper, ChatGPT, and headless CMS platforms (Sanity, Contentful) operate here: ideation, drafting, and SEO optimization against Clearscope or Surfer.

The thing most people miss: this layer should output structured, tagged content ready for the layers above. Not just a published blog post.

Layer 3: Distribution & Advertising. This layer executes. Email service providers, social schedulers (Buffer, Sprout Social), ad platforms (Google Ads, Amazon DSP). AI here optimizes bidding, generates ad creatives (Google's Asset Studio), schedules posts.

Feed it the right creative briefs and audience segments from Layer 1. That part matters.

Layer 4: Personalization & Experimentation. Here, AI decides what to show to whom. Platforms like Bloomreach Engagement (with its Loomi AI) or Optimizely use Layer 1 data to personalize web experiences, emails, and offers in real time. This is also where you run A/B and multivariate tests.

Layer 5: Orchestration & Analytics. The control plane. Workflow tools (Zapier, n8n) and analytics platforms (GA4, Looker Studio) monitor performance, trigger cross-channel actions, and measure ROI.

It answers one question: "Is this whole system actually working?"

The power comes from keeping those layers separate. A lot of digital marketing platforms market themselves as "all-in-one", and what that usually means is they conflate Layers 2, 3, and 4 and lock you in. A modular stack lets you swap the best tool for each job as things change.

Your orchestration layer (5) is the glue. It's what makes a collection of point solutions act like one coherent system.
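One way to make the five-layer model concrete is to map every tool from your audit onto it and let a script point out the overlaps and gaps. This is a sketch under stated assumptions: the layer assignments are judgment calls you make yourself, and "AllInOne Suite" is a hypothetical product standing in for any all-in-one platform.

```python
from collections import defaultdict

LAYERS = {
    1: "Data Infrastructure",
    2: "Content Creation & Optimization",
    3: "Distribution & Advertising",
    4: "Personalization & Experimentation",
    5: "Orchestration & Analytics",
}


def audit_layers(tool_layers):
    """tool_layers maps a tool name to the set of layer numbers it serves."""
    by_layer = defaultdict(list)
    for tool, layers in tool_layers.items():
        for layer in layers:
            by_layer[layer].append(tool)

    # Layers served by more than one tool: candidates for consolidation
    overlaps = {LAYERS[n]: sorted(tools)
                for n, tools in by_layer.items() if len(tools) > 1}
    # Layers nothing serves
    gaps = [LAYERS[n] for n in LAYERS if n not in by_layer]
    # Tools spanning several layers: the lock-in risk described above
    multi_layer = sorted(t for t, layers in tool_layers.items() if len(layers) > 1)
    return overlaps, gaps, multi_layer


# Illustrative stack, not a recommendation
stack = {
    "Jasper": {2},
    "ChatGPT": {2},
    "Buffer": {3},
    "AllInOne Suite": {2, 3, 4},  # hypothetical product
    "GA4": {5},
}

overlaps, gaps, multi_layer = audit_layers(stack)
print(overlaps, gaps, multi_layer)
```

In this example the script surfaces three tools competing on Layer 2 and nothing at all on Layer 1, which is exactly the pattern I see most often.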

Step 3: The Comprehensive Evaluation Framework – Beyond the Feature Checklist

You have your blueprint. Now the trap is evaluating tools like a marketer checking boxes, when you need to evaluate them like an engineer assessing a critical dependency. Forget glossy demos. Start with business value, then get forensic.

Business Value Assessment: Prove It, Don't Just Promise It

Every vendor will cite a Forrester TEI study showing triple-digit ROI. Bloomreach claims 251% in theirs; Jasper's shows 342%. Useful benchmarks, but they're vendor best-case scenarios.

Your job is to define your Time to Value (TTV). How many weeks from signature to a measurable output? For a content tool, that might mean the time to generate, review, and publish a pilot post that actually drives traffic.

Run a 30-day proof of concept with a concrete KPI: "Reduce first-draft time for case studies by 60%" or "Increase email open rate for Segment A by 5%." Also look hard at the vendor's public roadmap. If you need A/B testing and their next 12 months are all about social media integrations, that's a misalignment you'll feel later.
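Both numbers, Time to Value and the proof-of-concept KPI, are simple arithmetic, so there's no excuse for leaving them fuzzy. A small sketch follows; the dates and draft times are invented for illustration, and the helper names are my own.

```python
from datetime import date


def weeks_to_value(signed_on, first_measurable_output):
    """Time to Value: weeks from contract signature to first measurable output."""
    return (first_measurable_output - signed_on).days / 7


def reduction_pct(baseline, pilot):
    """Percentage reduction of the pilot value relative to the baseline."""
    return 100.0 * (baseline - pilot) / baseline


def kpi_met(baseline, pilot, target_reduction_pct):
    return reduction_pct(baseline, pilot) >= target_reduction_pct


# Illustrative pilot: first-draft time for a case study, in minutes
ttv_weeks = weeks_to_value(date(2026, 5, 4), date(2026, 6, 1))
draft_reduction = reduction_pct(baseline=300, pilot=108)
passed = kpi_met(baseline=300, pilot=108, target_reduction_pct=60)
print(ttv_weeks, draft_reduction, passed)
```

Measure the baseline before the pilot starts. If you only measure afterwards, you're comparing the tool against a guess.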

The Technical Due Diligence Checklist (The Questions Most Buyers Miss)

This is where you separate platforms from toys. Every tool on your shortlist gets this checklist.

- Data Governance & Privacy: Where is customer data processed and stored? Can you enforce EU-only processing? What's the data deletion workflow? If they use your data for model training, can you opt out?

- Model Provenance & Security: Which base model do they use (GPT-4, Claude, Llama)? Do they provide LLM-specific penetration test results, not just a generic ISO 27001 certificate? Ask for their policy on training data transparency.

- API-First Architecture & Integration: Are webhooks available for real-time alerts? What are the rate limits and SLAs for the API? Download their SDK and try a basic integration. Is the documentation accurate, or a mess of outdated examples?

- Explainability & Telemetry: Can you trace why an AI made a recommendation? Do you get hallucination rates or confidence scores for content outputs? Without this, you're debugging a black box.

- Scalability & Cost Controls: Is pricing based on seats, usage, or both? Watch for usage-based traps where costs explode with success. Test cold-start latency: does the first query each morning take 8 seconds?
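The usage-based pricing trap from the last checklist item is easiest to see with numbers. The sketch below compares a seat-based plan with a usage-based plan at today's volume and at 10x. Every price and volume here is hypothetical; plug in the figures from the vendor's actual quote.

```python
def monthly_cost(seats, units, seat_price=0, unit_price=0, included_units=0):
    """Monthly bill for a plan that charges per seat, per usage unit, or both."""
    billable_units = max(0, units - included_units)
    return seats * seat_price + billable_units * unit_price


# Hypothetical plans; one "unit" = 1,000 generated words
def seat_plan(seats, units):
    return monthly_cost(seats, units, seat_price=60)


def usage_plan(seats, units):
    return monthly_cost(seats, units, unit_price=2)


# Today: 5 seats, 100 units. After growth: 10 seats, 1,000 units (10x content volume)
today = {"seat": seat_plan(5, 100), "usage": usage_plan(5, 100)}
at_10x = {"seat": seat_plan(10, 1000), "usage": usage_plan(10, 1000)}
print(today, at_10x)
```

In this made-up case the usage plan is cheaper today and more than three times the price at scale. Neither model is wrong, but you want to know which curve you're signing up for.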

Quality, Compliance, and The Non-Negotiable Human Role

Adopt the 3 C's of AI as your quality lens: Context (does the output understand your audience?), Consistency (does it match your brand voice every time?), and Control (can you easily correct it?).

Use automated evaluation tools like DeepEval for metrics like semantic similarity and factual consistency, but never skip human review. Hybrid workflows consistently outperform AI-only for SEO and credibility.

Compliance is Now a Feature, Not a Footnote

The regulatory environment is moving fast. The FTC requires clear disclosure when AI substantially modifies advertising content. More critically, the European Commission's draft Code of Practice on AI content transparency, published in December 2025, introduces machine-readable marking and labelling obligations starting August 2, 2026.

Every digital marketing platform you evaluate needs to be able to tag AI-generated content appropriately. This isn't future-proofing. It's basic procurement due diligence.
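To test whether a platform can carry this kind of tag, it helps to have a concrete payload in hand. The sketch below attaches a machine-readable disclosure record to a piece of content. To be clear about the assumptions: the field names are hypothetical and are not taken from the EU draft Code of Practice or any published standard, so treat this as a placeholder shape until the final requirements are clear.

```python
import json
from datetime import datetime, timezone


def label_ai_content(body, model, human_reviewed, generated_at=None):
    """Wrap content with a machine-readable AI disclosure record.

    The "ai_disclosure" schema is hypothetical, for illustration only.
    """
    generated_at = generated_at or datetime.now(timezone.utc)
    return {
        "body": body,
        "ai_disclosure": {
            "ai_generated": True,
            "model": model,
            "human_reviewed": human_reviewed,
            "generated_at": generated_at.isoformat(),
        },
    }


record = label_ai_content(
    body="Draft product announcement",
    model="example-model-v1",  # placeholder model name
    human_reviewed=True,
    generated_at=datetime(2026, 4, 24, 9, 0, tzinfo=timezone.utc),
)
payload = json.dumps(record, sort_keys=True)
print(payload)
```

During a pilot, push a record like this through the candidate tool and check whether the disclosure fields come out the other side intact.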

Synthesise: The 4 Pillars for Final Selection

Weigh every tool against four pillars. Capability: Does it actually solve the problem? Compliance: Is it secure, private, and transparent? Cost: Is the pricing model sustainable at 10x scale? Coupling: How much vendor lock-in does this create?

A tool that scores 10/10 on capability but fails on coupling is long-term technical debt. You want a high score across all four, not a perfect score on one.
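If you score each pillar from 1 to 10, a plain average hides exactly the problem described above, because a 10 on capability can mask a 2 on coupling. One option, and this is my own assumption rather than a standard method, is to rank by the weakest pillar first and use the average only as a tie-breaker. Scores below are illustrative, with a higher coupling score meaning less lock-in.

```python
PILLARS = ("capability", "compliance", "cost", "coupling")


def pillar_rank_key(scores):
    """Sort key: weakest pillar first, then the average across all four."""
    values = [scores[p] for p in PILLARS]
    return (min(values), sum(values) / len(values))


# Illustrative scores on a 1-10 scale (higher coupling score = less lock-in)
candidates = {
    "Tool A": {"capability": 10, "compliance": 7, "cost": 6, "coupling": 2},
    "Tool B": {"capability": 7, "compliance": 8, "cost": 7, "coupling": 7},
}

ranked = sorted(candidates, key=lambda name: pillar_rank_key(candidates[name]),
                reverse=True)
print(ranked)
```

Tool B wins here despite the lower capability score, because nothing about it is a liability.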

Step 4: Tactical Selection and Piloting – From Shortlist to Proof of Concept

You have a scored shortlist. Now you move from theory to practice. But don't buy anything yet.

Run a structured pilot first.

For each functional layer in your blueprint, pick 2-3 candidates. Mix established vendors with one challenger. I usually shortlist one "safe" enterprise option, one modern API-first tool, and one open-source alternative. Score them against your four pillars: Capability, Compliance, Cost, and Coupling.

A tool scoring 9/10 on features but requiring proprietary data formats is a future integration nightmare.

Design a Conclusive 30-Day Pilot

Your pilot has to prove business value, not just technical function. Define success metrics upfront that tie directly to your goals from Step 1.

If the goal is "reduce content production time by 40%," track exactly that. Measure content velocity, engagement rates, cost per qualified asset, and model accuracy scores. Use automated evaluation tools like DeepEval or Galileo to track hallucination rates and instruction adherence over time.

Stress-test the APIs. Build a simple integration between the candidate tool and your CRM or CMS.

If their "robust API" breaks when you try to pass a custom metadata field, you've just saved a six-month implementation headache. This is where most marketing teams get burned, assuming integration will be simple.
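The custom-metadata test is worth scripting so you can rerun it against every candidate. Below is a minimal round-trip check. `FakeCmsClient` is a stand-in I've invented so the example runs on its own; in a real pilot you'd wrap the vendor's actual SDK or HTTP API behind the same two methods, `create` and `get`, which are also hypothetical names.

```python
def lost_fields(sent, received):
    """Names of fields whose values did not survive the round trip."""
    return sorted(k for k, v in sent.items() if received.get(k) != v)


class FakeCmsClient:
    """Hypothetical stand-in for a vendor client that silently drops unknown fields."""

    KNOWN_FIELDS = {"title", "body"}

    def __init__(self):
        self._store = {}

    def create(self, record):
        record_id = str(len(self._store) + 1)
        self._store[record_id] = {k: v for k, v in record.items()
                                  if k in self.KNOWN_FIELDS}
        return record_id

    def get(self, record_id):
        return dict(self._store[record_id])


def round_trip(client, record):
    """Write a record, read it back, and report what went missing."""
    record_id = client.create(record)
    return lost_fields(record, client.get(record_id))


dropped = round_trip(FakeCmsClient(), {
    "title": "Pilot post",
    "body": "First draft",
    "campaign_code": "Q2-PILOT",  # the custom metadata field under test
})
print(dropped)
```

An empty list means the field survived. Anything else is a conversation to have with the vendor before you sign.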

Negotiate with Implementation in Mind

Before signing, negotiate three key contract terms beyond price.

First, explicit data ownership: you must retain all rights to your inputs, outputs, and any fine-tuned models. Second, SLAs for model performance, not just uptime. If the vendor's LLM accuracy degrades after an update, what's your recourse?

Third, clear exit clauses and data export functionality. How do you get your brand voice configurations and workflow data out if you switch?

Plan for the implementation lift using the 10-20-70 rule for AI. Roughly 10% of the effort goes to the algorithms and models themselves, 20% to the technology, data, and integration work, and 70% to change management and team training.

The best tool fails if your team doesn't adopt it.

Apply the 70-20-10 Budget Rule

Allocate your stack budget across all the digital marketing platforms you're evaluating with this split: 70% to proven, core layers like your data foundation and content generation. 20% to emerging, high-potential areas like agentic orchestration platforms.

Reserve 10% for true experimental bets: a new video generation model, a niche personalisation engine, whatever you're curious about. This keeps you from overspending on unproven tech while still leaving room to explore.

Your pilot results should dictate which category a tool lands in.
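The split itself is trivial arithmetic, but writing it down stops the experimental bucket from quietly growing. A small sketch, assuming a hypothetical annual stack budget of 50,000 in whole currency units; the bucket names are my own shorthand for the three categories above.

```python
def split_budget(total, shares_pct):
    """Split a whole-number budget by percentage shares that must sum to 100.

    Uses integer division, so any rounding remainder is left unallocated.
    """
    if sum(shares_pct.values()) != 100:
        raise ValueError("shares must add up to 100")
    return {name: total * pct // 100 for name, pct in shares_pct.items()}


STACK_SPLIT = {"core": 70, "emerging": 20, "experimental": 10}

allocation = split_budget(50_000, STACK_SPLIT)  # hypothetical budget
print(allocation)
```

The same helper works for the 10-20-70 implementation split if you pass different shares.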

Common Pitfalls and How to Steer Clear of Them

Even with a solid blueprint and evaluation framework, teams still stumble on the same predictable mistakes. Here are the five most costly ones I've seen.

Pitfall 1: The Single-Vendor Mirage

The sales pitch sounds great. Seamless integration, one vendor to call when things break. The reality is lock-in, slower innovation, and paying for features you'll never touch.

Architect for a "loosely coupled" system instead. Best-of-breed tools connected via APIs or your data warehouse give you the flexibility to swap components when something better comes along, without rebuilding everything.

Pitfall 2: Neglecting the Data Foundation

You can't build a skyscraper on sand. Organizations missing solid data foundations see 60%+ lower automation ROI. That's not a rounding error.

Governance means clear schemas for your customer data, lineage tracking so you know where every data point came from, and automated freshness checks. Skip this and your AI tools are making decisions on stale, inconsistent information. (ask me how I know)
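Schema and freshness checks don't need heavy tooling to get started. Here's a minimal sketch of both on a single customer record. The required fields, the `source` field as a crude stand-in for lineage, and the 30-day freshness window are all assumptions for illustration; your own schema will differ.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical minimal schema: field name -> expected type
REQUIRED_FIELDS = {
    "customer_id": str,
    "email": str,
    "source": str,          # where the record came from (crude lineage)
    "updated_at": datetime,
}


def validate_record(record, now, max_age=timedelta(days=30)):
    """Return a list of problems found in one customer record."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(record.get(name), expected_type):
            problems.append(f"bad or missing {name}")
    updated = record.get("updated_at")
    if isinstance(updated, datetime) and now - updated > max_age:
        problems.append("stale")
    return problems


now = datetime(2026, 4, 24, tzinfo=timezone.utc)
fresh = {"customer_id": "c-1", "email": "a@example.com", "source": "web_form",
         "updated_at": datetime(2026, 4, 20, tzinfo=timezone.utc)}
neglected = {"customer_id": "c-2", "email": "b@example.com",
             "updated_at": datetime(2026, 1, 5, tzinfo=timezone.utc)}

fresh_problems = validate_record(fresh, now)
neglected_problems = validate_record(neglected, now)
print(fresh_problems, neglected_problems)
```

Run something like this on a schedule against a sample of records and you'll know about data rot before your personalization engine does.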

Pitfall 3: Full Automation Fallacy

The hype says AI can run your marketing on its own. The case studies say hybrid AI-human workflows consistently outperform AI-only approaches for SEO rankings and quality.

Keep the human checkpoints. A strategist reviews the campaign brief. An editor handles final polish on generated copy. A compliance officer signs off on regulated content. AI handles scale. Humans provide judgment. That division isn't optional.
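Those checkpoints are easy to enforce in code as a publish gate. A sketch, assuming approvals are recorded as a set of role names; the role labels simply mirror the three checkpoints above and the function name is my own.

```python
BASE_APPROVALS = ("strategist", "editor")


def can_publish(approvals, regulated):
    """Return (allowed, missing_roles) for a piece of AI-assisted content."""
    required = set(BASE_APPROVALS)
    if regulated:
        required.add("compliance")  # compliance sign-off only for regulated content
    missing = required - set(approvals)
    return (not missing, sorted(missing))


standard_post = can_publish({"strategist", "editor"}, regulated=False)
regulated_post = can_publish({"editor"}, regulated=True)
print(standard_post, regulated_post)
```

If the gate lives in the pipeline rather than in someone's memory, skipping review stops being the path of least resistance.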

Pitfall 4: The "Set and Forget" Automation

Workflows built on APIs are brittle. Vendors update endpoints, rate limits change, model outputs drift. An automation that worked perfectly three months ago might be silently failing right now.

Simple fix: audit every orchestrated workflow monthly. Check error logs, validate output samples against a quality benchmark, confirm integrations are still healthy.
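The monthly audit can start as a script over your run logs. The sketch below flags workflows with too many failed runs and, separately, workflows that "succeed" while producing output that fails your quality benchmark, which is the silent-failure case. The `RunLog` shape and both thresholds are assumptions; adapt them to whatever your orchestration tool exports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RunLog:
    workflow: str
    succeeded: bool                 # did the run complete without an error?
    output_passed_benchmark: bool   # did a sampled output pass the quality check?


def audit_workflows(runs, max_error_rate=0.02, min_pass_rate=0.9):
    """Return {workflow: [issues]} for workflows that need attention."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run.workflow].append(run)

    findings = {}
    for name, items in grouped.items():
        issues = []
        ok_runs = [r for r in items if r.succeeded]
        if (len(items) - len(ok_runs)) / len(items) > max_error_rate:
            issues.append("error rate")
        if ok_runs:
            passed = sum(r.output_passed_benchmark for r in ok_runs)
            if passed / len(ok_runs) < min_pass_rate:
                issues.append("output quality")  # runs fine, output is wrong
        if issues:
            findings[name] = issues
    return findings


# Illustrative month of logs
runs = (
    [RunLog("crm-sync", True, True)] * 50
    + [RunLog("blog-publish", True, True)] * 17
    + [RunLog("blog-publish", False, False)] * 3
    + [RunLog("social-captions", True, True)] * 6
    + [RunLog("social-captions", True, False)] * 4
)

findings = audit_workflows(runs)
print(findings)
```

The second check matters most. Error logs catch the loud failures; only output sampling catches the quiet ones.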

Pitfall 5: Ignoring Content Originality and Licensing

This is the legal and reputational landmine that teams consistently underestimate.

Never assume AI-generated content is original or licensed for commercial use. Models are trained on copyrighted material, and outputs can inadvertently replicate protected text or images.

Treat it as an unsolved problem. Any content creation tool in your stack either needs robust built-in originality checks, or you integrate a standalone service like Copyleaks or Originality.ai. The FTC and emerging EU regulations mean you're accountable for what you publish, full stop.

Future-Proofing Your Stack: Trends and Adaptive Strategies

Your AI marketing stack isn't a one-time purchase. It's a living system.

The goal isn't to predict every future trend perfectly. It's to build a modular architecture that can absorb them without a full rebuild. Here's where I'd focus.

The Rise of Agentic AI and Multi-Agent Orchestration

We're moving from single-purpose tools to goal-oriented agentic AI. Think autonomous agents that chain tasks: research a topic, draft a blog post, generate supporting visuals, schedule it for publication.

Gartner predicts broad adoption of agentic AI for one-to-one interactions by 2028. Your stack needs strong APIs and agent frameworks (like LangChain or CrewAI) to compose these workflows, not just consume pre-built features.

Deepening Regulatory Scrutiny and the Need for Explainability

Regulation is moving beyond simple disclosure. The EU's draft Code of Practice on AI content transparency, with obligations starting August 2026, will require machine-readable markings and audit trails.

Future-proof by choosing tools that offer explainability: clear logs of what data was used, which model generated an output, why certain decisions were made. This isn't just compliance. It's how you diagnose failures before they become brand safety problems.

The Permanent Shift to Hybrid Human-AI Workflows

The hybrid model is here to stay for strategic work. The human role shifts from creator to editor, trainer, strategist.

Case data shows hybrid AI-human workflows outperform AI-only approaches for SEO rankings and cost efficiency across digital marketing platforms. Build for this by ensuring your tools have human-in-the-loop review stages and version control, not just fully automated pipelines.

Budgeting with the 30% Rule for AI

A practical guideline: allocate roughly 30% of your marketing technology budget to AI-specific capabilities and their ongoing governance.

This covers the tools, yes. But also evaluation frameworks, security audits, compliance checks, and the internal expertise needed to manage all of it. Think of it as investing in adaptability, not just features.

The most future-proof stack is loosely coupled, API-first, and built on clean data.

Choose platforms that publish their roadmaps and have a track record of integrating new AI capabilities. Not the ones selling a closed, "finished" system.

Putting It All Together: A Starter Stack for Different Scenarios

Theory is useful, but here are concrete examples. Three starter stacks, based on everything we've covered.

Scenario A: The Bootstrapped B2B Startup (Low Domain Authority, Lean Team)

Your priority is proving ROI with minimal budget and minimal technical overhead. Don't try to automate everything. Start focused and manual.

- Content & Creation Layer: Use ChatGPT Plus for rapid ideation and first drafts. Pair it with the free tier of Grammarly for tone and quality checks. For ads, the free Google Ads Asset Studio or Amazon Asset Studio are surprisingly capable for basic creatives.

- Data & System of Record: Your CRM (HubSpot, Pipedrive, whatever you're using) is your single source of truth. Keep it clean.

- Orchestration: Manual for now. Use native integrations where they exist, but skip complex Zapier automations until you've nailed the human workflow first. Your stack cost should be under £100/month.

The goal here isn't full automation. It's giving a small team enough leverage to punch above its weight without creating technical debt.

Scenario B: The Scaling SaaS Company (Growing Team, Established Workflows)

You have product-market fit and need to scale content and personalisation systematically. Integration and data governance become the hard part.

- Content Layer: Move to something like Jasper or Writer for brand-consistent, scalable content generation. Their Forrester TEI studies show customers achieving ROI over 300%, but verify that against your own numbers before you celebrate.

- Data Foundation: Implement a Customer Data Platform (Segment or mParticle) to create a unified customer profile. Without this, AI personalisation is mostly guesswork.

- Experimentation & Personalisation: Optimizely for A/B testing and multi-armed bandit optimisation. Their 2025 benchmark showed users run 78.7% more experiments [Source: Optimizely].

- Orchestration: Zapier or Make to connect your CMS, CDP, and analytics. Document every workflow. This is exactly where automation brittleness creeps in.

At this stage you're building a system, not just picking tools. Formalise data governance and assign ownership for each layer before it gets messy.

Scenario C: The Enterprise with Complex Compliance Needs

Your stack decisions are governed by security, legal, and regulatory requirements (GDPR, ISO 27001). Vendor due diligence comes first, everything else second.

- Prioritise Enterprise-Grade Vendors: Platforms like Adobe Experience Manager with Sensei AI or Bloomreach Engagement come with security certifications, on-premise/hybrid deployment options, and full audit trails. Bloomreach users achieved a 251% ROI in a Forrester TEI study [Source: Bloomreach].

- DAM & Governance: Invest in an enterprise Digital Asset Management platform (e.g., Aprimo) with AI-powered tagging and strict permission controls.

- Avoid Lock-in: Insist on open APIs and clear data portability clauses in contracts. Your orchestration layer might need to be custom-built to meet specific compliance workflows.

If you're just getting started and wondering where to land first: pick one high-impact use case in a single layer. AI for social caption ideation, or email subject line generation. Master that before you attempt anything resembling a full agentic stack.

Prove the value in one corner of your marketing stack. Then expand. Methodically.

Conclusion

Picking your AI marketing stack is an architectural decision, not a feature checklist. The data backs this up: most marketers now use AI tools daily, but automation ROI drops 60%+ for teams missing the core pillars like clean data [Source: DigitalApplied]. The underlying system matters more than any individual tool.

Avoid vendor lock-in by prioritising open APIs and modular design. The vendor TEI studies showing 251%-342% returns are compelling, but only if you can actually replicate those results in your own environment [Source: Bloomreach, Jasper].

Measure everything through Time to Value and real ROI. Not vendor promises.

Digital marketing platforms will keep evolving. A stack built on clear layers, governed data, and human oversight will adapt. One that isn't, won't.

Start by auditing what you have. Map every tool to a functional layer, document your integration points, and be honest about the gaps. The goal isn't to buy the most tools.

It's to build the most effective system.

Frequently Asked Questions

What are the top 5 digital marketing platforms?

Forget chasing specific brands. The "top" digital marketing platforms change every year, so think in categories instead.

In 2026, the five that matter are: AI-powered content creation and optimization (Jasper or Writer), data infrastructure and analytics (CDPs, Google Analytics), personalization and experimentation engines (Optimizely or Bloomreach), multi-channel distribution and ad tech with native AI (Google Ads Asset Studio), and orchestration and workflow automation (Zapier or agentic frameworks). [Source: grandviewresearch.com]

Which combination is right? Depends entirely on your existing stack and what you're actually trying to do.

Which platform is best for digital marketing?

"Best" doesn't mean anything without context.

A platform built for a large enterprise with a dedicated data engineering team would be an expensive, over-engineered mess for a 10-person startup. The best platform is the one that solves your specific problems, integrates cleanly with what you already have, and delivers measurable ROI within your budget.

Use the evaluation framework in this guide. Define your requirements, run controlled pilots, and let your own data decide. Not the vendor's marketing.

What is the 3 3 3 rule in marketing?

It's a resource allocation heuristic, mostly to stop you from rushing into poorly measured tech purchases.

Roughly 30% of your time and budget goes to researching and planning your stack strategy, 30% to building and implementing the tools you've chosen, and 30% to measuring and analyzing the results. The last 10% is a buffer for the inevitable surprises.

The point is that you have a clear measurement plan before you spend anything.

What is the 10 20 70 rule for AI?

Popularized by Boston Consulting Group (BCG): 10% of your AI effort goes into algorithm development, 20% into technology and data infrastructure, and 70% into business process integration, change management, and upskilling your team. [Source: cloudsecurityalliance.org]

For marketing, that means most of your investment should go into weaving AI tools into human workflows and training your people.

Not just buying the shiniest new software.

What are the 5 things AI cannot do?

Human oversight isn't optional here. AI can't develop truly novel brand strategy or creative vision, understand nuanced cultural context and empathy at a human level, make ethical judgment calls without pre-defined human guardrails, assume responsibility for compliance and legal risks, or replace human relationships in high-touch B2B sales.

Your stack design needs explicit human-in-the-loop checkpoints for all of these.

What are the 3 C's of AI?

A useful lens for evaluating AI-generated marketing content.

Context: Does the output actually fit the audience, campaign goal, and brand moment? Consistency: Does it maintain brand voice, factual accuracy, and quality across all outputs over time? Control: Do you have the governance tools (approval workflows, brand guardrails, editing interfaces) to direct and correct the AI's work?

If you can't answer yes to all three, you're not ready to deploy.