April 20th, 2026

Who Owns AI-Generated Content? Copyright Laws and SEO Implications

Warren Day

You're deploying AI to scale content, but one question keeps eating at the strategy: can you actually own what the machine produces? The AI content creation market is worth $2.5 billion [Source: datamintelligence.com], but without clear ownership, your SEO investment doesn't have a foundation to stand on.

For technical founders and ops leads, the answer to "who owns AI-generated content" dictates everything downstream.

Here's the short version: purely AI-generated text falls into the public domain. Anyone can copy it. But add meaningful human contribution through editing, arrangement, or selection, and you cross into copyrightable territory. That's not just a legal box to check. It's the difference between thin, penalized content and material that actually builds domain authority.

Lasting search equity requires copyrightable content, and that's only achievable through a hybrid workflow that documents human creative input in a systematic way. One that satisfies both legal authorities and search algorithms.

What follows covers the specific thresholds for human authorship, how search engines detect and evaluate AI content, and how to structure your content pipeline to clear both bars. Concrete workflows, not abstract principles.

Who Owns AI-Generated Content? The Legal Framework Explained

So who actually owns AI-generated content? The legal answer isn't a grey area. It's binary.

Under current U.S. guidance, copyright requires human authorship. No human author, no copyright. That content goes straight into the public domain, where anyone can copy, modify, or republish it without your permission.

The leading case is Thaler v. Perlmutter (2025), where a federal appeals court upheld the U.S. Copyright Office's refusal to register a work created solely by an AI. The court affirmed that copyright law protects only "the fruits of intellectual labor" that are "founded in the creative powers of the mind" of a human (Source: nysba.org). The Copyright Office's 2025 report on AI says the same thing: meaningful human contribution is the threshold.

For a practical answer to "who owns AI-generated content":

- Solely AI-generated content (no meaningful human input): No one owns the copyright. It's in the public domain. You cannot stop others from using it.

- AI-assisted content (with meaningful human creative contribution): The human author(s) own the copyright for their original contributions. The AI is a tool, like a camera or a word processor.

The distinction comes down to that phrase "meaningful human contribution." It's not about whether you used AI. It's about what you actually did with the output. Editing a draft, arranging outputs into a structure you designed, integrating AI material into a larger piece you authored, all of that can constitute the creative input you need.

This thinking is spreading globally too. The EU maintains a human originality requirement. China's Beijing Internet Court ruled in 2023 that a user who crafted a detailed prompt and settings for a Stable Diffusion image owned the copyright, not the platform developer, which recognizes creative input at the prompt level.

An earlier case that shows how this plays out for text is Shenzhen Tencent v. Shanghai Yingxun (2019). A Chinese court held that financial articles generated by Tencent's "Dreamwriter" AI were protectable because the company's engineers made creative selections in designing the system's templates, data inputs, and triggering conditions. Copyright vested in Tencent because of the human intellectual input behind the system, not because of anything the AI did.

For your content operations, this means ownership isn't automatic. Prompting ChatGPT and hitting publish creates a public domain asset with zero defensible intellectual property. The legal framework forces you to build workflows that document and substantiate human creative control.

Do You Own AI-Generated Content? It Depends on Your Input

The answer is simple: you own it if you've made a meaningful human contribution.

That's not a vague standard. It's the actual legal threshold that determines whether your work is copyrightable or sits in the public domain. The U.S. Copyright Office's 2025 report is clear: editing, arranging, selecting AI output, or integrating it into a larger human-authored work can satisfy the originality requirement.

Think of AI like a camera. You own the photograph if you composed the shot, chose the lighting, and edited the final image. You don't own a random snapshot taken by a robot on a timer. Your ownership claim hinges on your creative decisions after the AI generates its initial output.

Here's what counts as sufficient contribution in practice:

- Creative selection and arrangement: You take raw AI-generated paragraphs and structure them into a unique narrative flow, adding section headers and transitions that weren't in the original output.

- Substantive editing that adds new expression: You rewrite introductions, add proprietary data or case studies from your experience, and inject original analysis that transforms generic points into specific insights.

- Integration into a larger human-authored work: You use AI to draft a technical explanation, then embed it within a broader article where you provide the strategic framework, conclusions, and practical recommendations based on your expertise.

Platform Terms of Service are a separate concern. They govern your use of the tool, not necessarily the final copyright of the output you create with it. Always review them, though: some ToS grant the platform broad licenses to use your prompts and outputs.

For formal protection, you must disclose AI use in your copyright application. This isn't optional.

The U.S. Copyright Office requires you to identify and disclaim AI-generated material. Failure to do so can lead to registration cancellation and statutory fines up to $2,500 [Source: U.S. Copyright Office]. This disclosure directly informs the practical workflow we'll discuss later.

One more thing: the "30% rule" is a myth. There's no legal or algorithmic threshold where changing X percent of words magically grants ownership. Chinese courts have recognized copyright when users crafted detailed prompts and parameters for Stable Diffusion images, focusing on creative input rather than word counts. Ownership depends on the nature of your contribution, your creative judgment, not a percentage score.

The SEO Reality: Algorithms Detect and Judge AI Content

Google's official line is that they care about quality, not origin. "We reward high-quality content, however it's produced." Technically true. But it's a semantic game.

The quality signals Google's algorithms are trained to detect are summed up as E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness), and they are fundamentally human attributes. Content that lacks them gets filtered out, regardless of who or what wrote the words.

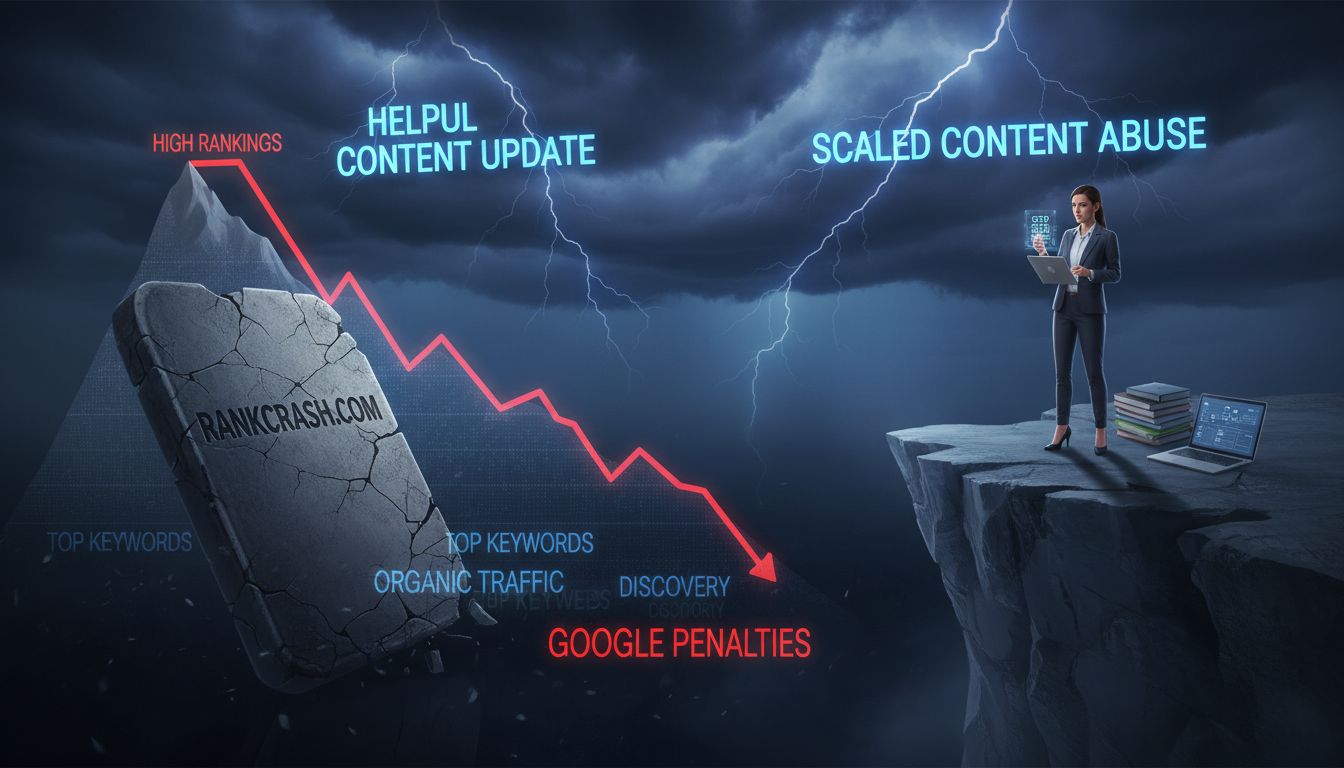

The penalties are explicit. The Helpful Content Update specifically targets content created for search engines rather than people. The "scaled content abuse" policy goes after mass-produced, low-value content intended to manipulate rankings.

The result isn't a gentle nudge down the SERPs. It's a cliff. One e-commerce blog publishing unedited AI-generated product reviews saw a 40% organic traffic drop after the 2024 update. That's business-ending for most sites.

From an engineering perspective, detection isn't just about an "AI classifier." It's about spotting statistical fingerprints that correlate with low-quality, templated output, things like perplexity (how predictable the text is) and burstiness (variation in sentence structure).

Pure AI text often has unnaturally uniform sentence length and lexical density. Add in template repetition across pages, zero citation trails to primary sources, and a backlink profile that's either non-existent or full of spammy links from automated link-building schemes, and the pattern is obvious.
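To make "burstiness" concrete, here is a minimal sketch that scores variation in sentence length. It is an illustrative proxy only: the function names are hypothetical, the sentence splitter is naive, and nothing here reflects how any search engine actually measures text.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    0.0 means every sentence has the same number of words; higher values
    mean more variation. This is a rough proxy, not a detector.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool is fast. The plan is cheap. The team is small."
varied = (
    "It broke. We spent three weeks tracing the regression through "
    "every deploy before finding it. Lesson learned."
)
print(burstiness(uniform), burstiness(varied))
```

A score near zero across many pages of a site is the kind of uniformity described above; a human edit pass usually raises it without anyone trying.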

Here's the variable most guides miss: Domain Authority (DA/DR).

A low-DR site like a new SaaS startup has zero margin for error. Google's crawl budget is limited, so it samples your site and extrapolates quality. Find a cluster of thin, templated pages and it may deprioritize crawling your entire domain.

High-DR legacy media sites have more leeway. Their established trust acts as a buffer. But they face catastrophic risk if they trigger a manual "scaled abuse" penalty, which can lead to site-wide de-indexing.

The February 2025 SE Ranking experiment is the cautionary tale here. Researchers built twenty websites populated entirely with AI-generated pages. They initially ranked for long-tail keywords, so yes, pure AI content can technically rank.

Then, within weeks, all sites lost every keyword position as Google's systems identified the pattern. Short-term boost, then the floor collapses.

Building Spectre, my own SEO platform, forces me to confront this daily. The gap between a keyword's "difficulty" score in Ahrefs and the realistic ranking potential for a low-DA site is vast.

You might target a keyword with a difficulty score of "10," thinking it's easy. But if the top ten results all have DR 70+ and you're sitting at DR 15, your AI-generated page has almost no chance, regardless of how optimized it is.
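The DR gap described above can be checked before a keyword goes into the plan. This is a minimal sketch under stated assumptions: the function names and the `max_gap` threshold of 20 points are hypothetical, and the DR values would come from whatever SEO tool you already use.

```python
import statistics


def dr_gap(own_dr: int, serp_drs: list[int]) -> float:
    """Median DR of the current top results minus your own DR."""
    return statistics.median(serp_drs) - own_dr


def is_realistic_target(own_dr: int, serp_drs: list[int], max_gap: float = 20) -> bool:
    """Treat a keyword as realistic only if the DR gap is within max_gap.

    max_gap is an assumed, tunable threshold, not an industry standard.
    """
    return dr_gap(own_dr, serp_drs) <= max_gap


# The scenario from the text: a DR 15 site against a SERP of DR 70+ pages.
top_ten = [70, 72, 75, 78, 80, 81, 84, 88, 90, 92]
print(dr_gap(15, top_ten), is_realistic_target(15, top_ten))
```

The point of the filter is to stop a low keyword-difficulty score from hiding a trust gap that content alone won't close.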

The ROI calculation for AI content falls apart if you don't factor in the domain authority moat. You're not competing on content quality alone. You're competing on trust equity you don't have. And the question of who owns AI-generated content becomes almost academic if nobody's finding it in the first place.

A Hybrid Workflow: Designing for Ownership and Rankings

The fix isn't avoiding AI. It's building your content pipeline so human creative input gets documented at every step.

I call this "Creative Input Logging." The goal is audit trails that satisfy both copyright examiners and search algorithms looking for E-E-A-T signals. Here's the five-step checklist I use:

Step 1: Human-Defined Intent

Before any AI touches a piece, a human defines the topic, angle, target audience, and commercial intent. This isn't just SEO strategy; it's the foundational creative decision that establishes authorship. Log it as structured metadata in your CMS, for example: author_intent: "Explain hybrid workflows to technical founders concerned about copyright and low DA".
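One way to capture that intent is as a small, timestamped record serialized to JSON. This is a sketch, not a CMS integration: the `IntentRecord` class and every field name other than `author_intent` are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class IntentRecord:
    """Human-defined intent, logged before any AI drafting starts."""

    author: str
    topic: str
    audience: str
    commercial_intent: str
    author_intent: str
    # UTC timestamp so the record shows the decision predates the draft.
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


record = IntentRecord(
    author="Warren Day",
    topic="Ownership of AI-generated content",
    audience="Technical founders and ops leads",
    commercial_intent="Informational",  # hypothetical value
    author_intent=(
        "Explain hybrid workflows to technical founders "
        "concerned about copyright and low DA"
    ),
)
print(json.dumps(asdict(record), indent=2))
```

Storing the record alongside the draft, rather than in a separate tool, keeps the audit trail attached to the piece it describes.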

Step 2: Human-Curated Inputs

Feed the AI with human-researched data. Use the Ahrefs API to pull competitor SERP structures, or DataForSEO to analyse featured snippets and "People also ask" patterns. That curated research becomes part of your creative contribution. A sample prompt structure I use in Spectre:

Background: [Human-written analysis of 3 top-ranking articles]

Data points: [SERP features present, word count range, header structure from Ahrefs]

Unique angle: [Human-defined differentiation based on gap analysis]
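The three-part structure above can be assembled programmatically so that a draft never starts without human-written inputs. This is a minimal sketch; `build_prompt` is a hypothetical helper, not part of Spectre or any API.

```python
def build_prompt(background: str, data_points: list[str], unique_angle: str) -> str:
    """Assemble the three-part prompt, refusing to run on empty human inputs."""
    if not background.strip():
        raise ValueError("background must be a human-written analysis")
    if not data_points:
        raise ValueError("data_points must contain at least one item")
    if not unique_angle.strip():
        raise ValueError("unique_angle must be defined by a human")
    return "\n".join(
        [
            f"Background: {background}",
            "",
            f"Data points: {'; '.join(data_points)}",
            "",
            f"Unique angle: {unique_angle}",
        ]
    )


prompt = build_prompt(
    background="Top three articles cover the law but skip workflow design.",
    data_points=["Featured snippet present", "H2-heavy structure"],
    unique_angle="Tie copyright thresholds to documented editing steps.",
)
print(prompt)
```

Failing loudly on a missing field is deliberate: an empty "Unique angle" is exactly the case where the output would have no human differentiation to document.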

Step 3: AI-Assisted Drafting

Let the LLM generate a draft from your structured inputs. Document exactly which model and version you used, and save the full prompt and completion. This matters for copyright disclosure requirements, not just good practice.
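A generation log can be as simple as one dictionary per draft. The sketch below assumes you already have the prompt and completion as strings; the function name, field names, and the model identifiers in the example are placeholders, not real product names.

```python
import hashlib
from datetime import datetime, timezone


def generation_record(model: str, model_version: str, prompt: str, completion: str) -> dict:
    """Record what was generated, by which model, and when.

    The SHA-256 digests let you later show that the stored prompt and
    completion have not been altered since they were logged.
    """
    return {
        "model": model,
        "model_version": model_version,
        "prompt": prompt,
        "completion": completion,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "completion_sha256": hashlib.sha256(completion.encode("utf-8")).hexdigest(),
        "generated_at": datetime.now(timezone.utc).isoformat(),
    }


rec = generation_record(
    model="example-model",      # placeholder
    model_version="2026-01",    # placeholder
    prompt="Background: ...",
    completion="Draft text returned by the model.",
)
print(rec["model"], rec["completion_sha256"][:12])
```

Keeping the untouched completion also gives you the baseline you need to identify which parts of the final piece are AI-generated when you disclose.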

Step 4: Substantive Human Editing & Expertise Injection

This is where the question of who owns AI-generated content actually gets answered, and where E-E-A-T gets built. Don't just tweak sentences. Add:

- Personal anecdotes or case studies from your experience

- Original data or analysis (even simple spreadsheet calculations)

- Counterarguments or nuanced perspectives the AI missed

- Specific tool recommendations with "why" explanations

Track these edits in version control. A sample Git commit message:

Added: Personal case study from Spectre platform development

Added: Original analysis of DR vs. ranking correlation

Modified: AI-generated tool list to reflect hands-on experience

Enhanced: "Gotcha" section about API rate limits
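If several editors contribute, a small helper keeps those commit messages consistent. This is a sketch under assumptions: `build_commit_message` is hypothetical, and the "Removed" label is an addition that does not appear in the sample above.

```python
# Labels from the sample message, plus "Removed" as an assumed extra.
ALLOWED_KINDS = ("Added", "Modified", "Enhanced", "Removed")


def build_commit_message(subject: str, edits: list[tuple[str, str]]) -> str:
    """Format human edits as a Git commit message: subject, blank line, body."""
    body_lines = []
    for kind, description in edits:
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown edit kind: {kind!r}")
        body_lines.append(f"{kind}: {description}")
    return "\n".join([subject, "", *body_lines])


message = build_commit_message(
    "Human edit pass on AI draft",
    [
        ("Added", "Personal case study from Spectre platform development"),
        ("Added", "Original analysis of DR vs. ranking correlation"),
        ("Modified", "AI-generated tool list to reflect hands-on experience"),
    ],
)
print(message)
```

The resulting string can be passed to `git commit -F -` on standard input or pasted into any Git client.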

Step 5: Human Final Review & Publishing

A human approves the final version, checking voice consistency and factual accuracy. That final creative judgment completes the authorship chain.

Technical Implementation for Provenance

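For images, use tools with built-in provenance. Parameter experiments such as Midjourney's --weird may help show creative input, though prompts and settings on their own may not meet the U.S. authorship threshold. Attach C2PA Content Credentials metadata, or use generators that apply Google's SynthID watermarking, to establish clear origin trails.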

Implement the IndexNow protocol to notify participating search engines, such as Bing and Yandex, as soon as a page changes. This is especially important when you're updating AI-assisted content frequently. That freshness signal matters more when you're competing against established domains.
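An IndexNow submission is a single JSON POST. The sketch below builds the request without sending it; the host, key, and URL are placeholders, and it assumes the key file is hosted at the site root as `<key>.txt`.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow bulk-submission request."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file lives at the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


req = build_indexnow_request(
    host="www.example.com",            # placeholder host
    key="hypothetical-key-123",        # placeholder key
    urls=["https://www.example.com/blog/ai-content-ownership"],
)
# To submit for real (network call, not executed here):
# urllib.request.urlopen(req)
print(req.get_method(), req.full_url)
```

Triggering this from the CMS publish hook, rather than on a schedule, means each human edit pass is announced the moment it goes live.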

Monitoring and Iteration

Don't publish and hope. Use SE Ranking to track how your hybrid content performs against pure AI or pure human pieces. Check Bing's AI Performance Dashboard to see if your content gets cited in AI-generated answers. Google's AI Overviews are a growing visibility channel in their own right, appearing on ~30% of US desktop keyword results by September 2025.

Where Pure AI Works Fine

Some SEO tasks don't need copyright protection at all. Use AI for:

- Generating schema markup variations

- Clustering keywords by semantic similarity

- Creating internal linking suggestions based on topic modeling

- Drafting meta descriptions (then human-tweak for click-through)
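As an example of a task from this list that needs no copyright protection, here is a minimal keyword-clustering sketch. It uses token overlap (Jaccard similarity) as a crude stand-in for semantic similarity; a production version would more likely use embeddings. The function names and the 0.5 threshold are assumptions.

```python
def tokens(keyword: str) -> set[str]:
    """Lowercased set of words in a keyword phrase."""
    return set(keyword.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_keywords(keywords: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy clustering: join the first cluster whose seed keyword is similar enough."""
    clusters: list[list[str]] = []
    for kw in keywords:
        for cluster in clusters:
            if jaccard(tokens(kw), tokens(cluster[0])) >= threshold:
                cluster.append(kw)
                break
        else:
            # No existing cluster matched, so this keyword seeds a new one.
            clusters.append([kw])
    return clusters


keywords = [
    "ai content copyright",
    "copyright ai content law",
    "crm software pricing",
    "crm software pricing plans",
]
print(cluster_keywords(keywords))
```

Because the result depends on keyword order and on the seed of each cluster, treat the output as a first pass for a human to review rather than a final grouping.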

Every piece that needs long-term SEO equity gets the five-step process. Everything else gets automated aggressively.

That's not about slowing down. It's about building assets you actually own, instead of content that disappears the next time an algorithm update runs.

Navigating Pitfalls: From ToS Traps to Duplicate Content

Building a hybrid workflow solves the core problem, but you'll still hit landmines if you're not careful. Here are the specific mistakes I've seen teams make when scaling AI content.

Don't assume platform terms grant you ownership. Read OpenAI's, Midjourney's, or Claude's Terms of Service. Some grant broad licenses for commercial use, but others have restrictions on volume, redistribution, or moral rights. If you're building a content product you plan to sell or license, verify the ToS allows it first.

Publishing unedited AI drafts at scale is a direct trigger for Google's "scaled content abuse" filters. The SE Ranking experiment from February 2025 is the case study here: twenty sites with fully AI-generated pages initially ranked, then lost all keyword positions within weeks.

Google's algorithms now detect patterns of templated, unoriginal text. Your hybrid workflow means nothing if you skip the human review step the moment a deadline hits.

Duplicate content isn't just about copying from competitors. When thousands of users prompt ChatGPT with "write a 500-word blog post about CRM software," the outputs share alarming structural and phrasing similarities.

Search engines can detect this low-entropy, mass-produced text. Your "unique" AI article might be nearly identical to hundreds of others published that same day.
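You can run a rough near-duplicate check of your own before publishing. The sketch below compares two texts by their overlapping three-word shingles; it is a common textbook technique, not a description of how any search engine works, and the function names are hypothetical.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping k-word sequences in the lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i : i + k]) for i in range(len(words) - k + 1)}


def similarity(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of the two shingle sets (0.0 to 1.0)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


draft_a = "CRM software helps teams manage customer relationships and close deals faster"
draft_b = "CRM software helps teams manage customer relationships and track every deal"
print(similarity(draft_a, draft_b))
```

Comparing a new draft against your own published pages catches template repetition across your site; it cannot tell you how close the draft is to what other people generated from a similar prompt.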

On the input side, there's a looming infringement risk. 92% of surveyed authors reported publishers had not asked to license their works for AI training. The legal battles over training data are ongoing, and the question of who owns AI-generated content gets even messier when the model itself was trained on unlicensed work.

This is especially acute for visual content, where AI image generators can produce outputs that are derivative of specific artists' styles.

Finally, be meticulous with copyright registration disclosures. The U.S. Copyright Office requires you to identify AI-generated portions. Failing to disclose isn't a harmless oversight. It's grounds for cancellation of your registration and statutory fines up to $2,500.

If you want to own it, you have to properly claim it.

The Future: AI Overviews, Provenance, and Evolving Standards

Search is shifting from a list of links to a generated answer engine. AI Overviews now show up for roughly 30% of US desktop queries. That's not a prediction. It's already happening, and it's already reshaping traffic.

When Google's AI answers a query directly, it cites sources. The algorithm is looking for authoritative content to pull into its answer.

Your content isn't just competing for page one anymore. It's competing to be the raw material for the answer itself. That requires verifiable expertise and clear sourcing, signals that are inherently stronger when there's documented human contribution behind the work.

This connects to the next thing worth watching: digital provenance. Standards like C2PA and Google's SynthID embed invisible metadata and watermarks into AI-generated media.

This isn't just for catching deepfakes. It's a technical protocol for establishing chain of custody, proving where content came from and who touched it. In a market flooded with indistinguishable AI outputs, verifiable provenance is a real differentiator. It's also the technical backbone for proving the "meaningful human contribution" that copyright law actually requires.
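The chain-of-custody idea can be illustrated with a hash-chained edit log, where each entry commits to the one before it. This is a conceptual sketch only: it is not C2PA or SynthID, and the function and field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # prev_hash used by the first entry


def _digest(value: str) -> str:
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def add_entry(chain: list[dict], actor: str, action: str, content: str) -> list[dict]:
    """Append an entry that records who did what and links to the previous entry."""
    body = {
        "actor": actor,
        "action": action,
        "content_sha256": _digest(content),
        "prev_hash": chain[-1]["entry_hash"] if chain else GENESIS,
    }
    entry_hash = _digest(json.dumps(body, sort_keys=True))
    chain.append({**body, "entry_hash": entry_hash})
    return chain


def verify(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev:
            return False
        if _digest(json.dumps(body, sort_keys=True)) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


log: list[dict] = []
add_entry(log, actor="example-model", action="draft", content="AI draft text")
add_entry(log, actor="Warren Day", action="substantive edit", content="Edited text")
print(verify(log))
```

A log like this shows the order in which the machine and the human touched the text, which is the same question a provenance standard answers with signed metadata.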

Legislative pressure is building too. The proposed federal COPIED Act would set standards for content provenance and digital watermarking, and would restrict using provenance-marked content for AI training without consent. State bills are targeting deepfakes and AI risk. The direction is obvious: regulators want transparency about origin and ownership, and the question of who owns AI-generated content is only going to draw more scrutiny.

Your hybrid workflow isn't just good SEO practice for right now. It's architecture for what's coming.

When you document your human input, your expert prompts, your editorial revisions, your data synthesis, you're not just clearing the copyright bar. You're building the verifiable sourcing that generative search will cite and that provenance standards will authenticate.

The machine writes the draft. Your documented creative control is what makes it ownable, rankable, and durable.

Important Context: AI Tool Ownership vs. Content Ownership

Who owns the company that built the tool has nothing to do with who owns what the tool produces.

This trips people up because related questions show up together in search results. People ask "who owns 50% of OpenAI?" or "does Elon Musk fund OpenAI?" Those are questions about corporate equity and investment. They have no bearing on who owns the AI-generated content that you actually create.

OpenAI has a complex ownership structure involving employees, investors, and Microsoft. No single person owns 50%. Elon Musk was a co-founder but isn't a current funder. And whatever stake or role any executive holds, Sam Altman included, it gives them no claim on the content you generate.

None of that affects your copyright.

Your legal rights come down to your use of the tool and the creative input you put in. That's it. Whether you're using ChatGPT, Claude, or an open-source model, the rule is the same: corporate ownership of the tool and copyright in its output are separate legal domains entirely.

Focus your documentation on your creative process. Not the vendor's cap table.

Conclusion

The question of who owns AI-generated content isn't abstract. It's what determines whether your content has any lasting SEO value.

Without copyright protection, your work falls into the public domain. Zero defensible search equity. The $2.5 billion market for AI content tools [Source: datamintelligence.com] runs on a simple binary: meaningful human contribution grants ownership, pure AI output doesn't.

The fix is a hybrid, documented workflow where human creativity directs AI execution. That satisfies the U.S. Copyright Office's threshold for authorship and produces the quality signals Google rewards. Your site's Domain Authority also matters here: lower-DR sites need more intensive human input to get past algorithmic skepticism.

And this approach holds up over time. It aligns with provenance standards like C2PA, fits where E-E-A-T is heading, and accounts for the reality that AI Overviews now appear for roughly 30% of US desktop searches [Source: seoclarity.net].

Ownership isn't just a legal checkbox. It's the foundation of sustainable organic growth.

Your next step: Audit your current content pipeline. Map your process against the hybrid workflow checklist. Start implementing "creative input logging" in your CMS. For specific legal advice on copyright registration, talk to an intellectual property attorney.

Frequently Asked Questions

Who owns the content legally if AI writes your content?

Under current U.S. copyright law, purely AI-generated content falls into the public domain. Nobody owns it [Source: copyrightalliance.org].

But if you bring meaningful human creative input (detailed prompting, editing, arranging, or weaving AI output into a larger human-authored work), you may qualify as the legal author. The question is whether your contribution clears the originality threshold. The Shenzhen Tencent v. Shanghai Yingxun case is a useful reference here: creative selection and arrangement were enough to secure copyright.

Do I own AI-generated content?

Not automatically. AI output needs "meaningful human contribution" before copyright kicks in.

That means going beyond a basic prompt. Editing, fact-checking, adding original examples, structuring the piece in a way that reflects actual creative decisions, that's the kind of input that matters. Without it, the content sits in the public domain and anyone can use it. Including your competitors.

What is the 30% rule for AI?

There is no 30% rule. Not in copyright law, not from Google. It's a myth that keeps circulating.

Legal protection comes down to the nature and quality of your human creative contribution, not some word-change percentage. Search engines look at content holistically, not through edit ratios. Focus on putting in real human input, not hitting an arbitrary number.

What are the 5 things AI cannot do?

For content that's both ownable and rankable, AI can't do any of the following: have genuine personal experience (the first "E" in E-E-A-T) or expertise, possess original intent or a real creative vision, conduct original research or interviews, build actual personal authority, or take legal responsibility for accuracy or ownership claims.

Those gaps are exactly where human input isn't optional, for copyright and for SEO.

Who owns 50% of OpenAI?

Nobody does. OpenAI has a complex ownership structure involving employees, investors like Microsoft, and other stakeholders. No single entity holds 50%.

And none of that has any bearing on who owns AI-generated content. Your rights to content made with ChatGPT depend entirely on your human creative contribution, not on how OpenAI is structured.

Does Elon Musk fund OpenAI?

Elon Musk co-founded OpenAI and was an early funder, but he's not involved in its current funding or operations. Microsoft's multi-billion dollar partnership is the more relevant relationship now.

Either way, none of this affects who owns AI-generated content you create with their tools. That comes down to one thing: your creative input.