March 9th, 2026

Beyond the Google SEO Starter Guide: A 2026 AI-First Strategy for SaaS

Warren Day

You've read the Google SEO Starter Guide, set up your blog, and maybe even seen some traffic. But your organic growth has stalled, and this new 'AI SEO' thing feels like another layer of complexity you don't have time for.

Here's what actually changed: AI assistants now intercept your potential customers before they click through to your site. ChatGPT logged over 637,000 sessions to SaaS sites in a single year. AI Overviews slashed traditional organic click-through rates by 61%. The traffic you spent months building is evaporating, not because your content is bad, but because the game shifted from ranking for clicks to being cited by AI agents.

For SaaS companies in 2026, effective SEO means shifting from a keyword-ranking playbook to an AI-first framework. That means technical crawlability for AI agents, entity optimization for citations, and tracking conversion lifts instead of just traffic from AI referrals.

Before you write off SEO entirely, look at this: AI-referred traffic converts at 14.2% compared to just 2.8% for traditional Google organic. SaaS brands are seeing conversion rates six times higher from LLM-driven search. The opportunity didn't disappear. It moved. Your buyers are still searching; they're just getting answers from ChatGPT, Perplexity, and Google's AI Overviews instead of your landing pages.

This guide walks you through a practical, three-phase AI-First SEO Framework built for SaaS teams with limited resources. You'll learn how to do SEO for your website step by step in the AI era: auditing your site's visibility to AI crawlers, optimizing content and entities for citations, and scaling operations while measuring conversions rather than vanity metrics. No hype, no magic solutions. Just a clear strategy to reclaim growth in a fundamentally different search landscape.

The SEO Game Has Changed: From Clicks to Citations

The Google SEO Starter Guide taught you a playbook that worked brilliantly in 2015. Rank in the top three blue links, optimize your title tags, watch click-through rates climb. You built content around keywords, earned backlinks, and traffic flowed predictably into your funnel.

That world is disappearing faster than most SaaS founders realize.

In 2026, most searches never result in a click. AI Overviews now appear on 59% of informational queries and 19% of commercial queries. When someone asks "best project management software for remote teams," they're not scrolling through ten blue links anymore. ChatGPT, Perplexity, and Google's AI Overview synthesize an answer on the spot, pulling from sources they trust. If your brand isn't cited in that answer, you don't exist in the buyer's consideration set.

This isn't a future trend. From November 2024 to December 2025, SaaS sites logged 774,331 LLM sessions. ChatGPT alone accounted for 82.3% of that traffic. Your prospects are already using AI agents to research solutions, compare features, and shortlist vendors before they ever visit a traditional search result.

Here's what makes this shift existential for SaaS growth: AI-referred traffic doesn't just visit differently. It converts differently. One e-commerce analysis found AI-referred traffic converting at 14.2% versus 2.8% for standard organic visitors. These aren't casual browsers who bounce after skimming your hero section. They're qualified buyers who arrive with intent because an AI agent pre-screened your solution as relevant.

The old SEO playbook optimized for clicks. The new one optimizes for citations, appearing as the authoritative source when AI agents answer your prospect's questions. Rankings still matter, but only because they increase your likelihood of being cited. Traffic volume matters less than traffic quality and conversion lift.

SEO isn't dead. But if you're still chasing keyword positions without understanding how AI crawlers parse your site, you're optimizing for a game that's already over.

Phase 1: Audit – Why Your SaaS Site is Invisible to AI (And How to Fix It)

Here's the uncomfortable truth: your site might be beautifully designed, lightning-fast for human visitors, and ranking well in Google, yet completely invisible to the AI systems sending you the highest-converting traffic.

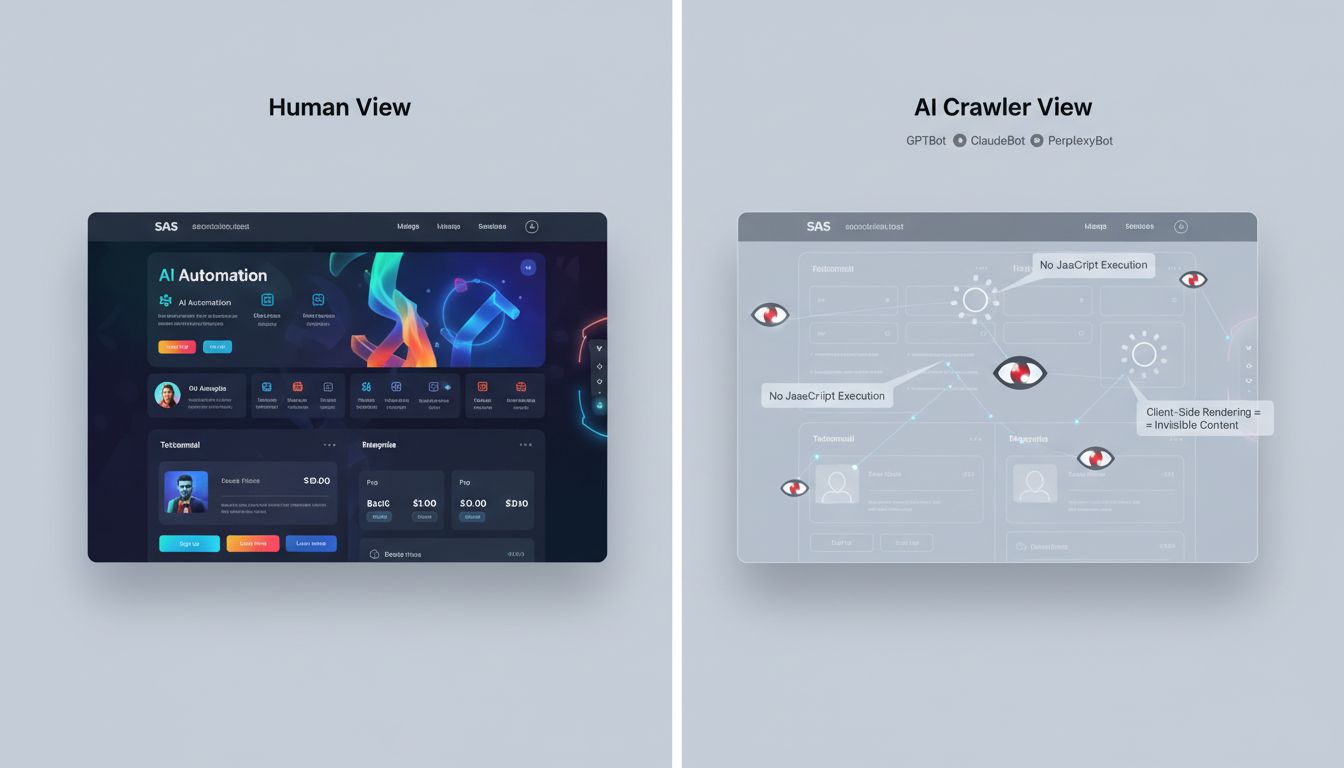

The problem isn't your content strategy or keyword targeting. It's that most AI crawlers don't execute JavaScript the way Googlebot does. GPTBot, ClaudeBot, and PerplexityBot hit your server, grab the raw HTML, and leave. If your product descriptions, pricing details, or feature comparisons load client-side via React or Vue.js, those bots see an empty shell.

You've essentially built an AI black hole.

Internal search pages, which often render dynamically, captured 41.4% of all SaaS AI traffic in recent studies, but only when the content was actually crawlable. If your implementation relies on client-side rendering without a fallback, you're forfeiting the single highest-penetration page type for LLM sessions. Not hypothetical. Happening right now.

The fix requires three layers, not one magic switch:

First, prioritize server-side rendering (SSR) or pre-rendering for your core conversion pages. SSR means your server sends fully-formed HTML to every visitor, bot or human. Pre-rendering generates static HTML snapshots at build time. Both approaches guarantee AI crawlers see your actual content, not a loading spinner.

Dynamic rendering, serving different HTML to bots versus users, sounds tempting, but Google has made clear it's a stopgap, not a strategy. Maintaining two versions of your site creates drift, introduces bugs, and still leaves newer AI crawlers in the cold if they don't identify themselves properly. You're creating more problems than you're solving.

Your immediate audit checklist:

Run a crawl using Screaming Frog (or any technical crawler) with rendering set to Text Only, which mimics a non-JavaScript bot. Export the URLs and content it discovers. Then run a second crawl with JavaScript rendering enabled. The delta between these two lists is your AI visibility gap.
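The comparison between the two crawls is a set difference. Here's a minimal sketch, assuming each crawl was exported as CSV with the URL in a column named `Address` (an assumption about the export format; change `url_column` to match your file):

```python
import csv
import io


def urls_from_csv(csv_text: str, url_column: str = "Address") -> set[str]:
    """Collect the URLs listed in one crawl export (column name is an assumption)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[url_column].strip() for row in reader if row.get(url_column)}


def visibility_gap(no_js_csv: str, rendered_csv: str) -> list[str]:
    """URLs discovered only when JavaScript runs, i.e. hidden from non-rendering bots."""
    return sorted(urls_from_csv(rendered_csv) - urls_from_csv(no_js_csv))
```

This compares URLs only. A page that exists in both crawls can still have its main content injected client-side, so it needs a page-level check as well.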

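Check your robots.txt file. OpenAI documents separate user agents: GPTBot collects training data, while OAI-SearchBot powers ChatGPT's search results. Blocking GPTBot alone protects your content from training; blocking OAI-SearchBot as well takes you out of ChatGPT's search answers. Decide deliberately how much data control you want, because every crawler you block is discoverability you give up.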
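One way to see which AI user agents your current rules admit is Python's standard-library robots.txt parser. This is a minimal sketch; the agent list and the sample rules are illustrative assumptions, not a complete inventory of AI crawlers.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list of AI crawler tokens; extend it for the agents you care about.
AI_AGENTS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "CCBot")


def ai_access(robots_txt: str, path: str = "/") -> dict[str, bool]:
    """Map each AI user agent to whether the given rules let it fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in AI_AGENTS}


# Hypothetical rules: opt out of GPTBot only, allow everyone else.
SAMPLE_RULES = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(ai_access(SAMPLE_RULES, "/pricing"))
```

Paste in your real robots.txt and your key conversion paths to confirm the result matches what you decided, not what a legacy file happens to say.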

Finally, verify your XML sitemap URLs match your post-rendering HTML. If your sitemap points to /product/feature but that page only exists after a JavaScript router runs, AI crawlers will hit a dead end.

Fix these three issues before you write another blog post. The best content in the world doesn't matter if the systems citing it never see it in the first place.

The 2026 AI-First SEO Framework: A 3-Phase Guide for SaaS

You're probably wondering how to do SEO for your website step by step when the traditional playbook keeps failing. The answer isn't another 47-point checklist. It's a prioritization framework built for how search actually works in 2026.

The AI-First SEO Framework has three phases: Audit → Optimize → Scale. Each phase builds on the last. Skipping ahead is how most SaaS teams waste months on content that never ranks.

Phase 1: Audit is where you fix what's broken. Can AI crawlers actually see your content? Does your site render properly for bots that don't execute JavaScript? If ChatGPT can't crawl your pricing page, optimizing your blog is pointless. Your technical foundation needs to work before anything else matters.

Phase 2: Optimize is where you build authority. You'll structure content for entity recognition, implement schema that helps AI systems understand what you do, and create the topical depth that earns citations in AI Overviews and LLM responses. This is where most teams want to start, but they shouldn't.

Phase 3: Scale is where you systematize. Set up AI-assisted content operations, implement RAG infrastructure if needed, and track the metrics that actually matter. Citation frequency and conversion lifts, not just traffic volume.

Here's the hard truth most agencies won't tell you: if your team has limited resources, you start with Phase 1. Always.

A technically broken site will never rank, no matter how good your content is. I've seen SaaS companies spend six months producing brilliant thought leadership while their JavaScript-heavy site remained completely invisible to GPTBot and ClaudeBot. All that effort, zero AI citations.

Let's break down exactly what to do in each phase.

Phase 1 Deep Dive: The Technical Foundation for AI Crawlers

Most SaaS sites fail the AI crawl test before a single word of content is read. Your brilliant copy, your product positioning, your carefully crafted CTAs? None of it matters if AI crawlers can't see it.

JavaScript is your first problem. Many AI crawlers (GPTBot, Claude, Perplexity) don't execute JavaScript. If your React or Vue app renders content client-side, those crawlers see an empty shell.

Here's what invisible content looks like to an AI crawler:

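```jsx
// ❌ This content is invisible to most AI crawlers:
// the list is filled in only after client-side JavaScript runs.
import { useEffect, useState } from 'react';

export default function Features() {
  const [features, setFeatures] = useState([]);

  useEffect(() => {
    setFeatures(['Real-time analytics', 'Custom dashboards', 'API access']);
  }, []);

  // The initial HTML contains an empty <ul>.
  return <ul>{features.map((f) => <li key={f}>{f}</li>)}</ul>;
}
```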

The fix? Server-side rendering or static generation.

In Next.js, use getServerSideProps or getStaticProps if you're on the Pages Router (App Router components already render on the server by default). In Nuxt, keep ssr: true, which is the default. If you're on a legacy SPA, pre-rendering services like Prerender.io work as a stopgap, but treat them as temporary. Dynamic rendering (serving different HTML to bots) is not a long-term solution. Google's guidelines allow it, but it's fragile and adds maintenance overhead. Bite the bullet and render on the server.

Core Web Vitals matter more than you think. A slow site doesn't just hurt user experience. It reduces conversions by 7% per second of delay and limits how much AI crawlers will index during their allocated crawl budget.

Your targets: LCP under 2.5 seconds, INP under 200 milliseconds, CLS under 0.1. Test with PageSpeed Insights, then fix the obvious wins. Compress images, eliminate render-blocking scripts, defer non-critical CSS.
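If you log these measurements over time, a small check keeps the targets in one place. This is a sketch only; the metric key names (`lcp_s`, `inp_ms`, `cls`) are hypothetical, and values exactly at a target are treated as passing.

```python
# Targets quoted above: LCP in seconds, INP in milliseconds, CLS unitless.
TARGETS = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}


def failing_vitals(measured: dict[str, float]) -> dict[str, float]:
    """Return only the metrics whose measured value exceeds its target."""
    return {
        name: value
        for name, value in measured.items()
        if name in TARGETS and value > TARGETS[name]
    }
```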

Schema markup is your direct line to AI understanding. While John Mueller confirmed in 2025 that structured data doesn't directly boost rankings, it absolutely influences AI citations and answer extraction. Think of schema as metadata that teaches AI what your page represents.

For SaaS products, use SoftwareApplication or WebApplication schema. Here's a complete example:

```json
{
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  "name": "YourProduct",
  "applicationCategory": "BusinessApplication",
  "offers": {
    "@type": "Offer",
    "price": "49.00",
    "priceCurrency": "USD",
    "priceValidUntil": "2026-12-31"
  },
  "operatingSystem": "Web-based",
  "description": "Clear 1-2 sentence description",
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.7",
    "reviewCount": "312"
  }
}
```

The key properties: applicationCategory tells AI what your software does, offers provides pricing context, and aggregateRating signals trust. Keep it accurate. Schema that doesn't match what's on the page gets ignored or penalized.

Validate every implementation with Google's Rich Results Test. Schema that throws errors is worse than no schema at all.

Internal link architecture is how AI maps your authority. A clean topic-cluster structure (pillar pages linked to 6-15 supporting articles) helps AI understand which pages are authoritative on which topics. Orphaned pages (zero internal links) are invisible. Run a Screaming Frog crawl to find and fix them.

Use descriptive anchor text. "Learn more" tells AI nothing. "How to reduce churn with cohort analysis" creates a semantic connection that AI can follow.
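Both checks, orphaned pages and generic anchors, reduce to simple passes over a list of internal links. Here's a minimal sketch, assuming you've exported your links as `(source, target, anchor_text)` tuples; that shape and the list of generic phrases are assumptions for illustration.

```python
# Hypothetical list of anchors that carry no topical meaning.
GENERIC_ANCHORS = {"learn more", "click here", "read more", "here"}

Link = tuple[str, str, str]  # (source URL, target URL, anchor text)


def find_orphans(pages: list[str], links: list[Link]) -> list[str]:
    """Pages that no other page links to (self-links don't count)."""
    linked = {target for source, target, _ in links if source != target}
    return sorted(set(pages) - linked)


def generic_anchor_links(links: list[Link]) -> list[Link]:
    """Links whose anchor text is a generic phrase."""
    return [link for link in links if link[2].strip().lower() in GENERIC_ANCHORS]
```

Note that the homepage shows up as an orphan unless something links back to it, so filter it out if that's expected on your site.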

Your technical audit toolkit: Screaming Frog for crawl analysis and JavaScript rendering checks, Google Rich Results Test for schema validation, and GA4 combined with PageSpeed Insights for performance monitoring.

Run these monthly, not once.

The technical foundation isn't glamorous, but it's the difference between being cited and being invisible. Get this right before you write another word of content.

Phase 2: Optimize – Content & Entity Strategy for GEO and Authority

Once your technical foundation is solid, you need to rethink how you create and structure content. The old playbook, pick a keyword, hit a word count, sprinkle in some headers, doesn't work when AI systems are deciding whether to cite you.

The 4 Pillars of SEO in 2026 aren't what you learned. Forget "on-page, off-page, technical, and content." The framework that drives AI citations is: Crawlability (Phase 1), Entity Authority, GEO, and Conversion Tracking. If AI doesn't understand what you are and why you matter, your content is just noise.

Here's the counterintuitive part: apply the 80/20 rule backward. Spend 80% of your effort on technical foundations and entity optimization, only 20% on traditional keyword targeting. Why? Because AI-referred traffic converts at 14.2% compared to 2.8% for Google organic. A single citation in ChatGPT or Perplexity is worth more than ranking #3 for a mid-volume keyword.

Entity Optimization: Teaching AI Who You Are

AI systems don't rank pages. They cite entities.

An entity is any distinct concept: your brand, your product, your CEO, even your methodology. If these entities are inconsistent or undefined, AI won't reference you.

Start by defining your core entities. For a SaaS company, that's typically your brand, your primary product(s), your category (like "vector database" or "sales enablement platform"), and any proprietary frameworks you've built. Create dedicated entity pages for each. A /what-is-[product-name] page isn't just SEO filler; it's a canonical reference point for AI systems. Use the same exact name, capitalization, and description everywhere: your site, LinkedIn, Crunchbase, G2, your API docs, even your robots.txt comments.

Add schema markup to every entity page. Use SoftwareApplication or WebApplication schema with properties like applicationCategory, offers, and aggregateRating. Link each entity page to 3-6 authoritative external sources (Wikipedia, industry reports, academic papers) that contextualize your space. This signals topical trust.

GEO Tactics: Structuring Content for Citation

Generative Engine Optimization is about making your content extractable. AI systems scan for clear, concise answers they can paraphrase or quote.

Every high-value page needs a 40-80 word "Quick Answer" or TL;DR at the very top, before any preamble. Write it like you're answering a voice query: direct, factual, jargon-free.

Structure the rest with question-based H2 and H3 headings. "What is [concept]?" "How does [feature] work?" "When should you use [approach]?" AI models parse headings as semantic signals. Clear questions increase citation likelihood.

Declare a primary entity in the opening paragraph and link to your entity page. Then reference 3-6 related authoritative entities (competitors, complementary tools, standards bodies) with outbound links. This isn't about "link juice"; it's about showing AI you understand the ecosystem.
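The structural rules in this section are easy to lint before publishing. This rough sketch checks the 40-80 word Quick Answer and the question-style headings; the function name and messages are illustrative, and it doesn't attempt to judge content quality.

```python
def geo_issues(quick_answer: str, headings: list[str]) -> list[str]:
    """List structural problems: Quick Answer length and non-question headings."""
    issues = []
    word_count = len(quick_answer.split())
    if not 40 <= word_count <= 80:
        issues.append(f"Quick Answer has {word_count} words; target is 40-80")
    for heading in headings:
        if not heading.strip().endswith("?"):
            issues.append(f"Heading is not phrased as a question: {heading!r}")
    return issues
```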

Optimizing High-Value Entry Points

Not all pages are equal in the AI era.

Internal search pages captured 41.4% of SaaS AI traffic, 8.7 times higher penetration than the site average. If your internal search results are thin or duplicate content, you're wasting your highest-leverage pages. Make each search result page unique with a brief editorial summary, structured filters, and FAQ schema answering common questions for that query. Treat them like landing pages, not database dumps.

Documentation and pricing pages also punch above their weight for LLM sessions. Docs need version-specific canonical URLs, synchronized dateModified timestamps, and zero marketing fluff. Pricing pages should lead with a comparison table (marked up with schema), a clear value proposition in the first 50 words, and FAQ schema covering objections.
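FAQ schema is repetitive enough to generate from your existing question-and-answer copy. Here's a minimal sketch that builds a schema.org `FAQPage` JSON-LD string; the function name is hypothetical, and the answers must match text that's visible on the page.

```python
import json


def faq_json_ld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)
```

Embed the output in a `<script type="application/ld+json">` tag in server-rendered HTML, then validate it the same way as your other schema.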

This isn't about writing more content. It's about making every page legible to the machines that are replacing Google as the front door to your product.

Phase 3: Scale – AI Operations, RAG, and Measurement

You've fixed your technical foundation and optimized your content for AI citations. Now comes the part where most SaaS teams either scale intelligently or drown in AI-generated mediocrity.

Scaling AI-first SEO isn't about cranking out more content. It's about building systems that maintain quality while expanding reach, and measuring the business outcomes that actually matter, not just traffic vanity metrics.

Can ChatGPT Do Your SEO?

Yes, but only if you treat it like a junior analyst who needs constant supervision.

ChatGPT excels at specific, bounded tasks: generating content briefs from SERP analysis, drafting meta descriptions in bulk, brainstorming technical audit checklists, or reformatting FAQ schema. It fails catastrophically at strategy, quality judgment, and anything requiring recent product knowledge or competitive context.

The teams seeing results use AI as a force multiplier within a governed workflow, not as a replacement for expertise. Define clear roles: AI drafts, humans direct and refine. Never publish AI output without adding proprietary insight, fact-checking claims, and injecting your actual product experience.

AI Content Governance: The Non-Negotiable Workflow

Here's the minimum viable governance framework that prevents AI slop from tanking your domain authority:

Human Brief (with target keyword, audience, angle, and required examples) → AI Draft → Human Edit (add case studies, contrarian takes, product-specific insights) → Fact-Check (verify every statistic and claim) → Schema Review → Publish → Audit Log.

Version control isn't optional. Use Git for documentation, track who approved what, and maintain a content quality scorecard. Set automated flags for AI-generated pages that underperform on engagement metrics within 30 days; those need human rewrites, not just tweaks.

The governance bottleneck is usually approval workflows. Assign one person (not a committee) as the SEO editor with final call authority. Committees produce beige content that AI systems ignore.
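The workflow above can be enforced in code so that no stage gets skipped and every sign-off is logged. This is a minimal sketch, assuming one record per content item; the class, stage names, and log fields are illustrative, not a prescribed tool.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Stages in the order described above; the audit log is the record of sign-offs.
STAGES = ("human_brief", "ai_draft", "human_edit", "fact_check", "schema_review", "publish")


@dataclass
class ContentItem:
    title: str
    audit_log: list = field(default_factory=list)

    @property
    def next_stage(self) -> Optional[str]:
        """The stage awaiting sign-off, or None once every stage is logged."""
        done = len(self.audit_log)
        return STAGES[done] if done < len(STAGES) else None

    def sign_off(self, stage: str, approver: str) -> None:
        """Record a completed stage; refuses to skip ahead or repeat a stage."""
        if stage != self.next_stage:
            raise ValueError(f"expected {self.next_stage!r}, got {stage!r}")
        self.audit_log.append({
            "stage": stage,
            "approver": approver,
            "at": datetime.now(timezone.utc).isoformat(),
        })
```

Trying to publish a draft that hasn't been fact-checked raises an error instead of quietly shipping, which is the point of the gate.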

Building a RAG System for Scale

If your SaaS has substantial documentation, a knowledge base, or product data that needs to surface in AI answers, you need Retrieval-Augmented Generation (RAG).

RAG works by splitting your content into chunks, converting each chunk into an embedding, storing those embeddings in a vector database, and retrieving the most relevant passages when a query comes in. The critical design choices: chunk size (respect your embedding model's maximum input length, which ranges from a few hundred tokens to several thousand depending on the model), retrieval strategy, and database infrastructure.
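Chunking is the step teams most often get wrong, so here is a minimal sketch of fixed-size chunks with overlap. It counts whitespace-separated words as a rough stand-in for tokens, which is an assumption; use your embedding model's own tokenizer for real limits.

```python
def chunk_text(text: str, max_words: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks that share `overlap` words with the previous one."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk. The default sizes are placeholders to tune against your own content.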

Hybrid search combining vector similarity (70%) with keyword matching (30%) consistently outperforms pure vector search. Multi-step retrieval, broad retrieve, rerank with a model like Cohere, then extract the passage, improves accuracy significantly. RAGLite with reranking hit 71.9% MAP@10 versus 65.8% without.
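The 70/30 blend can be sketched as a weighted sum of normalized scores. This is an illustration under stated assumptions: both score dictionaries come from your own vector and keyword retrievers, min-max normalization is one of several reasonable choices, and the reranking step is left out.

```python
def _min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to the 0-1 range so the two signals are comparable."""
    if not scores:
        return {}
    low, high = min(scores.values()), max(scores.values())
    if high == low:
        return {doc: 1.0 for doc in scores}
    return {doc: (value - low) / (high - low) for doc, value in scores.items()}


def hybrid_rank(
    vector_scores: dict[str, float],
    keyword_scores: dict[str, float],
    vector_weight: float = 0.7,
) -> list[tuple[str, float]]:
    """Fuse both signals; documents missing from one retriever score 0 for it."""
    vec = _min_max(vector_scores)
    kw = _min_max(keyword_scores)
    fused = {
        doc: vector_weight * vec.get(doc, 0.0) + (1 - vector_weight) * kw.get(doc, 0.0)
        for doc in set(vec) | set(kw)
    }
    # Highest fused score first; ties broken by document ID for stable output.
    return sorted(fused.items(), key=lambda item: (-item[1], item[0]))
```

In a multi-step pipeline, the top results from `hybrid_rank` would then go to a reranker before passage extraction.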

Vector database choice matters at scale. Self-hosted Qdrant on a $96/month DigitalOcean droplet handles 20 million vectors and 1.5 million monthly queries with zero per-query cost. Managed services like Pinecone are faster to deploy but cost $2,387 more per month at that scale. For early-stage SaaS, start managed and migrate to self-hosted when your vector count hits seven figures.

Measuring What Actually Matters

Stop obsessing over keyword rankings. In 2026, the metrics that correlate with revenue are AI visibility, citation frequency, and conversion lift from AI referrals.

Track AI Citations: Use Bing Webmaster Tools' AI Performance report to measure how often Copilot cites your brand (it tracks citations, not clicks). Amplitude AI Visibility quantifies your presence across ChatGPT, Perplexity, and Google AI Overviews. Monitor week-over-week changes and correlate spikes to specific content or schema updates.

Separate AI-Referral Conversions: Create a dedicated GA4 segment filtering traffic from ChatGPT, Perplexity, Claude, and Copilot referrers. AI-referred visitors convert at 14.2% versus 2.8% for traditional organic search, but you'll never see that lift if you lump everything into "organic."
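The same segmentation logic can run on exported session data. Here's a minimal sketch, assuming each session is a `(referrer_url, converted)` pair; the hostname list is an illustrative assumption and will need updating as assistants change domains.

```python
from urllib.parse import urlparse

# Illustrative referrer hostnames; not an exhaustive or permanent list.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str):
    """Return the AI assistant name for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def conversion_rates(sessions) -> dict[str, float]:
    """Conversion rate per segment from (referrer_url, converted) pairs."""
    totals, wins = {}, {}
    for referrer, converted in sessions:
        segment = classify_referrer(referrer) or "Other"
        totals[segment] = totals.get(segment, 0) + 1
        wins[segment] = wins.get(segment, 0) + int(converted)
    return {segment: wins[segment] / totals[segment] for segment in totals}
```

Some assistant traffic arrives with no referrer at all, so treat these numbers as a floor rather than a complete count.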

Set up conversion modeling in GA4 for cookieless environments. Use server-side tracking, UTM parameters on shareable links, and GA4's User-ID feature to maintain attribution when third-party cookies vanish.

The Scaling Mistake Everyone Makes: Running AI content operations without isolating AI-referral conversion tracking. You'll produce hundreds of pages, see traffic tick up, and have no idea whether AI citations are driving signups or just bounces. Measure citations and conversions separately from day one, or you're flying blind.

The Pragmatic 2026 AI-First SEO Tool Stack

You don't need every tool. You need the right tools for your current phase.

Most SaaS teams waste budget on overlapping platforms when a lean stack delivers better results. I've seen companies paying for five different SEO suites that all do keyword research while their technical foundation crumbles because nobody's actually crawling the site.

Start with a robust crawler. If your technical foundation is broken, no amount of content optimization will save you. Here's how to build a stack that maps directly to the Audit → Optimize → Scale framework:

| Tool | Primary Use Case | Best For | Cost Note |
|---|---|---|---|
| Screaming Frog | Technical audit (Phase 1) | Finding JS rendering issues, broken schema, redirect chains | Free up to 500 URLs; paid ~$259/year |
| JetOctopus | Large-scale crawling & log analysis | Sites with 100K+ pages; tracking AI crawler behavior | From $99/month |
| Ahrefs | Keyword research, backlink analysis, competitor intel | Teams that need research + link-building in one platform | From $129/month |
| Semrush | All-in-one SEO + early GEO monitoring | Mid-market teams wanting consolidated reporting | From $139/month |
| Amplitude AI Visibility | AI citation tracking (Phase 3) | Measuring brand presence in LLM responses | Custom pricing |
| Clearscope | Content optimization & grading | Writers who need LSI term guidance and quality scores | From $189/month |
| Surfer SEO | On-page optimization | Fast content briefs and real-time optimization feedback | From $89/month |
| Qdrant (self-hosted) | Vector database for RAG | Teams building custom AI search; handles 20M vectors for $96/month | Infrastructure cost only |
| Pinecone | Managed vector database | Teams that want zero DevOps overhead | Free tier; scales with usage |
| Cohere | Reranking for RAG retrieval | Improving RAG accuracy in Phase 3 operations | API pricing; pay per call |

Looking for a free digital marketing course with a certificate? Foundational courses cover SEO basics but rarely touch AI crawlability, entity optimization, or RAG infrastructure. Use them to learn fundamentals like how to do SEO for a website step by step, then apply the frameworks here for the AI-first reality.

The rule: Choose based on your bottleneck. Stuck in audit? Invest in crawling. Content underperforming? Add Clearscope. Scaling AI operations? Prioritize vector infrastructure and measurement tools like Amplitude AI Visibility.

The Google SEO Starter Guide won't tell you this, but your tool stack should evolve as you move through phases. Don't buy everything upfront. Add tools when the pain of not having them costs you more than the subscription.

5 Common AI-First SEO Mistakes That Cripple SaaS Growth

You've built the framework, but these five mistakes can undo months of work. Each one is common, fixable, and quietly killing your AI visibility.

1. Blocking AI Crawlers in robots.txt

You want ChatGPT to cite you, but you've blocked GPTBot in your robots.txt.

This happens constantly. Teams copy legacy robots.txt files or blanket-block crawlers they don't recognize. I've audited SaaS sites spending thousands on content while actively preventing the very systems they want to reach.

The Fix: Audit your robots.txt and user-agent rules. If you want AI visibility, allow GPTBot, OAI-SearchBot, CCBot (Common Crawl), and ClaudeBot. Blocking them shuts you out of training data, real-time citation, or both. You can't win a game you've opted out of.

2. The JavaScript Trap

Your site renders beautifully in Chrome. Googlebot's renderer handles it fine, so you assume you're good.

But many AI crawlers don't execute JavaScript. GPTBot, Perplexity, Claude... they see an empty shell where your product content should be. Your pricing page? Blank. Feature comparisons? Loading spinner. Product descriptions? Literally nothing.

The Fix: Run a curl test on your key pages (curl -A "GPTBot" yoursite.com/pricing). If you see blank content or loading spinners, move to server-side rendering or static pre-rendering. Dynamic rendering is a stopgap, not a solution.
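To run the same check across many pages, you can script it. This sketch fetches the raw HTML without executing JavaScript and reports which expected phrases are missing from the visible text. Passing "GPTBot" as the User-Agent only imitates the crawler's name, and the function names are illustrative.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _VisibleText(HTMLParser):
    """Collect text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def visible_text(html: str) -> str:
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.parts).split())


def missing_phrases(html: str, phrases: list[str]) -> list[str]:
    """Phrases that a non-rendering crawler would not find in the page text."""
    text = visible_text(html).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


def fetch_raw_html(url: str, user_agent: str = "GPTBot") -> str:
    """Download the page as served, with no JavaScript execution."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")
```

For example, `missing_phrases(fetch_raw_html("https://yoursite.com/pricing"), ["per month", "Enterprise"])` returns whichever pricing terms never made it into the server response.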

3. Structured Data Neglect

You added schema markup in 2023 and forgot about it.

Your product has evolved since then. New features, pricing tiers, integrations. But your SoftwareApplication schema still lists the old version. AI systems rely on this data to understand what you offer, and you're feeding them outdated information about a product that no longer exists.

The Fix: Tie schema updates to your product release cycle. When features ship, update your structured data. Use Google's Rich Results Test quarterly to catch drift before AI crawlers do.
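Drift is easier to catch if a release check compares published schema against your current product data. This is a rough sketch that pulls JSON-LD out of a page and diffs selected fields; the function names and the flat `expected` dictionary are assumptions for illustration.

```python
import json
from html.parser import HTMLParser


class _JsonLdCollector(HTMLParser):
    """Collect the parsed contents of every application/ld+json script tag."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._in_json_ld = False


def extract_json_ld(html: str) -> list:
    collector = _JsonLdCollector()
    collector.feed(html)
    collector.close()
    return collector.blocks


def schema_drift(schema: dict, expected: dict) -> dict:
    """Fields where published schema disagrees: {field: (published, expected)}."""
    return {
        key: (schema.get(key), value)
        for key, value in expected.items()
        if schema.get(key) != value
    }
```

Run it against the `offers` object after each pricing change, and an empty result means the markup still matches the product.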

4. The Vanity Metric Trap

You're celebrating a spike in AI referral traffic, but your sign-up rate hasn't moved.

Traffic without conversion is noise. AI-referred visitors convert roughly five times better than organic (14.2% versus 2.8%) when the intent is right, but only if you're attracting qualified searches. That traffic bump might just be people asking "what is [your category]" and bouncing after reading your homepage.

The Fix: Segment AI traffic in GA4 by source (ChatGPT, Perplexity, Copilot) and track conversion events separately. Optimize for conversion lift, not session count.

5. Unchecked AI Content

You're scaling content with AI tools, but no human is reviewing for accuracy, brand voice, or strategic positioning.

The result? Generic posts that dilute authority and confuse AI systems trying to understand your entity. When every article sounds like it was written by the same bland corporate template, you're not building expertise signals. You're creating noise that makes it harder for AI to figure out what you actually do and why anyone should care.

The Fix: Implement content governance. Every AI-generated draft needs a subject-matter expert review and a brand editor pass. Use version control and audit logs. Quality compounds; mediocrity just fills your sitemap.

Conclusion

The Google SEO Starter Guide covers the fundamentals, but we're past that now. If you're still chasing keyword rankings while AI systems cite your competitors, you're optimizing for yesterday's game.

The AI-First SEO Framework works. SaaS sites logged 774,331 LLM sessions between November 2024 and December 2025 [Source: searchengineland.com], and SaaS companies using this approach are capturing them. Technical crawlability for AI agents isn't optional anymore. Entity optimization for citations isn't something you can postpone. Conversion tracking from AI referrals isn't premature. These are the basic requirements for growth when AI-referred traffic converts at 14.2% versus 2.8% for traditional organic [Source: seomator.com].

When someone asks how to do SEO for a website step by step today, the answer starts differently than it did two years ago. Can AI crawlers actually read your content? Are you being cited? Do those citations convert to sign-ups?

The three-phase framework gives you a clear path. Start with the technical foundation by fixing JavaScript rendering, implementing structured data, and making sure your internal search and documentation pages are crawlable. Then optimize for entity authority and citations. Scale with governance and measurement that tracks conversions, not vanity metrics.

Your next step: Run the AI-crawlability audit from Phase 1. Fix what's broken. The citations will follow.

Frequently Asked Questions

Can you do Google SEO yourself?

Yes. The core work (technical audits, entity optimization, conversion tracking) doesn't require an agency. You need internal governance around AI crawlers and proper measurement. Less outsourcing, more control.

This article walks through the exact 3-phase process to execute it in-house, from audit to scale. The main shift is spending less time on traditional link building and more on making sure AI systems can actually find and cite you.

How can I start SEO as a beginner?

Make sure AI agents can crawl your site. That's the new 80/20 rule.

The AI-First Framework breaks down into three phases: audit your technical foundation for AI crawlers (Phase 1), optimize content for entity authority and GEO (Phase 2), then scale operations and measure conversions from AI referrals (Phase 3). This skips outdated keyword-first tactics and focuses on what actually drives business results in 2026.

What are the 4 pillars of SEO?

For the AI era, the four pillars are: Crawlability & Technical Integrity (so AI agents can access your content), Entity & Topical Authority (so AI systems trust and cite you), Generative Engine Optimization (GEO) (so you appear in AI Overviews and ChatGPT responses), and Conversion Tracking & Measurement (so you prove ROI from AI referrals).

These replace the traditional technical/on-page/off-page/content pillars because they align with how buyers actually discover SaaS products today.

Can ChatGPT do SEO?

Not on its own, but it's useful within a governed workflow.

ChatGPT can brainstorm audit checklists, generate content briefs, draft schema markup, or write meta descriptions. It can't replace your strategic judgment, technical implementation skills, or quality oversight. AI-referred traffic converts at 14.2% versus 2.8% for organic [Source: seomator.com], so treating AI as a magic solution without human governance will cost you conversions and credibility.

What are common SEO mistakes?

The most damaging mistakes in 2026: blocking AI crawlers (GPTBot, Claude) in robots.txt when you want visibility, relying on client-side JavaScript for primary content (many AI crawlers don't execute JS), using outdated or inaccurate schema markup, failing to track conversions from AI referrals separately, and publishing AI-generated content without human fact-checking or governance.

Each of these directly reduces your visibility to AI systems or undermines trust when you do get cited.

Will SEO be replaced by AI?

AI hasn't replaced SEO. It's transformed its objectives.

Your role is evolving from keyword rank tracker to AI visibility and conversion architect. The need for technical optimization, authority building, and strategic content is more critical than ever, but success now means earning citations in ChatGPT responses and tracking conversion lifts from AI referrals, not just climbing Google's page-one rankings.

SaaS brands see conversion rates six times higher from LLM-driven search [Source: onely.com], so the opportunity is bigger. Just measured differently.