April 5th, 2026

Local SEO Tools and Automation: A Strategy for AI-Driven Discovery


Warren Day

You're managing 30 Google Business Profiles, tracking rankings in 15 cities, and responding to reviews across 5 platforms. The spreadsheets, calendar reminders, and manual updates holding this together are buckling. AI-driven local search doesn't care about your workarounds.

Here's the thing: the businesses winning local discovery right now aren't using better local SEO tools than you. They've stopped thinking about tools altogether and started engineering systems. Specifically, a centralised data pipeline that treats your Google Business Profile as an API endpoint, not a dashboard someone logs into on a Tuesday afternoon.

AI Overviews now appear in 68% of local business-type queries. That's not a trend to monitor. It's a structural shift that makes reactive, manual local SEO operationally untenable.

When an AI system assembles a local recommendation, it pulls from brand-managed sources: your listings, your website, your review responses. If that data is stale, inconsistent, or siloed across disconnected tools, you're invisible before the race even starts. No amount of scrambling catches you up.

This article lays out a phased blueprint for building that pipeline: from the foundational data hygiene work that pays off in weeks, through core automation and tool orchestration, to AI-enhanced scaling that handles hundreds of locations without proportionally scaling headcount. We'll cover specific tool evaluation criteria, architectural decisions that actually matter, and ROI calculations grounded in real numbers, not vendor slide decks.

The goal isn't to automate everything. It's to automate the right things, in the right order, with the right guardrails.

Why Manual Local SEO Management is a Strategic Liability in 2026

The rules changed in May 2024, when Google rolled out AI Overviews in the US before expanding them to more than 100 countries by the end of the year. AI Overviews now appear in an average of 68% of local business-type queries, which makes the old workflow of logging into dashboards, copy-pasting business hours, and manually responding to reviews not just inefficient but strategically dangerous.

Most multi-location businesses have accumulated what I'd call local search debt. NAP data that diverges across directories because someone updated the website address two years ago but forgot Yelp. A GBP with service descriptions written in 2021. Review streams across Google, Bing Places, and Apple Maps that nobody has the bandwidth to monitor consistently. Each inconsistency signals to AI systems that your business data is unreliable, and unlike human searchers, those systems won't give you the benefit of the doubt.

The stakes go well beyond map pack rankings. When an AI Overview synthesises an answer about "best accountants in Bristol," it pulls from brand-managed sources: your website, your GBP, your structured listings. Yext's analysis of 6.8 million AI citations found that 86% come from exactly those sources. If they contradict each other, or if they're stale, the AI either omits you or misrepresents you. There's no algorithm update to blame. The data simply wasn't trustworthy enough to cite.

Here's the reframe worth sitting with: local SEO isn't a marketing task anymore. It's a data engineering problem. Your operational business data (opening hours, service areas, staff bios, seasonal offerings) needs to flow automatically and consistently to every search surface. Not through manual updates, but through a centralised data pipeline where your GBP acts as the primary API endpoint rather than a dashboard someone logs into occasionally.

That pipeline architecture is what the rest of this guide is about.

The 3-Phase Local SEO Automation Blueprint: From Quick Wins to AI Scale

Most local SEO automation fails not because the tools are wrong, but because teams skip straight to the sophisticated stuff before the foundations are solid. Running AI-generated content on top of inconsistent NAP data is like optimising a leaky funnel: you're accelerating the wrong thing.

This blueprint structures the work into three phases, each with a clear entry condition and a measurable exit gate before moving forward.

Phase 1: Foundational Data Hygiene covers the non-negotiable groundwork for local SEO, for small businesses and enterprise operations alike. No automation works without clean, consistent entity data underneath it.

Phase 2: Core Automation & Tool Orchestration is where operational efficiency starts to compound. Listings sync, review workflows, and rank tracking run without manual intervention.

Phase 3: AI-Enhanced Scaling is the competitive layer most people never reach: programmatic content, RAG pipelines, and proactive AI discovery signals that most competitors haven't built yet.

Each phase builds on the last. Skipping ahead is how budgets get wasted.

Phase 1: Foundational Data Hygiene (Your Quick-Win Checklist)

Before any automation is worth building, the underlying data has to be clean. Pipelines that ingest inconsistent or incomplete business information don't fix the problem; they amplify it. Phase 1 is about establishing a single source of truth before you connect anything to anything else.


1. Claim and Complete Your Google Business Profile

Treat GBP as your primary API endpoint, not a marketing dashboard. Every field matters: business category, service areas, attributes, opening hours, products, and real photos. Not stock imagery, but actual photos of the location, team, and work. AI systems scan visual content to confirm authenticity, and an incomplete profile signals a business that isn't actively managed.

2. Run a NAP Consistency Audit

Name, Address, Phone number inconsistencies across directories are a silent ranking killer. Businesses with consistent NAP data across major citation sources are 40% more likely to appear in the local pack, yet this remains one of the most neglected fundamentals. Use BrightLocal (plans from $39/month) or Moz Local to audit citations at scale, identify conflicts, and push corrections. For multi-location operations, do this before touching anything else.
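Under the hood, a NAP audit is normalise-then-compare. This is a minimal sketch, assuming you've exported each directory's listing into a dict with `name`, `address`, and `phone` keys (hypothetical field names). The normalisation rules (digits-only phones, a UK `44` to `0` prefix swap, a tiny abbreviation table) are illustrative assumptions; commercial tools such as BrightLocal or Moz Local apply far more extensive matching.

```python
import re

# Illustrative abbreviation table; real audit tools use much larger ones.
ABBREVIATIONS = {"street": "st", "road": "rd", "avenue": "ave", "suite": "ste"}


def normalise_text(value: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse common abbreviations."""
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def normalise_phone(phone: str) -> str:
    """Keep digits only; assume UK numbers and map a leading 44 country code to 0."""
    digits = re.sub(r"\D", "", phone)
    if digits.startswith("44"):
        digits = "0" + digits[2:]
    return digits


def nap_key(record: dict) -> tuple:
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    return (
        normalise_text(record["name"]),
        normalise_text(record["address"]),
        normalise_phone(record["phone"]),
    )


def nap_consistency(canonical: dict, citations: dict) -> tuple:
    """Return (share of directories matching the canonical NAP, list of mismatching directories)."""
    target = nap_key(canonical)
    mismatches = [site for site, record in citations.items() if nap_key(record) != target]
    score = 1 - len(mismatches) / len(citations) if citations else 1.0
    return score, mismatches


canonical = {"name": "Acme Accountants", "address": "12 High Street, Bristol BS1 4DJ", "phone": "0117 496 0000"}
citations = {
    "yelp": {"name": "Acme Accountants", "address": "12 High St, Bristol BS1 4DJ", "phone": "+44 117 496 0000"},
    "bing": {"name": "Acme Accountants", "address": "12 High Street, Bristol BS1 4DJ", "phone": "0117 496 0001"},
}
score, mismatches = nap_consistency(canonical, citations)
print(score, mismatches)  # 0.5 ['bing']
```

The Yelp listing matches despite the "St" abbreviation and international phone format; the Bing listing's wrong digit is exactly the kind of correction to push before moving on.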

3. Deploy Your Technical SEO Checklist for Local Pages

Honestly, this is where most local SEO efforts quietly fall apart. A solid technical SEO checklist for local pages covers mobile page speed (Core Web Vitals), HTTPS, canonical tags, and structured data. Implement LocalBusiness, FAQPage, and Article schema on relevant pages. These aren't optional extras; they're the machine-readable signals that AI Overviews and LLM-based search surfaces use to extract and cite your business data.
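As an illustration of the structured-data item, here's a sketch that emits schema.org `LocalBusiness` JSON-LD from a canonical location record. The record's keys (`street`, `postcode`, `hours`, and so on) are hypothetical; the `@type` values and property names are standard schema.org vocabulary. Swap `LocalBusiness` for a more specific subtype (for example `AccountingService`) where one fits, and validate the output in the Rich Results Test.

```python
import json


def local_business_jsonld(loc: dict) -> str:
    """Render a schema.org LocalBusiness JSON-LD <script> tag from one canonical location record."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": loc["name"],
        "url": loc["url"],
        "telephone": loc["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": loc["street"],
            "addressLocality": loc["city"],
            "postalCode": loc["postcode"],
            "addressCountry": loc["country"],
        },
        # One OpeningHoursSpecification per (day, opens, closes) tuple.
        "openingHoursSpecification": [
            {"@type": "OpeningHoursSpecification", "dayOfWeek": day, "opens": opens, "closes": closes}
            for day, opens, closes in loc["hours"]
        ],
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


location = {
    "name": "Acme Accountants",
    "url": "https://example.com/locations/clifton/",
    "phone": "+44 117 496 0000",
    "street": "12 High Street",
    "city": "Bristol",
    "postcode": "BS1 4DJ",
    "country": "GB",
    "hours": [("Monday", "09:00", "17:30"), ("Tuesday", "09:00", "17:30")],
}
print(local_business_jsonld(location))
```

Generating the markup from the same record that feeds your listings keeps the on-page schema and the GBP data from drifting apart.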

Phase 1 Success Metrics:

- NAP consistency score: target 100% across Tier 1 directories

- GBP completeness score: all fields populated, minimum 10 photos

- Schema validation: zero errors in Google's Rich Results Test

This phase costs almost nothing beyond time. The returns are disproportionate.
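The three exit targets above can be checked mechanically. This sketch assumes a profile dict with hypothetical field names and a photo count, plus a NAP score and a Rich Results Test error count you've already gathered; it returns the unmet targets, so an empty list means the Phase 1 gate passes.

```python
# Hypothetical field names; map them to whatever your GBP export or API returns.
REQUIRED_FIELDS = ("name", "primary_category", "address", "phone", "website",
                   "hours", "description", "service_areas")


def phase1_gaps(nap_score: float, profile: dict, schema_errors: int, min_photos: int = 10) -> list:
    """Return the unmet Phase 1 targets; an empty list means the exit gate passes."""
    gaps = []
    if nap_score < 1.0:
        gaps.append(f"NAP consistency {nap_score:.0%} (target 100%)")
    missing = [f for f in REQUIRED_FIELDS if not profile.get(f)]
    if missing:
        gaps.append("GBP fields missing: " + ", ".join(missing))
    photos = profile.get("photo_count", 0)
    if photos < min_photos:
        gaps.append(f"{photos} photos (target {min_photos}+)")
    if schema_errors:
        gaps.append(f"{schema_errors} Rich Results Test error(s)")
    return gaps


profile = {f: "filled" for f in REQUIRED_FIELDS}
profile["photo_count"] = 12
print(phase1_gaps(1.0, profile, 0))  # []
print(phase1_gaps(0.95, {**profile, "description": ""}, 0))
```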

Phase 2: Core Automation & Tool Orchestration

Phase 1 got your data clean. Phase 2 makes the machine run without you touching it every day.

The goal is simple: automate data flow and basic engagement tasks across every location so your team stops being the bottleneck.

1. Automate Listings Synchronisation

Stop updating GBP manually. Once you're managing more than three locations, that approach doesn't scale; it just creates drift.

The right mental model is a source of truth → push architecture. Your CRM or internal database holds the canonical business data. A listings platform like Yext or PinMeTo reads from that source and pushes changes to GBP and 200+ downstream publishers in real time. Change your opening hours in one place, and every directory updates automatically.

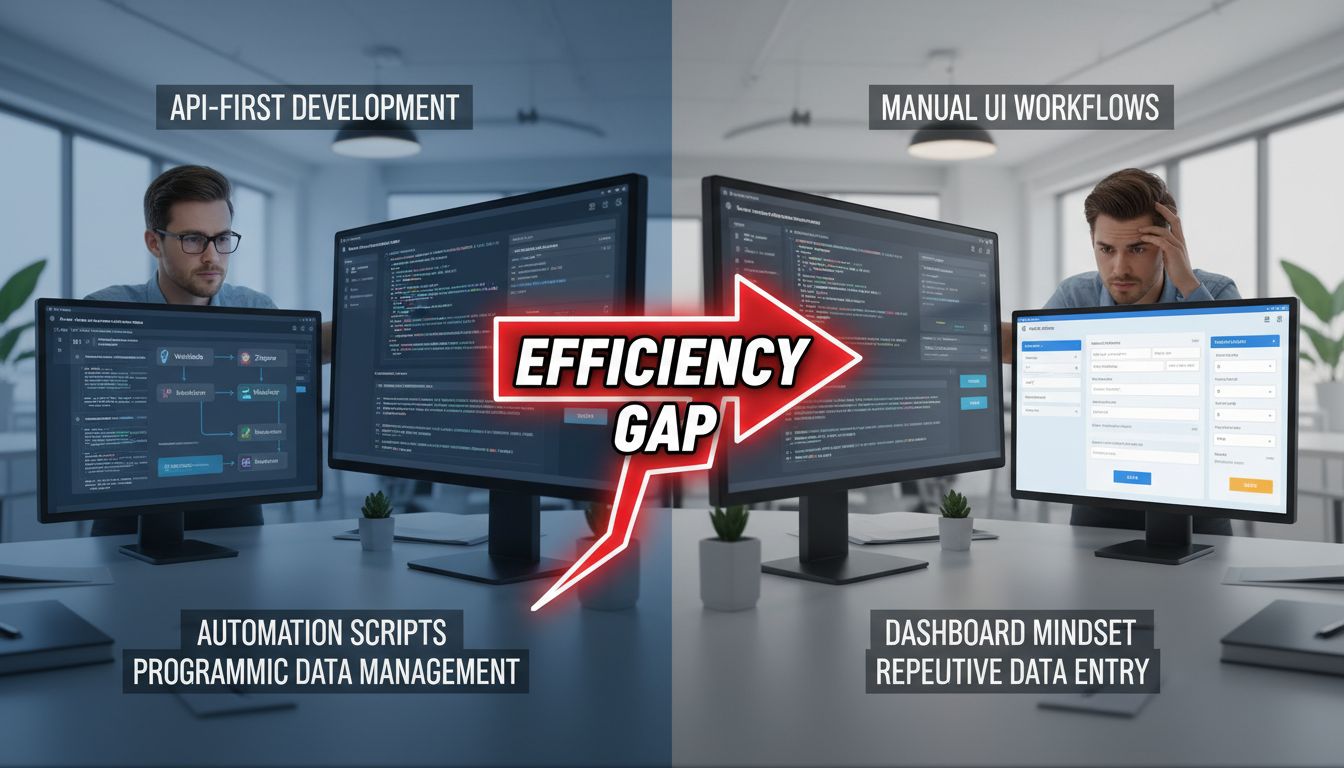
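Stripped to its core, the source-of-truth → push model is a diff: compare each canonical record against what every publisher currently shows and push only the fields that differ. This is a generic sketch, not Yext's or PinMeTo's API; the publisher names and record shape are hypothetical, and the actual push is left to whichever platform you use.

```python
def diff_location(canonical: dict, published: dict) -> dict:
    """Fields whose published value differs from (or is missing versus) the canonical record."""
    return {key: value for key, value in canonical.items() if published.get(key) != value}


def plan_sync(canonical_by_id: dict, published_by_publisher: dict) -> dict:
    """Build {publisher: {location_id: changed_fields}}; pushing the plan is left to your platform."""
    plan = {}
    for publisher, listings in published_by_publisher.items():
        for location_id, record in canonical_by_id.items():
            changes = diff_location(record, listings.get(location_id, {}))
            if changes:
                plan.setdefault(publisher, {})[location_id] = changes
    return plan


canonical = {"bristol-01": {"phone": "0117 496 0000", "hours": "Mon-Fri 09:00-17:30"}}
published = {
    "gbp": {"bristol-01": {"phone": "0117 496 0000", "hours": "Mon-Fri 09:00-17:00"}},
    "yelp": {"bristol-01": {"phone": "0117 496 0000", "hours": "Mon-Fri 09:00-17:30"}},
}
print(plan_sync(canonical, published))
# {'gbp': {'bristol-01': {'hours': 'Mon-Fri 09:00-17:30'}}}
```

Pushing only the changed fields keeps update volume small and makes every change easy to audit.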

The distinction that actually matters here is API vs. dashboard mindset. Both Yext and PinMeTo expose APIs, which means you can trigger updates programmatically (from a deployment script, a CRM webhook, or a Zapier flow) rather than logging into a UI. That's the difference between a tool and an orchestration layer.
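The same pattern works against Google's own Business Profile APIs. The sketch below builds (but does not send) a PATCH request to the Business Information API's `locations.patch` method, using `updateMask=regularHours` so only opening hours change. The location ID and token are placeholders, and sending it for real requires OAuth credentials with the `business.manage` scope plus approved API access; treat the payload shape as an assumption to check against Google's current reference.

```python
import json
import urllib.request

# Business Information API v1 base URL; verify against Google's current reference before use.
API_BASE = "https://mybusinessbusinessinformation.googleapis.com/v1"


def build_hours_update(location_id: str, hours: dict, access_token: str) -> urllib.request.Request:
    """Build (but do not send) a PATCH that replaces only the location's regular hours.

    `hours` maps a DayOfWeek enum name to (open_hour, open_minute, close_hour, close_minute).
    """
    periods = [
        {
            "openDay": day,
            "openTime": {"hours": oh, "minutes": om},
            "closeDay": day,
            "closeTime": {"hours": ch, "minutes": cm},
        }
        for day, (oh, om, ch, cm) in hours.items()
    ]
    body = json.dumps({"regularHours": {"periods": periods}}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/locations/{location_id}?updateMask=regularHours",
        data=body,
        method="PATCH",
        headers={"Authorization": f"Bearer {access_token}", "Content-Type": "application/json"},
    )


# Placeholder ID and token; a CRM webhook handler could call this when hours change.
request = build_hours_update("1234567890", {"MONDAY": (9, 0, 17, 30)}, "ACCESS_TOKEN")
print(request.get_method(), request.full_url)
# Sending would be: urllib.request.urlopen(request)
```

The `updateMask` is the important design choice: it limits the write to the field that changed, so a webhook about opening hours can't accidentally overwrite a description someone edited elsewhere.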

2. Automate Review Management

Reviews compound. Every 10 new reviews increases a GBP's conversion rate by 2.8%, and responding to 25% of reviews improves that figure by a further 4.1%. At scale, manually soliciting and responding to reviews just isn't viable.

Platforms like Birdeye and SOCi handle both sides: automated post-transaction review requests via SMS/email, and AI-generated draft responses queued for human approval. That last part matters. The draft goes to a human for a 30-second check before it publishes. This is the Human-in-the-Loop (HITL) model, and it's non-negotiable for brand voice consistency.

Fully automated responses without oversight are how you end up with a canned apology posted under a five-star compliment.
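Birdeye and SOCi implement their own approval flows; the sketch below is a hypothetical, platform-agnostic version of the same gate. A cheap automated pre-check flags obvious tone mismatches (such as an apology on a five-star review), and nothing publishes without a named human approver, which also leaves an audit trail.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Illustrative phrases that shouldn't appear in replies to happy customers.
APOLOGY_PHRASES = ("sorry", "apologise", "apologize", "inconvenience")


@dataclass
class ReviewDraft:
    review_id: str
    rating: int
    draft_reply: str
    status: str = "pending"  # pending -> published
    approved_by: Optional[str] = None
    flags: List[str] = field(default_factory=list)


def pre_check(draft: ReviewDraft) -> ReviewDraft:
    """Flag obvious tone mismatches so the human reviewer sees them first."""
    reply = draft.draft_reply.lower()
    if draft.rating >= 4 and any(phrase in reply for phrase in APOLOGY_PHRASES):
        draft.flags.append("apology on a positive review")
    if draft.rating <= 2:
        draft.flags.append("negative review: route to a senior reviewer")
    return draft


def publish(draft: ReviewDraft, approver: Optional[str]) -> bool:
    """Publish only with a named human approver; record who approved for the audit trail."""
    if not approver:
        return False
    draft.approved_by = approver
    draft.status = "published"
    return True


draft = pre_check(ReviewDraft("r-101", 5, "We're sorry for any inconvenience."))
print(draft.flags)           # ['apology on a positive review']
print(publish(draft, None))  # False: nothing auto-publishes
```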

3. Rank Tracking & Citation Monitoring

You can't manage what you can't measure. BrightLocal and Whitespark both offer automated local pack tracking that monitors your visibility share across target locations and surfaces new citation opportunities on a schedule, so nobody has to pull reports manually.
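Tools define "visibility share" differently. One simple, hypothetical definition: for each keyword, the fraction of tracked grid points where your business appears in the top three local pack results. The input format below is assumed, not any tool's export schema.

```python
from collections import Counter


def local_pack_share(results: list, business: str, top_n: int = 3) -> dict:
    """Per keyword: fraction of grid-point checks where `business` ranks in the top `top_n`."""
    checks, hits = Counter(), Counter()
    for result in results:
        checks[result["keyword"]] += 1
        if business in result["ranking"][:top_n]:
            hits[result["keyword"]] += 1
    return {keyword: hits[keyword] / total for keyword, total in checks.items()}


# Hypothetical rank-check results: one entry per (keyword, grid point).
results = [
    {"keyword": "accountant bristol", "point": (0, 0), "ranking": ["Rival A", "Acme", "Rival B"]},
    {"keyword": "accountant bristol", "point": (0, 1), "ranking": ["Rival A", "Rival B", "Rival C", "Acme"]},
    {"keyword": "tax advisor bristol", "point": (0, 0), "ranking": ["Acme", "Rival A"]},
]
print(local_pack_share(results, "Acme"))
# {'accountant bristol': 0.5, 'tax advisor bristol': 1.0}
```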

Core Local SEO Tools Comparison

| Tool | Primary Use Case | Key Integration Feature | Multi-Location Scalability | Starting Price |
|---|---|---|---|---|
| Yext | Listings sync | Real-time API sync to 200+ publishers | Enterprise-grade | ~$199/mo |
| PinMeTo | Listings + GBP API | Direct GBP API integration, GDPR-compliant | Strong (EU-focused) | Custom pricing |
| Birdeye | Reviews + reputation | Zapier/Make.com, CRM webhooks | Excellent | ~$299/mo |
| BrightLocal | Tracking + citations | API access, white-label reporting | Good for agencies | From $39/mo |
| Whitespark | Citation building | Manual + managed submissions | Per-location model | From $33/mo |

Local Dominator is also worth noting as a specialist tool for agencies that need granular GeoGrid rank tracking alongside citation building. Its credit-pool pricing model (from $39/month, with rollover credits) suits agencies managing fluctuating location counts, since you're not locked into per-seat costs during quieter months. It now also includes an AI Tracker for monitoring AI Overview appearances, which is increasingly relevant.

What Success Looks Like in Phase 2

According to ALM Corp's GBP automation research, businesses that implement this layer typically see 5-10 hours saved per location per month and a 15-30% increase in profile engagement within three months. Track those numbers. If you're not hitting them, the integration isn't working , and the most common culprit is a dirty source of truth feeding the pipeline.

Phase 3: AI-Enhanced Scaling & Proactive Discovery

Phases 1 and 2 keep you competitive. Phase 3 is where you build something harder to copy.

The goal shifts from efficiency to proactive authority. You're not just maintaining data anymore; you're engineering the conditions under which AI systems choose to cite your business over everyone else in your market.

Build Programmatic Local Pages

Hand-crafting location pages doesn't scale past a handful of locations. The practical alternative is a templated, data-driven setup: pull service lists, team bios, area descriptions, and local testimonials from a central CMS or database, then render them into location-specific pages programmatically.
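A minimal sketch of the templated approach using Python's `string.Template`. The record keys and markup are hypothetical; the behaviours that matter are that injected values are HTML-escaped and that a record with missing fields fails loudly instead of producing a thin page.

```python
from html import escape
from string import Template

# Hypothetical page template; real templates would carry full layout and schema markup.
PAGE = Template(
    "<h1>$service in $area</h1>\n"
    "<p>Our $area team, led by $manager, offers $service_list.</p>\n"
    "<blockquote>$testimonial</blockquote>"
)
REQUIRED = ("service", "area", "manager", "services", "testimonial")


def render_location_page(record: dict) -> str:
    """Render one location page from central data; refuse to render if any field is empty."""
    missing = [key for key in REQUIRED if not record.get(key)]
    if missing:
        raise ValueError(f"{record.get('area', 'unknown area')}: missing {missing}")
    return PAGE.substitute(
        service=escape(record["service"]),
        area=escape(record["area"]),
        manager=escape(record["manager"]),
        service_list=escape(", ".join(record["services"])),
        testimonial=escape(record["testimonial"]),
    )


clifton = {
    "service": "Bookkeeping",
    "area": "Clifton",
    "manager": "Priya Shah",
    "services": ["bookkeeping", "VAT returns", "payroll"],
    "testimonial": "Sorted our VAT in a week & saved us hours.",
}
print(render_location_page(clifton))
```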

The gotcha most people learn the hard way is that "programmatic" doesn't mean "unsupervised." Every template needs HITL checkpoints: at minimum, a brand voice review before first publish and a factual accuracy check for any dynamically injected data. The MTSOLN HITL workflow model handles this well: assign a Brand Voice Guardian who spot-checks a percentage of generated pages before they go live, not after a ranking drop forces your hand. That ordering matters more than most teams realise.
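One way to make that ordering concrete, as a hypothetical sketch rather than the MTSOLN model itself: send every page to review on a template's first publish, then route a stable, hash-based sample (20% here, an arbitrary choice) to the Brand Voice Guardian on later runs. Hashing the page ID together with the template version makes the sample reproducible, and it reshuffles whenever the template changes.

```python
import hashlib


def in_review_sample(page_id: str, template_version: str, rate: float) -> bool:
    """Stable pseudo-random sample: the same page and template version always get the same answer."""
    digest = hashlib.sha256(f"{template_version}:{page_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) / 0x100000000 < rate


def route_pages(page_ids, template_version: str, first_publish: bool, rate: float = 0.2):
    """Split pages into (needs Brand Voice Guardian review, proceeds to automated checks)."""
    review, automated = [], []
    for page_id in page_ids:
        if first_publish or in_review_sample(page_id, template_version, rate):
            review.append(page_id)
        else:
            automated.append(page_id)
    return review, automated


pages = [f"/locations/area-{n}/" for n in range(100)]
review, automated = route_pages(pages, "v2", first_publish=False)
print(len(review), "pages queued for human review before publish")
```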

Deploy a Local Knowledge RAG Pipeline

Honestly, this is the architecture most local SEO practitioners haven't built yet. Which is exactly why it's worth the investment right now.

John Wong's local AI ingestion pipeline series walks through the pattern in detail. The short version: ingest your GBP data, service descriptions, FAQs, and review content as documents; chunk and embed them using a sentence transformer model; store the vectors in Qdrant (self-hosted via Docker or on their free cloud tier); then wire a retrieval layer to an LLM (either the OpenAI API or a locally hosted model via Ollama) to generate hyper-localised Q&A content, FAQ schema, and draft blog sections grounded in your actual business data.
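To show the shape of that pipeline without any dependencies, the sketch below swaps in a toy bag-of-words "embedding" for the sentence transformer and an in-memory list for Qdrant, and stops at building the grounded prompt instead of calling an LLM. Every function here is a hypothetical stand-in; in a real build, `embed` would call a sentence-transformers model and `InMemoryIndex` would be a Qdrant collection.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 40, overlap: int = 10) -> list:
    """Split a document into overlapping windows of `size` words."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Toy stand-in for a sentence-transformer embedding: a term-frequency vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class InMemoryIndex:
    """Stand-in for a Qdrant collection: stores (vector, chunk, source) and ranks by cosine."""

    def __init__(self):
        self.items = []

    def add(self, source: str, text: str) -> None:
        for piece in chunk(text):
            self.items.append((embed(piece), piece, source))

    def search(self, query: str, k: int = 3) -> list:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [(source, piece) for _, piece, source in ranked[:k]]


def grounded_prompt(question: str, hits: list) -> str:
    """Build an LLM prompt that restricts the answer to retrieved business facts."""
    context = "\n".join(f"[{source}] {piece}" for source, piece in hits)
    return (
        "Answer using ONLY the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


index = InMemoryIndex()
index.add("gbp", "Acme Accountants is open Monday to Friday, 9am to 5:30pm, in Clifton, Bristol.")
index.add("faq", "We offer self assessment tax returns and VAT bookkeeping for sole traders.")
hits = index.search("Do you do VAT bookkeeping?", k=1)
print(grounded_prompt("Do you do VAT bookkeeping?", hits))
# The prompt would then go to the OpenAI API or an Ollama-hosted model (not shown).
```

Tagging each retrieved chunk with its source makes it easy for a human reviewer to trace any generated claim back to the business data it came from.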

What comes out isn't generic AI content. It's content that accurately reflects your specific services, your locations, and the language your actual customers use in reviews. That distinction matters enormously for AI citation quality.

Don't skip prompt testing before you scale. Braintrust recommends running 20-50 representative test cases, scoring outputs on factuality, brand tone, and helpfulness before committing any prompt template to production.
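A stripped-down version of that test loop might look like the sketch below. The scoring is deliberately crude and hypothetical: factuality is the share of required facts that appear verbatim, tone is a banned-phrase check, and helpfulness is left out because it usually needs a human or model-based grader. This is not Braintrust's tooling, just the gating idea.

```python
from dataclasses import dataclass


@dataclass
class Case:
    question: str
    must_include: tuple                           # facts the answer must state
    must_avoid: tuple = ("as an ai", "i cannot")  # hypothetical off-brand markers


def score(case: Case, output: str) -> dict:
    """Crude scores in [0, 1]; helpfulness is omitted because it needs a human or model grader."""
    text = output.lower()
    factuality = sum(fact.lower() in text for fact in case.must_include) / len(case.must_include)
    tone = 0.0 if any(phrase in text for phrase in case.must_avoid) else 1.0
    return {"factuality": factuality, "tone": tone}


def passes_gate(cases: list, outputs: list, min_factuality: float = 0.9, min_tone: float = 1.0) -> bool:
    """Promote a prompt template only if the averages across all test cases clear both thresholds."""
    if not cases or len(cases) != len(outputs):
        raise ValueError("need one output per test case")
    scores = [score(case, out) for case, out in zip(cases, outputs)]

    def average(key: str) -> float:
        return sum(s[key] for s in scores) / len(scores)

    return average("factuality") >= min_factuality and average("tone") >= min_tone


cases = [Case("When are you open?", ("Monday", "5:30pm"))]
print(passes_gate(cases, ["We're open Monday to Friday until 5:30pm."]))  # True
print(passes_gate(cases, ["As an AI, I think we open on Monday."]))       # False
```

In practice you'd fill `cases` with the 20-50 representative questions and run every candidate prompt template against the same set.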

Cultivate Local Authority Signals

Local SEO backlinks are genuinely underused at this phase. The highest-ROI moves aren't outreach campaigns , they're structural. Sponsor local events and secure a mention on the organiser's site. Contribute expert commentary to local news outlets. Build locally-relevant hub content like neighbourhood guides or local service comparison pages that earns citations from community sites and regional publishers.

These signals do two things simultaneously. They build domain authority in a way that directly supports local pack rankings, and they create the kind of third-party entity mentions that AI systems use to corroborate business information. Brand-managed sources account for 86% of the 6.8 million AI citations in Yext's analysis, but the remaining 14% from independent third parties carries disproportionate trust weight in AI reasoning. A handful of genuine local mentions from credible regional sources punches well above its weight.

Measuring Phase 3 Success

Track three things specifically: growth in organic traffic to your programmatic local pages (Google Search Console, filtered by location-specific URL patterns); how often your brand appears as a cited source in AI Overviews for target queries; and authority trends in your local markets via Ahrefs (which reports domain-level authority as Domain Rating).
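A sketch of the first measurement, assuming a Search Console Pages export saved as CSV with `Top pages` and `Clicks` columns (check the header row in your own export) and location pages living under a hypothetical `/locations/<slug>` path.

```python
import csv
import io
import re

# Hypothetical URL scheme for programmatic location pages.
LOCATION_URL = re.compile(r"/locations/[a-z0-9-]+/?$")


def location_clicks(export_csv: str) -> dict:
    """Sum clicks per location page from a Search Console 'Pages' CSV export."""
    totals = {}
    for row in csv.DictReader(io.StringIO(export_csv)):
        url = row["Top pages"]  # assumed column name; check your export's header row
        if LOCATION_URL.search(url):
            totals[url] = totals.get(url, 0) + int(row["Clicks"].replace(",", ""))
    return totals


def growth(before: dict, after: dict) -> float:
    """Period-over-period change in total clicks to location pages."""
    previous, current = sum(before.values()), sum(after.values())
    if previous == 0:
        return float("inf") if current else 0.0
    return (current - previous) / previous


march = "Top pages,Clicks\nhttps://example.com/locations/clifton/,100\nhttps://example.com/blog/tax-tips,400\n"
april = "Top pages,Clicks\nhttps://example.com/locations/clifton/,120\nhttps://example.com/locations/bedminster,30\n"
print(f"{growth(location_clicks(march), location_clicks(april)):.0%}")  # 50%
```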

That last metric is a lagging indicator. But it's the one that tells you whether the authority-building work is compounding or quietly stalling while you're busy watching other numbers.

Evaluating Local SEO Tools: The Integration-First Checklist

Most tool evaluations start with the wrong question. "What features does it have?" steers you toward whoever puts on the best demo. The right question is: "How does this connect to everything else I'm running?"

A tool that does one thing brilliantly but lives in isolation creates more work, not less. You end up manually exporting data, reconciling inconsistencies between platforms, and rebuilding the same logic in three different places. That's not a pipeline. That's just more tabs.

Here's the checklist I use before committing to any local SEO tool:

1. API & Automation Capabilities

Can you read and write data programmatically, or are you stuck clicking around a dashboard? A tool without a robust API or webhook system is a dead end for serious automation. It can't be your system of record; it's just a reporting layer you'll eventually resent.

2. Publisher Coverage & Sync Quality

For listings platforms specifically: how many publishers do they cover, and is sync real-time or batch? Batch sync introduces lag that quietly creates NAP inconsistencies. Also check whether the platform reaches aggregators like Foursquare: 60-70% of ChatGPT local results for smaller towns reference Foursquare city-guide listings, so gaps there matter more than most people realise.

3. Multi-Location Scalability

Does per-location pricing stay predictable at 50 or 500 locations? Can you segment permissions by team, region, or client? Tools that work fine at 10 locations often become genuinely unmanageable at 100.

4. AI & Extensibility

Can the platform call external LLM APIs, or does it lock you into its own AI layer? That flexibility determines whether the tool fits your pipeline or forces you to build awkwardly around it.

5. Governance & Compliance

Audit logs, approval workflows, and GDPR/CCPA data controls aren't optional, especially for review management at scale. If a tool can't tell you who changed what and when, it's a liability waiting to surface at the worst possible moment.
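If you want to compare candidates side by side, the checklist translates naturally into a weighted score. The weights and ratings below are hypothetical, and a tool with no usable API is disqualified outright, reflecting the first criterion.

```python
# Hypothetical weights for the five checklist criteria; they sum to 1.0.
WEIGHTS = {"api": 0.30, "sync": 0.25, "scalability": 0.20, "extensibility": 0.10, "governance": 0.15}


def integration_score(ratings: dict) -> float:
    """Weighted score on the same 0-5 scale as the ratings; no usable API means disqualified."""
    if ratings.get("api", 0) == 0:
        return 0.0
    return round(sum(weight * ratings.get(criterion, 0) for criterion, weight in WEIGHTS.items()), 2)


candidates = {  # illustrative ratings, not assessments of real products
    "Tool A": {"api": 5, "sync": 4, "scalability": 4, "extensibility": 3, "governance": 4},
    "Tool B": {"api": 0, "sync": 5, "scalability": 5, "extensibility": 5, "governance": 5},
}
for name in sorted(candidates, key=lambda n: integration_score(candidates[n]), reverse=True):
    print(name, integration_score(candidates[name]))
```

Tool B scores perfectly on everything except the API and still ranks last, which is the point of treating integration as a gate rather than one feature among many.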

The best local SEO tools covered in Phases 2 and 3 (Yext, BrightLocal, Birdeye, PinMeTo) score reasonably well across these dimensions. None is perfect. The goal isn't to find a single perfect tool anyway; it's to assemble components that connect cleanly into a cohesive system rather than a pile of disconnected point solutions.

Measuring ROI and Justifying the Investment

The cost objection always comes up. So let's be precise about what you're actually spending before we get into whether it's worth it.

Typical monthly costs by stage:

- Phase 1 (Hygiene): £30–£60 for BrightLocal's starter tier. Citation building adds £2–£3 per site, one-time. Total first-month outlay for a single location: under £150.

- Phase 2 (Automation): BrightLocal + Birdeye starter runs £300–£650/month. Add Yext for serious multi-location sync and you're at £800–£1,200/month.

- Phase 3 (AI Scaling): Full multi-location stack with enterprise review management sits at £1,000–£3,500+/month.

Those numbers look very different once you attach real revenue to them. A PinMeTo client reported €130,000 in additional sales attributable to GBP data improvements alone. That's a single, vendor-reported outcome rather than a typical result, but it came from fixing what was already broken.

A simplified ROI model:

Net monthly return = [(Monthly Leads After − Monthly Leads Before) × Lead Value] + (Hours Saved × Hourly Rate) − Monthly Tool Cost

Using illustrative figures: £1,000/month spend, leads increase from 10 to 30, average lead value of £200. The 20 extra leads are worth £4,000 in incremental revenue, a 4:1 ratio to spend (£3,000 net of the tool cost), before you even factor in the 5–10 hours saved per location per month from GBP automation alone.
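The same model as a small function, using incremental leads (after minus before) and reporting both the net return and the gross-gain-to-spend ratio, since a "4:1" figure is the latter. The argument names are illustrative.

```python
def monthly_roi(leads_before: int, leads_after: int, lead_value: float,
                hours_saved: float, hourly_rate: float, tool_cost: float) -> dict:
    """Net monthly return and gross-gain-to-spend ratio using incremental (not total) leads."""
    incremental_revenue = (leads_after - leads_before) * lead_value
    time_value = hours_saved * hourly_rate
    gross_gain = incremental_revenue + time_value
    return {
        "incremental_revenue": incremental_revenue,
        "net_return": gross_gain - tool_cost,
        "gain_to_spend_ratio": gross_gain / tool_cost,
    }


# The worked example, ignoring time savings: £1,000/month, 10 -> 30 leads at £200 each.
print(monthly_roi(10, 30, 200, hours_saved=0, hourly_rate=0, tool_cost=1000))
# {'incremental_revenue': 4000, 'net_return': 3000, 'gain_to_spend_ratio': 4.0}
```

Plugging in time savings, say 7.5 hours at a hypothetical £30/hour, adds £225 to both the net return and the gross gain.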

Map KPIs to phases so you know what to measure when:

| Phase | Primary KPIs |
|---|---|
| Phase 1 – Hygiene | NAP consistency score, GBP completeness %, citation accuracy |
| Phase 2 – Automation | Time saved per location, review volume growth, local pack visibility |
| Phase 3 – AI Scaling | AI citation share, local page organic traffic, branded search growth |

For small businesses, the local SEO entry point is Phase 1: under £150/month, with measurable NAP and GBP improvements visible within 30 days. Scale the investment only as client results compound. There's no sensible reason to jump straight to a £3,000/month stack before the foundations are clean and properly tracked.

One honest caveat: ROI timelines vary a lot depending on market competitiveness and where you're starting from. A business with zero GBP presence will see faster gains than one already sitting in positions 4–6. Set expectations accordingly and don't oversell the timeline.

Governance, Risks, and the Human-in-the-Loop Imperative

Automation amplifies whatever process it's built on. A clean workflow scales efficiently. A broken one scales the damage just as fast.

The most common failure mode I see isn't a tool problem -- it's an oversight problem. Teams deploy AI-generated review responses or localised page content without a single human checkpoint, and the output is factually wrong, off-brand, or both. At scale, that's not a minor embarrassment. It's a reputation liability playing out across dozens of locations simultaneously.

The HITL model isn't optional overhead -- it's the quality gate that makes speed safe.

The MTSOLN workflow is the right mental model here: AI drafts the content, a human expert reviews for factual accuracy and brand tone, then the approved version publishes. That checkpoint adds minutes, not hours. What it prevents is a generic AI response telling a customer their complaint has been "noted and escalated" when the business has no escalation process whatsoever.

Beyond content quality, there are four specific risks worth naming explicitly:

- Generic AI content. Vague prompts produce vague output. Use location-specific templates with named streets, services, and local context baked in -- not "write a page about our Manchester branch."

- Review manipulation. Buying reviews or incentivising them against platform policy is a fast route to suspension. Use ethical solicitation workflows through tools like Birdeye or BrightLocal.

- Data privacy. If your pipeline ingests customer data -- review text, contact details, behavioural signals -- it falls under GDPR and CCPA obligations. Data minimisation, consent capture, and right-to-erasure processes aren't negotiable.

- Algorithmic dependency. APIs break. Google changes GBP field structures without warning. Build monitoring alerts for sync failures so pipeline breakage doesn't silently degrade your data quality for weeks before anyone notices.
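A minimal sketch of such a monitor, assuming each location record carries a last-successful-sync timestamp plus canonical and live (read back from the publisher) field values; these field names are hypothetical, and how alerts are delivered (email, chat, pager) is left out.

```python
from datetime import datetime, timedelta, timezone


def sync_alerts(locations: list, now: datetime, max_age: timedelta = timedelta(hours=24)) -> list:
    """Alert on stale syncs and on live listing fields that drifted from the source of truth."""
    alerts = []
    for loc in locations:
        age = now - loc["last_successful_sync"]
        if age > max_age:
            alerts.append(f"{loc['id']}: no successful sync for {age}")
        for name, expected in loc["canonical"].items():
            live = loc["live"].get(name)
            if live != expected:
                alerts.append(f"{loc['id']}: {name} is {live!r}, expected {expected!r}")
    return alerts


now = datetime(2026, 4, 5, 12, 0, tzinfo=timezone.utc)
locations = [
    {"id": "bristol-01", "last_successful_sync": now - timedelta(hours=30),
     "canonical": {"phone": "0117 496 0000"}, "live": {"phone": "0117 496 0000"}},
    {"id": "bath-02", "last_successful_sync": now - timedelta(hours=1),
     "canonical": {"hours": "Mon-Fri 09:00-17:30"}, "live": {"hours": "Mon-Fri 09:00-17:00"}},
]
for alert in sync_alerts(locations, now):
    print(alert)
```

Checking both staleness and field drift matters: a sync job can report success while a publisher silently reverts or reformats a field.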

Governance isn't a tax on speed. It's the audit trail that lets you move fast with confidence -- and recover cleanly when something goes wrong.

Conclusion

The shift in local search isn't gradual. AI Overviews now appear in 68% of local business queries [Source: whitespark.ca], and Yext's analysis of 6.8 million AI citations found that 86% come from brand-managed sources [Source: investors.yext.com]. If your data isn't clean, consistent, and continuously updated, you're not competing. You're invisible.

Here's the thing about local SEO for small businesses: the problem was never finding local SEO tools. There are plenty. The problem is knowing how to connect them. Data hygiene first, then orchestration, then AI scaling. Each phase builds on the last, which means skipping the foundation doesn't just slow you down; the automation starts amplifying your mess instead of your results.

The contrarian truth most agencies won't tell you: the best local SEO tool stack is the one with the fewest manual handoffs. Integration capability matters more than any individual feature.

And governance isn't optional once you're operating at scale. HITL checkpoints protect brand voice, search compliance, and the trust signals that AI systems are actively rewarding. That's not overhead. That's how you keep the machine running clean.

So start this week. Audit your current data flow, identify one manual process to replace with the GBP API or PinMeTo, and calculate your time-to-ROI using the framework above. You've got the architecture. Build it.