April 13th, 2026

Artificial Intelligence Search Engine Optimization: The Complete Guide

Warren Day

If you're still thinking of artificial intelligence search engine optimization as just another set of tools to bolt onto your existing workflow, you're already behind. AI-written pages now appear in over 17% of top search results, and AI search traffic grew 527% year over year between early 2024 and 2025. That's not a gradual shift; it's a structural change to how people find information, and it's happening faster than most marketing teams have had time to respond.

Here's the uncomfortable truth: most guides on this topic will hand you a list of tools and call it a strategy. That's not what this is.

I've spent the last several years building the infrastructure that powers content at scale (keyword pipelines, automated publishing systems, SERP analysis tooling). What I've seen consistently is that teams who treat AI SEO as a checklist get left behind by those who build systems around it. For B2B SaaS companies with moderate domain authority, that distinction matters even more. You're not competing with enterprise budgets or decade-old domains with thousands of backlinks. You need to be smarter about where you play and how you automate.

This guide is a technical blueprint for 2026. We'll cover how AI search engines actually ingest and rank content, what the four pillars of an AI-optimised page look like in practice, and how to build the measurement and automation workflows that most teams haven't even started thinking about yet. Only 16% of brands currently have a systematic way to track performance in AI search results, which means getting this right is still a real competitive advantage.

By the end, you'll have a clear, actionable system, not a vague framework.

What is Artificial Intelligence Search Engine Optimization? The 2026 Reality Check

Before anything else, let's get the terminology straight, because it's genuinely a mess right now.

Artificial intelligence search engine optimization is the practice of optimising your content and technical infrastructure to gain visibility across AI-driven search interfaces (Google's AI Overviews, Bing Copilot, ChatGPT Search, and Perplexity), not just traditional blue-link results pages. It's an umbrella term covering three distinct but overlapping strategies.

Traditional SEO is still the foundation. Keywords, backlinks, crawlability, Core Web Vitals: this is what gets your pages indexed and ranked. Nothing that follows works without it.

Answer Engine Optimisation (AEO) is about structuring content so it gets extracted and surfaced as a direct answer in Google's AI Overviews, featured snippets, or voice assistants. The metric isn't rank; it's snippet ownership. You either own the answer box or you don't.

Generative Engine Optimisation (GEO) is the newest layer. It focuses on making your content authoritative enough that generative AI systems (ChatGPT, Perplexity, Gemini) cite you when synthesising responses from multiple sources. The metric is citation frequency across AI platforms.

These aren't competing frameworks. They're layers. In 2026, you need all three working together.

Is SEO dead? No. But the question reveals the right instinct: something fundamental has shifted. AI-written pages now appear in over 17% of top search results, up from 2.27% in 2019. The interfaces look different, but the underlying need hasn't changed. People are still searching for information and solutions.

Here's the point most guides miss: AI search engines still depend on traditional search infrastructure to retrieve information. ChatGPT Search relies heavily on Bing's index. Google's AI Overviews pull from Google's own crawled and ranked content. If a traditional crawler can't find and assess your pages, no AI is going to cite you either. The fundamentals aren't optional.

What has changed is what you're optimising for. In 2024, success meant a page-one ranking. In 2026, it means being the source an AI model trusts enough to quote.

How AI Search Engines Actually Work: A Technical Look at Ingestion and Ranking

Understanding the mechanics here isn't optional. If you don't know how these systems ingest and surface content, you're optimising blind, making changes and hoping something sticks.

The RAG pipeline: what actually happens when someone asks an AI a question

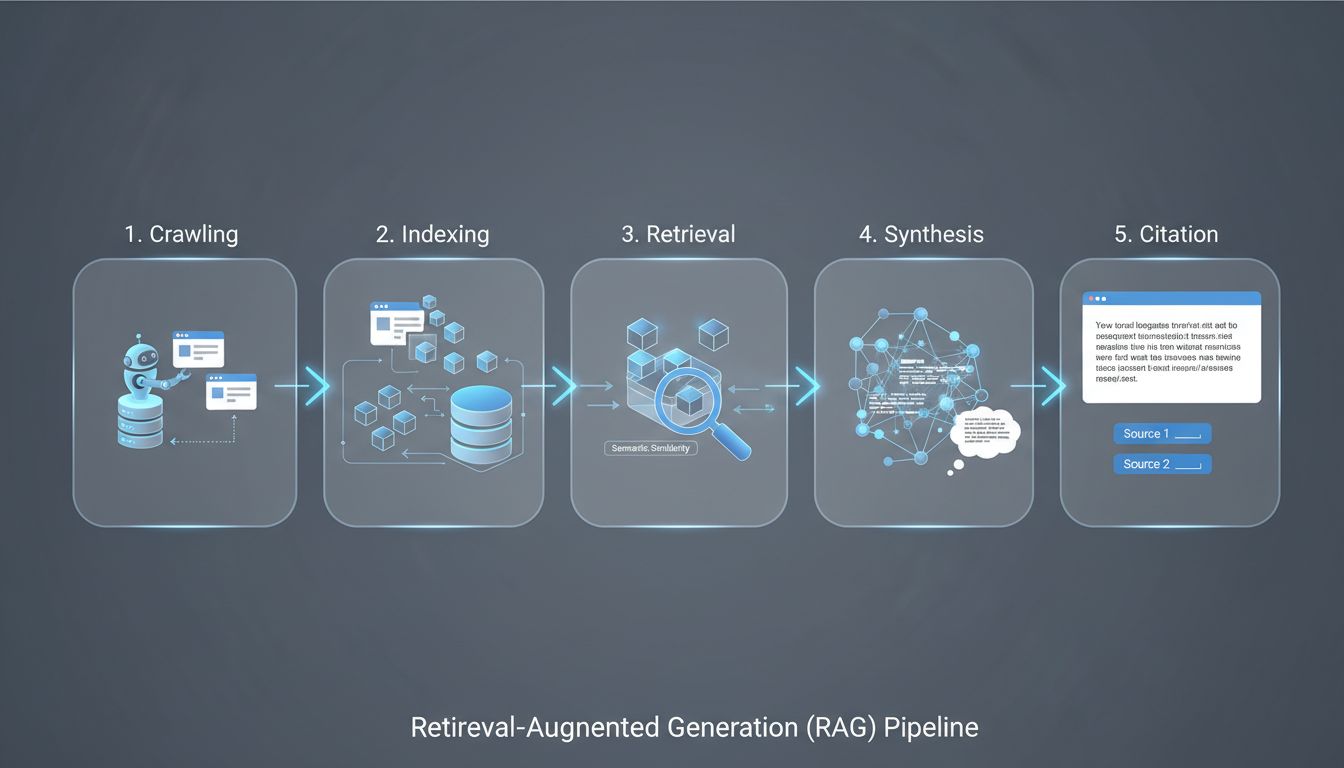

Most AI search engines (Google's AI Overviews, ChatGPT Search, Perplexity) run on a pattern called Retrieval-Augmented Generation (RAG). It's a five-stage loop, and your content either fits into it or it doesn't.

- Crawling: a bot discovers and fetches your page

- Indexing: the content is chunked, converted into vector embeddings, and stored

- Retrieval: at query time, the system finds chunks that are semantically close to the user's question

- Synthesis: an LLM combines the retrieved chunks into a coherent answer

- Citation: the system attributes the answer to the source pages it used

Here's the part most people miss: AI systems don't read your page the way a human does. They pull chunks, short passages that score well on semantic similarity to the query. If your key information is buried in paragraph seven of a 3,000-word essay, it may never surface. Content that answers questions in clear, self-contained blocks gets retrieved. Everything else gets skipped.
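The toy Python sketch below makes the retrieval step concrete. It is not how any particular engine works. A lowercase word-count vector stands in for a learned embedding model, and blank-line paragraphs stand in for chunks. It shows one thing: the self-contained paragraph that answers the question wins, wherever it sits on the page.

```python
import math
import re
from collections import Counter


def chunk_by_paragraph(text: str) -> list[str]:
    """Split a page into chunks at blank lines (a stand-in for real chunking)."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, page: str, k: int = 1) -> list[str]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    return sorted(chunk_by_paragraph(page), key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


page = """Our company was founded in 2019 and has offices in three cities.

GPTBot crawls for model training, while OAI-SearchBot indexes pages for ChatGPT Search.

We also publish a monthly newsletter about product updates."""

print(retrieve("which bot indexes pages for ChatGPT Search", page))
```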

Crawlers: know who's knocking

This is where most teams make a costly, silent mistake. There isn't one "AI crawler"; there are several, and they serve different purposes.

For OpenAI's ecosystem alone, there are three distinct agents. GPTBot crawls for model training. OAI-SearchBot indexes your content for real-time ChatGPT Search results. This is the one that determines whether you appear when someone asks ChatGPT a question with web search enabled. ChatGPT-User fetches specific pages during live user sessions. You can block each one independently in robots.txt, and they behave completely differently.

Here's the problem: many teams blocked GPTBot in 2023 when concerns about AI training data scraping were at their peak, a reasonable call at the time. GPTBot is the most blocked AI bot, with 5.89% of websites actively blocking it. But if those same robots.txt rules inadvertently block OAI-SearchBot, those sites are invisible in ChatGPT Search. That's a competitive gap you can close simply by auditing your robots.txt and being deliberate about which bots you allow.
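One way to run that audit is with Python's standard-library urllib.robotparser, which evaluates robots.txt rules per user agent. The sketch below compares two policies against a hypothetical example.com URL. The first is deliberate: it blocks GPTBot and leaves everything else open. The second is a blanket "Disallow: /" written to stop training scrapers. robotparser only implements basic prefix matching and ignores extensions such as path wildcards. Treat its output as a first pass, not a guarantee of how each bot will behave.

```python
from urllib.robotparser import RobotFileParser

# User agents discussed in this guide
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "Google-Extended"]


def audit_robots(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


# Deliberate: opt out of training (GPTBot) without touching search crawlers.
deliberate = """
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Blanket: written to stop scraping, but it also hides the site from AI search.
blanket = """
User-agent: *
Disallow: /
"""

url = "https://example.com/blog/ai-seo"
for name, rules in [("deliberate", deliberate), ("blanket", blanket)]:
    print(name, audit_robots(rules, url))
```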

Bing's index is also more important than most people realise. ChatGPT Search pulls from Bing's index as a primary source, so Bingbot access and Bing indexation directly affect your ChatGPT visibility.

What AI systems actually rank on

Forget pure keyword density. The signals that determine whether your content gets retrieved and cited are different:

- Semantic completeness: does your page comprehensively cover the topic, or does it answer one narrow angle?

- Entity presence: are you clearly associated with recognised entities (your brand, your product category, your industry) that the model can validate?

- Structured formatting: can the system parse a clean answer from your content, or does it have to fight through prose to find it?

- Cross-platform mentions: does your brand appear consistently across multiple authoritative sources?

- Recency: especially relevant for platforms like Perplexity that weight freshness heavily

Structured data is not optional

JSON-LD schema markup is how you signal structure to machines. FAQ schema, HowTo schema, Article schema: these tell the retrieval system what type of content it's looking at and where the answers are. Without it, the AI has to infer structure from your HTML formatting alone, which is a far less reliable process.

If your site doesn't have structured data implemented, that's the first thing to fix. Not because Google will penalise you for not having it, but because you're making the retrieval step harder than it needs to be, and in a competitive RAG pipeline, friction loses.
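As a minimal illustration, the function below builds FAQPage markup from question-and-answer pairs using schema.org's Question and Answer types. The example content is a placeholder. You would still validate the output with Google's Rich Results Test or the Schema Markup Validator before shipping it.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can never close the <script> tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(faq_jsonld([
    ("What is OAI-SearchBot?", "OpenAI's crawler that indexes pages for ChatGPT Search."),
]))
```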

The Four Pillars of an AI-Optimised Page

Here's what most people get wrong when they first encounter artificial intelligence search engine optimization: they assume the answer is volume. Publish more pages, faster, with AI. That's not a strategy; it's noise. What actually gets you cited and ranked in AI-driven results is page-level quality across four specific dimensions.

Pillar 1: Topical and entity authority

A single well-written page doesn't cut it anymore. AI systems are evaluating whether your site owns a topic, not just whether a single URL mentions the right words.

Build topic clusters: a central pillar page supported by tightly linked supporting content that covers every meaningful angle of a subject. Then go further and establish your brand as a named entity. That means presence on Crunchbase, LinkedIn, and industry directories, and ideally citations in external editorial content. Google's Knowledge Graph contains 500 billion facts about 5 billion entities. If your brand isn't in that graph, you're invisible to the systems that use it.

Internal linking isn't just navigation. It's how you signal topical relationships to crawlers and retrieval systems alike.

Pillar 2: Structured data and semantic markup

JSON-LD schema is non-negotiable at this point. FAQ schema, HowTo schema, Article schema: each one gives AI retrieval systems a machine-readable map of your content's structure and intent.

Beyond schema, your HTML hierarchy matters. Proper use of <h1> through <h6>, <section>, and <article> tags tells crawlers how your content is organised. Sloppy markup means the retrieval step has to work harder to parse your page, and as covered in the previous section, friction in the RAG pipeline is a ranking disadvantage.
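A rough way to catch sloppy hierarchy at scale is to pull the heading sequence out of each page and flag skipped levels. This sketch uses Python's built-in html.parser. It checks two rules: exactly one h1, and no jumps such as h2 straight to h4. These are common conventions, not anything the AI engines publish.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect heading levels (h1-h6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2"
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Flag a missing or repeated h1 and skipped heading levels (e.g. h2 -> h4)."""
    parser = HeadingOutline()
    parser.feed(html)
    levels = parser.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one <h1>, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"skipped level: <h{prev}> followed by <h{cur}>")
    return problems


html = "<article><h1>AI SEO</h1><section><h2>Crawlers</h2><h4>GPTBot</h4></section></article>"
print(heading_problems(html))
```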

Pillar 3: Content for extractability

AI systems are looking for chunks they can lift and use. That means your content needs to be structured for extraction, not just for reading.

Front-load concise answers in your introductions: aim for 40–60 words that directly address the question before expanding into detail. Use question-format H2s and H3s wherever it makes sense. Write short paragraphs. If a retrieval system has to work to find your answer, it'll find someone else's instead.
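A first programmatic check for those two habits can be very simple. The sketch below assumes page text with blank-line paragraph breaks. The 40–60 word target comes from this guide. The list of question words is an illustrative assumption you would tune to your own content.

```python
import re

# Illustrative list of question openers; extend it for your own content.
QUESTION_START = re.compile(r"(how|what|why|when|which|who|where|can|does|is|are|should)\b", re.I)


def opening_answer_words(text: str) -> int:
    """Word count of the first non-empty paragraph (blank-line separated)."""
    first = next((p for p in text.split("\n\n") if p.strip()), "")
    return len(first.split())


def is_question_heading(heading: str) -> bool:
    """Heuristic: ends with '?' or starts with a common question word."""
    h = heading.strip()
    return h.endswith("?") or bool(QUESTION_START.match(h))


intro = " ".join(["word"] * 52) + "\n\nLonger detail follows here."
print(40 <= opening_answer_words(intro) <= 60)
print([h for h in ["How do AI crawlers work", "Pricing", "Is SEO dead?"] if is_question_heading(h)])
```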

This is the part that AI-powered SEO tools can genuinely help with: auditing whether your existing content actually answers questions in extractable chunks, or whether your key points are buried three sentences deep in dense prose.

Pillar 4: Multimodal readiness

Multimodal AI systems, GPT-4o, Gemini, and their successors, process images, audio, and documents alongside text. Your non-text assets need to be machine-readable too.

Descriptive alt text and meaningful filenames on images. Transcripts for any video or audio content. PDFs that are text-selectable with proper metadata, not scanned images. These aren't accessibility nice-to-haves; they're signals that multimodal retrieval systems actively use when deciding what to surface.
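For images, a simple HTML audit catches the most common gaps. This sketch flags img tags with no alt text and filenames that look like camera or CMS defaults. The filename pattern is a heuristic assumption. An empty alt is also legitimate for purely decorative images, so treat the flags as review prompts rather than errors.

```python
import re
from html.parser import HTMLParser

# Camera/CMS default names such as IMG_1234.jpg or image-1.png (heuristic, not exhaustive)
GENERIC_NAME = re.compile(r"^(img|image|dsc|screenshot|photo)[-_ ]?\d*\.\w+$", re.I)


class ImageAudit(HTMLParser):
    """Record <img> tags whose alt text or filename gives machines little to go on."""

    def __init__(self) -> None:
        super().__init__()
        self.issues: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        a = dict(attrs)
        src = a.get("src") or ""
        filename = src.rsplit("/", 1)[-1].split("?", 1)[0]
        if not (a.get("alt") or "").strip():
            self.issues.append((src, "missing alt text"))
        if GENERIC_NAME.match(filename):
            self.issues.append((src, "generic filename"))


audit = ImageAudit()
audit.feed('<img src="/a/IMG_1234.jpg"><img src="/a/rag-pipeline-diagram.png" alt="Five-stage RAG pipeline">')
print(audit.issues)
```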

Get all four right, and you're building a page that holds up as the systems evolve, not just one that passes today's checks. The free AI SEO tools worth using, including those covered in ranked AI reviews, will test across all four of these dimensions. The ones that only check keyword density are solving last decade's problem. If you're evaluating AI search optimization tools for your workflow, this four-pillar framework is a reasonable baseline for what they should actually cover.

Winning Strategies for Sites with Moderate Domain Authority

Here's something the generic SEO content won't tell you: if your domain rating sits around DR 33, you're not going to outrank HubSpot, Salesforce, or Semrush for "CRM software" or "email marketing tools." That battle was lost before you started. The good news is that AI search has quietly shifted the battleground in your favour, if you know where to fight.

Stop targeting head terms. Start owning conversations.

AI answer engines don't just reward the biggest sites. They reward the most relevant and complete answer to a specific question. Long-tail, conversational queries (the "how do I", "what's the difference between", "why does my X keep doing Y" questions) are genuinely winnable for moderate-authority domains.

These queries map directly to how buyers actually research with AI search tools. Someone asking ChatGPT "what's the best project management tool for remote engineering teams under 50 people" isn't getting a generic listicle. They're getting a synthesised answer. If your content is the most precise, structured response to that question, you have a real shot at the citation.

Build your entity footprint, not just your backlink profile

The shift from link-based to entity-based authority is where moderate-DR sites can genuinely compete. Get listed in relevant industry directories. Contribute expert quotes to trade publications. Make sure your business name, founder name, and product name appear consistently across your site, your LinkedIn, your GitHub, your Crunchbase profile, wherever your audience and the crawlers will find you.

Cross-platform entity presence on four or more platforms increases AI citation likelihood by 2.8×. That's not a marginal gain. And unlike building domain authority through backlinks, you can establish entity presence in weeks, not years.
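This guide doesn't prescribe markup for entity consistency, but a widely used approach is schema.org's Organization type. Its sameAs property lists the external URLs that refer to the same entity, which ties your site to those profiles. The brand name and profile URLs below are hypothetical placeholders.

```python
import json


def organization_jsonld(name: str, url: str, profiles: list[str]) -> dict:
    """schema.org Organization markup tying the site to its external profiles via sameAs."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": sorted(set(profiles)),  # de-duplicated, stable order
    }


org = organization_jsonld(
    "Example Analytics",  # hypothetical brand
    "https://example.com",
    [
        "https://www.linkedin.com/company/example-analytics",
        "https://www.crunchbase.com/organization/example-analytics",
        "https://github.com/example-analytics",
    ],
)
print(json.dumps(org, indent=2))
```

Embedding the output in a script tag of type application/ld+json on your homepage or about page keeps the on-site name and the profile URLs in one machine-readable place.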

Depth beats breadth. Every time.

One genuinely comprehensive guide on a niche subtopic will outperform ten surface-level posts. This is the topical authority play, and it works precisely because most competitors are still churning out thin content at volume. Pick the sub-topic your ICP cares most about, go three levels deeper than anyone else has, and own it completely.

This is also where AI-powered SEO tools earn their keep. The good ones (the kind that show up in ranked AI reviews for a reason) can surface the gaps your competitors haven't touched yet: the questions your audience is asking that nobody's answered properly. Free AI SEO tools can get you surprisingly far here if you're willing to do the actual writing yourself.

Original data is your unfair advantage

No competitor can replicate this overnight. Publish a survey, run an analysis on your own product data, or document a detailed case study. Original research becomes an entity in itself, something AI systems can cite that no other source contains. That's what makes it so valuable in any AI search optimization strategy. You're not just optimising existing content; you're creating something the retrieval systems have no choice but to attribute to you.

The Discovered Labs B2B SaaS case study is a useful benchmark. Starting from minimal AI visibility, they went from 575 to 3,500+ AI-referred trials in seven weeks (a 6× increase) by combining technical fixes, answer-focused content, and deliberate entity building. Their domain wasn't a household name. The strategy was.

AI SEO Tools and Resources: Building Your 2026 Stack

The tool market for artificial intelligence search engine optimization is genuinely noisy right now. Every platform has bolted "AI" onto its branding, which makes it harder to identify what actually moves the needle. Here's how I'd cut through it.

Category 1: AI-powered SEO suites

These are your research and competitive intelligence foundations. Ahrefs (from ~$129/mo) and Semrush (from ~$139/mo) are the two I'd consider essential for any serious stack. Both now include AI visibility tracking: Ahrefs' Brand Radar monitors your presence across ChatGPT, Perplexity, and Google AI Overviews, while Semrush tracks over 100 million prompts. Neither is cheap. If you're choosing one, Ahrefs wins on backlink depth and programmatic API access, and Semrush wins on breadth and reporting.

For budget-constrained teams, SE Ranking (~$65/mo) is a credible all-in-one alternative with AI visibility add-ons.

Category 2: AI writing and content optimisation

Frase (from ~$39/mo) is the standout value play here. It handles SERP research, content briefs, optimisation scoring, and GEO scoring across multiple AI platforms, all in one workflow. It's not as polished as Surfer SEO on pure ranking correlation, but for teams building AI-first content pipelines, it's the most purpose-built tool at that price point.

MarketMuse (from $149/mo, free tier available) is better suited to content-heavy sites that need site-wide topical inventory analysis. The free plan is genuinely useful for gap analysis before you commit.

Category 3: AI-specific analytics

Bing Webmaster Tools is free and now includes the AI Performance dashboard, launched in public preview in February 2026, which tracks total citations, average cited pages, and grounding queries. It's currently the only native, free surface for measuring AI citation performance. Use it.

Category 4: AI detection and risk management

Originality.ai and GPTZero are the two tools worth knowing. Originality.ai's Turbo model claims 99%+ detection accuracy; GPTZero benchmarked at 96.5% on mixed-source documents. Both have free tiers. Neither is a substitute for editorial governance, so treat them as a quality checkpoint, not a compliance guarantee.

Free AI SEO tools worth using

ChatGPT (700 million weekly active users) is genuinely useful for SEO tasks: generating FAQ outlines from a keyword list, drafting meta descriptions at scale, clustering keywords by intent, and structuring content briefs. Its hard limits are real-time data and factual accuracy. Never trust it for statistics without verification.

Google Search Console remains free and essential. Bing Webmaster Tools is free and increasingly valuable for AI citation data. If you're evaluating free AI SEO tools and haven't set both of these up yet, that's the first thing to fix.

On the "30% rule"

You'll see this referenced frequently in ranked AI reviews and practitioner forums. To be clear, there's no algorithmic threshold in Google's guidelines. It's a practitioner heuristic of roughly 70% AI-assisted execution and 30% human input covering strategy, fact-checking, original insight, and brand voice. The actual ratio matters far less than whether that human layer is substantive. A thin editorial pass on a 5,000-word AI draft isn't the same as genuine expert contribution.

Step-by-Step Implementation: From Audit to Automated Workflow

Strategy without execution is just a document. Here's the exact sequence I use when onboarding a site into an AI-optimised content pipeline, from cold audit to a running automated system.

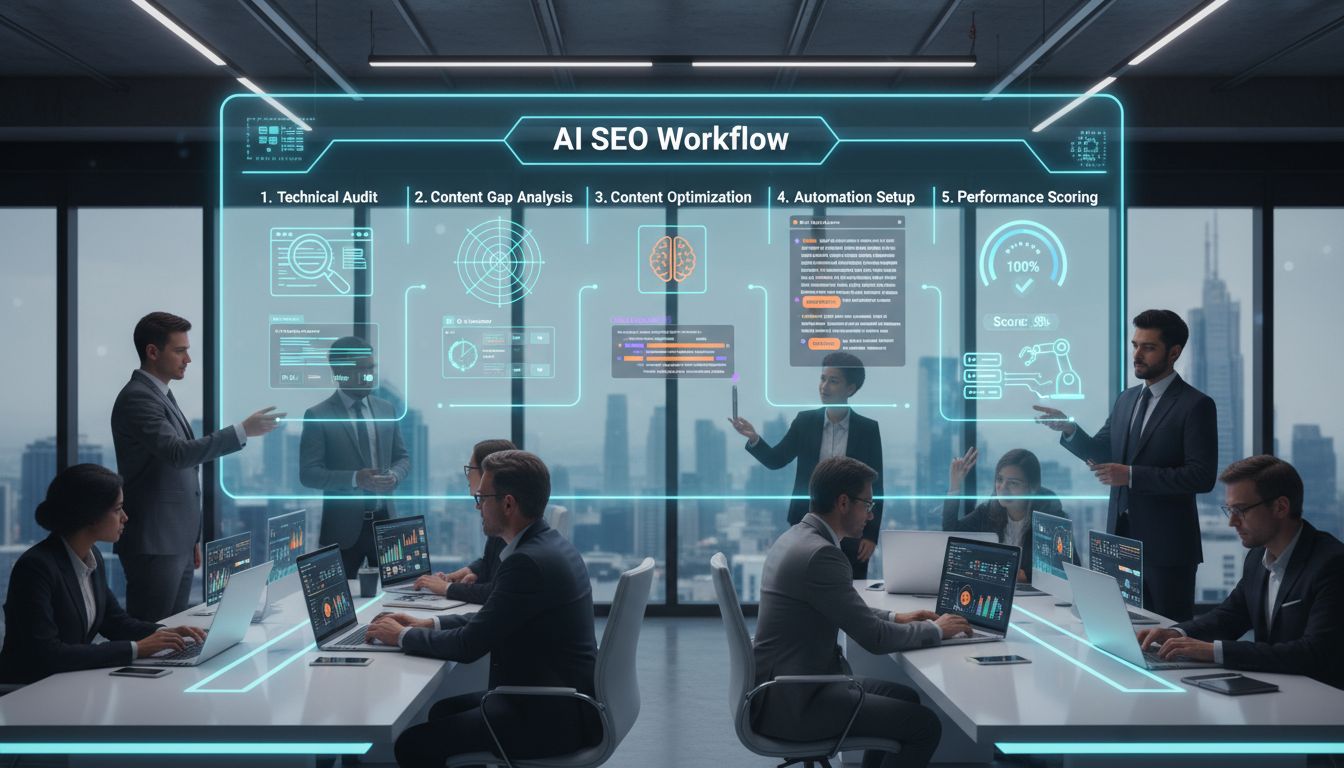

Step 1: The technical audit

Before touching content, fix the foundation. Run through this checklist:

- robots.txt audit: are you accidentally blocking AI crawlers? Check for Disallow rules targeting GPTBot, OAI-SearchBot, PerplexityBot, and Google-Extended. If you want to appear in ChatGPT Search or Perplexity results, OAI-SearchBot and PerplexityBot must not be disallowed. GPTBot and Google-Extended govern AI training and Gemini use rather than search inclusion, so decide on those deliberately.

- Structured data validation: use Google's Rich Results Test and Schema Markup Validator on your five highest-traffic pages. Missing or broken JSON-LD is the single fastest win most sites leave on the table.

- Core Web Vitals: pull your CWV scores from Google Search Console. Google's AI Overviews draw on Google's own index, and a slow site is a deprioritised site.

- Mobile rendering: crawl with a mobile user-agent. A surprising number of B2B SaaS sites still have desktop-only schema implementations.
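The Rich Results Test and Schema Markup Validator remain the checks to trust. Still, a scripted pre-pass can catch outright broken JSON-LD across many pages before you open either tool. Here is a minimal standard-library sketch, assuming you already have each page's HTML as a string:

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data


def check_jsonld(html: str) -> list[str]:
    """One status line per JSON-LD block: its @type, or the JSON parse error."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    results = []
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            results.append(f"invalid JSON: {exc.msg}")
            continue
        if isinstance(data, dict):
            results.append(f"ok: {data.get('@type', 'missing @type')}")
        else:
            results.append("ok: non-object top level (check manually)")
    return results


html = (
    '<script type="application/ld+json">{"@type": "FAQPage"}</script>'
    '<script type="application/ld+json">{"@type": "Article",}</script>'
)
print(check_jsonld(html))
```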

Step 2: Content gap analysis for AI

This is where AI search optimization tools earn their keep. The goal is to find question-based queries your competitors are getting cited for in AI Overviews when you're not.

My approach: use the DataForSEO SERP API (specifically the Advanced endpoint, which returns structured item_types arrays) to batch-check 200–500 target keywords. Filter for any result where item_types contains ai_overview. That gives you a prioritised list of queries where AI citation is already happening in your space.
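Once the batch comes back, the filtering step is only a few lines. The response shape assumed here is a trimmed, illustrative one: tasks containing result objects with keyword and item_types fields. Check it against the DataForSEO documentation for the endpoint you actually call.

```python
def keywords_with_ai_overview(response: dict) -> list[str]:
    """Keywords whose SERP result lists 'ai_overview' among its item_types.

    Assumed (illustrative) shape: {"tasks": [{"result": [{"keyword": ..., "item_types": [...]}]}]}
    """
    hits = []
    for task in response.get("tasks") or []:
        for result in task.get("result") or []:  # tolerate tasks with no result
            if "ai_overview" in (result.get("item_types") or []):
                hits.append(result["keyword"])
    return hits


sample = {  # trimmed, hypothetical response
    "tasks": [
        {"result": [{"keyword": "what is oai-searchbot", "item_types": ["ai_overview", "organic"]}]},
        {"result": [{"keyword": "crm software", "item_types": ["organic", "people_also_ask"]}]},
        {"result": None},
    ]
}
print(keywords_with_ai_overview(sample))
```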

Cross-reference that list against your Ahrefs keyword rankings. Any query your competitor ranks for organically that also triggers an AI Overview where you're absent is your immediate target. One practical note: the DataForSEO AI Overview endpoint is priced separately at around $1.20 per 1,000 requests. Budget accordingly, and build in retry logic for 429 rate-limit responses from the outset.
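Retry logic for 429s is worth writing once and reusing. This sketch wraps any zero-argument request function, such as your own wrapper around urllib.request.urlopen, and backs off exponentially. The attempt count and delays are assumptions. If the API returns a Retry-After header, you may prefer to honour that instead.

```python
import time
import urllib.error


def with_retries(call, max_attempts: int = 5, base_delay: float = 1.0, sleep=time.sleep):
    """Run `call()`, retrying with exponential backoff on HTTP 429 responses.

    Any other HTTP error, or a 429 on the final attempt, is re-raised.
    """
    for attempt in range(max_attempts):
        try:
            return call()
        except urllib.error.HTTPError as err:
            if err.code != 429 or attempt == max_attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...


# Example: a fake request that is rate-limited twice before succeeding
attempts = {"n": 0}


def fake_request():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise urllib.error.HTTPError("https://api.example", 429, "Too Many Requests", None, None)
    return "ok"


print(with_retries(fake_request, sleep=lambda s: None))
```

For budgeting, suppose every weekly check of 500 keywords went through that ~$1.20-per-1,000 endpoint. That comes to 26,000 requests a year, or roughly $31.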

Step 3: Optimise a priority content cluster

Pick three to five related pages: not random pages, but a genuine topical cluster. Apply the Four Pillars described above to all of them simultaneously.

Specifically: add FAQ schema to each page, write a direct 40–60 word answer in the opening paragraph, add or update internal links between cluster pages using descriptive anchor text, and ensure each page has at least one original image with a descriptive alt attribute. Track the cluster as a unit, not as individual pages.

Step 4: Build your first automation

Here's a basic weekly reporting script I'd wire up first:

- Pull your tracked keywords via the Ahrefs API (Enterprise tier required, minimum 50 units per request, so batch efficiently)

- For each keyword, hit the DataForSEO SERP API and check item_types for ai_overview

- Filter for keywords where an AI Overview is present but your domain isn't cited

- Output to a Google Sheet or Slack webhook with keyword, current rank, and AI Overview status
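The last two steps of that script can be written as pure functions, which keeps them easy to test. The row shape is hypothetical and depends on how you merge Ahrefs rank data with the DataForSEO result. The message body follows the {"text": ...} format that Slack incoming webhooks accept. The actual POST, for example with urllib.request, is left out.

```python
import json


def _norm(domain: str) -> str:
    """Lowercase and drop a leading 'www.' so example.com matches www.example.com."""
    return domain.lower().removeprefix("www.")


def uncited_ai_overviews(rows: list[dict], domain: str) -> list[dict]:
    """Rows where an AI Overview is present but `domain` is not among its cited domains.

    Hypothetical row shape:
    {"keyword": str, "rank": int or None, "ai_overview": bool, "cited_domains": [str, ...]}
    """
    target = _norm(domain)
    return [
        r for r in rows
        if r["ai_overview"] and target not in {_norm(d) for d in r["cited_domains"]}
    ]


def slack_payload(rows: list[dict]) -> str:
    """JSON body in the {"text": ...} shape that Slack incoming webhooks accept."""
    lines = [f"{r['keyword']}: rank {r['rank'] or 'n/a'}, AI Overview present, not cited" for r in rows]
    return json.dumps({"text": "\n".join(lines) or "No uncited AI Overviews this week."})


rows = [
    {"keyword": "what is oai-searchbot", "rank": 4, "ai_overview": True, "cited_domains": ["competitor.com"]},
    {"keyword": "ai seo checklist", "rank": 7, "ai_overview": True, "cited_domains": ["www.example.com"]},
    {"keyword": "crm software", "rank": None, "ai_overview": False, "cited_domains": []},
]
print(slack_payload(uncited_ai_overviews(rows, "example.com")))
```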

The friction point most people hit: Ahrefs API access is locked to the Enterprise plan at $1,499/month. If that's not viable, DataForSEO's keyword data endpoints cover most of the same ground at pay-as-you-go rates with a $50 minimum deposit. That makes them a genuinely flexible alternative for smaller teams.

Step 5: Implement a content scoring system

Create a simple scoring algorithm to automatically flag pages that need AI optimisation. Each page gets points:

| Signal | Points |
|---|---|
| FAQ schema present | +2 |
| Direct answer in first 100 words | +2 |
| Word count 800–2,500 | +1 |
| Question-format H2/H3 subheadings | +1 per heading (max 3) |
| Original image with alt text | +1 |
| Internal links to/from cluster | +1 |

Pages scoring below 5 go into a remediation queue. Run this against your full content inventory monthly. It takes an afternoon to build in Python against your CMS API, and it replaces a manual audit process that would otherwise take days.
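A minimal version of that scorer maps the point table one-to-one. It assumes you have already extracted each page's signals from your CMS into the hypothetical PageSignals fields below:

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    """Per-page signals extracted from your CMS (hypothetical field names)."""
    url: str
    has_faq_schema: bool
    answer_in_first_100_words: bool
    word_count: int
    question_headings: int
    has_original_image_with_alt: bool
    in_cluster_links: bool


def ai_readiness_score(p: PageSignals) -> int:
    """Score a page using the point table (maximum 10)."""
    score = 0
    score += 2 if p.has_faq_schema else 0
    score += 2 if p.answer_in_first_100_words else 0
    score += 1 if 800 <= p.word_count <= 2500 else 0
    score += min(p.question_headings, 3)  # +1 per question heading, capped at 3
    score += 1 if p.has_original_image_with_alt else 0
    score += 1 if p.in_cluster_links else 0
    return score


def remediation_queue(pages: list[PageSignals], threshold: int = 5) -> list[str]:
    """URLs scoring below the threshold, lowest score first."""
    low = [p for p in pages if ai_readiness_score(p) < threshold]
    return [p.url for p in sorted(low, key=ai_readiness_score)]


pages = [
    PageSignals("/pricing", False, True, 600, 0, False, True),
    PageSignals("/guide/ai-seo", True, True, 1800, 4, True, True),
    PageSignals("/blog/old-post", False, False, 3200, 1, False, False),
]
print(remediation_queue(pages))
```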

How to Track AI SEO Performance: Moving Beyond Rankings

Rankings are a lagging indicator at the best of times. In AI search, they're close to meaningless on their own. A page can sit at position three, never appear in an AI Overview, and generate zero AI referral traffic. Another page might rank at position eight, get cited constantly by Copilot and Perplexity, and drive higher-intent visitors who convert at a measurably better rate. You need to measure both realities separately.

The gap is stark: only 16% of brands have a systematic way to track AI search performance. Most teams are still reporting on organic clicks and keyword positions while the actual value shifts elsewhere.

The new KPI set

Start by defining what you're actually tracking:

- Total Citations: how often your pages are referenced as sources in AI-generated answers

- Grounding Queries: the phrases the AI used internally when retrieving your content (not what the user typed, but the AI's own reformulation)

- Average Cited Pages: how many distinct URLs from your site appear as sources per day

- AI Referral Traffic: sessions originating from ChatGPT, Perplexity, Copilot, and similar platforms, segmented in GA4 by source

Setting up Bing's AI Performance Dashboard

This is the most concrete measurement tool available right now. Microsoft launched the AI Performance dashboard in Bing Webmaster Tools in public preview on 10 February 2026, and it's free for any verified site.

To access it: log into bing.com/webmasters, select your property, and look for AI Performance in the left navigation. You'll get a 90-day rolling window by default.

What to focus on first:

- Total Citations chart: look for trend direction, not just absolute numbers. A line that keeps rising over 60 days is a good sign your content strategy is working.

- Grounding Queries: this is the genuinely interesting data. These are sampled phrases, not an exhaustive list, but they reveal how the AI is categorising your content. Suppose you're a B2B SaaS tool and your grounding queries are all informational rather than commercial. That's a signal your bottom-of-funnel pages need reworking.

- Page-level citation activity: export the CSV. You'll almost certainly find citation concentration, with one or two pages driving the majority of citations. Those pages are your template for what's working.
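Measuring that concentration from the export takes a few lines. The column names used here (URL, Citations) are placeholders, since the export's exact headers may differ. The sketch also assumes plain integer counts.

```python
import csv
import io


def citation_concentration(csv_text: str, top_n: int = 2,
                           url_col: str = "URL", count_col: str = "Citations"):
    """Return (top_n URLs by citations, their share of all citations).

    Column names are placeholders; match them to the headers in your export.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    rows.sort(key=lambda r: int(r[count_col]), reverse=True)
    total = sum(int(r[count_col]) for r in rows)
    top = rows[:top_n]
    share = sum(int(r[count_col]) for r in top) / total if total else 0.0
    return [r[url_col] for r in top], share


export = """URL,Citations
https://example.com/pricing,120
https://example.com/guide,60
https://example.com/blog/a,12
https://example.com/blog/b,8
"""
urls, share = citation_concentration(export)
print(urls, f"{share:.0%}")
```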

One caveat worth stating plainly: the data is sampled, carries a 2–3 day lag, and doesn't yet connect citations to clicks. It measures citation frequency, not prominence within a response.

Tracking Google AI Overviews performance

Google hasn't added an AI Overviews filter to Search Console. Despite rumours circulating in September 2025, Google's John Mueller confirmed those screenshots were fabricated. AI Mode traffic is counted under the "Web" search type in GSC, but you can't isolate it.

The practical workaround is to filter your GSC query report for question-based and long-tail conversational phrases, which correlate strongly with AI Overview triggers. Then monitor CTR trends on those queries specifically. A falling CTR alongside stable or rising impressions is the classic signature of AI Overview cannibalisation. Your listing is still being shown, but searchers get their answer without clicking through.
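That CTR-versus-impressions check is easy to automate against two comparable GSC exports. Three parts of this sketch are assumptions to tune, not thresholds Google publishes: the question-word pattern, the 20% relative CTR drop, and the 5% impression tolerance that stands in for "stable".

```python
import re

# Illustrative question openers; tune for your own query set.
QUESTION = re.compile(r"(how|what|why|when|which|who|where|can|does|is|are|should)\b", re.I)


def cannibalisation_candidates(before: dict, after: dict,
                               min_ctr_drop: float = 0.2,
                               impr_tolerance: float = 0.05) -> list[str]:
    """Question-style queries whose CTR fell while impressions held steady or rose.

    `before` and `after` map query -> (clicks, impressions) for two comparable
    periods. min_ctr_drop is relative (0.2 = CTR fell by at least 20%);
    impr_tolerance lets impressions dip slightly and still count as "stable".
    """
    flagged = []
    for query, (clicks_a, impr_a) in before.items():
        if query not in after or not QUESTION.match(query) or impr_a == 0 or clicks_a == 0:
            continue
        clicks_b, impr_b = after[query]
        if impr_b == 0:
            continue
        ctr_a, ctr_b = clicks_a / impr_a, clicks_b / impr_b
        impressions_held = impr_b >= impr_a * (1 - impr_tolerance)
        if impressions_held and (ctr_a - ctr_b) / ctr_a >= min_ctr_drop:
            flagged.append(query)
    return flagged


before = {"how does oai-searchbot work": (50, 1000), "what is geo": (30, 600), "crm pricing": (40, 800)}
after = {"how does oai-searchbot work": (20, 1200), "what is geo": (28, 500), "crm pricing": (10, 900)}
print(cannibalisation_candidates(before, after))
```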

For more precise tracking, AI search optimization tools such as Semrush's AI Overview tracking and Otterly.AI monitor citation presence across queries programmatically.

Correlating citations to business outcomes

Raw citation counts are vanity metrics unless you close the loop. In GA4, create a custom segment for sessions where the source contains chatgpt.com, perplexity.ai, or copilot.microsoft.com. Layer that against your conversion events (trial signups, demo requests, or whatever matters for your funnel).

For a SaaS pricing page specifically, you want to know: when that page gets cited in an AI answer, do the users who click through convert at a higher rate than standard organic? Onely reported that AI search traffic converts at roughly 5× the rate of traditional search. If your data shows something similar, that justifies prioritising citation optimisation on commercial-intent pages above almost everything else.
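Assuming you have exported session-level rows with a source and a converted flag, the comparison looks like this. The sketch puts every non-AI source into a single bucket. For the comparison described above, you would filter that bucket down to organic search first.

```python
# Referral domains named in this guide; extend as new AI platforms appear.
AI_SOURCES = ("chatgpt.com", "perplexity.ai", "copilot.microsoft.com")


def channel(source: str) -> str:
    """Bucket a session source as 'ai' or 'other'."""
    s = source.lower()
    return "ai" if any(domain in s for domain in AI_SOURCES) else "other"


def conversion_rates(sessions: list[tuple[str, bool]]) -> dict[str, float]:
    """Conversion rate per bucket from (session_source, converted) pairs."""
    counts: dict[str, list[int]] = {}
    for source, converted in sessions:
        bucket = counts.setdefault(channel(source), [0, 0])  # [sessions, conversions]
        bucket[0] += 1
        bucket[1] += int(converted)
    return {name: conv / n for name, (n, conv) in counts.items()}


sessions = [
    ("chatgpt.com", True), ("chatgpt.com", False), ("perplexity.ai", True),
    ("google", False), ("google", True), ("google", False), ("google", False),
]
print(conversion_rates(sessions))
```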

Pulling citation data via API

If you're building a BI dashboard, which you should be if you're running this at any scale, Bing Webmaster Tools exposes its data via API. Authenticate with your API key, hit the AI Performance endpoints, and pipe citation counts and grounding queries into whatever reporting layer you use (Looker Studio, Metabase, a custom dashboard).

Set up weekly automated pulls so you're tracking trend lines, not just spot-checking the UI. The grounding queries endpoint is particularly useful: feed those phrases back into your keyword research process as signals of how AI systems are actually categorising your content.
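This guide doesn't cover the Bing API's endpoint names or response fields, so this sketch starts after the pull. It assumes you already have daily citation counts and a list of sampled grounding queries in Python. It rolls days into ISO weeks for the trend line and returns the grounding queries not yet in your keyword list.

```python
from collections import defaultdict
from datetime import date


def weekly_citations(daily: list[tuple[date, int]]) -> dict[tuple[int, int], int]:
    """Sum daily citation counts into ISO (year, week) buckets, oldest first."""
    weeks: dict[tuple[int, int], int] = defaultdict(int)
    for day, count in daily:
        iso = day.isocalendar()
        weeks[(iso[0], iso[1])] += count
    return dict(sorted(weeks.items()))


def new_grounding_queries(grounding: list[str], keywords: list[str]) -> list[str]:
    """Grounding queries not already in the keyword list (case-insensitive, de-duplicated)."""
    known = {k.strip().lower() for k in keywords}
    seen, fresh = set(), []
    for query in grounding:
        key = query.strip().lower()
        if key not in known and key not in seen:
            seen.add(key)
            fresh.append(query.strip())
    return fresh


daily = [(date(2026, 3, 2), 5), (date(2026, 3, 3), 7), (date(2026, 3, 9), 4)]
print(weekly_citations(daily))
print(new_grounding_queries(["AI SEO tools", "ai seo tools ", "bing ai performance api"], ["AI SEO tools"]))
```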

Risk Management: AI Detection, Crawler Blocks, and Accuracy

Most AI SEO guides skip this entirely. That's a mistake: these risks are real, and ignoring them creates genuine liability in your content pipeline.

AI content detection: useful signal, not verdict

Tools like Originality.ai and GPTZero use pattern recognition to identify statistical fingerprints in AI-generated text: low perplexity, repetitive sentence structure, predictable token sequences. Their vendor-reported accuracy figures look impressive. GPTZero claims 99.3% accuracy with a 0.24% false positive rate; Originality.ai's Turbo model claims 99%+ on unmodified AI output.

Real-world performance is a different story. Independent testing shows Originality.ai's accuracy dropping to 76-83% across mixed content types, with false positive rates climbing to 4-5% on well-written human text. That's roughly one in twenty legitimate human-written pieces getting flagged.

Use these tools as a risk-scoring layer, not a final verdict. A high AI probability score should trigger closer editorial review, nothing more.

The crawler decision: GPTBot vs. OAI-SearchBot

Most teams conflate OpenAI's training crawler with its search crawler and block both, or neither, without thinking it through.

GPTBot feeds OpenAI's model training. OAI-SearchBot powers live ChatGPT Search citations. They're independent, so you can block one without touching the other.

For a B2B SaaS site with public marketing content, the sensible default is to block GPTBot (there's no reason to donate your content to model training). Allow OAI-SearchBot, PerplexityBot, and ClaudeBot, since these drive citations and referral traffic. One important caveat: since July 2025, Cloudflare blocks AI bots by default. If you're behind Cloudflare, check your Bot Fight Mode settings, or AI crawlers may be shut out of your site without you realising it.

The human-in-the-loop imperative

52% of businesses cite inaccuracy as their top risk with AI-generated content. That tracks with what I see in practice. AI models hallucinate citations, misstate statistics, and describe things that are simply wrong with complete confidence.

Every piece of AI-assisted content needs a mandatory review gate: fact-check all statistics against primary sources, verify that any named tools or products still exist and work as described, confirm that any claims about competitors are accurate and defensible. This isn't optional overhead. It's what separates content that builds authority from content that quietly erodes it.
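Part of that review gate can be automated. The sketch below pulls out every sentence that contains a statistic so a human can check each one against its source. The pattern matches percentages, multipliers like 2.8×, comma-grouped figures, and "million"/"billion" counts. It is a heuristic assumption and will miss bare numbers such as years.

```python
import re

# Percentages, multipliers (2.8× or 5x), comma-grouped figures, and "N million"-style counts
STAT_PATTERN = re.compile(
    r"\d+(?:\.\d+)?%"
    r"|\d+(?:\.\d+)?\s?(?:×|x\b)"
    r"|\d{1,3}(?:,\d{3})+"
    r"|\d+(?:\.\d+)?\s(?:thousand|million|billion)\b"
)


def fact_check_list(draft: str) -> list[str]:
    """Return each sentence containing a statistic, for manual verification."""
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if STAT_PATTERN.search(s)]


draft = (
    "AI search traffic grew 527% year over year. Our team loves writing. "
    "ChatGPT has 700 million weekly users. Entity presence raises citation likelihood by 2.8×."
)
for sentence in fact_check_list(draft):
    print("VERIFY:", sentence)
```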

Provenance and attribution

AI answers often don't disclose their exact sources, and you can't control what ChatGPT or Perplexity synthesises from your content. What you can control is how clearly your brand, products, and key claims are described on your own site.

If your about page, product descriptions, and author bios are precise and internally consistent, misattribution becomes harder. Think of it as entity hygiene: the cleaner your on-site signals, the more accurately AI systems represent you, whether they cite you or not.

The Future of AI SEO: Trends to Watch Beyond 2026

Will SEO be replaced by AI? It's the question I get asked most often right now. The honest answer is that the job title might change, but the function doesn't disappear; it gets harder and more valuable.

AI search traffic grew 527% year over year between 2024 and 2025. That's not a blip. The trajectory points clearly toward AI-mediated search becoming the default interface for information discovery. What changes isn't whether people search; it's how they search, and what it takes to be found.

Here's where I think the discipline is heading.

Agentic search changes the optimization target. Right now, you're optimising for a human who reads a result and clicks. Within the next two years, a meaningful share of queries will be executed by AI agents acting on behalf of users: booking, comparing, purchasing, summarising. Your content won't just need to answer questions. It'll need to be machine-parseable enough for an autonomous agent to act on. That means richer structured data (think Action schemas, not just FAQ), clean entity definitions, and potentially API-accessible content feeds that agents can query directly.

Real-time data becomes a genuine moat. As AI systems get better at synthesis, the edge shifts toward freshness and primary sourcing. Sites with direct access to live data (proprietary research, real-time pricing, original survey results) will be cited disproportionately. Static evergreen content will commoditise faster than it already has.

Multimodal is no longer optional. Vision-language models are maturing fast. Optimising only text in 2027 will feel like ignoring mobile in 2015. Images, video, and structured document formats all need the same careful attention you currently give your H2 hierarchy.

As for careers: the SEO professionals who'll thrive are the ones who can read data, build workflows, and think in systems, not the ones who chase algorithm updates. Whether you're working with free AI SEO tools or enterprise-grade AI-powered SEO tools, the job is evolving into something closer to digital visibility engineering. Anyone who has read ranked reviews of these platforms knows the tooling is getting more capable fast, and that's pushing the human role toward strategy and judgment rather than execution. Honestly, that's a more interesting place to be than where the discipline sat five years ago. The practitioners who treat artificial intelligence search engine optimization as a system to understand rather than a checklist to follow are building something durable; the rest are optimising for a version of search that's already changing under their feet.

Conclusion

Artificial intelligence search engine optimization isn't a checklist you complete; it's a system you build and iterate on. The sites that hold their ground in 2026 and beyond aren't the ones with the biggest budgets or the highest domain ratings. They're the ones that structure content for extractability, establish entity authority across platforms, and measure what actually matters: citations, grounding queries, and AI referral traffic, not just ranking positions.

If your Ahrefs Domain Rating sits in the 30s, that's not a reason to panic. It's a reason to be precise. Long-tail, conversational queries with genuine topical depth are still winnable, and automated workflows make that precision scalable without adding headcount.

Here's where to start: run a technical audit focused on structured data gaps, crawler permissions, and your current citation rate in Bing's AI Performance dashboard. Then pick one topic cluster and run a question-driven content sprint against it. Finally, automate one part of the pipeline, whether that's keyword clustering, content scoring, internal link mapping, or anything else that removes a manual bottleneck.
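For the crawler-permissions part of that audit, Python's standard-library `urllib.robotparser` can report whether a given user agent may fetch a URL under a robots.txt file. This is a minimal sketch under two assumptions: the list of AI-related user-agent tokens below (vendors add and rename these, so check each one's documentation), and the sample robots.txt and URL, which are hypothetical. Note that `robotparser` applies the first matching rule with simple prefix matching, which is not identical to the longest-match behaviour described in RFC 9309, so treat the result as a quick screen rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI-related user-agent tokens; verify against vendor docs.
AI_CRAWLERS = [
    "GPTBot",
    "OAI-SearchBot",
    "PerplexityBot",
    "ClaudeBot",
    "Google-Extended",
    "Bingbot",
]

# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
SAMPLE_ROBOTS = "\n".join([
    "User-agent: GPTBot",
    "Disallow: /",
    "",
    "User-agent: *",
    "Allow: /",
])


def crawler_permissions(robots_txt: str, url: str,
                        agents: list[str] = AI_CRAWLERS) -> dict[str, bool]:
    """Map each user agent to whether robots_txt allows it to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines directly, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    for agent, allowed in crawler_permissions(
        SAMPLE_ROBOTS, "https://example.com/blog/ai-seo"
    ).items():
        print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

To check a live site instead, you could call `set_url()` with the site's robots.txt URL and then `read()` in place of `parse()`.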

Whether you're working with free AI SEO tools or more sophisticated AI-powered SEO tools, the leverage is in the system design. The tooling moves fast, and fifteen minutes with any of the better AI search optimization tools makes it obvious that the gap between strategic use and checkbox use is already wide, and widening.

Build the system. The rankings follow.

Frequently Asked Questions

Can AI do search engine optimization?

Yes, but it's not a replacement for human strategy. AI handles specific tasks well: keyword clustering, SERP analysis, content gap identification, meta generation, and performance reporting can all be automated or significantly accelerated. What it can't do is exercise editorial judgment, build genuine relationships for link acquisition, or decide which keywords are actually worth targeting given your domain authority and commercial goals. The right mental model in 2026 is AI as infrastructure: it runs the repeatable, data-heavy work while you focus on the decisions that require context.
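As an illustration of the kind of repeatable work that's easy to hand off, here is a deliberately simple keyword-clustering sketch: greedy grouping by Jaccard overlap of word tokens. Many commercial tools cluster by SERP overlap or embeddings instead; the lexical approach, the 0.5 threshold, and the sample keywords are assumptions chosen for illustration, not a recommended production method.

```python
def tokens(keyword: str) -> set[str]:
    """Lower-case a keyword and split it into a set of word tokens."""
    return set(keyword.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity: shared tokens divided by total distinct tokens."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_keywords(keywords: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedily assign each keyword to the first cluster whose seed it overlaps.

    Each cluster is compared via its seed (first) keyword only, so input
    order affects the result.
    """
    clusters: list[tuple[set[str], list[str]]] = []
    for kw in keywords:
        kw_tokens = tokens(kw)
        for seed_tokens, members in clusters:
            if jaccard(kw_tokens, seed_tokens) >= threshold:
                members.append(kw)
                break
        else:
            # No cluster was similar enough: this keyword seeds a new one.
            clusters.append((kw_tokens, [kw]))
    return [members for _, members in clusters]


if __name__ == "__main__":
    sample = [
        "ai seo tools",
        "free ai seo tools",
        "best ai seo tools",
        "schema markup generator",
        "json-ld schema markup",
    ]
    for group in cluster_keywords(sample):
        print(group)
```

The machine does the grouping; deciding which of the resulting clusters is worth targeting at your authority level is still the human part.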

Is SEO dead or evolving in 2026?

Not dead, but the goalposts have moved. The core objective (connecting your content with people actively looking for it) hasn't changed. What has changed is where that visibility happens. Getting cited in an AI Overview or a Perplexity answer is the new page one for informational queries, and that requires a different playbook: topical authority, structured data, and entity presence rather than just keyword density and backlinks.

Organic click-through rates are under pressure: CTR for AI Overview queries dropped 61% in September 2025 [Source: taylorscherseo.com]. Even so, brands cited in AI Overviews still earn 35% more organic clicks than those that aren't [Source: taylorscherseo.com]. The channel isn't dying; it's bifurcating.

Can I do SEO myself?

Yes, and AI tooling has made the technical barrier lower than it's ever been. Keyword research, content briefs, schema markup, and performance dashboards are all accessible without an agency or a dedicated SEO hire.

The honest caveat: DIY SEO works best when you stop thinking in tasks and start thinking in systems. Manually writing one optimized page at a time doesn't scale. A repeatable workflow, even a lightweight one using Ahrefs for research, a content pipeline for production, and Google Search Console for monitoring, is what separates sustainable traction from a one-off win. If you're a technical founder or a marketing manager comfortable with data, this is genuinely achievable.

What is the SEO job market like in 2026?

Healthy, and paying more for the right skills. A Semrush analysis of 3,900 U.S. SEO job listings found the median salary for senior SEO roles reached $130,000, compared with $71,630 for other positions [Source: almcorp.com]. Specialists who can combine technical SEO with AI workflow automation command an additional $10,000–$18,000 salary premium, and 74% of enterprise companies plan to hire SEO professionals with AI expertise within the next 12 months [Source: webflow.jobs].

The profile employers want is shifting: less "keyword researcher who uses a dashboard" and more "practitioner who can build and operate the systems that power search visibility at scale." Worth keeping in mind whether you're hiring or developing your own skills.