April 10th, 2026

Is AI Generated Content Good for SEO? A Data-Backed Guide for 2026

Warren Day

If you've been experimenting with AI writing tools and trying to build a coherent content strategy around them, you've almost certainly hit a wall of contradictory data. One case study shows a 91% revenue surge from AI-assisted SEO work. Another documents a catastrophic ranking collapse after scaling AI content. Your LinkedIn feed oscillates between breathless hype and ominous warnings. For a technical founder or senior marketer responsible for scaling content in 2026, this isn't just noise; it's a genuine blocker to your quarterly roadmap.

So let's cut straight to it: is AI-generated content good for SEO? The data-backed answer for 2026 is that it's neither inherently good nor bad. It's a powerful but risky amplifier. On a site with real domain authority, strong E-E-A-T signals, and a disciplined editorial process, AI accelerates growth in ways that weren't possible two years ago. On a thin, low-authority site with no genuine expertise behind it, AI content doesn't paper over those weaknesses. It magnifies them, faster than any manual process ever could.

This matters more than most people realise. Research suggests somewhere between 30% and 40% of texts on active webpages are now AI-derived. The question isn't whether your competitors are using it. They are. The question is whether they're using it intelligently.

I build and operate Spectre, an AI-powered SEO content automation platform. What that hands-on experience has made clear is that the teams winning with AI content aren't the ones generating the most volume. They're the ones who've matched their AI usage to their domain's actual authority level, and kept humans in the loop at every critical decision point. Whether you're running a full AI content campaign or just testing the best free AI content generator you could find, the ceiling on your results is set by your domain, not your output volume. Even a free-tier content generator will outpace your editorial process if you're not careful about what goes live.

This article gives you a strategic framework to do exactly that: a Domain Rating-aware approach to AI content, a hybrid workflow blueprint, an honest look at the new AI-driven search landscape, and a clear action plan you can operationalise without gambling your domain authority on it.

The 2026 Data: What the Studies Actually Say

The research on AI-generated content and SEO doesn't contradict itself; it just gets misread by people cherry-picking the headline that confirms what they already believe. Look at the full dataset and a clear pattern emerges: the outcome depends almost entirely on how AI is used, not whether it's used.

Here's the contradiction laid out plainly:

| Signal | Finding | Source |
|---|---|---|
| The Penalty Risk | Pure AI content ranked 23% lower on average than human-written articles | Digital Applied, 2026 |
| The Penalty Risk | Pure AI content had a 54% traffic stability rate vs. 81% for human-written | Digital Applied, 2026 |
| The Penalty Risk | Pure AI articles earned 1.6 editorial backlinks at 12 months vs. 4.2 for human-written | Digital Applied, 2026 |
| The Growth Opportunity | Websites using AI content grew 5% faster than those not using AI (Jan 2024–Jan 2025) | Ahrefs, 2025 |
| The Growth Opportunity | 65% of businesses report better SEO results thanks to AI | Semrush, 2024 |
| The Growth Opportunity | AI-assisted hybrid content achieved near-parity with human-written content (4% ranking gap at 16 months) | Digital Applied, 2026 |

The 23% penalty and the 5% growth advantage aren't in conflict. They're measuring different things.

The Digital Applied study tracked 1,400 pure AI articles (LLM output with light copyediting only). The Ahrefs growth data captures sites using AI as part of their workflow, which in practice means AI-assisted production with human editorial oversight. These are fundamentally different content production models. Conflating them is how most of the bad takes in this space get generated.

The operative concept is what I'd call the Quality Threshold: the minimum level of originality, depth, factual accuracy, and genuine expertise a piece needs to be competitive in a given SERP. Human-written content from subject-matter experts clears this threshold almost by default. Pure AI output frequently doesn't. It synthesises existing information competently but rarely adds the original perspective, proprietary data, or earned authority that drives backlinks and long-term ranking stability.

So is AI-generated content good for SEO? The honest answer is: it depends on which kind. AI-assisted content, where a human applies substantive editorial judgment, integrates original data, and adds named expertise, clears the same Quality Threshold at a fraction of the time cost. Raw, unedited output usually doesn't, regardless of whether you're running a full AI content campaign or spinning up the best free AI content generator you could find on short notice.

There's one more variable the aggregate data obscures entirely: your Domain Rating. The Quality Threshold isn't fixed. A DR 70 domain can rank AI-assisted content that a DR 20 domain couldn't get indexed on a good day. A free-tier content generator can easily outpace your editorial process, but the ceiling on the results is still set by your domain's authority, not your output volume. That's the dimension the next section addresses directly.

The pragmatic answer: should you use AI for SEO in 2026?

Yes, but only if you match the tool to your domain's authority level. AI works best as a force multiplier inside a hybrid workflow: ideation, brief generation, templated content at scale, and technical optimisation. Deploying it for fully automated content creation, particularly on new or low-authority sites, carries real ranking risk. The determining variable isn't the AI itself. It's your Domain Rating.

The Domain Rating Matrix

This is the framework I use when advising on AI content strategy. Stop asking "should I use AI?" and start asking "what can my domain actually support?"

| DR Range | Primary Focus | AI Use Case | Human Input |
|---|---|---|---|
| DR < 30 | Build foundational E-E-A-T and topical authority | Briefs, outlines, keyword clustering | 80–90% |
| DR 30–60 | Scale supporting and mid-funnel content | First drafts for informational content; templated pages | 50–70% |
| DR > 60 | Strategic volume across the full funnel | First drafts including pillar content; programmatic pages | 30–50% |

The percentages aren't arbitrary. They reflect the trust buffer a higher-DR domain carries with Google. A site with strong backlink authority, established topical depth, and a track record of quality content has earned more tolerance for content that isn't perfect out of the gate. A low-DR site has none of that buffer.
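As a rough illustration, the matrix can be encoded as a small lookup so that a content brief can be tagged with its tier automatically. This is a minimal sketch: the `Tier` class and `tier_for` function are hypothetical names, and the boundaries simply mirror the table rows (below 30, 30 to 60 inclusive, above 60).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    label: str
    primary_focus: str
    ai_use_case: str
    human_input_pct: tuple[int, int]  # (low, high) share of human input


# Rows copied from the Domain Rating Matrix above.
LOW = Tier("DR < 30", "Build foundational E-E-A-T and topical authority",
           "Briefs, outlines, keyword clustering", (80, 90))
MID = Tier("DR 30-60", "Scale supporting and mid-funnel content",
           "First drafts for informational content; templated pages", (50, 70))
HIGH = Tier("DR > 60", "Strategic volume across the full funnel",
            "First drafts including pillar content; programmatic pages", (30, 50))


def tier_for(domain_rating: float) -> Tier:
    """Return the matrix row that applies to a given Domain Rating (0-100)."""
    if not 0 <= domain_rating <= 100:
        raise ValueError("Domain Rating is expected to be between 0 and 100")
    if domain_rating < 30:
        return LOW
    if domain_rating <= 60:
        return MID
    return HIGH


tier = tier_for(33)
print(tier.label, tier.human_input_pct)
```

A DR 33 site lands in the middle row, so by this reading at least half of each piece should still be human work.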

Where I actually sit

My own site is at DR 33, squarely in the cautious-scale tier. That means I use AI heavily for research synthesis, brief generation, and structural drafts, but every piece targeting a competitive keyword gets substantive human editing before it goes anywhere near the publish button. I'm not being conservative out of caution. I'm being conservative because the data supports it: pure AI content ranked 23% lower on average than human-written articles across a 16-month study of 4,200 articles. At DR 33, I can't afford to absorb that penalty.

So when people ask whether AI-generated content is good for SEO, my honest answer is: it depends on your DR. A DR 70 domain running a polished AI content campaign with editorial oversight will see very different results than a DR 25 site pushing raw output from the best free AI content generator it could find.

The cold start problem

There's a specific failure mode worth naming. Brand-new sites have almost no crawl budget, zero trust signals, and no topical history. Publishing AI content at volume into that environment doesn't just fail to help; it can actively signal low quality before the domain has any authority to offset it. On a new domain, even a free-tier generator produces pages faster than you can review them, and none of that volume raises the ceiling your authority sets.

AI isn't the root cause of cold-start problems. It reliably makes them worse when used as a shortcut.

The matrix above isn't a ceiling. It's a starting point. As your DR climbs, your AI leverage increases proportionally, which is exactly why building domain authority should be running in parallel with any AI content programme, not treated as something you'll get to later.

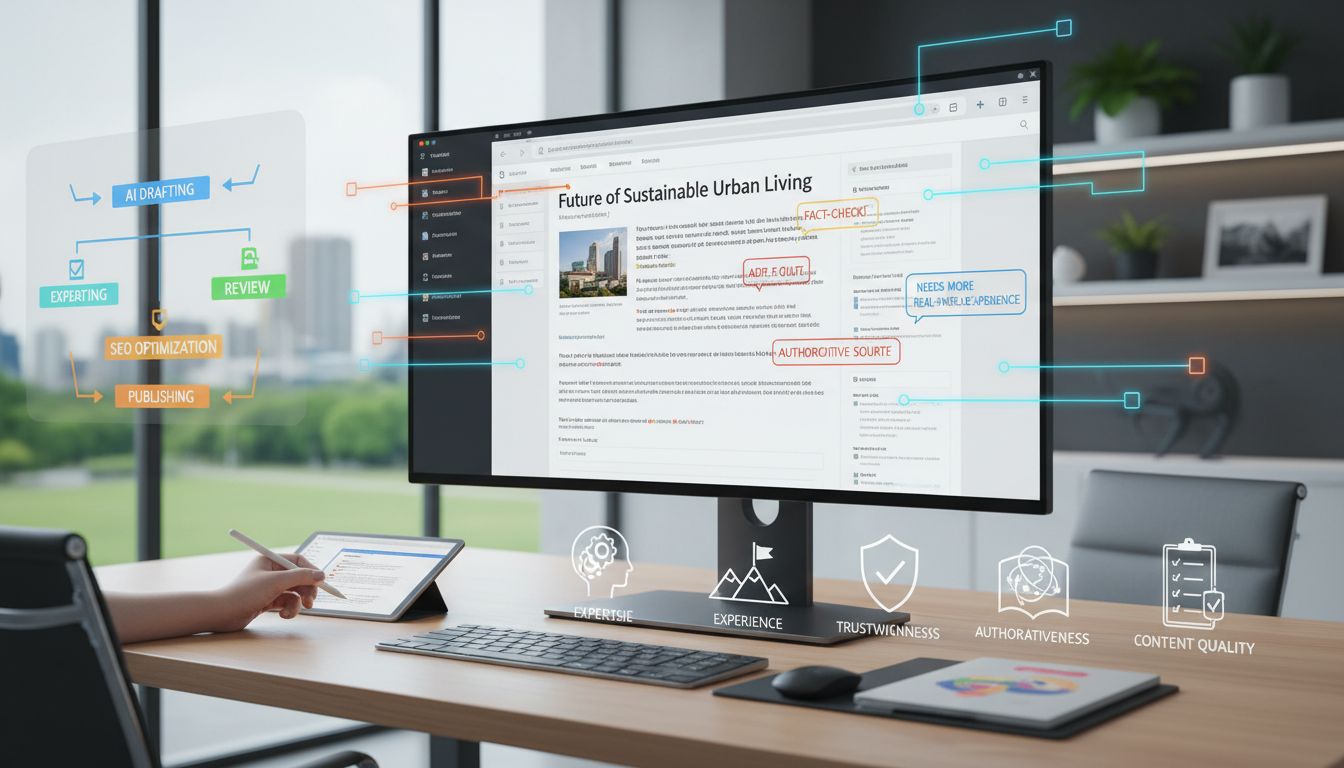

The strategic framework: a hybrid content assembly line

Think of this as the production spec that operationalises the Domain Rating Matrix from the previous section. The matrix tells you what to build; this pipeline tells you how to build it without shipping content that tanks your domain.

Six stages. Three are AI-led. Three are mandatory human gates.

Stage 1, Human-strategic: goal and E-E-A-T briefing

This is where your artificial intelligence campaign lives or dies, and it's entirely human work. Before a single prompt is written, someone with domain expertise defines the target keyword cluster, the search intent, the required E-E-A-T signals, the competitive angle, and the content's commercial purpose.

Skipping this stage is the single most common reason AI content fails. You're not briefing a copywriter; you're programming a system. Garbage in, garbage out is more literal here than anywhere else in content production.

Stage 2, AI-execution: ideation and outline

Feed the brief into your LLM of choice. Use it to generate heading structures, identify semantic gaps, surface related questions, and propose supporting evidence angles. This is where AI earns its keep: it's fast, comprehensive, and free of the cognitive bias that comes from being too deep in a niche.

Treat the output as a structural scaffold, not a content plan. You're looking for coverage gaps and structural logic, not copy.

Stage 3, AI-execution: draft generation

Generate the first draft against the approved outline. A 2,000-word draft that would take a writer 3-4 hours takes minutes. But the draft is raw material, not a finished product.

Stage 4, Human control: editorial, fact-check and E-E-A-T injection

This is where the quality threshold is crossed, or isn't. Non-negotiable. Cannot be abbreviated on anything you care about ranking.

Concretely, this means:

- Verify every statistic against its primary source. LLMs hallucinate citations with alarming confidence.

- Add unique anecdotes and analogies that only come from real experience: the kind of detail that signals genuine expertise to both readers and ranking systems.

- Strengthen arguments where the AI has produced plausible-sounding but shallow reasoning.

- Insert proprietary data where you have it: internal benchmarks, client results, original survey data.

- Adjust tone so it matches your brand voice, not the averaged-out style of the training corpus.

This stage typically takes 60-90 minutes for a long-form piece. That's the actual cost of quality.
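To make the first item in that checklist harder to skip, a script can pre-flag every sentence in a draft that carries a statistic, so the editor has an explicit list to verify. The sketch below is a deliberately simple heuristic: `STAT_PATTERN` and `sentences_needing_sources` are hypothetical names, and the regular expression only catches percentages, "Nx" multipliers, and currency amounts. It finds candidates for checking; it does not check anything itself.

```python
import re

# Percentages ("23%"), multipliers ("3x"), "N percent", and currency amounts.
STAT_PATTERN = re.compile(
    r"\d[\d,.]*\s?(?:%|x\b|percent\b)|[£$€]\s?\d[\d,.]*"
)


def sentences_needing_sources(draft: str) -> list[str]:
    """Return the sentences in a draft that contain a statistic-like figure."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if STAT_PATTERN.search(s)]


draft = (
    "Pure AI content ranked 23% lower on average. "
    "Editing still matters. "
    "Tooling costs £150 per month."
)
for sentence in sentences_needing_sources(draft):
    print("VERIFY:", sentence)
```

Each flagged sentence still needs a person to open the primary source and confirm the figure.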

Stage 5, AI-optimisation: meta, readability and internal linking

Run the edited draft through your optimisation tooling (Surfer, Clearscope, or equivalent) to check semantic coverage, heading structure, and keyword distribution. Use AI to generate meta title and description variants, and suggest internal linking candidates programmatically.

Stage 6, Human control: final QA and publishing

A final human pass for factual accuracy, brand alignment, and structural coherence before anything goes live. It doesn't need to be the same person as in Stage 4; in fact, a second pair of eyes is better.
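One way to keep the human gates from being quietly skipped is to encode the stages as data and block publishing until every human-owned stage has a sign-off. The following is a minimal sketch under that assumption; `Stage`, `PIPELINE`, `missing_human_gates`, and `can_publish` are illustrative names rather than part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    number: int
    name: str
    owner: str  # "human" or "ai"


# The six stages described above, in order.
PIPELINE = (
    Stage(1, "Goal and E-E-A-T briefing", "human"),
    Stage(2, "Ideation and outline", "ai"),
    Stage(3, "Draft generation", "ai"),
    Stage(4, "Editorial, fact-check and E-E-A-T injection", "human"),
    Stage(5, "Meta, readability and internal linking", "ai"),
    Stage(6, "Final QA and publishing", "human"),
)


def missing_human_gates(signed_off: set[int]) -> list[int]:
    """Return the human-owned stage numbers that have no sign-off yet."""
    return [
        stage.number
        for stage in PIPELINE
        if stage.owner == "human" and stage.number not in signed_off
    ]


def can_publish(signed_off: set[int]) -> bool:
    """A piece may go live only when every human gate has been signed off."""
    return not missing_human_gates(signed_off)


print(missing_human_gates({1, 4}))  # Stage 6 is still outstanding
print(can_publish({1, 4, 6}))
```

In practice the sign-offs would come from your CMS or project tracker; the point is that the publish step checks them rather than trusting the process.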

The ROI calculation nobody does honestly

The business case for this pipeline isn't pure speed. It's quality-adjusted output per pound spent.

Here's the model:

Net Efficiency Gain per Article = (Editor Rate × Drafting Time Saved) - (Editor Rate × Verification Time Added) - (Monthly Tool Costs ÷ Articles per Month)

A realistic example: a senior editor at £75/hr saves 3 hours of drafting per article (£225 saved), but spends 1.5 hours on verification and E-E-A-T injection (£112.50 cost), against £150/month in tooling spread across 20 articles (£7.50/article). Net gain: roughly £105 per article in time value, before accounting for the fact that the edited output is demonstrably higher quality than what the editor would have produced under time pressure alone.

The real win isn't the £105. It's that you can publish 3x the volume at the same editorial quality, which compounds over time as topical authority builds.
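The worked example above can be reproduced in a few lines, which makes it easy to substitute your own rates and volumes. This is a sketch of the same arithmetic and nothing more; the function and parameter names are illustrative.

```python
def net_gain_per_article(
    editor_rate: float,
    drafting_hours_saved: float,
    verification_hours_added: float,
    monthly_tool_cost: float,
    articles_per_month: int,
) -> float:
    """Time value gained per article after verification and tooling costs."""
    drafting_saving = editor_rate * drafting_hours_saved
    verification_cost = editor_rate * verification_hours_added
    tooling_cost = monthly_tool_cost / articles_per_month
    return drafting_saving - verification_cost - tooling_cost


# Figures from the example: £75/hr, 3 h saved, 1.5 h added, £150/month, 20 articles.
print(net_gain_per_article(75, 3, 1.5, 150, 20))  # 105.0
```

The result is sensitive to verification time: on the same inputs, the gain reaches zero once verification takes about 2.9 hours per article.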

What the SE Ranking data actually shows

SE Ranking's controlled experiment is the cleanest real-world validation of this pipeline I've seen. Six AI-assisted articles (properly briefed, AI-drafted, then substantively edited by human editors) accumulated approximately 555,000 impressions and 2,300+ clicks between June 2024 and July 2025. Three of the six rank in the organic top 10. Four appear as cited sources in AI Overviews.

The contrast with their second experiment is stark: 2,000 unedited AI articles across 20 brand-new domains saw early indexation and impressions, then collapsed around the three-month mark, with the share of pages ranking in the top 100 dropping from 28% to just 3%.

Same AI. Same tool. Entirely different outcomes. The variable was the human editorial layer, and the domain authority it was built on.

This is also why the question of whether AI-generated content is good for SEO doesn't have a clean yes or no answer. The SE Ranking data doesn't indict AI; it indicts AI without editorial process. Run a free AI content generator at volume into a new domain with no human review, and you'll likely replicate that collapse. Use even a free-tier generator as the drafting engine inside a proper hybrid workflow, and the numbers look very different.

Tool deep dive: can ChatGPT 'do SEO' in 2026?

The short answer: no. A general-purpose LLM can't run your SEO strategy end-to-end. It has no access to live SERP data, no awareness of your domain's authority relative to competitors, no memory of your brand voice across sessions, and a well-documented tendency to hallucinate statistics with complete confidence. Treating it as an SEO solution rather than an SEO component is one of the most expensive mistakes I see teams make.

That said, used correctly, it's genuinely powerful. The key is knowing exactly where it fits.

Where general LLMs earn their keep:

- Keyword clustering: Feed ChatGPT or Claude a flat list of 200 keywords from Ahrefs and ask it to group them by intent and semantic similarity. It does this well and saves hours.

- Content brief scaffolding: Give it the top 5 SERP URLs for a target keyword and ask it to identify common H2/H3 patterns, missing subtopics, and question coverage gaps. This is essentially what large media editorial teams do, using AI for intelligent summarisation of SERP data before briefing writers, rather than having writers do the manual analysis themselves.

- Meta description variants: Generate 10 variations, A/B test the ones that feel right. Fast, low-risk, genuinely useful.

- H2/H3 brainstorming: Structural ideation before you commit to an outline.

What it can't do: tell you whether a keyword is actually winnable at your domain rating, score your draft against live competitor content, or adapt its output to your brand voice without extensive prompt engineering every single time.
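For the keyword clustering use case in the list above, most of the practical work is preparing a clean, unambiguous prompt from a raw keyword export. The sketch below only assembles that prompt text; it makes no API call, and `build_clustering_prompt` along with its wording is an assumption about what a reasonable instruction looks like, not a tested recipe.

```python
def build_clustering_prompt(keywords: list[str], max_clusters: int = 15) -> str:
    """Assemble a clustering prompt from a flat keyword export."""
    # Normalise and de-duplicate so the model isn't asked to cluster repeats.
    cleaned = sorted({kw.strip().lower() for kw in keywords if kw.strip()})
    keyword_lines = "\n".join(f"- {kw}" for kw in cleaned)
    return (
        f"Group the {len(cleaned)} keywords below into at most {max_clusters} "
        "clusters by search intent and semantic similarity.\n"
        "For each cluster, give a short label, the dominant intent "
        "(informational, commercial, transactional, or navigational), "
        "and the keywords that belong to it.\n"
        "Use every keyword exactly once and do not invent new ones.\n\n"
        f"{keyword_lines}"
    )


prompt = build_clustering_prompt(
    ["AI SEO tools", "ai seo tools ", "best ai writer", ""]
)
print(prompt)
```

Paste the result into ChatGPT or Claude, then spot-check the clusters against your own knowledge of the niche before building briefs from them.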

Evaluating 'best free AI content generator' tools

If you're searching for the best free AI content generator, you'll land on ChatGPT's free tier, Claude Sonnet, and Gemini. They're all capable drafting engines. None of them are SEO tools.

The practical limitations are real. Free tiers hit token limits mid-workflow. There's no persistent brand voice: every new session starts from zero. Output defaults to the same generic structure that every other user gets, which means your content looks like everyone else's. And there's no integration with live SEO data, so the draft has no awareness of what's actually ranking, what the content gaps are, or how your competitors have structured their pages.

Using a content generator free of SEO context is like writing a brief without reading the SERP. You might get lucky, but you're flying blind.

The role of specialised SEO platforms

This is where the real leverage is. Tools like Surfer SEO, Frase, SE Ranking's Content Editor, and Ahrefs' Content Gap analysis do something a general LLM fundamentally can't: they anchor your content decisions in live, competitor-specific data.

Surfer scores your draft against the top-ranking pages in real time. Frase pulls the actual questions being asked in the SERP and builds briefs around them. Ahrefs shows you exactly which subtopics your competitors cover that you don't. Jasper and Writesonic, when configured with brand voice settings and connected to SEO data, narrow the generic-output problem considerably, though they're not immune to it.

Honest budget recommendation: a paid SEO platform (Surfer or SE Ranking at roughly £80-120/month) combined with ChatGPT or Claude for drafting will outperform most mid-tier "AI writer" subscriptions that promise to do everything. The all-in-one tools rarely do any single thing as well as the specialists.

The workflow that actually works: use a general LLM for raw ideation and first-draft velocity, then pipe the output through a specialised platform for optimisation against live SEO metrics, and put a human editor on it before it goes anywhere near your CMS. That's not a workaround. That's the architecture.

This also connects back to the broader question of whether AI-generated content is good for SEO, and the answer keeps coming back to the same variable. Whether you're running a full AI content campaign or just using a general LLM for drafts, the platform you pair it with matters as much as the AI itself.

The 2026 Landscape: SEO Isn't Dead, But Its Rules Have Evolved

SEO isn't dead. But if you're still measuring success purely by blue-link rankings and organic click volume, you're optimising for a game that's already changed around you.

The more accurate framing: SEO has expanded into what I'd call AI Ecosystem Optimisation. Your content now needs to perform across traditional SERPs, Google AI Overviews, and a growing cluster of AI answer engines, each with its own citation logic and referral behaviour.

The dual reality of AI Overviews and answer engines

Here's the tension you need to sit with. AI Overviews are simultaneously cannibalising your organic clicks and creating a new referral channel worth taking seriously.

On the cannibalisation side: organic CTR for queries with AI Overviews dropped from 1.76% to 0.61% between June 2024 and September 2025, which Seer Interactive reports as a 61% decline (the two endpoints quoted here imply a relative drop closer to 65%). Either way, that's not a rounding error. For informational queries where AI Overviews are most prevalent, expect significantly fewer clicks even if you're ranking in position one.

On the opportunity side, AI answer engines are becoming a legitimate referral channel. Generative AI monthly visits grew 76% year over year per SimilarWeb's 2025 report, and ChatGPT now accounts for over 77% of AI chatbot referral traffic globally according to Statcounter. That traffic converts: multiple studies report AI-referred visitors converting at 2x to 14x the rate of standard organic traffic, because they arrive pre-qualified by a conversational query.

The Ahrefs engagement data adds nuance: AI visitors averaged 4 pages per session versus 5.2 for search visitors, with a slightly higher bounce rate (67.8% vs 63.7%). Fewer pages, but higher purchase intent. A different visitor profile, not a worse one.

Optimising for the new referral streams

Getting cited by AI systems, whether Google's AI Overviews or ChatGPT, requires a different technical approach than traditional SEO.

Structured data is the most underutilised lever here. In March 2025, Google publicly stated that structured data is "critical for modern search features, including Generative AI, because it is efficient, precise, and easy for machines to process." Microsoft confirmed the same thing that month. Organisations using entity-based schema appeared 3x more often in AI responses according to BrightonSEO 2025 data from Schema App's Martha van Berkel.

The practical priority: implement Organization, Person, Article, and Product schema correctly, not as decorative markup but as machine-readable entity declarations that connect your content to the knowledge graph. Your author bios, your brand name, your product SKUs: all of it needs to be unambiguous to a machine.
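As a concrete reference point, here is a minimal sketch of what an Article declaration with a named Person author and an Organization publisher might look like, generated as JSON-LD. The `@type` values and property names come from the schema.org vocabulary; the `article_jsonld` helper and the example.com URLs are placeholders, so substitute your own canonical URLs.

```python
import json


def article_jsonld(
    headline: str,
    date_published: str,
    author_name: str,
    author_url: str,
    org_name: str,
    org_url: str,
) -> dict:
    """Build a schema.org Article object with explicit author and publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
    }


markup = article_jsonld(
    headline="Is AI Generated Content Good for SEO? A Data-Backed Guide for 2026",
    date_published="2026-04-10",
    author_name="Warren Day",
    author_url="https://example.com/authors/warren-day",  # placeholder URL
    org_name="Spectre",
    org_url="https://example.com",  # placeholder URL
)
print(json.dumps(markup, indent=2))
```

The printed JSON is normally embedded in the page inside a `<script type="application/ld+json">` element, and it is worth running through a structured data validator before it ships.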

Beyond schema, conciseness matters. AI systems extract direct answers. Content structured with clear headings, short definitional paragraphs, and explicit question-answer patterns gets pulled more reliably than dense prose.

For monitoring where your brand actually appears across ChatGPT, Perplexity, and Gemini, tools like Scrunch AI are purpose-built for this, tracking citation frequency and brand mentions across AI engines in a way that Google Search Console simply doesn't cover.

E-E-A-T: from algorithmic checkbox to business imperative

When an AI system synthesises an answer from dozens of sources, it has to decide which sources to trust. That decision is driven by the same signals Google's quality raters have been evaluating for years, but the stakes are now higher.

A thin author bio and a generic "about us" page used to be a minor SEO weakness. Now it's a reason to be excluded from an AI-generated answer entirely. Demonstrable E-E-A-T (named authors with verifiable credentials, cited sources, original data, and consistent topical authority) is what gets you into the citation pool. It's not a checklist item. It's the infrastructure that determines whether AI systems treat your domain as a trusted source or background noise.

Worth sitting with: a Stanford HAI study on medical RAG found that up to 30% of statements in GPT-4 RAG responses are unsupported by their cited sources, and nearly half of responses contain at least one unsupported statement. That's not a case against using AI; it's a case for making your source material so clearly structured and credibly attributed that AI systems can't easily misrepresent it. Strong E-E-A-T signals reduce the chances your content gets mangled in the synthesis process.

Common pitfalls and why 85% of AI SEO projects fail

The failure rate isn't a technology problem. It's a process problem. Teams that struggle with AI content aren't using worse tools; they're skipping the editorial controls that turn raw AI output into something Google actually wants to rank.

Here's where most projects go wrong, and what to do instead.

| Pitfall | Consequence | The Antidote |
|---|---|---|
| Mass-producing low-differentiation content | Ranking erosion post-March 2024 core update; Google's spam systems now specifically target scaled, lookalike content | Every piece needs a differentiating angle: original data, a specific use case, or a named expert perspective. Volume without differentiation is a liability, not an asset |
| Publishing unverified AI drafts | Hallucinated statistics, broken citations, outdated facts embedded in indexed pages | Mandatory fact-check layer before publish. Every stat needs a live source URL. Every claim needs a human to vouch for it |
| Using AI for YMYL content without expert review | Severe E-E-A-T penalties; manual actions in health, finance, and legal verticals | For YMYL topics, AI handles formatting and structure only. The substance (the actual advice, diagnosis framing, or legal interpretation) must come from a credentialed human. Required human input here is 80–100% |
| Ignoring structured data and freshness signals | Excluded from AI Overviews and knowledge-graph features despite strong organic rankings | Implement relevant schema (article, FAQ, person, product). Refresh high-performing pages quarterly with updated data and dates |
| Treating AI as a volume multiplier, not a quality multiplier | Early indexing gains evaporate; traffic volatility after core updates | Use AI to produce better content faster, not more content carelessly. One well-researched hybrid post outperforms ten thin AI articles every time |
| Never updating AI-generated content | Stale pages lose rankings as fresher, more accurate competitors take over | Build a refresh cadence into your workflow. AI content ages faster because it often lacks the original sourcing that earns evergreen authority |

The hallucination problem is worse than most people admit

So, is AI-generated content good for SEO? Generally yes, but only with guardrails. The hallucination risk is real and underestimated in most content pipelines.

A Stanford HAI study on medical AI responses found that even GPT-4 with RAG produced at least one unsupported statement in nearly half of responses. That's in a controlled research context with explicit source retrieval. In an unmonitored content pipeline, the rate is almost certainly worse.

Publishing unverified AI output isn't just an SEO risk; it's a credibility risk. One factually wrong article on a sensitive topic can undo months of authority building. This applies whether you're running a full AI content campaign, using the best free AI content generator on the market, or building with an enterprise stack. The output quality ceiling is set by your editorial process, not the tool.

Worth being direct about the free-tier problem too: lower-cost tools often skip the retrieval layer entirely, which makes hallucination more likely, not less. Free doesn't mean unusable; it means the fact-checking burden shifts entirely onto you.

One more thing worth stating plainly: disclose significant AI use where it isn't obvious. Google's guidance doesn't mandate disclosure, but the direction of travel is clear. Transparency is increasingly a trust signal, not a confession.

Myth Busting: The so-called 'AI rules' (30%, 80/20, 3-3-3)

If you've spent any time in SEO communities or LinkedIn threads, you've probably seen people citing hard percentage rules for AI content. Most of them are invented.

The '30% rule' doesn't exist

There's no Google policy, guideline, or algorithm that says "keep AI content below 30% and you'll be fine." This rule appears to have started as a loose interpretation of older content quality discussions, then got laundered through enough blog posts that it feels official. It isn't.

What Google actually evaluates is whether content is helpful, reliable, and people-first. No token ratio, no percentage threshold, no detection trigger that fires at 31%. The real threshold is qualitative: does the page demonstrate genuine expertise, satisfy user intent, and offer something a competitor hasn't already covered better?

Chasing a percentage gives you false confidence. A page that's 70% human-written but padded with generic observations will underperform a page that's 80% AI-drafted but rigorously edited by a subject-matter expert who added real-world data, first-hand experience, and a clear point of view.

The 80/20 rule, reframed for AI content

The 80/20 principle is real. It's just being applied backwards by most teams. People assume: "80% of the content can be AI, 20% human-touched." That's not how leverage works here.

The more accurate framing: 20% of your human effort, applied to strategy, brief quality, editorial review, and E-E-A-T injection, will determine 80% of your AI content's ranking potential. The AI does the structural heavy lifting. The human effort is the force multiplier. Get the brief wrong or skip the editorial pass, and you've just scaled mediocrity.

The '3-3-3 rule' is a sales framework

The 3-3-3 rule (contact a prospect within 3 minutes, follow up 3 times, across 3 channels) is a sales cadence framework. It has nothing to do with SEO or AI content. Its appearance in People Also Ask data alongside this topic is almost certainly a SERP artefact from overlapping keyword clusters. Worth knowing so you don't spend time hunting for an SEO version that doesn't exist.

The principle behind the myths

These rules spread because people want certainty in an uncertain system. Rigid percentage-based rules are a substitute for strategic thinking, not a version of it. The only framework that holds up is the one built on your actual domain authority, your content pipeline quality, and the depth of human expertise you're injecting at the right stages.

The Human Edge: The 3 SEO & content roles AI can't replace

The previous section dismantled the myth that rigid percentage rules can substitute for strategic thinking. Here's the corollary: the humans in your content pipeline aren't there to apply those rules; they're there to make judgements that no rule can capture.

These aren't roles that survive AI. They're roles that AI makes more valuable.

1. The Strategic Editor (the E-E-A-T architect)

This is the person who decides what your content stands for. They define the content mission, set the quality threshold, and make the final call on whether a piece earns a publish or goes back for rework. Not because they're checking a list, but because they understand what your brand's authority actually rests on.

AI can produce a structurally coherent article in minutes. What it can't tell you is whether that article advances your topical authority, differentiates you from the three competitors ranking above you, or quietly undermines the trust you've spent two years building. That judgement is editorial. It lives in a person.

In an AI-augmented workflow, the Strategic Editor stops writing first drafts entirely. They move upstream, into brief quality, content strategy, and workflow governance. It's a promotion, not a preservation.

2. The Subject Matter Expert (the trust signal)

For YMYL content, complex B2B topics, or any niche where the reader is making a real decision based on what they read, the SME is the difference between content that ranks and content that gets filtered out by E-E-A-T signals.

LLMs synthesise from public data. They can't give you a proprietary case study, a hard-won opinion formed from 10 years in a specific vertical, or the kind of nuanced caveat that only comes from having actually done the thing. That's what pure AI content consistently lacks, and it's a real reason to question whether AI-generated content is good for SEO in isolation, without human expertise layered in. It's why it underperforms human-written content on average.

The SME's role shifts here. They're no longer writing 2,000-word articles from scratch. They're doing a 30-minute recorded interview, reviewing a draft for accuracy, or adding a paragraph of genuine insight to an AI-generated scaffold. Their byline is a trust signal. Their time is spent where it creates the most leverage.

3. The SEO Analyst (the adaptive strategist)

This role has fundamentally changed. The 2026 SEO Analyst isn't primarily building keyword lists; they're interpreting a more complex performance picture than most teams are currently equipped to read.

Which content types are being cannibalised by AI Overviews? Which queries still drive clicks? How does AI referral traffic from ChatGPT compare to organic search traffic in terms of engagement and conversion? A free AI content generator or a full AI content campaign can scale output fast, but none of that matters if nobody's reading the data on the other end. These aren't questions a tool answers automatically; they require someone who can read the data, form a hypothesis, and restructure the workflow accordingly.

This is the role that turns the hybrid content pipeline into a learning system rather than a production line. And whether your content generator is free or paid, this is also the person who decides whether what's being generated is actually worth publishing.

The throughline across all three roles is the same: AI handles the repeatable and the generative. Humans own the strategic, the experiential, and the interpretive. The goal isn't to protect headcount; it's to redeploy it where it actually compounds.

The Verdict on AI-Generated Content and SEO

The question "is AI-generated content good for SEO?" has a genuinely unsatisfying answer: it depends entirely on what you're building on top of.

AI is an amplifier. Point it at a site with real authority, genuine subject matter expertise, and a disciplined editorial process, and it accelerates everything. Point it at a thin site with no backlink profile and no human oversight, and it accelerates the decline just as efficiently.

The sustainable model for 2026 isn't AI-first or human-only. It's a phase-gated assembly line where AI handles the scalable generative work (research synthesis, structural drafts, metadata variants) while humans own the irreplaceable parts: strategy, E-E-A-T signals, factual validation, and the original perspective that search engines and readers actually reward.

Whether you're running a full AI content campaign or just experimenting with a free AI content generator, the Strategic Editor, the Subject Matter Expert, and the SEO Analyst aren't being replaced. They're becoming the control points that determine whether your AI investment compounds or collapses.

Start here: audit your domain rating, map your next ten content pieces against the Domain Rating Matrix, and run one target topic through the full Hybrid Content Assembly Line. Whether your content generator is free or paid, measure the time and the rankings. That data, your data, will tell you more than any benchmark study ever could.