April 4th, 2026

A Founder's Guide to AI SEO Tools for Automated Content Publishing

Warren Day

You're caught between two realities: your content calendar is empty, and your schedule is full.

You know SEO compounds over time: it's one of the few growth channels where the work you do today pays dividends eighteen months from now. But the daily grind of keyword research, brief writing, drafting, and optimisation feels like a second job you never signed up for. So you've started exploring AI SEO tools, probably beginning with ChatGPT. And you've hit the same wall everyone hits: the output is fluent, fast, and almost entirely useless for ranking.

That's not a failure of AI. It's a failure of workflow.

By 2026, 91% of marketers report actively using AI in their work, but only 41% can actually prove ROI from it. That gap between adoption and results is where most founders are stuck. They've bolted AI tools onto an existing process without stopping to rethink the process itself. More tools, same bottlenecks.

The winning strategy isn't hunting for the single best AI SEO tool. It's building a lean, automated content pipeline where specialised AI handles execution at each stage, and you stay in the loop only where human judgement genuinely matters: strategy, positioning, and the final edit that injects the authority no model can fabricate.

What follows is that blueprint. A four-pillar tool stack mapped to the full content pipeline, from keyword discovery to CMS publication. A founder-specific 80/20 workflow you can run in a few focused hours per week. And a 30-day implementation plan that gets you to consistent output without rebuilding your entire operation from scratch.

The State of SEO in 2026: It's Not Dead, It's Different

Let me be direct: SEO isn't dying. It's undergoing the most significant structural shift since Google introduced PageRank. The rules have changed, the metrics have changed, and the strategies that worked in 2021 are now liabilities. For lean, agile teams, that's actually an opening.

Here's the number that should reframe your entire approach: 64% of global searches in mid-2025 resulted in zero clicks. The user got their answer from an AI Overview, a featured snippet, or a knowledge panel and never visited anyone's website. If you're still measuring SEO success purely by organic clicks, you're tracking the wrong thing.

Visibility and citation are the new primary KPIs. Appearing in an AI Overview, being referenced in a Google-generated summary, ranking in the sources panel of a Perplexity answer -- these are brand impressions at scale. The goal isn't just to drive a click; it's to become the source that AI systems trust and cite. That rewards authority over volume. Different game entirely.

Now, the AI content paradox. Ahrefs analysed 900,000 newly published web pages in April 2025 and found that 74% contained some form of AI-generated content. Every competitor in your space is already doing this. Publishing AI-generated text is table stakes, not an edge. The edge comes from how you use it.

This is where E-E-A-T becomes the real competitive moat. Google's algorithms increasingly reward content that demonstrates genuine Experience, Expertise, Authoritativeness, and Trustworthiness -- signals that raw, unedited AI output structurally cannot provide. First-person insights, named authors with verifiable credentials, original data, specific examples drawn from real work. These are things an LLM can't fabricate convincingly at scale, and they're exactly what separates content that ranks from content that clutters an index.

Will AI replace SEO? No. Not one of the best AI SEO tools or AI SEO optimisation tools available right now can replace the strategic judgement of someone who actually understands their market, their customers' real questions, and where their domain has the authority to compete. AI replaces tasks -- first drafts, keyword clustering, meta descriptions, content briefs. That's it.

The founder's role now is editor-in-chief and systems architect. Not writer. Not keyword researcher.

The founders who win at SEO in 2026 are the ones who build a system around that insight.

Architecting Your AI SEO Tool Stack: The Founder's Blueprint

Most founders approach AI SEO tools the way they approach any SaaS purchase: search "best X tool", pick the one with the slickest demo, bolt it onto whatever they're already doing. Then they wonder why nothing changes.

"Which AI tool is best for SEO?" is the wrong question. It's like asking which tool is best for building a house. The answer depends entirely on what stage of construction you're in.

What actually works is treating your content operation as a pipeline, not a collection of disconnected tools. Each stage has a job. Each tool handles that job. Data flows between them with minimal human intervention. You step in at the decision points that require real judgement -- keyword strategy, editorial quality, brand voice -- and let the system handle execution.

This is the architecture I built into Spectre. Same mental model I'd hand to any founder who asked how to get SEO working without hiring a full content team.

The Four-Pillar Pipeline

The pipeline breaks into four distinct pillars. Get this structure right and the tool choices become obvious. Get it wrong and you'll spend more time managing tools than creating content.

Keyword Ideas

│

▼

[Pillar 1: Research & Strategy]

Ahrefs · Semrush · DataForSEO

│

▼ (Brief)

[Pillar 2: Content Creation]

ChatGPT · Claude · Jasper

│

▼ (Draft)

[Pillar 3: On-Page Optimisation]

Surfer SEO · MarketMuse · Frase

│

▼ (Optimised Draft)

[Pillar 4: Publishing & Distribution]

Make · CMS APIs · Zapier

│

▼

Live Post + Distribution

The arrows matter as much as the boxes. Every handoff between pillars is an opportunity for the workflow to collapse into manual work. Your job as the architect is to wire those handoffs together -- via APIs, webhooks, or automation tools like Make or n8n -- so data flows downstream without you copy-pasting between browser tabs.

AI SEO Tools by Pillar

| Pillar | Purpose | Tools |
|---|---|---|
| Research & Strategy | Keyword discovery, SERP analysis, competitor gaps, content briefs | Ahrefs, Semrush, DataForSEO, Frase |
| Content Creation | First-draft generation from brief | ChatGPT, Claude, Jasper, Surfer AI |
| On-Page Optimisation | Scoring, semantic coverage, structure, E-E-A-T signals | Surfer SEO, MarketMuse, Clearscope |
| Publishing & Distribution | CMS publishing, internal linking, social variants, indexation | Make, n8n, Zapier, WordPress API, Webflow API |

All-in-One vs. Best-of-Breed

There's a real debate here. I'll give you the honest version, not the vendor version.

All-in-one platforms like Semrush or Ahrefs cover multiple pillars adequately. Data stays in one place, the learning curve is contained, and you're not debugging broken Zaps at 11pm. For a founder who's time-poor and just getting started, this is often the right call -- get one tool working before you build a stack.

Best-of-breed stacks -- Ahrefs for research, Surfer for optimisation, Claude for drafting, Make for publishing -- give you best-in-class capability at each pillar. But they come with what I call the integration tax: the hidden cost in developer time, Zapier task limits, API rate limits, and the ongoing maintenance burden of keeping five tools talking to each other. When one tool updates its API schema, your pipeline breaks. Nobody mentions that in the "best tools" roundups.

The practical answer for most founders at the 1--50 employee stage: anchor your stack on one solid research platform (Ahrefs or Semrush), add a dedicated optimisation layer (Surfer SEO is the default choice for good reason), and automate the publishing handoff. Three tools. Not ten.

The Human-in-the-Loop Model

Here's the bit most AI SEO content gets wrong: the pipeline doesn't replace your judgement, it protects your time so you can apply it where it counts.

You should be making decisions at exactly two points -- which keywords to target, and whether the final draft is good enough to publish. Everything in between -- brief generation, first draft, optimisation scoring, CMS upload -- should be automated or AI-assisted.

74% of new web pages published in April 2025 contained some AI-generated content. The floor for AI content has collapsed. The only differentiator left is the quality of the human judgement layered on top of it.

That's the blueprint. The next four sections walk through each pillar in detail -- what the tools actually do, where they fail, and how to wire them together without creating a maintenance nightmare.

Pillar 1: Research & Strategy -- Finding the Right Battles

Before any AI tool writes a single word, you need to know which keywords are worth targeting. This is where most founders waste the most time -- and where AI acceleration has the clearest payoff.

The foundation is still Ahrefs or Semrush. No shortcut around reliable keyword data. But the AI-enhanced features layered on top -- keyword clustering, content gap analysis, topical authority mapping -- compress hours of spreadsheet work into something a founder can action in a single session. Ahrefs' keyword clustering groups semantically related terms automatically, so instead of targeting 40 isolated keywords, you're building 5 topic clusters that reinforce each other.
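
To make the clustering idea concrete, here is a minimal sketch of grouping keywords by word overlap. It is a toy heuristic, not how Ahrefs or any other vendor actually clusters; the stop-word list, the `threshold` value, and the function names are all assumptions chosen for illustration.

```python
# Toy keyword clustering by token overlap (Jaccard similarity).
# Assumption: this is an illustrative heuristic, not any vendor's algorithm.

STOP_WORDS = {"for", "the", "a", "to", "in", "of", "best"}  # hypothetical list


def tokens(keyword: str) -> frozenset[str]:
    """Lower-case the keyword and drop stop words."""
    return frozenset(w for w in keyword.lower().split() if w not in STOP_WORDS)


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Share of distinct words the two keywords have in common."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_keywords(keywords: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy pass: join the first cluster whose seed keyword is similar enough."""
    clusters: list[list[str]] = []
    for kw in keywords:
        for cluster in clusters:
            if jaccard(tokens(kw), tokens(cluster[0])) >= threshold:
                cluster.append(kw)
                break
        else:
            clusters.append([kw])  # no match found: start a new cluster
    return clusters


if __name__ == "__main__":
    ideas = [
        "ai seo tools",
        "best ai seo tools",
        "ai seo tools for startups",
        "content brief template",
        "seo content brief template",
    ]
    for group in cluster_keywords(ideas):
        print(group)
```

Commercial tools work from far richer signals than shared words, but the output has the same shape: a handful of topic groups you approve or reject, rather than a flat list of isolated terms.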

The real leverage comes when you add a specialised research tool like Frase. Feed it a seed keyword and it analyses the top-ranking pages, extracts the questions people are asking, identifies structural patterns across competitors, and produces a content brief you'd normally spend half a day building manually. Embryo Digital saved 180 hours per month by shifting their briefing workflow to Frase -- that's not a rounding error, that's a full-time employee's worth of capacity.

What AI can't do here is set your strategy. It can surface a list of rankable keywords, but it doesn't know your domain rating, your commercial priorities, or which topics you can actually write with authority. A keyword clustering tool might tell you "ai seo tools" is worth targeting. It can't tell you whether you have the domain strength to compete for it, or whether that traffic converts for your specific offer.

Your job at this pillar is to approve the battlefield, not map every inch of it.

Pillar 2: AI Content Creation -- The Draft Engine, Not the Publisher

The question I get asked most often: can ChatGPT do SEO? Yes and no. It's a genuinely capable drafting engine when you feed it a well-structured brief, a clear target keyword, and specific instructions about structure and depth. What it can't do, out of the box, is optimise for semantic coverage, maintain brand voice across dozens of posts, or tell you whether your draft actually matches what's ranking for that query.

Used alone, it's a word processor with good autocomplete.

That distinction matters. ChatGPT gives you a coherent, readable draft -- and gives the same coherent, readable draft to the next thousand people who type a similar prompt. The output converges toward a bland, confident median. The SEO equivalent of stock photography. Not wrong, just not yours.

This is where tools like Jasper earn their place -- not as magic bullets, but as efficiency layers. Jasper lets you encode brand voice, maintain project-level consistency, and work within SEO-aware templates that push the output in the right direction before it hits an optimisation tool. For teams producing content at volume, that consistency compounds. A Forrester TEI study commissioned by Jasper found customers achieved $2.2M in annual time savings and a 342% ROI -- numbers that only make sense at scale, but they show what systematised AI drafting unlocks when it's embedded in a real workflow rather than used ad hoc.

The mental model that matters: the AI draft is an input, not an output. Whatever tool you use -- ChatGPT with a strong prompt, Jasper with a trained brand voice, or a purpose-built pipeline like Spectre -- the draft must pass through optimisation and human editing before it sees a CMS. Skipping that step is precisely how sites end up publishing content that briefly ranks, then collapses after a Google quality update.

Your perspective, your experience, your genuine opinions -- that's the E-E-A-T signal no language model can fabricate. It gets added in the next stage.

Pillar 3: On-Page & Data-Driven Optimisation -- Where AI Truly Shines for SEO

Pillar 2 generates a draft. Pillar 3 makes it rankable. This is where AI SEO optimisation tools stop behaving like writing assistants and start behaving like reverse-engineered SERP analysts -- and it's genuinely the most impactful category in the stack.

Here's what's actually happening under the hood: tools like Surfer SEO pull the top 20 ranking pages for your target keyword, extract hundreds of on-page signals -- NLP entities, semantic term frequency, heading structure, word count norms, internal link density -- and translate those correlations into a live content score. You're not guessing what Google wants. You're reading what it's already rewarded.
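
A heavily simplified sketch of the scoring idea: check which target terms a draft already covers and report the gaps. This is not Surfer's algorithm, which weighs many more signals; the `term_coverage` function, the plain substring matching, and the sample terms are assumptions for illustration only.

```python
import re


def term_coverage(draft: str, target_terms: list[str]) -> tuple[float, list[str]]:
    """Return (percentage of target terms found in the draft, missing terms).

    Assumption: plain case-insensitive substring matching stands in for the
    NLP entity and term-frequency analysis a real optimisation tool performs.
    """
    text = re.sub(r"\s+", " ", draft.lower())  # normalise whitespace
    missing = [t for t in target_terms if t.lower() not in text]
    if not target_terms:
        return 0.0, missing
    covered = len(target_terms) - len(missing)
    return round(100.0 * covered / len(target_terms), 1), missing


if __name__ == "__main__":
    draft = "Our content brief covers keyword clustering and internal links."
    terms = ["keyword clustering", "content brief", "search intent", "internal links"]
    score, gaps = term_coverage(draft, terms)
    print(score, gaps)
```

The useful part is the list of missing terms, not the number: it tells you what to add during the edit.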

Surfer SEO's Content Editor is the best-known implementation of this. As you write (or paste in a draft), it surfaces NLP-driven term suggestions drawn from competing pages, flags structural gaps, and adjusts your score in real time. The SERP Analyzer sits behind it, explaining why pages rank -- not just what they contain. That's the difference between copying competitors and understanding the pattern.

The results when this is applied properly are significant. Planable, a social media SaaS, achieved a 176% increase in organic traffic over six months using Surfer as their primary optimisation layer. That's consistent with what I see when structured SERP-data optimisation replaces gut-feel content decisions. If you want to test the workflow before committing, the Surfer SEO free trial is worth using to run your top three target keywords through the Content Editor and see the gap between what you've published and what's actually ranking.

MarketMuse takes a different but complementary angle. Rather than scoring against the current SERP, it builds a topic model by analysing thousands of documents to surface semantically related concepts your content is missing. The Kasasa case study is instructive: 92% year-over-year growth in organic entrances after systematically closing topical coverage gaps identified by MarketMuse. It's a stronger fit for building content authority across a cluster than for single-page optimisation sprints.

Frase sits between the two -- strong on automated brief generation from SERP analysis, useful for founders who want research and structure handled before they start writing rather than scored after.

Practical note on AI citation visibility: Surfer's own research found that formatting content into concise bullet points increased AI citation likelihood by 8.63%. With 64% of searches now resulting in zero clicks, appearing in an AI Overview is the click. Structure isn't just an SEO nicety -- it's the mechanism by which your content gets surfaced in the answer layer.

One thing worth being direct about: these tools provide the what -- the terms to include, the structure to follow, the gaps to close. They don't provide the how. The unique case study, the contrarian take, the practitioner-level insight that signals E-E-A-T to both Google and a reader -- that's your job. An optimised shell with generic content inside still fails. The tools sharpen the container. You fill it with something worth reading.

Pillar 4: Automated Publishing & Distribution -- The Missing Link

Most AI SEO advice stops at "optimise your draft." That's exactly where the workflow falls apart for founders -- because the gap between a finished Google Doc and a live, indexed post is where hours disappear every week.

Nobody talks about this pillar. It's where genuine scalability actually lives.

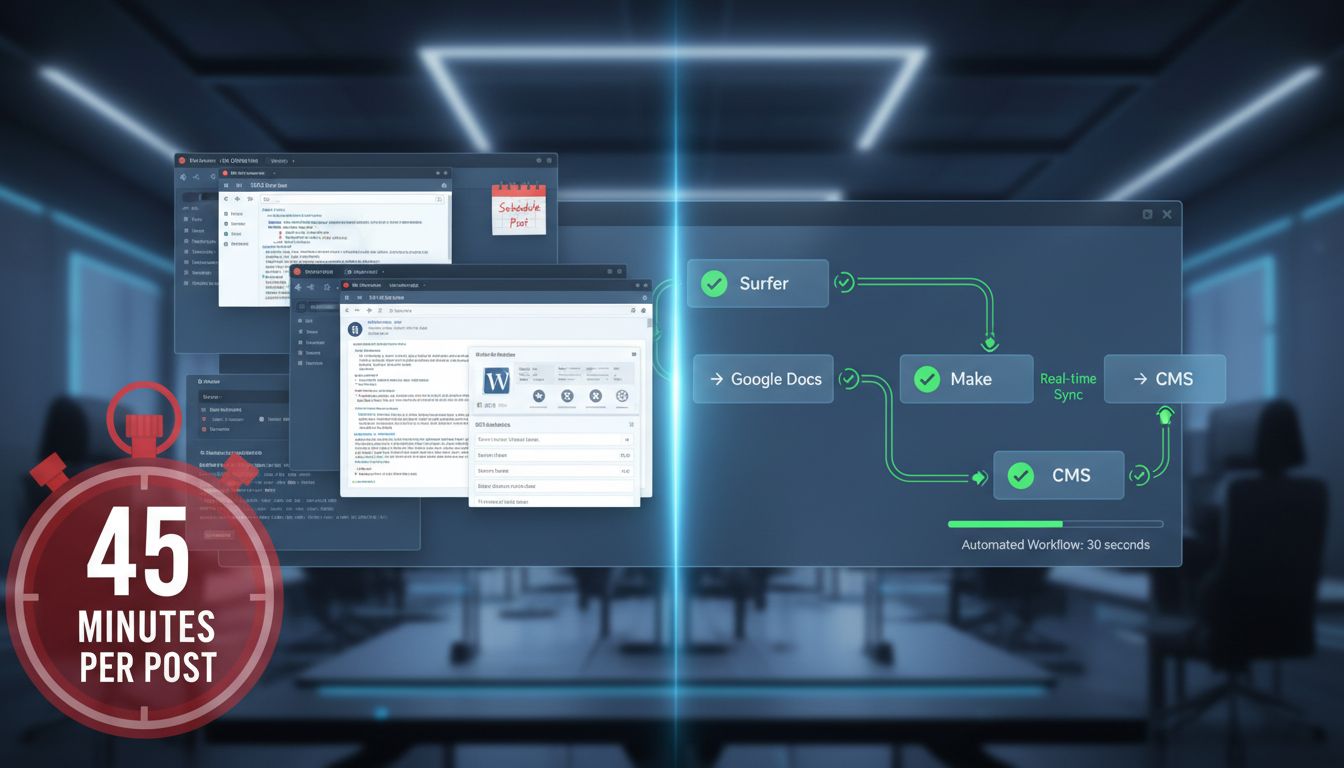

The manual version looks like this: export from Surfer, paste into WordPress, reformat the headings, re-upload the images, set the meta description, assign categories, schedule it -- then do it again next week. Each step is trivial. Collectively, they're a 45-minute tax on every single piece of content you publish.

The automated version uses a webhook. Your final approved doc triggers a Make or Zapier workflow that calls your CMS API -- Webflow, WordPress, Contentful, whatever you're running -- and publishes the post with all metadata intact. A practical pipeline looks like this:

Surfer Editor → Google Docs (final human edit) → Make webhook → CMS API → scheduled live post
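
The final hop in that chain, from webhook to CMS API, can be sketched as follows for WordPress. It assumes a WordPress site with the REST API enabled and an Application Password for authentication; the `post` dictionary keys (`title`, `html`, `summary`, `slug`, `publish_at`) are hypothetical names for whatever your automation tool passes along. The sketch only builds the request, so you can inspect it before sending anything to a live site.

```python
import base64
import json
import urllib.request


def build_wordpress_request(
    site_url: str, username: str, app_password: str, post: dict
) -> urllib.request.Request:
    """Build (but do not send) a request that schedules a post via the WordPress REST API."""
    payload = {
        "title": post["title"],
        "content": post["html"],
        # Assumption: the excerpt stands in for the meta description; the real
        # meta description field depends on which SEO plugin the site runs.
        "excerpt": post["summary"],
        "slug": post["slug"],
        "status": "future",          # "future" schedules the post for `date`
        "date": post["publish_at"],  # ISO 8601 timestamp in the site's timezone
    }
    token = base64.b64encode(f"{username}:{app_password}".encode()).decode()
    return urllib.request.Request(
        url=f"{site_url.rstrip('/')}/wp-json/wp/v2/posts",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    request = build_wordpress_request(
        "https://example.com",
        "editor",
        "app-password-here",
        {
            "title": "A Founder's Guide to AI SEO Tools",
            "html": "<p>Final edited draft.</p>",
            "summary": "How to build a lean content pipeline.",
            "slug": "founders-guide-ai-seo-tools",
            "publish_at": "2026-04-10T09:00:00",
        },
    )
    print(request.get_method(), request.full_url)
    # To publish for real: urllib.request.urlopen(request)
```

Other CMS platforms follow the same pattern with different endpoints and field names, which is exactly where the API quirks show up.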

I've built variations of this inside Spectre. The setup cost is real -- typically three to five hours to wire up correctly, handle edge cases, and test against your CMS's API quirks. That's the integration tax. Pay it once, reclaim it every week thereafter.
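
A quick break-even check on that trade, assuming the automation removes the full 45-minute manual publishing step (in practice it may remove less):

```python
import math


def breakeven_articles(setup_hours: float, minutes_saved_per_article: float) -> int:
    """Number of published articles needed before setup time is recovered."""
    return math.ceil(setup_hours * 60 / minutes_saved_per_article)


if __name__ == "__main__":
    # Setup range and per-article saving are the figures quoted in this section.
    for setup in (3, 5):
        print(setup, "hours of setup ->", breakeven_articles(setup, 45), "articles")
```

At one article per week, a three-hour setup pays for itself after four posts and a five-hour setup after seven.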

For teams publishing multilingual content, this layer becomes even more critical. Phrase, which processes more than 2 billion words monthly, integrates directly with Contentful and similar headless CMS platforms -- meaning localised variants can be routed and published programmatically without a human touching each one.

Alli AI is worth mentioning here as an emerging option for teams that want automated on-page deployment without a custom integration. Rather than calling your CMS API directly, it installs a code snippet on your site and deploys SEO changes -- meta tags, schema, internal links -- directly to live pages through its dashboard. It's a different model from a full publishing pipeline, but useful if your bottleneck is post-publication optimisation rather than initial deployment.

One honest caveat before you invest time in this pillar: automated publishing is useless if your technical SEO foundations are broken. If Google can't crawl your site efficiently, if your Core Web Vitals are failing, or if your indexing configuration is misconfigured, no amount of pipeline automation fixes that. AI tools can't resolve a broken sitemap or a misconfigured robots.txt. Sort the foundations first -- then automate on top of something that actually works.

The Founder's 80/20 AI SEO Workflow in Practice

Most founders I speak to treat SEO as an all-or-nothing commitment. Either they're spending 10+ hours a week on it, or they've quietly abandoned it. The 80/20 model breaks that false choice.

The 80/20 Rule in SEO:

The Pareto Principle applied to SEO states that 80% of your organic results come from 20% of your efforts. In an AI-powered workflow, this means automating 80% of tactical execution -- keyword clustering, drafting, optimisation, formatting, and publishing -- so your 20% effort concentrates on high-leverage strategy and human refinement.

That 20% is irreplaceable. It's where your experience, judgement, and genuine perspective live. The 80% is mechanical -- and machines are better at mechanical than you are.

Your 20%: The High-Leverage Human Tasks

Three things only you should be doing:

- Strategic topic selection and keyword approval -- Deciding which keywords align with your commercial goals, not just which ones have search volume. An AI can cluster keywords; it can't tell you which problem your product actually solves.

- Crafting detailed briefs and prompts -- The quality of your AI output is directly proportional to the quality of your input. A lazy prompt produces a generic draft. A brief that includes your target customer's specific pain points, your contrarian angle, and the one insight your competitors missed? That produces something worth publishing.

- The final edit -- This is where E-E-A-T gets injected. Add the customer story from last month's call. Reference the specific metric from your own data. Rewrite the introduction so it doesn't sound like every other article on the topic.

Your 80%: What the Tools Handle

Everything else. Keyword clustering, SERP analysis, outline generation, first drafts, semantic optimisation, content scoring, internal link suggestions, meta descriptions, formatting, and CMS publishing. If it's repeatable and rule-based, it belongs in the automated layer.

A Realistic Weekly Schedule (2.5 Hours Total)

| Day | Task | Time |
|---|---|---|
| Monday | Strategy session: approve keywords, write briefs | 60 min |
| Tue--Wed | Tools auto-generate drafts, run optimisation | 0 min |
| Thursday | Final edit: inject voice, data, stories | 90 min |
| Friday | Automated publishing via CMS integration | 0 min |

Two and a half hours. One fully optimised article per week, published consistently, without burning out.

Pillars 1 and 2 (research and drafting) run largely on autopilot after your Monday briefing session. Pillar 3 (optimisation) runs in parallel with drafting. Pillar 4 (publishing) fires automatically on schedule. Only 41% of marketers can actually prove AI ROI -- and honestly, that tracks. Most aren't operating with this kind of intentional structure. They're using the best AI SEO tools reactively, grabbing whatever's trending, rather than building a system where each tool has a defined job and the handoffs are wired together. The structure is what makes the ROI real.

Common Pitfalls: Where AI SEO Goes Wrong (and How to Avoid It)

The workflow I've described works. But I've also watched founders implement pieces of it and get burned -- not because AI SEO doesn't work, but because they made predictable, avoidable mistakes. Here's what to watch for.

Publishing Unedited AI Content at Scale

This is the one that keeps coming up, and the data is unambiguous. After Google's March 2024 core update, unoriginal low-quality content saw a 45% drop in rankings within weeks. Then, in February 2025, a documented experiment tracked 20 websites that had published unedited AI content at scale -- they initially ranked, then lost all keyword positions simultaneously. Not gradually. All at once.

AI drafts are inputs, not outputs. Even the best generation tools produce content that's structurally sound but experientially hollow -- no genuine opinion, no specific examples, no real-world friction. That's exactly what Google's quality signals are trained to detect.

Here's a benchmark worth sitting with: 98% of sales professionals edit AI-generated text before using it. If people who sell with AI won't trust raw output, you shouldn't be publishing it.

Buying Tools That Don't Match Your Actual Bottleneck

Most founders who tell me "AI SEO isn't working" are using a sophisticated content generation tool when their real problem is keyword selection. Or they're paying for an optimisation suite when they haven't fixed their publishing pipeline.

Before you spend anything, audit where time actually disappears in your content process. Is it research? Drafting? Editing? Publishing? That answer determines which pillar to invest in first. A £99/month content editor won't help if you're spending four hours on keyword research before you even open a doc.

Failing to Measure ROI Against Anything Concrete

Only 41% of marketers could prove AI ROI in 2026 -- and I suspect most of the other 59% weren't measuring the right things, not that the tools weren't delivering.

For a founder, the metrics are simple:

- Hours saved per article -- benchmark your pre-AI time, measure after

- Time-to-publish -- from keyword selection to live URL

- Top 10 keyword growth -- tracked monthly in Google Search Console or Ahrefs

You don't need attribution modelling. You need a before/after comparison on a handful of numbers. If you can't show movement on those three after 90 days, something in the system is broken.
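
The before/after comparison can be as simple as the sketch below. The `Snapshot` fields mirror the three metrics above; the sample numbers are invented purely to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One measurement of the three founder metrics (field names are illustrative)."""
    hours_per_article: float
    days_to_publish: float   # keyword selection to live URL
    top10_keywords: int      # from Google Search Console or Ahrefs


def compare(before: Snapshot, after: Snapshot) -> dict[str, float]:
    """Positive values mean improvement on every metric."""
    return {
        "hours_saved_per_article": before.hours_per_article - after.hours_per_article,
        "days_faster_to_publish": before.days_to_publish - after.days_to_publish,
        "top10_keyword_growth": after.top10_keywords - before.top10_keywords,
    }


def shows_movement(delta: dict[str, float]) -> bool:
    """True only if all three metrics moved in the right direction."""
    return all(value > 0 for value in delta.values())


if __name__ == "__main__":
    baseline = Snapshot(hours_per_article=6.0, days_to_publish=10.0, top10_keywords=12)
    day_90 = Snapshot(hours_per_article=2.5, days_to_publish=4.0, top10_keywords=19)
    delta = compare(baseline, day_90)
    print(delta, shows_movement(delta))
```

A spreadsheet does the same job. The point is to record the baseline before you change anything.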

Ignoring the Domain Rating Moat

This is the caveat I wish more content about AI SEO optimisation tools included, because skipping it is how founders waste months of effort.

If your domain rating is below 20, the quality of your AI-assisted content is largely irrelevant for competitive terms. You will not outrank established players on broad keywords, regardless of how well-optimised your article is. Domain authority is the moat, and it's built slowly through backlinks, not content volume.

The right strategy at low DR is ultra-specific, long-tail targeting -- questions your competitors haven't bothered to answer thoroughly, niche sub-topics with low competition, and content depth that earns links organically over time. AI tools accelerate your ability to execute that strategy at volume, but they don't change the underlying authority equation. Work within your DR reality, not against it.
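
As a rough illustration of what long-tail filtering looks like in practice, the sketch below screens a keyword export for low-difficulty, multi-word queries. The `difficulty` field, both thresholds, and the sample rows are hypothetical; use whatever difficulty metric and cut-offs your research tool provides.

```python
def pick_long_tail(
    candidates: list[dict], max_difficulty: int = 15, min_words: int = 4
) -> list[dict]:
    """Keep specific, low-competition queries and sort the easiest first.

    Assumption: each candidate is a dict with "keyword" and "difficulty" keys,
    and the default thresholds are placeholders rather than recommendations.
    """
    picks = [
        c
        for c in candidates
        if c["difficulty"] <= max_difficulty and len(c["keyword"].split()) >= min_words
    ]
    return sorted(picks, key=lambda c: c["difficulty"])


if __name__ == "__main__":
    export = [
        {"keyword": "ai seo tools", "difficulty": 62},
        {"keyword": "how to automate webflow blog publishing", "difficulty": 8},
        {"keyword": "content brief template for saas founders", "difficulty": 12},
        {"keyword": "seo", "difficulty": 95},
    ]
    for row in pick_long_tail(export):
        print(row["keyword"], row["difficulty"])
```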

Getting the Formatting Wrong

Tactical, yes. Increasingly consequential, also yes. Surfer's internal research found that formatting content into concise bullet points increased AI citation likelihood by 8.63%. That's not a rounding error -- it's the difference between appearing in an AI Overview and being invisible in it.

Wall-of-text content that reads well to humans is increasingly penalised by the systems that determine what gets surfaced in AI-generated answers. Short paragraphs, clear heading hierarchies, bullet points for lists, step-by-step structure for processes -- these aren't just readability choices anymore. They're visibility signals.

If you're running content through an optimisation tool like Surfer and ignoring its structural suggestions, you're leaving discoverability on the table.
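
If you want a cheap check before the draft reaches an optimisation tool, a few lines of code can flag wall-of-text paragraphs. The 80-word limit here is an arbitrary assumption, not a figure from Surfer or Google, and the function name is illustrative.

```python
def long_paragraphs(text: str, max_words: int = 80) -> list[tuple[int, int]]:
    """Return (paragraph number, word count) for paragraphs over the limit.

    Assumption: paragraphs are separated by a blank line, and `max_words`
    is a placeholder threshold you should tune to your own style.
    """
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [
        (number, len(p.split()))
        for number, p in enumerate(paragraphs, start=1)
        if len(p.split()) > max_words
    ]


if __name__ == "__main__":
    draft = "A short intro.\n\n" + "word " * 100 + "\n\n- A bullet point"
    print(long_paragraphs(draft))
```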

Your 30-Day Implementation Plan: Start Here, Not Everywhere

The biggest mistake I see founders make isn't picking the wrong tool. It's trying to overhaul everything at once. You end up with five half-configured subscriptions, no measurable improvement, and the same content bottleneck you started with.

Do this instead: treat the first month as a single sprint with one goal: prove that one part of your pipeline is faster with AI than without it.

Week 1: Audit Your Current Process

Before you touch a single tool, map where your time actually goes. Write down every step from "I have a keyword idea" to "post is live," then identify the single biggest time sink. Research? First drafts? Optimisation? Publishing?

Pick one bottleneck. That's your target for the month.

Week 2: Master One Tool Against That Bottleneck

Sign up for a free trial of one tool that directly addresses your chosen bottleneck. If drafts are slow, try Surfer AI or Frase. If optimisation is the drag, start with Surfer's Content Editor.

Use only the features that solve your specific problem. Ignore everything else. At the end of the week, document the before/after time cost in a simple note -- even a rough estimate is useful data.

Week 3: Measure One Metric, Build One Automation

Pick a single leading indicator: time from brief to draft, content score before vs. after optimisation, or pieces published per week. One number.

Then build one lightweight automation. Export your Surfer brief directly into a Google Doc template. Set up a Zapier trigger that notifies you when a draft is ready. Small friction reductions compound faster than you'd expect.

Week 4: Refine the Human 20% and Consider Tool Two

With time freed up, put that capacity back into the parts AI can't do well: sharpening your briefs, adding genuine insight to drafts, improving internal linking. These are the levers that actually move rankings.

Only now -- if Weeks 2 and 3 showed clear time savings -- should you consider adding a second tool to your stack.

Your 30-Day Checklist

- Map every step of your current content process end-to-end

- Identify and name your single biggest time bottleneck

- Start one free trial targeting that bottleneck only

- Document time cost before and after using the tool

- Choose one leading metric to track weekly

- Build one automation to reduce a manual handoff

- Use recovered time to improve briefs and editorial quality

- Evaluate whether a second tool is warranted based on evidence

Day 30 shouldn't look like a perfect AI stack. It should look like one proven workflow improvement you can actually build on. Start there.

The Bottom Line on AI SEO Tools

The founders who win at SEO in 2026 aren't the ones who found the perfect all-in-one platform. They're the ones who stopped looking for it.

A stack of specialised AI SEO tools, each handling one stage of the pipeline well, will outperform any single tool trying to do everything. That's not a limitation of the technology. It's just how good systems get built.

Your job isn't to write every article, research every keyword, or manually optimise every page. Architect the system, set the strategic direction, and apply your irreplaceable domain expertise at the moments that actually matter: the brief, the angle, the final edit.

The 80% of tactical execution? Let the tools handle it.

But none of this starts with a tool comparison spreadsheet. It starts with an honest look at where your current process actually breaks down. Pick your single biggest bottleneck. Choose one tool from the blueprint above that directly addresses it. Trial it this week. Then build from there.

One block at a time is how durable content systems get built.