March 31st, 2026

AI Blog Writing Tools for SEO Content: A 2026 Review and Comparison

Warren Day

You've probably already run this experiment. You picked one of the dozens of AI blog writing tools flooding the market, fed it a keyword, and watched it produce a 1,500-word draft in under two minutes. Time saved: real. Rankings earned: zero.

That gap is where most content teams are stuck right now.

Here's the uncomfortable part: the tool probably worked exactly as advertised. The strategy around it didn't. Vendors are happy to show you case studies with 500% impression lifts and 717% year-over-year traffic growth, but they're a lot quieter about the editorial labor, the authority-building, and the E-E-A-T signals that did the actual heavy lifting behind those numbers.

What they really won't tell you is that sites leaning entirely on AI-generated content without meaningful human editing tend to see rankings deteriorate fast. Not because Google hates AI, but because undifferentiated content has no reason to rank. There's nothing in it that couldn't have been written by someone who'd never touched your industry.

So the question isn't which free AI content generator saves you the most time per article. It's which tool actually fits how your team works, what your SEO program is ready to support, and what budget you're genuinely working with. That distinction changes the entire buying decision.

What follows is built around that premise. We'll go through a practical evaluation framework, a breakdown of tools matched to real editorial constraints, a way to calculate your true per-article cost, the failure patterns that quietly kill AI content programs, and a workflow that holds up over time.

The 2026 Reality: AI Content, Google, and the E-E-A-T Imperative

Google's official position is actually pretty simple: it doesn't penalize content for being AI-generated. It penalizes content for being unhelpful. That distinction matters more than most people in the AI content space want to admit.

The productivity case for these tools is real. 84% of enterprise marketers using AI-powered content tools report that productivity has significantly or somewhat improved, according to the Content Marketing Institute. Faster drafts, broader topic coverage, lower per-word costs. Those are genuine wins.

But productivity and ranking performance aren't the same thing.

Search Engine Land and SE Ranking ran a 16-month controlled experiment: 2,000 fully AI-generated articles across 20 brand-new domains. Early results looked fine. Around 80% of sites were showing up in Google Search within the first month, ranking for hundreds or thousands of queries. Then the three-month mark hit. Rankings collapsed. By six months, only 3% of pages held any position in the top 100. After a full year, no site showed meaningful recovery.

The culprit wasn't AI authorship.

It was the absence of E-E-A-T signals -- Experience, Expertise, Authoritativeness, and Trustworthiness. No original insights. No demonstrated authority. No trust infrastructure of any kind. Google's systems eventually recognized the pattern, and down everything went.

Speed without substance is just a faster path to a rankings plateau. Any content team using AI blog writing tools right now is navigating exactly this tension.

Two distinct categories have emerged in response. SEO-first platforms like Surfer, MarketMuse, and Frase bake SERP analysis, content scoring, and E-E-A-T scaffolding directly into the workflow. General-purpose writers like Jasper, Copy.ai, and Writesonic optimize for generation speed and brand voice, leaving the SEO layer largely to you. The same goes for any free AI content generator you might already be testing on the side.

Which category fits your situation has nothing to do with feature lists. It has everything to do with your team's actual capacity and where your SEO program is right now.

How to Evaluate AI Blog Writing Tools: A 3-Part Strategic Framework

Most buying decisions in this space come down to feature comparisons: which tool has more templates, which one writes faster, which one has the slickest UI. That's the wrong lens entirely.

The right question is whether a tool fits your specific constraints: your team's editorial bandwidth, your SEO maturity, and your budget reality. Here's the framework I use to cut through the noise.

Pillar 1: SEO and Authority-Building Capabilities

Start here, because this is where most tools fail content teams quietly.

Ask whether the tool analyzes live SERPs before generating a brief, not just keyword frequency. Does it give you a real-time content optimization score as you write, so you know where you stand against ranking pages before you publish? Does it connect to Google Search Console, pulling actual impressions and click data into your workflow rather than making you context-switch between platforms?

And this one matters more than any feature on the checklist: does the tool have a mechanism for injecting E-E-A-T signals? Expert quotes, first-person experience prompts, original data callouts. A tool that ignores this isn't built for sustainable rankings. Full stop.

Pillar 2: Workflow and Team Collaboration

A tool your team won't actually use is a tool that costs you money.

Look at whether it supports a documented brand voice, not just a vague tone selector. Then look at the review and approval flow: can an editor leave comments, track changes, and push to your CMS without leaving the platform? The best AI blog writing tools bend to your editorial process. The worst ones make you rebuild your process around them.

Pillar 3: Total Cost of Ownership (TCO)

Subscription price is the least useful number on the pricing page.

The formula that actually matters: TCO = (Monthly Tool Cost ÷ Articles Published) + (Editor Hourly Rate × Editing Hours Per Article). At a $50/hour blended rate, editor labor alone runs $25–$50 per article (30 to 60 minutes of editing), a cost that appears nowhere in any vendor's pricing table. Add the variable cost of underlying AI model tokens if you're on an API-based plan, and the real per-article number can surprise you.

We'll build this out fully in "The Hidden Cost" section below. For now, treat any tool that doesn't help you reduce editing time, including any free AI content generator you're testing, as a partial solution at best.

2026 Tool Deep Dive: Categorized by Your Primary Constraint

Stop trying to find the single best AI blog writing tool. That question has no good answer because the right tool depends entirely on what's actually slowing you down. Is it content depth? Team throughput? Budget? A tool that solves the wrong bottleneck just adds another subscription to your stack.

Here's how the current landscape breaks down by primary constraint.

Comparison Table: AI Blog Writing Tools at a Glance (2026)

Pricing reflects publicly listed rates as of early 2026. Always verify directly with vendors before purchasing, as these change frequently.

| Tool | Category | Starting Price | Core SEO Feature | Best For |
|---|---|---|---|---|
| Surfer SEO | SEO-First | $99/mo ($79/mo annual) | Real-time Content Score vs. SERP competitors | On-page optimization & content scoring |
| MarketMuse | SEO-First | $99/mo (Optimize plan) | Topic modeling, content inventory, gap analysis | Content strategy at scale |
| Frase | SEO-First | ~$45/mo (Solo) | GSC integration, SERP research, dual SEO + GEO scoring | Research-led brief building & content refresh |
| Jasper | High-Velocity | $69/mo ($59/mo annual, Pro) | Brand Voice, Surfer integration, Content Pipelines | On-brand content at enterprise scale |
| Copy.ai | High-Velocity | $49/mo (Starter) | Workflow automation, brand voice, multi-LLM access | Short-form and campaign-driven content |
| Writesonic | High-Velocity | $49/mo (Lite, annual $39/mo) | Live web research, SEO checker, GEO tracking (higher tiers) | Fact-checked drafts, multi-channel content |
| Outranking | Budget-Conscious | $79/mo (SEO Writer) | SERP-informed briefs, auto interlinking | Combined drafting + optimization on one budget |
| Rytr | Budget-Conscious | Free; $9/mo (Unlimited) | Basic SEO analyzer, tone matching | Low-volume copy tasks, solo creators |

For the SEO-First Strategist

Your profile: Rankings matter more to you than draft speed. You'd rather spend an extra hour on a piece than publish something that sits on page four. Your bottleneck is content depth, search intent alignment, and proving that what you publish actually moves the needle.

Surfer, MarketMuse, and Frase all belong in this category, but they solve slightly different problems.

Surfer is the most direct path from keyword to optimized draft. Its Content Score shows a 0.28 correlation with Google rankings, which gives writers a concrete target rather than a gut feeling. The Essential plan ($99/mo) covers 30 articles and includes Google Docs and WordPress integrations. The caveat: Surfer's AI articles (5 per month on Essential) are optimization-focused, not particularly human-sounding. Budget for editing time.

MarketMuse operates at a higher strategic altitude. Rather than optimizing individual articles, it maps your entire content inventory against competitor topic authority. The Optimize plan at $99/mo gives you 5 content briefs and 100 tracked topics per month, which is enough for a focused team but will feel tight if you're publishing aggressively. IEEE saw measurable SEO results within 90 days of implementing MarketMuse, though that's a vendor case study, so treat it as directional rather than a guarantee.

Frase is the most practical pick if you want to act on traffic data you already have. Its Google Search Console integration surfaces underperforming pages, flagging clicks, impressions, CTR, and average position, so you can prioritize refreshes with actual performance evidence instead of guesswork. A simple workflow that works: connect GSC, filter for pages ranking positions 8-20, then use Frase's SERP analyzer to find the topic gaps pulling you down. That refresh-first approach tends to return ROI faster than publishing net-new content.
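The filtering step in that workflow can be sketched in a few lines of Python. This is a minimal illustration rather than anything Frase or Search Console provides: the `PageStats` fields, the `refresh_candidates` helper, and the sample rows are all hypothetical, and sorting by impressions is just one reasonable way to prioritize.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """One row of page-level search data (field names are illustrative)."""
    url: str
    clicks: int
    impressions: int
    avg_position: float


def refresh_candidates(pages, min_pos=8.0, max_pos=20.0):
    """Keep pages whose average position falls in the refresh window,
    ordered so the pages with the most impressions come first."""
    in_range = [p for p in pages if min_pos <= p.avg_position <= max_pos]
    return sorted(in_range, key=lambda p: p.impressions, reverse=True)


# Made-up sample rows, purely for illustration.
sample = [
    PageStats("/blog/a", clicks=120, impressions=4000, avg_position=3.2),
    PageStats("/blog/b", clicks=15, impressions=2500, avg_position=11.4),
    PageStats("/blog/c", clicks=4, impressions=900, avg_position=18.9),
    PageStats("/blog/d", clicks=0, impressions=300, avg_position=42.0),
]

for page in refresh_candidates(sample):
    ctr = page.clicks / page.impressions
    print(f"{page.url}  pos={page.avg_position:.1f}  "
          f"impressions={page.impressions}  ctr={ctr:.2%}")
```

Pages already near the top and pages buried far down are both excluded, which leaves the ones where a refresh is most likely to be worth an editor's time.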

For the High-Velocity Content Team

Your profile: You're managing multiple writers, possibly across time zones, and "consistent brand voice" is a real operational problem, not a marketing platitude. Your bottleneck is throughput and coordination, making sure the tenth article sounds like the first.

Jasper, Copy.ai, and Writesonic all serve this profile, but they've evolved in noticeably different directions.

Jasper remains the strongest choice for teams with serious brand consistency requirements. The Brand Voice feature lets you upload existing content so Jasper learns your tone, then applies it across every piece generated by every team member. The Pro plan ($69/mo per seat, or $59/mo billed annually) supports up to 5 seats and 3 brand voices, which covers most small-to-mid marketing teams. Business plan pricing is custom but typically starts around $499/mo, adding Content Pipelines and the AI App Builder for high-volume, multi-channel work. The Surfer SEO integration is worth flagging specifically: it's one of the cleaner ways to pair generation speed with SEO guardrails without paying for two full-platform subscriptions.

Copy.ai has shifted its positioning toward go-to-market automation rather than pure writing. The Starter plan ($49/mo) gives you one seat, unlimited words in chat, and access to multiple LLMs. Where it earns its keep is workflow automation, building repeatable processes for content repurposing, email sequences, and campaign briefs. For pure long-form blog content at scale, it sits behind Jasper.

Writesonic lands somewhere between the two. Its live web research capability pulls real-time data during generation, which meaningfully reduces the hallucination problem you get with static-model tools. The Lite plan starts at $39/mo billed annually, but the SEO features that actually matter, auto-optimization and GSC integration, don't unlock until the Standard tier at $79/mo annually.

For the Budget-Conscious Creator or Solopreneur

Your profile: You're running content largely solo, possibly wearing the SEO hat part-time alongside a handful of other responsibilities. Budget is a real constraint. You need something that covers the basics without a six-month payback period.

Honestly, you've probably already experimented with a free AI content generator: ChatGPT's free tier, Rytr's free plan (10,000 characters/month), or one of the many freemium tools promising unlimited words. Here's the trade-off nobody spells out: free tools give you generation without SEO intelligence. You get a draft, not a strategy. Word caps reset monthly, brand voice is nonexistent, and there's zero connection to your actual search performance data.

Rytr is the most honest entry point at this tier. The Unlimited plan at $9/mo removes character limits and adds basic tone matching and plagiarism checks. It won't replace a dedicated SEO tool, but it's a legitimate way to accelerate short-form copy tasks (blog intros, meta descriptions, email drafts) without spending $100/mo on a platform you'll only use at 20% capacity.

Outranking is the more serious option if you're willing to spend $79/mo on the SEO Writer plan. Unlike Rytr, it combines SERP-informed brief generation with auto-interlinking, meaning it's doing actual on-page SEO work rather than just producing text. For a solo operator who can't afford a separate writing tool and a separate content optimizer, that consolidation is genuinely useful.

The contrarian take: at this budget tier, your highest-ROI move might not be a dedicated AI blog writing tool at all. A $20/mo ChatGPT Plus subscription paired with a Frase Solo plan (about $45/mo at the rate listed above) covers research, brief generation, and content optimization, often more effectively than a mid-tier all-in-one tool that tries to do everything adequately and ends up doing nothing particularly well.

The Hidden Cost: Your True Per-Article Investment

Every pricing page shows you the subscription fee. None of them show you the full bill.

The number that actually determines your ROI is your true per-article cost, and it has three components most buyers ignore until they're three months into a contract.

True Cost = Tool subscription (amortized per article) + Editor labor + Underlying model/API costs

Let's make this concrete. Editor labor alone runs $25-$50 per article at a $50/hour blended rate, and that's assuming a fast editor working on relatively clean output. That figure isn't on any vendor's pricing page.

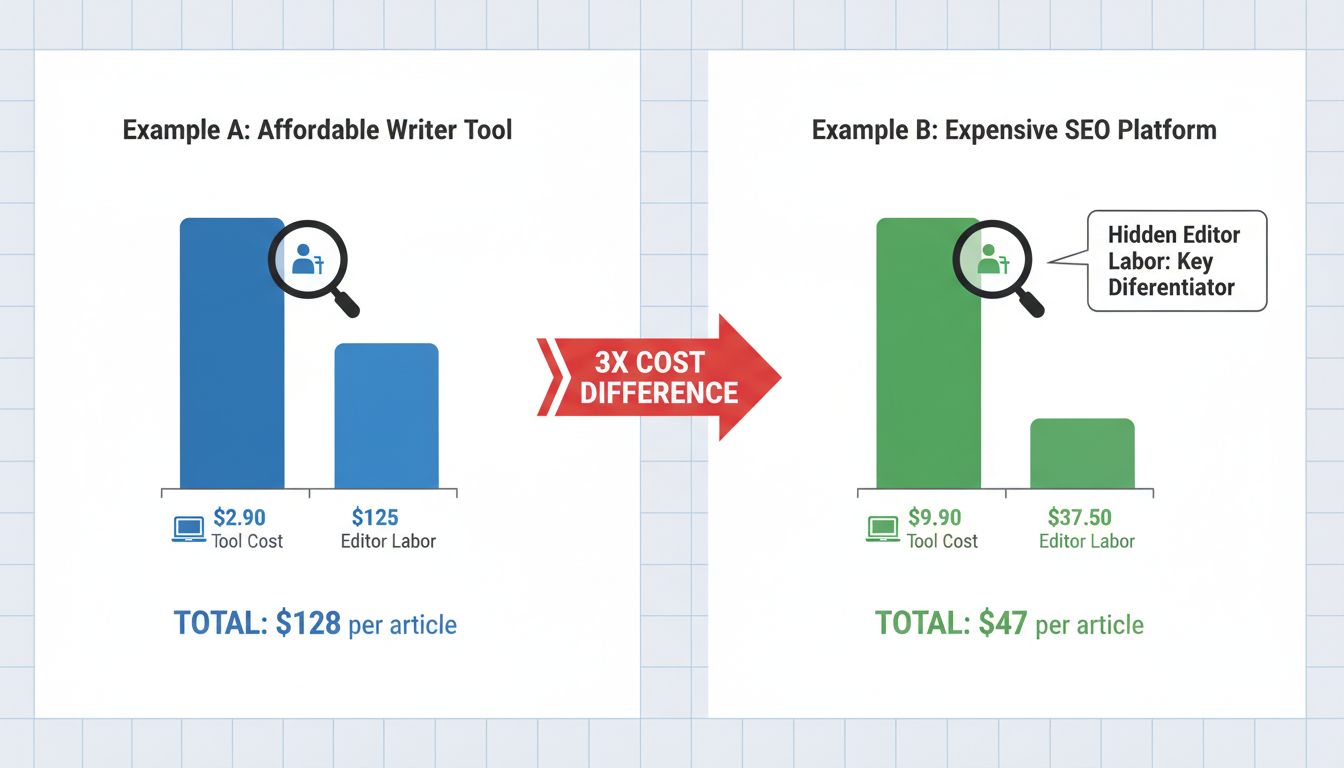

Example A: The "Affordable" Writer Tool

You're paying $29/month for a general-purpose AI writing tool. Publishing 10 articles a month, that's $2.90 per article in tool costs. Sounds excellent. But the output is generic, structurally flat, and needs heavy reworking. Your editor spends 2.5 hours per piece: $125 in labor. Your true cost: ~$128 per article.

Example B: The "Expensive" SEO Platform

You're on Surfer's Essential plan at $99/month. Same 10-article output: $9.90 per article in tool costs. But because the brief is SERP-informed and the content score gives your editor a clear optimization target, cleanup takes 45 minutes. Labor cost: $37.50. True cost: ~$47 per article.

The cheaper tool costs roughly 2.7x as much per article once you account for actual human time.
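To rerun that comparison with your own numbers, here is a small Python sketch of the same arithmetic. The function name and parameters are illustrative, and the sketch deliberately leaves out model/API costs, which only apply on usage-based plans.

```python
def true_cost_per_article(monthly_tool_cost, articles_per_month,
                          editor_hourly_rate, editing_hours):
    """Amortized tool cost plus editor labor for one article.

    Model/API costs are not included here; add them separately
    if your plan bills for usage.
    """
    tool_cost = monthly_tool_cost / articles_per_month
    labor_cost = editor_hourly_rate * editing_hours
    return tool_cost + labor_cost


# Example A: $29/month tool, 10 articles, 2.5 editing hours at $50/hour.
example_a = true_cost_per_article(29, 10, 50, 2.5)

# Example B: $99/month platform, 10 articles, 45 minutes at $50/hour.
example_b = true_cost_per_article(99, 10, 50, 0.75)

print(f"Example A: ${example_a:.2f} per article")
print(f"Example B: ${example_b:.2f} per article")
print(f"A costs {example_a / example_b:.1f}x as much as B")
```

Swapping in your own subscription price, monthly volume, and measured editing time is usually enough to show which term dominates. In both examples above, labor is the larger share.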

There's a third variable worth flagging for teams using API-based or white-label tools: underlying model costs. GPT-4 Turbo runs $10 per million input tokens and $30 per million output tokens. Claude's pricing varies by model tier. At scale, these costs compound: a 2,000-word article generation pass isn't free, and some platforms pass those costs directly to you above a usage threshold.
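For a rough sense of scale, the sketch below estimates the model cost of one generation pass at the GPT-4 Turbo rates quoted above. Two inputs are assumptions rather than facts from any vendor: the tokens-per-word ratio (about 4/3 is a common rule of thumb for English text) and the 1,500-token prompt size, which stands in for a brief plus instructions. Treat the result as an order-of-magnitude estimate.

```python
# Rates quoted in the article for GPT-4 Turbo (USD per million tokens).
INPUT_PRICE_PER_MILLION = 10.00
OUTPUT_PRICE_PER_MILLION = 30.00

# Assumption: roughly 4/3 tokens per English word (rule of thumb only).
TOKENS_PER_WORD = 4 / 3


def generation_cost(prompt_tokens, output_words):
    """Estimate the API cost in USD of one generation pass."""
    output_tokens = output_words * TOKENS_PER_WORD
    input_cost = prompt_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical pass: a 1,500-token prompt producing a 2,000-word draft.
single_pass = generation_cost(prompt_tokens=1_500, output_words=2_000)

print(f"One pass:     ${single_pass:.3f}")
print(f"1,000 passes: ${single_pass * 1_000:.2f}")
```

Under these assumptions a single pass is cheap, so this line item matters mainly when you multiply it by regenerations, multi-step pipelines, or high monthly volume.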

Here's the practical exercise: before your next renewal, track editing time per article for one month. Multiply by your editor's hourly rate. Add it to your tool cost. That number, not the subscription fee, is what you're actually spending on AI-assisted content. Whether you're using a dedicated AI blog writing tool or piecing together a free AI content generator with a separate optimizer, the math works the same way. The sticker price is almost never the real price.

Common Pitfalls and How to Sidestep Them

Most teams don't fail with AI content tools because they picked the wrong platform. They fail because they walked straight into one of four predictable traps.

Pitfall 1: Chasing Case Study Results Without Comparable Authority

Surfer's 717% year-over-year traffic lift is a real number. So is MarketMuse's claim that IEEE saw meaningful ranking movement within 90 days. But here's what those case studies quietly leave out: the domain authority behind those results, the size of the editorial team, and how much human strategy shaped the final output.

A Series A SaaS company with a 6-month-old blog and 15 referring domains isn't operating in the same conditions as the brands in those studies. Before a vendor's case study influences your buying decision, ask: What was their domain authority? How many editors were involved? How long did it take? If they can't answer those questions, treat the number as marketing, not a benchmark.

Pitfall 2: Publishing Raw AI Output as Final Draft

Raw AI output is structurally competent but experientially hollow.

It doesn't have the specific customer anecdote, the contrarian take, or the hard-won operational detail that signals real expertise to both readers and Google's quality raters. That $25-$50 per article in editorial time isn't overhead to minimize. It's what separates content that ranks from content that indexes and quietly dies.

Pitfall 3: Choosing a Tool That Misaligns With Your Bottleneck

If your real problem is not knowing what to write, buying a high-velocity generation tool just produces more of the wrong content, faster. If your problem is slow production on a solid strategy, a deep content intelligence platform adds complexity you don't need yet.

Go back to your primary constraint. Strategy, speed, or budget. Then match the tool category to that. Buying around your weakness is how you waste a quarter's worth of subscription fees without noticing until renewal.

Pitfall 4: Treating the AI as a Strategist, Not an Assistant

The tool executes. You strategize.

No AI blog writing tool will tell you which topics actually move pipeline, which angles differentiate you from competitors, or which keywords align with your ICP's buying stage. That judgment has to come from you. Teams that hand strategy over to the AI end up with topically coherent, commercially useless content.

Define your keyword targets, your narrative angles, and your content goals first. Then let the tool do what it's actually good at: turning that strategy into a structured draft faster than any human writer could. Even a free AI content generator can do that part well, as long as someone with real strategic judgment is driving.

Building Your Future-Proof AI-Human Content Workflow

The tools are only as good as the process around them. And right now, the process is where most teams fall short.

Here's the four-phase model I'd recommend building around any AI blog writing tool you choose.

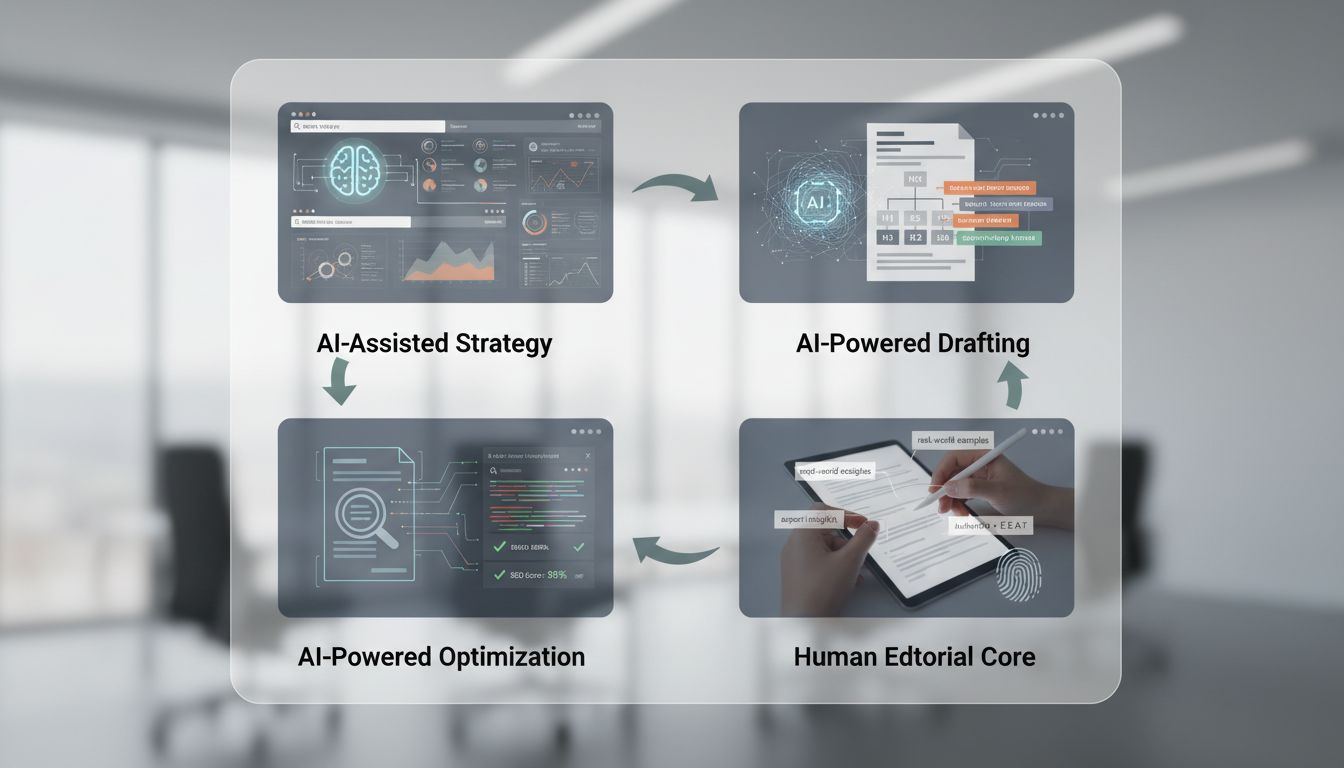

Phase 1: AI-Assisted Strategy. Use your tool's SERP analysis, keyword clustering, and competitor gap features to define what you're writing and why. This is where AI earns its keep on the research side: surfacing topic clusters, identifying questions your competitors haven't answered, generating a structured brief. You approve the strategy. The AI does the legwork.

Phase 2: AI-Powered Drafting. Feed the approved brief back into your tool and generate a first draft. Treat this output as a smart scaffold, not a finished product. It gives you structure, logical flow, and keyword coverage. It doesn't give you perspective.

Phase 3: Human Editorial Core. This is the non-negotiable step. A human editor, ideally someone with subject matter proximity, adds the expert insight, real examples, contrarian angles, and specific claims that make content worth reading and worth ranking. This is where E-E-A-T actually gets built. No AI phase replaces this.

Phase 4: AI-Powered Optimization. Run the human-edited draft through your SEO optimizer (Surfer, Frase, Clearscope, whichever fits your stack). Close the keyword gaps, check heading structure, and finalize the content score before publishing.

Here's the mental model that makes this sustainable: the AI is your tireless researcher and first drafter. You're the editor-in-chief. The moment those roles reverse, your content starts sounding like everyone else's. And ranking like it, too. Even a free AI content generator can produce a solid structural draft fast. What it can't do is decide what actually matters to your audience. That part stays with you.

The Bottom Line on AI Blog Writing Tools

There's no universally best option here. There's only the right fit for your situation: your editorial bandwidth, your SEO maturity, and your actual budget once you factor in the editing hours that never show up on any pricing page.

The teams winning with AI blog writing tools right now aren't the ones running the most sophisticated tech stack. They picked a tool that matched their primary bottleneck, built a repeatable human-oversight layer around it, and treated E-E-A-T signals as non-negotiable rather than optional polish.

The math isn't complicated. AI handles volume and structure. Your editors handle judgment, expertise, and the original perspective that Google is increasingly rewarding over generic output. Strip out either side and the whole thing falls apart, either through wasted tool spend or rankings that spike and disappear within months.

So here's your next move: identify your primary constraint using the framework in this article, run a four-week pilot on one tool, and measure true per-article cost against organic performance. That's it. Even starting with a free AI content generator to test your workflow is fine. Pick one, test it honestly, and adjust from there.