March 26th, 2026

Open Source AI Content Generators in 2026: Pros, Cons, and Practical Uses for SEO

Warren Day

Your SaaS AI platform invoices have climbed 30% year-over-year. You've hit seat limits on your enterprise plan right when a new client needs local SEO at scale. And you're quietly uncomfortable feeding proprietary content and keyword data into someone else's black box. If that's where you are in 2026, the answer probably isn't another subscription. It's building your own content engine with an open-source AI content generator.

This isn't a hobbyist project anymore.

For SEO teams that actually think ahead, open-source AI has become the foundation of a content stack you control completely -- the infrastructure, the data, the output quality. No vendor lock-in, no surprise pricing tiers, no terms-of-service clause that quietly claims rights to your inputs.

Here's the thing about results: teams running hybrid AI-human workflows have seen total traffic jump 144% and organic traffic climb 159% within a single year. That doesn't happen by subscribing to another platform and clicking generate. It comes from deliberate architecture -- the right models, real guardrails, and human judgment applied at exactly the points where it matters.

The catch is real, though. Self-hosting a capable model carries genuine operational overhead, and publishing raw AI output without a quality system is one of the faster ways to earn a Google penalty right now. The teams winning with this approach aren't naive about that tradeoff.

So that's what this guide actually covers: why open-source AI has hit a real inflection point for SEO work, which models and tools are worth your time, and how to build a workflow that's both cost-effective and penalty-proof. There's also a concrete five-step pilot plan so you can test this with a real project before committing your whole content operation.

If you're trying to decide whether an open-source AI content stack belongs in your toolkit, this is the blueprint to make that call clearly.

The State of SEO in 2026: Adaptation, Not Extinction

Let me settle the "is SEO dead?" debate quickly: no. But the job description has changed significantly.

The SEO professionals I talk to aren't worried about SEO disappearing. They're worried about keeping up with how search actually works now. AI Overviews have moved from novelty to a primary KPI. Ranking #1 organically matters less when a synthesized answer sits above the fold and cites three sources, none of which are yours. That's a fundamental shift in what "winning" at search means.

E-E-A-T hasn't just survived the AI era. It's become the central axis around which everything else rotates. Google's quality raters are specifically looking for demonstrated experience, genuine expertise, and credible authorship. You can't fake that with volume alone, and no AI tool, open source or otherwise, manufactures it for you. It has to be built into your content process from the start.

Here's where the tension lives: the competitive pressure to produce more content hasn't eased, it's intensified. Your competitors are using AI. Clients expect faster turnaround. Editorial teams are leaner. The instinct is to automate aggressively and publish at scale.

That instinct has already burned a lot of teams. Google's March 2024 Core Update penalized over 1,400 websites for what it formally classified as "scaled content abuse" -- bulk-produced pages that added no genuine value to users. The sites that got hit weren't using obviously bad tools. They were using the same SaaS platforms many agencies rely on today, just without adequate quality controls in place.

The real problem, then, isn't access to AI. The question isn't whether to use AI for SEO content in 2026 -- that decision is already made. The question is how to use it in a way that scales output without sacrificing the quality signals that search rewards.

Open-source AI tools, built into a thoughtful workflow, are the most credible answer I've found to that question.

Why Open Source AI? The 2026 Value Proposition for SEO

Let me be direct: open source isn't automatically better. It's a different set of trade-offs. The question isn't "is open source good?" -- it's "does this model of working fit my team's actual situation?"

Here's how I think through that.

The Strategic Advantages

Control and data privacy are the underrated wins. When you run your own open-source AI content generator, your content briefs, internal keyword strategies, client data, and draft outputs never touch a third-party server. For agencies handling NDA-bound clients or in-house teams in regulated industries, this isn't a nice-to-have -- it's a compliance requirement. Commercial SaaS tools, regardless of their privacy policies, introduce a data-sharing surface you can't fully audit.

The cost math gets compelling at scale. The number that gets thrown around is 60-80% TCO savings versus managed APIs on steady workloads. That range is real, but it's also highly variable -- it depends on your token volume, hardware choices, and whether you're factoring in staffing costs honestly.

A concrete anchor: self-hosting a 70B-parameter Llama model for a 5M tokens/day workload runs approximately $5,931/month when you include hardware, electricity, colocation, and staffing. That sounds like a lot until you price the equivalent volume through a commercial API, where per-token costs at that scale can run two to three times higher. At lower volumes, the math flips -- which is exactly why this is a trade-off, not a universal truth.
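To see where the math flips for your own volume, a rough break-even calculation helps. The sketch below treats the $5,931/month figure as a fixed cost (it won't shrink much at lower volumes) and uses a hypothetical blended API price of $100 per million tokens, chosen only because it lands in the "two to three times higher" range at 5M tokens/day. Swap in your vendor's real rate before drawing conclusions.

```python
def monthly_api_cost(tokens_per_day: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Cost of pushing a steady daily token volume through a pay-per-token API."""
    return tokens_per_day * days / 1_000_000 * usd_per_million_tokens


def breakeven_tokens_per_day(self_hosted_monthly_usd: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Daily volume at which the API bill equals a fixed self-hosting bill."""
    return self_hosted_monthly_usd / (usd_per_million_tokens * days) * 1_000_000


SELF_HOSTED_MONTHLY_USD = 5_931   # all-in figure quoted in the text
ASSUMED_API_PRICE = 100.0         # hypothetical USD per 1M tokens; replace with your vendor's rate

api_bill = monthly_api_cost(5_000_000, ASSUMED_API_PRICE)
breakeven = breakeven_tokens_per_day(SELF_HOSTED_MONTHLY_USD, ASSUMED_API_PRICE)

print(f"API bill at 5M tokens/day: ${api_bill:,.0f}/month")
print(f"Break-even volume: {breakeven:,.0f} tokens/day")
```

Below the break-even volume the managed API is cheaper under these assumptions; above it, self-hosting starts to pay for itself.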

Customization is where open source genuinely pulls ahead. Using frameworks like LangChain, you can wire your models directly into your CMS, internal analytics, proprietary keyword databases, and brand style guides. No waiting for a SaaS vendor to build the integration you need. No vendor lock-in forcing you to stay on a platform that's raised prices or deprecated features you relied on.

The Operational Realities

Technical overhead is real and often underestimated. Running your own stack means someone on your team -- or a contractor you trust -- needs to handle model deployment, GPU configuration, and pipeline maintenance. If your team's technical ceiling is "comfortable with APIs," self-hosting will be painful without dedicated support.

Setup time is a genuine upfront cost. Expect weeks, not days, to get a production-ready pipeline running. Hardware provisioning, model selection, prompt engineering, and quality testing all take time before you generate a single publishable piece of content.

Model management is ongoing work. The open-source landscape moves fast. Staying current on releases, security patches, and performance improvements isn't a one-time thing -- it's a recurring operational commitment that needs to live on someone's plate permanently.

Should Your Team Use Open-Source AI?

Use this as a quick self-assessment:

| Factor | Lean Open Source | Lean Commercial SaaS |
|---|---|---|
| Technical skill | In-house DevOps/MLOps | Limited technical staff |
| Content volume | High (500K+ tokens/day) | Low-to-medium |
| Data sensitivity | High (client data, NDAs) | Standard |
| Budget flexibility | Upfront CapEx acceptable | OpEx only |
| Timeline | Weeks to implement | Deploy today |

If you checked three or more boxes in the "Lean Open Source" column, the rest of this article is written for you.

The Golden Rule for AI in SEO: Augment, Don't Automate

Here's the principle I apply to every AI-assisted content project I run: AI makes a terrible editor-in-chief but an exceptional research assistant.

That's the whole rule. Everything else flows from it.

The moment your workflow inverts that relationship -- the moment AI is making the strategic calls and humans are just hitting "publish" -- you've built a penalty waiting to happen. Google's March 2024 Core Update penalized over 1,400 websites for exactly this kind of scaled content abuse. Those weren't just sites using AI. They were sites surrendering to it.

On the "30% Rule" -- Let's Retire It

You've probably heard this one: keep AI-generated content under 30% of your final piece. It sounds reassuring. It's also meaningless.

Google doesn't run a percentage detector. What it evaluates is whether content demonstrates genuine experience, expertise, and original insight -- the E-E-A-T signals that no model generates on its own because no model has done anything. A piece that's 90% AI-written but grounded in real case data, edited by a subject-matter expert, and enriched with firsthand observations can outperform a "30% AI" article that's just a human awkwardly stitching together model outputs. The percentage is the wrong metric entirely.

The Framework That Actually Works: The AI-Human Workflow Flywheel

Think of it as two distinct roles operating in sequence, not in competition.

AI's role -- the drafting engine: Synthesizing research across multiple sources, generating structural outlines, producing first-draft body copy, scaling repetitive-but-necessary text like meta descriptions, alt tags, and FAQ schema. These are high-volume, time-consuming tasks where AI delivers genuine leverage.

Your role -- the expertise layer: Setting the strategy before AI touches anything. Engineering the prompts that constrain and direct the output. Fact-checking every claim. Injecting the specific client data, proprietary insights, or firsthand experience that transforms a competent draft into something only your team could have produced. Final approval on everything.

The flywheel spins because each human edit makes your prompts better, which makes the AI drafts better, which means less editing time next round. The cycle compounds.

Why Open-Source Makes This Safer

With a SaaS tool, the workflow is largely opaque. You input, it outputs, and the guardrails are whatever the vendor built.

With an open-source AI content generator, you design the pipeline. You can hard-wire the human checkpoints directly into the process -- not as a policy that someone might skip, but as a structural requirement the system enforces. That's not just better for quality. It's better for your liability exposure too.
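One way to make a checkpoint structural rather than procedural is to model each draft as a small state machine that refuses to publish without a recorded approval. This is a minimal sketch, not a full workflow engine; the `Draft`, `Stage`, and `ReviewRequired` names are hypothetical and would map onto whatever your CMS or queue already uses.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Stage(Enum):
    DRAFTED = "drafted"
    REVIEWED = "reviewed"
    PUBLISHED = "published"


class ReviewRequired(Exception):
    """Raised when something tries to publish a draft no human has approved."""


@dataclass
class Draft:
    slug: str
    body: str
    stage: Stage = Stage.DRAFTED
    reviewer: Optional[str] = None
    notes: List[str] = field(default_factory=list)

    def approve(self, reviewer: str, note: str = "") -> None:
        """Record a named human sign-off; anonymous approvals are rejected."""
        if not reviewer.strip():
            raise ValueError("a named human reviewer is required")
        self.reviewer = reviewer
        if note:
            self.notes.append(note)
        self.stage = Stage.REVIEWED

    def publish(self) -> None:
        """Only drafts in the REVIEWED stage can move to PUBLISHED."""
        if self.stage is not Stage.REVIEWED:
            raise ReviewRequired(f"{self.slug}: no human approval on record")
        self.stage = Stage.PUBLISHED


draft = Draft(slug="austin-plumbing", body="...model output...")
draft.approve("J. Rivera", note="Added local testimonial and verified pricing.")
draft.publish()
```

Because `publish()` raises instead of warning, a batch job that skips review fails loudly rather than shipping unreviewed pages.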

Choosing Your Toolkit: Top Models & Tools for SEO in 2026

If someone asks me "what's the best open-source AI content generator?", my honest answer is: it depends on the task. For most SEO teams starting out, Mixtral 8x7B offers the best balance of permissive licensing, inference speed, and output quality. If you're doing multilingual content or processing long briefs, Gemma 3 is the stronger pick. No universal winner exists -- only the right tool for the specific job.

Here's how the leading models stack up for SEO work:

The Model Comparison

| Model | License | Context Window | Best For in SEO |
|---|---|---|---|
| Mixtral 8x7B | Apache 2.0 | 32k tokens | All-round drafting, high-volume programmatic SEO, speed-critical tasks |
| Gemma 3 | Gemma Terms of Use | 128k tokens, 140+ languages | Long-form content, multilingual localization, large brief processing |
| Llama 4 (Scout/Maverick) | Llama Community License | Up to 1M tokens | Strong generalist tasks, but read the license before deploying |
| Qwen3 Series | Apache 2.0 | 256k tokens (expandable to 1M) | Ultra-long briefs, complex RAG pipelines, agentic workflows |

A few things worth flagging before you pick a model and build around it.

Mixtral's Apache 2.0 license is genuinely clean. No user caps, no attribution theater, no acceptable use policy landmines. For a commercial agency, that matters. The tradeoff is a smaller context window -- 32k tokens handles most article drafts fine, but starts to strain on very long briefs or multi-document retrieval tasks.

Llama 4 is powerful, but "open" is a generous description. Meta's Community License prohibits EU-domiciled companies from using the multimodal variants, requires "Built with Llama" attribution, and restricts organizations above 700M MAUs. For most agencies this is irrelevant in practice -- but your legal team should read it before you ship anything client-facing built on Llama 4. Don't skip that step.

Qwen3's Apache 2.0 license is the cleanest option in the frontier tier. No user limits, no acceptable use policy beyond the license notice itself. If you're building a RAG pipeline that needs to ingest large knowledge bases or chew through multiple documents simultaneously, Qwen3's native 256k context window gives you real room to work.

The Supporting Tool Stack

The model is only one component. What actually makes this system work is the tooling you wrap around it.

Orchestration & RAG

- LangChain -- the connective tissue of any serious open-source content pipeline. Use it to wire your LLM to external data sources, add verification steps, and structure outputs. This is how you implement RAG, which outperforms fine-tuned models by ~16% on ROUGE metrics for factual accuracy.

CMS & Content Integration

- ClassifAI + Ollama -- runs models locally inside WordPress to generate titles, excerpts, and alt text without any external API calls. Honestly worth it if data privacy is a hard constraint.

- CData Connect AI -- connects live WordPress data to LangChain pipelines without copying data. Useful when your content needs to reference live site information.

SEO Operations

- SerpBear -- replaces expensive, keyword-capped SaaS rank trackers. Self-hosted, unlimited keywords, no tier walls.

- Greenflare -- handles large-scale crawls without the per-page throttling you get from SaaS crawlers.

- SEO Panel -- multi-site reporting in one dashboard. Agencies managing 10+ client sites will feel this one immediately.

- SEOJuice -- self-hosted link analysis and opportunity discovery. Customizable codebase means you can adapt it to your specific workflow.

Experimentation

- Unleash / GrowthBook -- open-source A/B testing platforms for running controlled experiments on headlines, meta descriptions, and content variants. GrowthBook's self-hosted edition is MIT-licensed with unlimited users and experiments, which means you can run rigorous content experiments without paying per-seat fees.

None of these tools are plug-and-play. Each one requires setup time and someone technically capable of maintaining it. That's the honest reality.

But once the stack is running, you own it completely -- and that ownership compounds in value every month you're not writing a SaaS renewal check.

Practical Use Cases: Building Your AI-Powered SEO Workflows

Theory is cheap. Here's where open-source AI content generator tooling actually earns its place in your stack -- three concrete workflows I've used or seen running in real agency environments.

Use Case 1: Research, Ideation, and First-Draft Generation

Feed SERP data and a keyword brief into a local model, and it produces a structured outline and working draft. With LangChain orchestrating the pipeline, you can chain the steps so the model first reads your research doc, then generates the outline, then expands each section in sequence.

A prompt template that actually works:

```text
SYSTEM: You are a senior SEO content strategist.
CONTEXT: [PASTE SERP SUMMARY + TOP 5 COMPETITOR HEADINGS]
KEYWORD CLUSTER: [primary_kw], [secondary_kw_1], [secondary_kw_2]
AUDIENCE: [describe reader and their problem]
TASK: Generate a 7-section outline with H2s, a suggested word count per section,
and 2-3 key points to cover in each. Do not write the draft yet.
OUTPUT FORMAT: JSON with keys: section_title, word_count, key_points[]
```

The human step: What comes back is a starting point, not a finished brief. Someone with domain knowledge needs to review it -- kill the weak sections, add angles the model missed, and flag anything that needs sourcing before a single word of draft copy gets written. Skip that step and you're just shipping the model's guesses.
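Before a reviewer even opens the outline, it's worth rejecting responses that ignored the requested format. The sketch below assumes the model returns a JSON array with one object per section, using the keys named in the template's OUTPUT FORMAT line; `parse_outline` is a hypothetical helper, and your model may wrap the JSON differently.

```python
import json

REQUIRED_KEYS = {"section_title", "word_count", "key_points"}


def parse_outline(raw: str, expected_sections: int = 7) -> list:
    """Parse the model's JSON outline and raise ValueError if it breaks the brief."""
    sections = json.loads(raw)  # invalid JSON raises a ValueError subclass
    if not isinstance(sections, list):
        raise ValueError("expected a JSON array of sections")
    if len(sections) != expected_sections:
        raise ValueError(f"expected {expected_sections} sections, got {len(sections)}")
    for i, section in enumerate(sections, start=1):
        if not isinstance(section, dict):
            raise ValueError(f"section {i} is not a JSON object")
        missing = REQUIRED_KEYS - section.keys()
        if missing:
            raise ValueError(f"section {i} is missing {sorted(missing)}")
        if not 2 <= len(section["key_points"]) <= 3:
            raise ValueError(f"section {i} needs 2-3 key points")
    return sections
```

A failed parse is a signal to re-prompt, not to patch the JSON by hand. Anything that passes still goes to the human review described above.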

Use Case 2: Scaling Programmatic and Local SEO

This is the use case most competitors either ignore or get badly wrong.

Programmatic SEO at scale -- generating location or service pages in bulk -- is where open-source tooling delivers a real cost advantage. It's also where penalty risk is highest if you skip the guardrails.

The basic pipeline:

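```python
# Batch local SEO page generator (sketch): drafts go to a review queue, never straight to publish.
import csv
from itertools import product


def load_csv(path):
    """Read a CSV with a header row into a list of dicts."""
    with open(path, newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


locations = load_csv("cities.csv")    # columns: city, state, population, local_landmark
services = load_csv("services.csv")   # columns: service_name, key_differentiator

for loc, svc in product(locations, services):
    city, service = loc["city"], svc["service_name"]
    prompt = f"""
    Write a 400-word intro section for a '{service}' page targeting '{city}'.
    Include: a reference to {city}'s {loc['local_landmark']}, one local pain point,
    and a natural use of the phrase '{city} {service}'.
    Tone: direct, helpful, no corporate filler.
    """
    draft = model.generate(prompt)               # `model` is your own LLM client (placeholder)
    save_to_review_queue(city, service, draft)   # placeholder you supply; NOT auto-publish
```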

For long-context city-plus-service combinations, Qwen's 256K token window handles large batches without truncation. For multilingual local SEO, Gemma 3's support for 140+ languages makes it the right call for non-English markets.

The guardrail that actually matters: every page that comes out of this pipeline goes into a review queue, not directly to production. A local team member or freelancer adds a real photo, a genuine customer testimonial, and at least one hyper-local detail the model couldn't know. That's the difference between a page that ranks and a page that gets your site flagged for scaled content abuse. Not optional.

Use Case 3: Metadata, Schema, and Accessibility at Scale

Nobody talks about this one, but they should.

Writing meta descriptions for 800 product pages, generating alt text for an image library, building schema markup across a site -- this is the work that quietly eats hours every week. It's also where AI pays back its operational cost fastest.

The toolchain that works:

- Meta descriptions: A simple Python script hitting your local Mixtral instance, fed a page title + H1 + first paragraph, outputting a 150-character description with the target keyword in the first 60 characters.

- Alt text: ClassifAI + Ollama running locally inside WordPress -- generates descriptive alt text without sending images to an external API. Non-negotiable for any client with data privacy requirements.

- Schema markup: Moonlit Platform can generate structured data for hundreds of pages in minutes, which is hours of manual JSON-LD work gone.
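For the meta description script, here is a minimal sketch that talks to a local Ollama server over its HTTP API using only the standard library. It assumes Ollama is running on its default port with a Mixtral model pulled under the tag `mixtral`; the prompt wording and function names are my own, so adjust them to your brand guidelines.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_prompt(title: str, h1: str, first_paragraph: str, keyword: str) -> str:
    """Give the model the page context before asking for the description."""
    return (
        "Write one meta description for the page below.\n"
        "Rules: at most 150 characters, include the target keyword within the "
        "first 60 characters, no quotation marks, return only the description.\n\n"
        f"TARGET KEYWORD: {keyword}\n"
        f"TITLE: {title}\n"
        f"H1: {h1}\n"
        f"FIRST PARAGRAPH: {first_paragraph}\n"
    )


def build_request(prompt: str, model: str = "mixtral") -> urllib.request.Request:
    """Package the prompt as a non-streaming POST to the generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )


def generate_meta_description(title: str, h1: str, first_paragraph: str, keyword: str) -> str:
    """Call the local model and return its text; requires a running Ollama server."""
    request = build_request(build_prompt(title, h1, first_paragraph, keyword))
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"].strip()
```

Nothing leaves the machine: the only network call is to localhost, which is the whole point for privacy-constrained clients.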

The human step here is lighter than in the previous two workflows, but it doesn't disappear entirely. Spot-check 10% of outputs for accuracy, run a schema validator on a sample, and confirm meta descriptions aren't duplicating across pages. Automation handles the volume. A human catches the systematic errors before they compound.
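The systematic checks are easy to script so the human spot-check can focus on accuracy. This sketch flags descriptions that break the 150-character and first-60-characters rules from the toolchain above, detects exact duplicates, and draws the 10% sample. The function names and the URL-keyed dictionaries are assumptions about how you store the batch.

```python
import random
from collections import Counter


def audit_meta_descriptions(descriptions, keywords, max_length=150, keyword_window=60):
    """Return {url: [issues]} for descriptions that break length, keyword, or uniqueness rules."""
    counts = Counter(text.strip().lower() for text in descriptions.values())
    problems = {}
    for url, text in descriptions.items():
        issues = []
        if len(text) > max_length:
            issues.append(f"longer than {max_length} characters")
        # The whole keyword must fit inside the opening window, not merely start there.
        if keywords[url].lower() not in text[:keyword_window].lower():
            issues.append(f"keyword not in first {keyword_window} characters")
        if counts[text.strip().lower()] > 1:
            issues.append("duplicate of another page")
        if issues:
            problems[url] = issues
    return problems


def spot_check_sample(urls, fraction=0.10, seed=None):
    """Pick a random subset of pages for manual review (at least one if any exist)."""
    urls = list(urls)
    if not urls:
        return []
    k = max(1, round(len(urls) * fraction))
    return random.Random(seed).sample(urls, k)
```

Exact-match duplicate detection won't catch near-duplicates with one word changed; treat it as a floor, not a guarantee.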

Implementing Guardrails: Your Penalty-Proof Quality System

Let me address the core fear directly: AI-generated content isn't bad for SEO. Poor implementation is. Google's Danny Sullivan has said it plainly -- "AI origin is not a ranking factor. Helpfulness, originality, and intent are." The March 2024 Core Update that penalized over 1,400 websites wasn't targeting AI content. It was targeting scaled, low-value content with no human oversight -- content that happened to be AI-generated because that's the cheap path to volume.

The distinction matters. Your goal isn't to hide that you used AI. It's to make sure everything that ships is genuinely useful.

The Core Guardrail: Your RAG Pipeline

The single most effective technical safeguard against AI content failure is retrieval-augmented generation -- RAG. The concept is simpler than it sounds.

A standard LLM writes from memory. It predicts plausible text based on its training data, which means it can confidently state things that are wrong -- a problem called hallucination. RAG fixes this by forcing the model to read before it writes.

Here's the flow in plain terms:

User Query / Brief

↓

Fetch relevant sources (your internal docs, top-ranking SERP content, brand guidelines)

↓

Inject those sources into the prompt as context

↓

Model generates content grounded in what it just read

↓

Output with citations you can verify

You're not asking the model to remember facts. You're handing it a research pack and asking it to write from that. RAG outperforms fine-tuned models on factuality metrics by roughly 16% in ROUGE scores, and properly implemented RAG pipelines can reduce hallucination rates by 70-90%. In practice, that means fewer invented statistics, fewer phantom citations, and fewer embarrassing factual errors that slip past a tired editor.

In LangChain, setting this up means connecting your vector store -- your internal knowledge base, past articles, product docs -- to the generation step. The model only writes what it can retrieve and attribute.
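To make the flow concrete without tying it to any framework version, here is a dependency-free sketch. It deliberately swaps the vector store for a naive word-overlap retriever, which is far weaker than embedding search but shows the same shape: retrieve, inject as numbered sources, then instruct the model to cite. All function names are hypothetical.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, k: int = 3) -> list:
    """Rank document ids by how many query words they share; drop zero-overlap docs."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(body)), doc_id) for doc_id, body in documents.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:k]]


def build_grounded_prompt(brief: str, documents: dict, k: int = 3) -> str:
    """Inject the retrieved sources into the prompt and require numbered citations."""
    doc_ids = retrieve(brief, documents, k)
    sources = "\n\n".join(
        f"[{n}] ({doc_id}) {documents[doc_id]}" for n, doc_id in enumerate(doc_ids, start=1)
    )
    return (
        "Write only from the numbered sources below. Cite each claim as [n]. "
        "If the sources do not cover something, say so instead of guessing.\n\n"
        f"SOURCES:\n{sources}\n\nBRIEF:\n{brief}"
    )


knowledge_base = {
    "pricing": "Our plan costs 49 dollars per month with annual billing.",
    "history": "Founded in 2012 in Austin.",
}
print(build_grounded_prompt("How much does the plan cost per month?", knowledge_base))
```

In a production pipeline the `retrieve` step is where your vector store plugs in; the prompt assembly and the citation instruction stay the same.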

The Quality Assurance Checklist

RAG reduces the risk of bad output. It doesn't eliminate the need for human review. Every piece of content that goes through your open-source AI content generator pipeline should clear these six gates before it publishes:

1. Strategic Alignment Check Does this piece actually serve a search intent and a business goal? AI drafts to a brief -- but the brief has to be right first. A human sets that direction.

2. Fact & Citation Verification Even with RAG in place, spot-check every specific claim, statistic, and named source. If you can't verify it in 30 seconds, cut it or replace it with something you can.

3. Expertise Infusion This is where you earn your E-E-A-T signals. Add a real-world example, a client observation, a contrarian take, or proprietary data that no model could have generated. This is the step that separates content that ranks from content that merely exists.

4. Originality & AI-Detection Scan Run every draft through an originality checker before publishing. Originality.ai flags 94.3% of Mixtral-generated content as AI-written even after light editing. Heavy editing and expertise infusion -- step 3 -- will move that needle, but you need to measure it.

5. On-Page SEO Fundamentals AI models don't automatically nail heading hierarchy, internal linking, image alt text, or meta description length. A human -- or a structured post-processing script -- needs to verify these. Don't assume the model handled it.

6. Final Read-Through for Tone & Trust Read it out loud. Does it sound like a person with genuine knowledge wrote it, or does it sound like a plausible arrangement of industry phrases? If it's the latter, it's not ready. Google's 2025 Quality Rater Guidelines now instruct raters to apply the lowest quality rating to pages where content is AI-generated with no detectable originality. That's the bar you're clearing -- or failing.

This checklist adds time. Expect 20-45 minutes of human effort per article on top of generation time. That's still a fraction of writing from scratch, and it's the difference between a content operation that compounds over time and one that quietly accumulates liability.
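Part of gate 5 can be handed to a post-processing script. The sketch below checks heading hierarchy and missing alt text on rendered HTML using regular expressions. That's a rough heuristic rather than a real HTML parser, so treat a clean result as "no obvious problems", not as proof.

```python
import re


def heading_issues(html: str) -> list:
    """Flag a missing or repeated <h1> and any jump that skips a heading level."""
    levels = [int(level) for level in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one <h1>, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"heading jumps from h{previous} to h{current}")
    return issues


def images_missing_alt(html: str) -> list:
    """Return <img> tags that have no alt attribute or an empty one."""
    tags = re.findall(r"<img\b[^>]*>", html, flags=re.IGNORECASE)
    has_alt = re.compile(r"\balt\s*=\s*[\"'][^\"']+[\"']", flags=re.IGNORECASE)
    return [tag for tag in tags if not has_alt.search(tag)]


page = '<h1>Guide</h1><h3>Details</h3><img src="a.png"><img src="b.png" alt="Chart">'
print(heading_issues(page))
print(images_missing_alt(page))
```

Note that purely decorative images can legitimately carry an empty alt attribute, so a flagged tag is a prompt for a human decision, not an automatic failure.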

Getting Started: Your 5-Step Pilot Project Plan

The quality system from the previous section might sound like a lot to absorb. It isn't. Run it a few times and it becomes muscle memory. But you need somewhere to start: something small enough to actually finish, and meaningful enough to teach you something real.

Here's the pilot plan I'd hand any SEO team starting this today.

Step 1: Define a Contained Project

Resist the urge to automate everything at once. Pick one task with a clear success metric, something like regenerating meta descriptions for your 50 top-performing blog posts. It's high-leverage (meta descriptions directly affect click-through rate), low-risk (you're not publishing new pages), and fast to measure.

Step 2: Choose Your Entry Point

You have two honest options:

- Option A (cloud-hosted model instance): Use a provider like Together AI or Replicate to call an open model via API. No GPU required, minimal setup. Good for validating output quality before committing to infrastructure.

- Option B (local runtime): Tools like GPT4All (MIT license, runs on a laptop) or Ollama give you full data privacy and zero per-token costs. Slightly more setup, but you'll understand the stack better for it.

Want speed? Start with Option A. If data privacy is a hard requirement, go with Option B.

Step 3: Set Up a Basic RAG

Don't let "RAG pipeline" intimidate you. For a meta description pilot, this is simply: feed the model the existing page title, H1, first paragraph, and target keyword as context before asking it to generate. A basic Python script or a LangChain starter template handles this in under an hour.

Step 4: Generate, Edit, and Publish

Run the Quality Assurance Checklist from the guardrails section above. Log the time it takes per description and compare it to your historical manual rate. Track the numbers; you'll need them to justify the next phase.

Step 5: Measure and Iterate

After 4-6 weeks, compare click-through rates on the updated pages against your historical baseline. Refine your prompt based on what underperformed. That feedback loop is the whole game.
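The comparison itself is simple arithmetic, but writing it down keeps everyone measuring the same thing. In this sketch the click and impression counts are made-up placeholders; pull the real ones from your search performance export for matching date ranges.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; zero impressions yields 0.0."""
    return clicks / impressions if impressions else 0.0


def relative_lift(baseline: float, current: float) -> float:
    """Change relative to the baseline, e.g. 0.25 means 25% higher."""
    if baseline == 0:
        raise ValueError("baseline CTR is zero; relative lift is undefined")
    return (current - baseline) / baseline


# Placeholder numbers for one page, before and after the rewrite.
before = ctr(clicks=120, impressions=4000)
after = ctr(clicks=150, impressions=4000)

print(f"CTR before: {before:.2%}, after: {after:.2%}, lift: {relative_lift(before, after):+.1%}")
```

Seasonality and ranking changes also move CTR, so compare like-for-like periods and be cautious about reading much into a single page.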

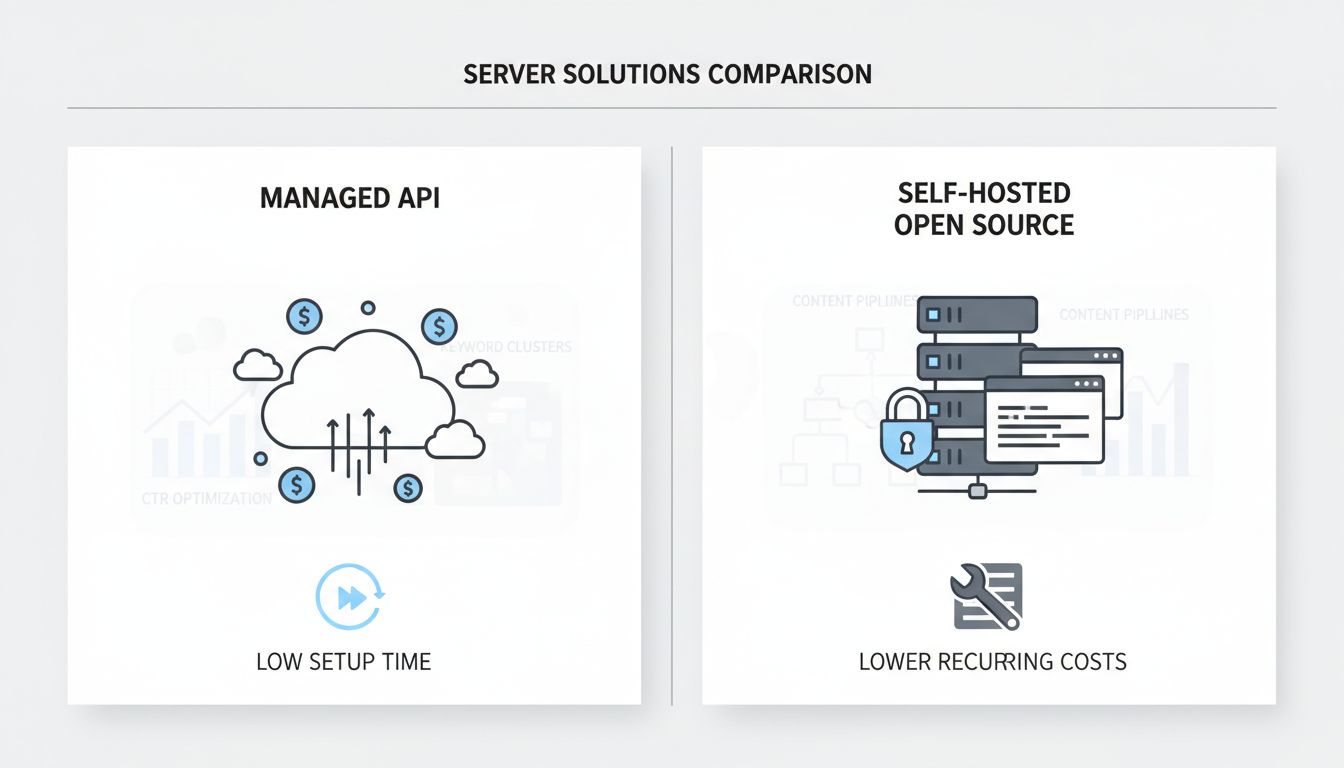

Cost-Benefit Snapshot: Managed API vs. Self-Hosted Open Source

| Factor | Managed API | Self-Hosted Open Source |
|---|---|---|
| Monthly cost (5M tokens/day) | ~$15,000–$20,000+ | ~$5,931 (all-in) |
| Setup time | Hours | Days to weeks |
| Data privacy | Vendor-dependent | Full control |
| Customization | Limited | Unlimited |
| Operational overhead | Low | Medium–High |

This isn't a full-scale migration. It's an experiment with a defined scope, a measurable outcome, and a clear off-ramp if things go sideways. That's exactly how it should start, and honestly, the teams that treat it that way are the ones that actually ship something useful instead of spending three months planning a rollout that never happens.

The Bottom Line on Open-Source AI for SEO

Everything in this article points to the same conclusion: the most defensible SEO content operation you can build in 2026 isn't the one burning money on SaaS subscriptions. It's the one with the most control.

An open-source AI content generator isn't a budget compromise. Used correctly, it's the foundation of a future-proof SEO stack that bends to your workflows, protects your data, and doesn't send a price increase notice every January.

But the technology is only half the equation. The teams winning with open-source AI right now aren't the ones who automated the most. They're the ones who automated the right things. AI handles the repeatable, high-volume work: drafts, metadata, clustering, schema. Humans bring the experience, judgment, and credibility that Google's quality raters are specifically trained to look for. That division isn't a workaround. It's the strategy.

The practical architecture isn't complicated, either. Hybrid systems combining an open model like Mixtral or Gemma with a RAG pipeline for factuality, a self-hosted rank tracker like SerpBear, and a human editorial review step outperform both pure automation and pure manual production on cost, consistency, and penalty risk.

None of this requires a six-month migration project.

This week, pick one repetitive task -- meta descriptions, keyword clustering, local page templating. Find the GitHub page for SerpBear or the Hugging Face page for Mixtral. Read the docs. Run a private test. Join the community Discord.

The future of SEO belongs to teams that build adaptable systems. Start building yours.

Frequently Asked Questions

Is SEO dying due to AI?

No, but the job description is changing fast. The SEO role is shifting from technical execution toward strategic content system design: setting up AI pipelines, interpreting performance signals across both traditional rankings and AI Overviews, and ensuring content demonstrates genuine E-E-A-T. If anything, AI has raised the floor on what "good" content means, which makes skilled SEO professionals more valuable, not less.

What is the "30% rule" for AI content?

Honestly, the 30% rule is a flawed shortcut with no basis in Google's actual guidelines. The idea that keeping AI-written content below some arbitrary threshold keeps you safe just isn't how this works. Google penalizes scaled low-quality content, not AI content as a category. [Source: averi.ai]

A more honest framework: let AI handle the heavy lifting of initial drafts and structural scaling (call it 80% of the volume), but reserve human strategy, subject-matter expertise, and final editorial judgment for the 20% that actually determines whether content ranks and converts.

Is AI-generated content bad for SEO?

Unedited, low-value AI content absolutely is. But AI content itself? No.

Google's March 2024 Core Update penalized over 1,400 websites specifically for "scaled content abuse," which targets mass-produced pages with no human oversight or original insight, regardless of whether a human or a machine wrote them. [Source: averi.ai] The fix is straightforward: run a RAG pipeline to reduce hallucinations, apply a human editorial pass for E-E-A-T signals, verify facts, and never bulk-publish without review. Follow those steps and AI-generated content gets treated the same as anything else.

What is the best AI to use for SEO?

There's no single answer. The right model depends on the task.

For most SEO teams building an open-source AI content generator workflow in 2026, Mixtral 8x7B is the strongest default for high-volume drafting: it's Apache 2.0 licensed (commercially permissive), runs inference roughly 6x faster than Llama 2 70B, and handles a 32K token context window. [Source: mistral.ai] If you're doing long-form content, large briefs, or multilingual work across 140+ languages, Gemma 3 is the better fit thanks to its 128K context window. [Source: ai.google.dev]

For a full breakdown of which model fits which task, see the comparison table in the toolkit section above.

Which 3 SEO jobs will survive AI?

The roles that survive are the ones AI genuinely can't replicate:

- SEO Strategist -- Defines business goals, interprets ambiguous data, and understands the human intent behind search queries. AI optimizes for patterns; strategists decide which patterns matter.

- Expert Editor / Subject Matter Expert -- Infuses the lived experience, nuanced opinion, and original insight that Google's E-E-A-T framework specifically rewards. This is the 20% that separates content that ranks from content that gets penalized.

- Technical SEO Architect -- Designs and maintains the integrated systems: RAG pipelines, self-hosted model infrastructure, quality guardrails, and A/B testing frameworks. As AI becomes central to content operations, someone has to build and own the engine.

What should you never say to an AI when generating SEO content?

A few prompts that consistently produce weak output:

- "Write a 1,000-word article about [topic]." -- No role, no audience, no angle. You'll get generic filler.

- "Generate content that ranks #1." -- The model has no idea what's currently ranking or why. This prompt produces confident-sounding nonsense.

- "Use these exact keywords 15 times." -- You're prompting the model to stuff, not to write. The output will read like it was written for a bot, because it was.

Always give the model a clear role ("You are a senior technical SEO consultant writing for agency founders"), a specific audience, a defined angle, and examples of the tone you want. Vague inputs produce vague outputs -- that's not an AI problem, it's a prompting problem.