May 3rd, 2026

The Complete Guide to SEO Content Strategy for AI-Driven Search

Warren Day

If you're an SEO manager at a B2B tech company, you've probably watched your organic traffic slide while AI Overviews quietly took over. They now show up in over 60% of U.S. queries. Top-position CTR is down as much as 58%.

This isn't the end of SEO. It's just a different game.

Traditional keyword targeting and backlink building still matter, but they're not enough anymore. AI systems synthesize answers from across your whole domain, not just from your best-ranking page. What worked when I was building content systems for media companies five years ago doesn't cut it now.

The metrics have shifted too. Position 1 rankings and organic CTR are being replaced by things like citation frequency in AI Overviews, domain authority across entire topic clusters, and structured data that machines can actually extract.

So the SEO content strategy you built in 2022 probably needs a full rethink, from technical infrastructure to content creation workflows. Not a tweak. A rethink.

This guide walks through how to adapt, drawing from my experience building Spectre, an AI-powered SEO platform that integrates directly with DataForSEO and Ahrefs APIs. We'll cover the frameworks that make content AI-legible, the technical adjustments that improve extractability, and the measurement approaches that track what actually matters now. The whole thing functions as a working SEO strategy template, sized to your team's resources, not some generic playbook that ignores where your domain rating actually sits.

How AI Search Changes the SEO Game

If your SEO content strategy still revolves around chasing the #1 organic ranking, you're already behind. Google AI Overviews now appear in 60.32% of U.S. queries. That's not a trend to watch. That's the current reality.

What is AI-Driven Search in 2026?

Instead of ten blue links, systems like Google's AI Mode generate synthesized answers pulled from multiple sources, often in conversational, multi-turn formats. Google calls part of this "query fan-out", breaking a complex query into sub-queries, then reassembling the answers.

The key difference from traditional search: clicks. Traditional search sent people to your site. AI search often just... answers the question right there. No click needed.

That changes the economics of organic traffic pretty significantly.

Quantifying the Impact: Traffic and CTR Declines

According to Ahrefs' February 2026 update, organic CTR for position #1 drops by 58% when an AI Overview is present. Across top-ranking pages, AI Overviews reduce clicks by 34.5% compared to pre-AI results.

The part that should worry SEO managers most: long-tail queries, the four-plus-word searches that have always driven conversion-ready traffic, trigger AI Overviews 60.85% of the time.

So the traffic you worked hardest to capture is exactly what's getting absorbed. The assumption that ranking well equals traffic doesn't hold the way it used to.

Is SEO Dead? Addressing the Evolution Question

SEO isn't dead. The traditional playbook is.

The discipline is shifting from keyword rankings to citation mechanics, from CTR optimization to AI visibility measurement. The question is no longer "how do we rank for this keyword?" It's "how do we become the source AI systems actually cite?"

The mechanics are different now: structured data, clear answer formatting, E-E-A-T signaling. But the underlying goal is the same: create content that genuinely answers what people are looking for. The difference is you're now answering for both humans and the AI systems serving them, which is essentially what any good SEO strategy template has to account for at this point.

Core Content Principles and Frameworks for AI Search

The shift from writing for humans to writing for AI systems isn't subtle. It changes what "good content" even means. Machine readability, structured extraction, trust signaling, these are the things AI Overviews actually care about.

The 5 C's of Content for AI Search

Clarity means declarative sentences AI can lift verbatim. Instead of "Our platform may enhance workflow efficiency," say "Our API automation platform reduces manual data entry by 78%." Specific and factual gets cited. Vague and hedged gets ignored.

Context prevents hallucination. When discussing Kubernetes security, don't just list tools. Explain the threat model, the compliance requirements (SOC 2, ISO 27001), the typical deployment architectures. AI uses that background to ground its answers.

Credibility comes from verifiable sources and real author credentials. Cite specific research papers, link to official documentation, point to your team's GitHub profiles or conference talks. Only 38% of AI Overview citations now come from the top 10 pages, so authority signals beyond ranking actually matter here.

Consistency in terminology across your content helps AI understand your expertise. If you call it "CI/CD pipeline" in one article and "continuous deployment workflow" in another, you dilute topical authority.

Completeness means covering a topic exhaustively. A pillar page on "API rate limiting" should include implementation code, best practices, monitoring strategies, and common pitfalls. AI prefers comprehensive sources it can reference confidently.

The 3 C's of SEO Revisited: Content, Code, Credibility

The old SEO triad still applies, just with AI-specific twists.

Content quality now includes structure for extractability: clear headings, FAQ blocks, and comparison tables that AI can actually parse.

Code means technical foundations optimized for AI crawlers: semantic HTML, proper heading hierarchies, fast Core Web Vitals. AI systems prioritize content they can access and process efficiently.

Credibility is where the real competition is now. E-E-A-T signaling through author bios with verifiable experience, case studies with real data, and transparent methodology pages tells AI systems your content is worth citing.

Applying the 80/20 Rule to AI SEO

Focus 80% of your effort on the 20% of content with the highest AI search potential.

Long-tail queries (four or more words) trigger AI Overviews 60.85% of the time. So for your SEO content strategy, that means prioritizing comprehensive guides that answer specific technical questions, not broad competitive terms you'll never own anyway.

For B2B tech companies specifically, that looks like deep-dive implementation tutorials, architecture comparisons, and migration case studies. A 5,000-word guide on "Migrating from Monolithic to Microservices on AWS" has more AI citation potential than ten 500-word posts on general cloud computing.

Other Marketing Frameworks in the AI Context

The 3-3-3 rule for content planning adapts well here: three top-funnel pieces (educational guides), three mid-funnel (comparisons, implementation guides), and three bottom-funnel (case studies, ROI calculators) per quarter. Each serves a different AI query intent.

The 7 pillars of marketing take on new dimensions too. "Product" content needs detailed specifications and API documentation. "People" means showcasing team expertise through author pages. "Process" means documenting your methodology transparently. All of it becomes signal for AI systems evaluating whether you know what you're talking about, which is exactly what any serious SEO strategy template has to account for now.

The surprising insight?

AI search rewards depth over breadth. A single, well-structured 10,000-word technical guide can generate more AI citations than fifty shorter articles, because it gives AI systems the comprehensive coverage they need to answer complex queries with confidence.

Technical SEO Adjustments for AI Assistants

Traditional SEO got humans to click links. AI-driven SEO gets machines to extract and cite your content directly.

That's a different problem. And it requires different technical decisions about how you structure, expose, and maintain your site's data.

The key insight: AI systems don't just "read" pages. They parse, extract, and ground information. When a query triggers an AI Overview, the system is looking for clean, machine-readable data it can confidently attribute. That's why only 38% of AI Overview citations now come from the top 10 pages. Authority still matters, but technical accessibility often determines whether your content gets used at all.

Structured Data and Schema Markup for AI

Schema markup is no longer optional decoration. It's the primary way you tell AI systems what your content actually means.

Building Spectre taught me that implementing structured data at scale requires a systematic approach. Here's a practical JSON-LD snippet for a technical FAQ page that consistently gets cited:

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "How do I implement IndexNow for real-time indexing?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "IndexNow requires submitting a POST request to search engine endpoints with your URL and API key. The protocol supports bulk submissions and is currently adopted by Bing, Yandex, and Seznam."
    }
  }]
}
</script>

Essential schema types for B2B tech companies:

- FAQPage for support and technical documentation

- HowTo for tutorials and implementation guides

- Article with author and datePublished for blog content

- SoftwareApplication for product pages

The friction point most teams miss? Schema validation isn't enough.

You need to test extraction using Google's Rich Results Test and monitor which structured data actually appears in AI responses through tools like Bing's AI Performance Report.
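Before a page ever reaches the Rich Results Test, a cheap local check catches the obvious mistakes. The sketch below is only a shape check for the FAQPage snippet shown earlier, not a replacement for Google's validator; the function name `faq_schema_problems` and the specific rules it enforces are my own assumptions for illustration.

```python
import json


def faq_schema_problems(raw_json_ld: str) -> list[str]:
    """Return readable problems with a FAQPage JSON-LD string (empty list = shape looks fine)."""
    try:
        data = json.loads(raw_json_ld)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]

    problems = []
    if data.get("@type") != "FAQPage":
        problems.append("@type is not FAQPage")

    entities = data.get("mainEntity")
    if isinstance(entities, dict):  # a single question may be given as an object
        entities = [entities]
    if not entities:
        problems.append("mainEntity is missing or empty")
        return problems

    for index, question in enumerate(entities):
        if not isinstance(question, dict):
            problems.append(f"question {index} is not an object")
            continue
        if question.get("@type") != "Question":
            problems.append(f"question {index} has the wrong @type")
        if not question.get("name"):
            problems.append(f"question {index} has no name")
        answer = question.get("acceptedAnswer") or {}
        if not isinstance(answer, dict) or not answer.get("text"):
            problems.append(f"question {index} has no acceptedAnswer text")
    return problems
```

Run it over the contents of each `application/ld+json` script tag in your build step and fail the build when the list is non-empty.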

Site Architecture and Knowledge Graph Optimization

AI systems build internal knowledge graphs to understand relationships between entities. Your site architecture should mirror that structure.

Create clear topical clusters where pillar pages link to related sub-topics using descriptive anchor text. If your pillar page is "Enterprise API Security," link to cluster pages like "OAuth 2.0 Implementation," "API Rate Limiting Best Practices," and "Zero Trust Architecture for APIs." Use semantic HTML: proper <h1>–<h6> hierarchy, <table> for comparison data, <dl> for definition lists.
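Heading hierarchy is easy to audit automatically. Here's a minimal sketch using only Python's standard library; the two rules it applies (exactly one `<h1>`, no skipped levels on the way down) are common conventions I'm assuming, not requirements published by any search engine.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is all we need to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels

    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one <h1>, found {levels.count(1)}")
    # Going deeper by more than one level (h2 -> h4) breaks the outline;
    # jumping back up (h3 -> h2) is fine.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"<h{previous}> is followed by <h{current}> (skipped level)")
    return problems
```

Feed it the rendered HTML of each pillar and cluster page, since templates often inject headings that never show up in the CMS editor.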

Sitemap hygiene matters more than people think. Your XML sitemap should include only canonical URLs and update within 24 hours of content changes. I've automated this in Spectre using Python's sitemap library:

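# Assumes a third-party `sitemap` package that exposes this Sitemap API.
from sitemap import Sitemap

sitemap = Sitemap()
# Register canonical URLs only.
sitemap.add_url('https://example.com/guide/api-security', changefreq='weekly', priority=0.8)
sitemap.write('sitemap.xml')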
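If you'd rather not depend on a third-party package, the same idea can be sketched with Python's standard library. This is an illustrative alternative, not the Spectre implementation; the entry fields (`loc`, `lastmod`, `canonical`) are hypothetical names for whatever your CMS exports.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[dict]) -> bytes:
    """Build sitemap XML, keeping only entries whose URL is its own canonical."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        # Skip non-canonical variants (tracking parameters, duplicates, etc.).
        if entry.get("canonical", entry["loc"]) != entry["loc"]:
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = entry["loc"]
        if "lastmod" in entry:
            ET.SubElement(url, "lastmod").text = entry["lastmod"]
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    xml_bytes = build_sitemap([
        {"loc": "https://example.com/guide/api-security", "lastmod": "2026-05-01"},
    ])
    with open("sitemap.xml", "wb") as handle:
        handle.write(xml_bytes)
```

Regenerating the file from the CMS on every publish event is the simplest way to stay inside the 24-hour window.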

Instant Indexing with IndexNow and API Exposure

Long-tail queries trigger AI Overviews 60.85% of the time. Freshness is a real competitive advantage here.

IndexNow gives search engines instant notification when your content changes. Setting it up:

- Generate a key file (e.g., b8d6a9e7f4c3.txt) in your site root

- Implement the protocol in your CMS or via API

- Submit URLs when publishing or updating content
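The steps above can be sketched in a few lines of Python. This assumes the shared `api.indexnow.org` endpoint and a key file that sits at the site root and contains the key itself; the helper names are mine, and the key shown is the placeholder from the list above, not a real one.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) a bulk IndexNow submission."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file lives at the site root and is named after the key.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request: urllib.request.Request) -> int:
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


if __name__ == "__main__":
    request = build_indexnow_request(
        "example.com", "b8d6a9e7f4c3", ["https://example.com/guide/api-security"]
    )
    print(submit(request))
```

Keeping the request builder separate from the network call makes it easy to unit test the payload and to hook `submit` into a publish webhook later.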

For B2B tech companies, exposing verified APIs gives AI systems direct access to your most current data. Build machine-readable endpoints for product specs, pricing tiers, and API references. Use OpenAPI/Swagger specifications and make sure endpoints return clean JSON-LD or microdata.

The engineering challenge is balancing exposure with security. Rate limiting, authentication for sensitive data, comprehensive logging to monitor AI crawler access patterns. You need all of it.
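For the logging piece, even a rough tally of crawler hits tells you whether your endpoints are being read at all. Here's a small sketch that counts requests per crawler from access-log lines. The user-agent tokens are an assumed starting list, so check each vendor's documentation and adjust it to the crawlers you care about.

```python
from collections import Counter

# Assumed, non-exhaustive list of user-agent substrings to look for.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "bingbot", "Googlebot")


def count_crawler_hits(log_lines) -> Counter:
    """Count log lines per crawler token (case-insensitive, first match wins)."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
                break
    return hits
```

User-agent strings can be spoofed, so treat these counts as a signal for monitoring rather than as the basis for access control.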

Core Web Vitals and Technical Health

AI crawlers have limited compute. They prioritize sites that load fast and render clean.

Core Web Vitals (LCP, INP, CLS) directly affect how thoroughly AI systems can parse your content. In my consulting work with media companies, pages with LCP under 2.5 seconds got crawled more frequently and more deeply. A single 404 in your main navigation can cause AI crawlers to abandon deeper content entirely.

Use automated monitoring with Google Search Console's Core Web Vitals report combined with custom scripts checking:

- Canonicalization consistency

- Redirect chains (aim for ≤1 redirect)

- Duplicate content issues

- Mobile responsiveness
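As one example of such a custom script, here's a sketch of the redirect-chain check. It works on a redirect map you've already exported (source path to target path), so it runs offline; the function names and the map format are assumptions for illustration.

```python
def redirect_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow redirects from `start`, stopping at a loop or after max_hops."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in chain[:-1]:  # redirect loop detected
            break
    return chain


def chains_over_limit(redirects: dict[str, str], limit: int = 1) -> dict[str, list[str]]:
    """Return every source URL whose chain needs more than `limit` redirects."""
    flagged = {}
    for url in redirects:
        chain = redirect_chain(url, redirects)
        if len(chain) - 1 > limit:
            flagged[url] = chain
    return flagged
```

Anything this flags should be collapsed so the original URL points straight at the final destination.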

The checklist for featured snippet inclusion, and honestly for any serious SEO content strategy or SEO strategy template worth following, comes down to this:

- Implement comprehensive schema markup (FAQPage, HowTo, Article)

- Structure site architecture around topical clusters with semantic internal linking

- Set up IndexNow for instant indexing notifications

- Expose machine-readable APIs for key product and technical data

- Optimize Core Web Vitals (LCP <2.5s, INP <200ms, CLS <0.1)

- Maintain sitemap and robots.txt hygiene

- Monitor broken links and canonicalization weekly

- Test structured data extraction using Google's Rich Results Test

- Implement automated technical SEO monitoring

- Track AI citation performance through Bing Webmaster Tools

Content Strategy and Workflow Overhauls for AI

What does it actually mean to treat content as a system?

Most teams don't. They write articles. One at a time, loosely connected, chasing whatever keyword looks good that week. In the media companies I've worked with, the ones that scaled didn't do that. They engineered a system. And for AI search, that system needs a pretty serious rethink.

Topic Ownership with Pillar and Cluster Models

Stop chasing individual keywords.

AI Overviews trigger on long-tail queries 60.85% of the time, and AI systems need to understand your expertise across entire topics, not just specific phrases [Source: E2M Solutions]. That's a different problem than ranking for one term.

This is where tools like MarketMuse and Clearscope become essential. Not just for keyword suggestions, but for mapping semantic relationships between concepts. Monday.com increased blog traffic by 1,570% by building topic clusters around pillar pages that actually served as authoritative hubs.

The practical problem? Most pillar pages are too thin. A real pillar page is the definitive resource on a topic, with deep internal linking to cluster content covering every conceivable angle.

Your linking strategy signals topical authority to AI systems more effectively than backlinks ever could. That's worth sitting with for a second.
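Thin internal linking is easy to detect once you have a crawl export. A minimal sketch, assuming you can produce a mapping from each page URL to the set of internal URLs it links to (the `links` structure and function name are hypothetical):

```python
def cluster_link_gaps(pillar: str, clusters: list[str], links: dict[str, set[str]]) -> list[str]:
    """List missing pillar-to-cluster and cluster-to-pillar links."""
    gaps = []
    pillar_links = links.get(pillar, set())
    for page in clusters:
        if page not in pillar_links:
            gaps.append(f"{pillar} does not link to {page}")
        if pillar not in links.get(page, set()):
            gaps.append(f"{page} does not link back to {pillar}")
    return gaps
```

An empty result means every cluster page is reachable from the pillar and points back to it, which is the minimum for the hub structure described above.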

Hybrid Human-AI Content Creation Workflows

I've built AI content platforms. The biggest mistake I see is treating AI as a replacement for writers rather than a research assistant.

Google's guidance is clear: maintain editorial oversight of AI-generated content.

The workflow that actually works: human strategists define the angle and structure, AI handles research and initial drafting, human editors add original insights and verify accuracy. Prompt optimization matters too. Clear instructions, splitting complex tasks, providing reference text. It dramatically improves output quality.

The gotcha is originality. AI defaults to generic phrasing. Your editors need to inject specific examples, counterintuitive points, and real-world friction that only comes from experience.

E-E-A-T Signaling and Provenance Enhancement

92.36% of AI Overview citations come from domains already ranking in the top 10 [Source: Dataslayer]. You can't fake authority. But you can build it systematically.

Author bios need verifiable credentials. Not just job titles. Links to their work, certifications, industry recognition. First-hand content like case studies, original experiments, and detailed implementation guides carries more weight than third-party summaries.

Citations work differently for AI too. Instead of just linking to sources, include a brief explanation of why each source is credible. It helps reduce hallucination risks when AI systems synthesize your content.

Intent Mapping and Full-Funnel Content

AI search doesn't distinguish between informational and commercial intent. It serves whatever the user asks for. Your SEO content strategy shouldn't treat those as separate problems either.

Map queries to user intent across the entire funnel, then create content specifically for each stage. Product comparisons for commercial queries, detailed tutorials for informational ones, case studies for decision-making. HubSpot's response after losing 140M website visits was to build experience-based content that served multiple intents at once.

The real shift in any SEO strategy template worth following: think in question clusters, not keyword lists. What would someone ask next, after their initial query? Answer that. Answer it well. And you become the obvious citation choice when AI systems navigate a full conversational search session.

Measuring AI Visibility and Performance

If you're only tracking organic traffic and keyword rankings, you're missing at least half the picture.

Traditional analytics platforms can't track AI Overview citations, attribution rates, or zero-click engagements. And when AI Overviews reduce clicks by 34.5% for top-ranking pages (per Ahrefs), your CTR numbers start lying to you.

Here's the uncomfortable part: only 38% of AI Overview citations now come from the top 10 organic pages, down from 76% just months earlier, according to Ahrefs. Your content might be doing brilliantly in AI systems while your dashboard shows it failing. That gap requires entirely new ways of measuring.

AI-Specific KPIs: Beyond Traditional Rankings

Citation counts are your new ranking metric.

Track how often your content appears in AI-generated answers across Google AI Overviews, Bing Copilot, and other generative search interfaces. This isn't about position one. It's about being cited at all.

AI attribution rate is the other number worth watching: what percentage of AI answers on your topic actually include your domain? If you're the go-to source for "enterprise API security best practices," you want that reflected in how often AI systems cite you versus competitors.
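As a worked example, attribution rate is just a ratio over a sample of AI answers you've collected for a topic. The sketch below assumes each sampled answer is represented as the list of URLs it cited; how you collect that sample is up to your tooling.

```python
from urllib.parse import urlparse


def attribution_rate(answers: list[list[str]], domain: str) -> float:
    """Share of sampled AI answers that cite `domain` or one of its subdomains."""
    if not answers:
        return 0.0

    def cites_domain(urls: list[str]) -> bool:
        for url in urls:
            host = urlparse(url).hostname or ""
            if host == domain or host.endswith("." + domain):
                return True
        return False

    cited = sum(1 for urls in answers if cites_domain(urls))
    return cited / len(answers)
```

Running the same function with a competitor's domain over the same sample gives you the "versus competitors" comparison directly.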

Monitoring AI snippet visibility means going beyond organic SERPs. You need to know when your content appears in conversational answers, not just whether it ranks.

This includes tracking which specific sections or data points get extracted. In our work integrating with DataForSEO APIs, we've seen clients discover their comparison tables get cited three times more frequently than their detailed tutorials. Not what anyone expected.

Tools for AI Performance Monitoring

Bing Webmaster's AI Performance report is the most accessible starting point right now.

It provides actual citation counts, grounding queries (the specific questions that triggered citations), and attribution data. The Bing Webmaster API allows programmatic access to all of this, which we've integrated directly into Spectre's reporting dashboards for automated monitoring. Clients get weekly AI performance summaries alongside their traditional SEO metrics.

Specialized platforms like SeoByte.ai focus specifically on AI visibility tracking, with AI snippet detection across multiple search engines. For enterprise teams, custom monitoring via web scraping is an option too, though that requires careful attention to terms of service compliance.

The point is choosing tools that give you structured, actionable data. Not just tools that tell you "you appeared."

Analyzing Non-Click Engagement and Dwell Time

Engaged sessions from AI traffic tell a different story than typical organic visits.

When users see your content cited in an AI answer, they might show up later through direct traffic or a branded search. Tools like Microsoft Clarity (integrated with Bing Webmaster) can help connect those dots by showing session recordings from users who interacted with AI answers.

Dwell time for pages frequently cited in AI Overviews often runs higher than pages ranking organically. Users arrive with more specific intent, which matters.

Connecting AI visibility to conversions requires careful attribution modeling. In one client implementation, we tracked users who saw AI citations through UTM parameters appended to links within AI answers and found they converted at 2.3x the rate of traditional organic visitors. Attribution is still messy, but brand search lift and direct traffic growth following AI citation spikes are practical signals worth watching.

| Metric | Traditional SEO Focus | AI SEO Focus | Measurement Tools |
|---|---|---|---|
| Visibility | Keyword rankings | Citation counts | Bing Webmaster AI Report, SeoByte.ai |
| Engagement | CTR, bounce rate | Dwell time from AI traffic | Analytics with session recording |
| Authority | Backlink count | AI attribution rate | Custom monitoring, API integrations |
| Impact | Organic conversions | Brand search lift | Attribution modeling, direct traffic analysis |

These metrics are moving fast. Citation counts matter now, but quality scoring or context accuracy might matter more in six months. Build monitoring systems that can adapt, and don't chase vanity metrics just because they're easy to pull.

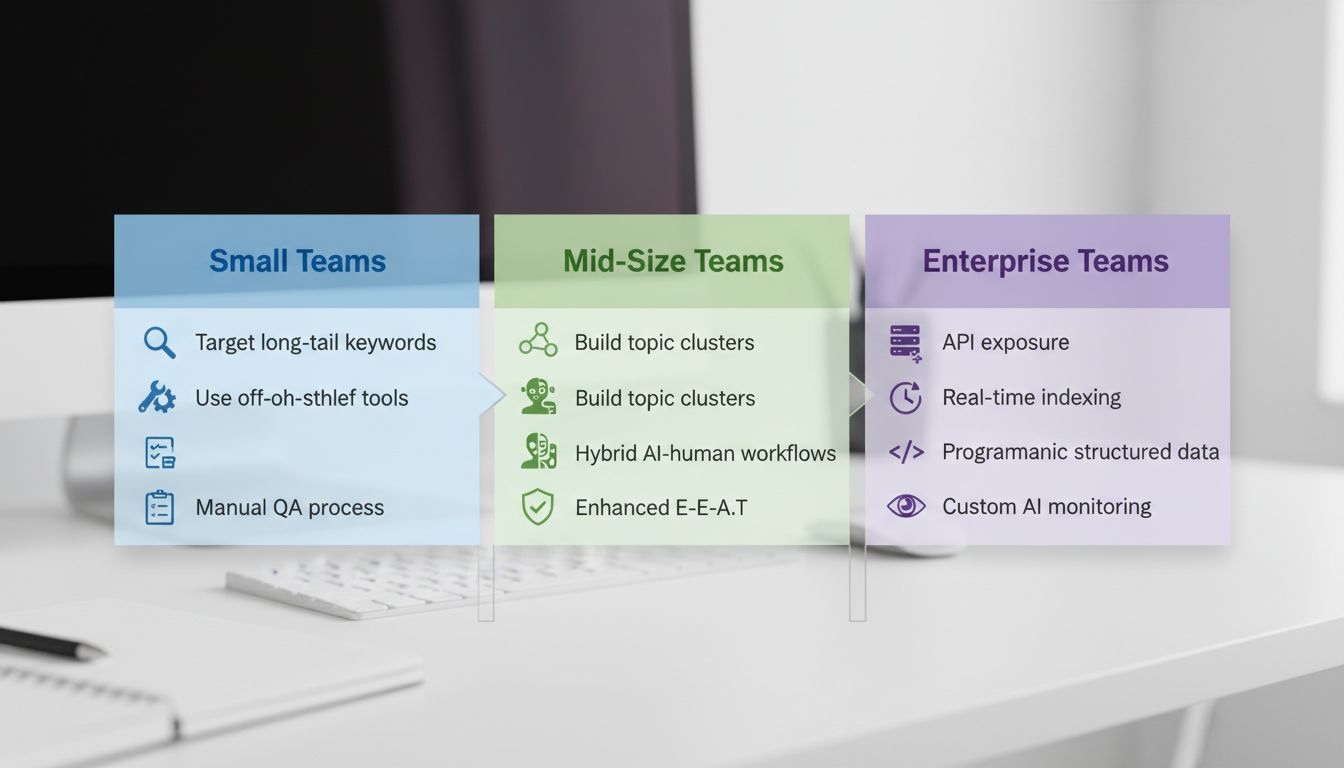

Implementation Roadmaps for Different Teams

The most common mistake I see teams make is trying to implement every AI SEO tactic at once.

That's a recipe for burnout and wasted resources. Your implementation roadmap should be tiered by your domain rating, team size, and available technical resources.

90-Day Priorities: Quick Wins for All Teams

Start with foundational work that every team can complete within a quarter. These aren't glamorous tasks, but they're prerequisites for AI visibility.

- Technical Health Audit: Fix Core Web Vitals issues, ensure XML sitemaps are updated, and clean up robots.txt. AI systems prioritize crawlable, fast-loading content. Use Google Search Console's Core Web Vitals report as your starting point.

- Structured Data Implementation: Add FAQ and HowTo schema to your top 10 most-trafficked pages. Focus on pages where users already ask questions, not just any page. I've seen FAQ schema alone increase AI citation rates by 40% for B2B SaaS clients.

- Author Attribution Setup: Create verifiable author bios with credentials and establish a documented editorial process for AI-generated content. Google's guidance is clear: human oversight matters.

Smaller teams should treat this as their complete SEO strategy template for the first quarter. Don't overcomplicate it.

Small Team Roadmap: Focused and Efficient

With limited resources, you need surgical precision. My experience working with startups through my agency shows that small teams win by staying narrow:

- High-Impact Content Only: Use Ahrefs' Keyword Difficulty filter to target queries with KD under 30. Ignore everything else. Large media companies use domain rating to gate keyword targeting: they won't touch anything above DR 50 unless they're already authoritative. Your workaround? Target long-tail "how to" questions that trigger AI Overviews 60.85% of the time, per E2M Solutions.

- Off-the-Shelf Tool Stack: Clearscope for content optimization, MarketMuse for topic gaps. Don't build custom solutions yet.

- Manual QA Pipeline: Every AI-generated piece gets human review before publishing. This step prevents quality dilution and it's non-negotiable.
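The targeting rule in the first bullet is simple enough to apply to a keyword export with a few lines of Python. This sketch assumes each row has `query`, `kd`, and `volume` fields, which are hypothetical names; map them to whatever columns your tool exports.

```python
def shortlist(keywords: list[dict], max_kd: int = 30, min_words: int = 4) -> list[dict]:
    """Keep long-tail, low-difficulty queries, highest search volume first."""
    kept = [
        row for row in keywords
        if row["kd"] < max_kd and len(row["query"].split()) >= min_words
    ]
    return sorted(kept, key=lambda row: row["volume"], reverse=True)
```

The defaults mirror the thresholds above (KD under 30, four or more words), and both are parameters so you can loosen them as your domain rating grows.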

Mid-Size Team Roadmap: Scaling with Systems

Once you have 3-5 content creators, you need systems. Mid-size teams should focus on:

- Topic Cluster Development: Build 2-3 comprehensive pillar pages with supporting cluster content. Use Ahrefs' Content Gap analysis to identify what your competitors cover that you don't.

- Hybrid Workflow Automation: Implement a content pipeline where AI handles research and drafting, humans add expertise and case studies, then AI optimizes for structure. My Spectre platform automates exactly this workflow.

- Enhanced E-E-A-T Programs: Develop author credential pages, publish case studies with verifiable results, and establish citation practices. AI Overviews increasingly favor first-hand experience content.

Enterprise Team Roadmap: Advanced Integration

Enterprise teams have the resources to build infrastructure that smaller teams can't. Focus on:

- API Exposure: Create machine-readable endpoints for your product data, pricing, and specifications. This helps AI systems ground answers in your verified information.

- Real-Time Indexing: Implement IndexNow at scale to notify search engines immediately when content updates. Freshness matters more to AI systems than it ever did to traditional crawlers.

- Programmatic Structured Data: Generate schema markup dynamically for thousands of product pages or articles using your CMS or custom scripts.

- Custom AI Monitoring: Build integrations with Bing Webmaster API to track AI citations programmatically, not just through dashboards.
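To make the programmatic structured data item concrete, here's a minimal sketch that turns a CMS record into an Article JSON-LD script tag. The record fields (`title`, `author`, `published`) are hypothetical; the schema properties (`headline`, `author`, `datePublished`) are standard schema.org ones.

```python
import json

SCRIPT_OPEN = '<script type="application/ld+json">'
SCRIPT_CLOSE = "</script>"


def article_json_ld(record: dict) -> str:
    """Render an Article JSON-LD script tag from a CMS record."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": record["title"],
        "author": {"@type": "Person", "name": record["author"]},
        "datePublished": record["published"],
    }
    # Escape "</" so a title containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f"{SCRIPT_OPEN}\n{body}\n{SCRIPT_CLOSE}"
```

Generating the markup from the same record that renders the page keeps the schema and the visible content from drifting apart, which matters once you're doing this across thousands of pages.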

The key difference between enterprise and smaller teams isn't budget. It's infrastructure.

Small teams optimize individual pages. Enterprise teams optimize their entire content ecosystem for machine consumption. That's a different SEO content strategy entirely, and it requires different tooling to match.

Risks, Compliance, and Future-Proofing

These shifts come with real risks. Publishing large volumes of unoriginal AI-generated content without editorial oversight is the fastest way to trigger a manual action or an algorithmic penalty.

Google's guidance is clear: human review is non-negotiable. HubSpot's reported traffic declines are the obvious example here: automated content farms get caught.

Legal compliance isn't optional anymore either. The EU AI Act's labeling requirements for AI-generated content take effect in 2026, with similar frameworks emerging elsewhere. If you operate in European markets, you need clear disclosure mechanisms in place.

This isn't just about avoiding fines. AI accuracy rates hover around 90% per New York Times analysis, which means misleading content is a real possibility, and that damages both reputation and domain authority.

Your starting position matters too. A domain with DR 80 can run aggressive AI content strategies with less exposure than a DR 20 startup. Enterprise teams have the infrastructure for regular audits. Smaller teams should focus on quarterly technical health checks and monthly SERP feature monitoring.

The gap between what works today and what works tomorrow is closing fast. AI search features evolve weekly.

Future-proofing means building adaptable systems, not chasing tactics. Here's what that looks like in practice:

- Compliance-first workflows: Integrate AI content disclosure checks into your publishing pipeline

- Regular algorithm monitoring: Subscribe to Google Search Central and Bing Webmaster blogs, and track tools that monitor AI Overview prevalence

- Flexible measurement frameworks: Design your KPIs to accommodate new AI visibility metrics as they emerge

- Infrastructure over tactics: Invest in structured data management and content APIs rather than chasing individual ranking signals
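A compliance-first workflow can start as a single gate in the publishing pipeline. The sketch below blocks AI-assisted drafts that lack a named human reviewer or a disclosure line. The `Draft` fields and the rules are my own assumptions for illustration, not legal guidance on what the EU AI Act requires.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    title: str
    ai_assisted: bool
    reviewed_by: Optional[str] = None  # name of the human editor, if any
    disclosure: Optional[str] = None   # disclosure text shown to readers, if any


def publish_blockers(draft: Draft) -> list[str]:
    """Return reasons a draft must not be published yet (empty list = clear to publish)."""
    blockers = []
    if draft.ai_assisted and not draft.reviewed_by:
        blockers.append("AI-assisted draft has no human reviewer")
    if draft.ai_assisted and not draft.disclosure:
        blockers.append("AI-assisted draft has no disclosure text")
    return blockers
```

Wire it into whatever step currently flips a post to "published" so the check can't be skipped under deadline pressure.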

AI Overviews doubled their presence from August 2024 to November 2025, reaching over 60% of U.S. queries. Your SEO content strategy needs the same capacity for rapid adaptation.

Not through reactive panic. Through systems that prioritize trust signals and machine readability, and that's as true for an SEO strategy template you're building from scratch as it is for an enterprise operation midway through a rebuild.

Conclusion

AI-driven search isn't killing SEO. It's forcing it to grow up.

The era of chasing #1 rankings for transactional keywords is ending. What's replacing it is a game where authority, trust signals, and machine-readable structure determine who gets cited. AI Overviews now appear in over 60% of queries and reduce clicks by 34.5%, so your SEO content strategy has to shift from serving humans who click to serving systems that cite [Source: ahrefs.com/blog/ai-overviews-reduce-clicks-update/].

The approach that actually works combines engineering discipline with editorial expertise. Build content ecosystems that signal topical authority. Implement structured data that AI can confidently extract. Measure success through citation counts and answer attribution, not just rankings.

Domain authority still matters: 92.36% of successful AI Overview citations come from domains already in the top 10 [Source: dataslayer.ai/blog/google-ai-overviews-the-end-of-traditional-ctr-and-how-to-adapt-in-2025]. But the point now is proving you're the definitive source, not just the highest-ranked link.

Start by auditing your content for AI extractability. Work through the 90-day technical priorities outlined above and get AI performance monitoring running through Bing Webmaster Tools.

Download our SEO strategy template to map out your adaptation roadmap and start building the kind of authoritative, structured content that AI systems, and your audience, actually want.

Frequently Asked Questions

Is SEO dead or evolving in 2026?

SEO is evolving, not dead. AI-driven search changes how content gets evaluated and consumed, so strategies now have to focus on authoritative, structured content that signals trust to AI systems.

The fundamental shift is from chasing rankings to building content ecosystems that demonstrate expertise to both human readers and AI agents.

What are the 5 C's of content?

For AI search, the 5 C's are Clarity (clear language for extraction), Context (comprehensive background), Credibility (verifiable sources and author credentials), Consistency (uniform messaging), and Completeness (thorough topic coverage).

These principles matter because AI search runs on semantic understanding and trust signals, not keyword matching.

What are the top 5 SEO strategies?

Implement structured data for better extraction, adopt topic pillar and cluster models, enhance E-E-A-T signals, measure AI-specific visibility with tools like Bing Webmaster AI reports, and use hybrid human-AI workflows with editorial review.

These work because AI systems prioritize comprehensive, well-structured content from authoritative sources over simple keyword optimization. Any SEO content strategy that ignores this is already behind.

What is the 80/20 rule in SEO?

Focus 80% of your efforts on the 20% of content driving the most AI visibility: comprehensive pillar pages, long-tail queries, and high-intent topics.

AI Overviews appear for 60.85% of long-tail queries (four or more words) [Source: e2msolutions.com]. Those are your highest-value targets. That's where the SEO strategy template pays off.

What is going to replace SEO?

Nothing. SEO isn't being replaced. It's being transformed.

AI search adds new layers like citation mechanics and direct answers, so the discipline shifts toward building content ecosystem authority. The core principles stay the same (helpful content, technical excellence, authority building), but the implementation changes.

What are the 3 C's of SEO?

Content (quality and structure optimized for AI extractability), Code (technical SEO adjustments for AI crawlers), and Credibility (E-E-A-T signaling through provenance and experience).

AI systems evaluate all three at once when deciding whether to cite you in a generated answer. Miss one and the other two don't save you.